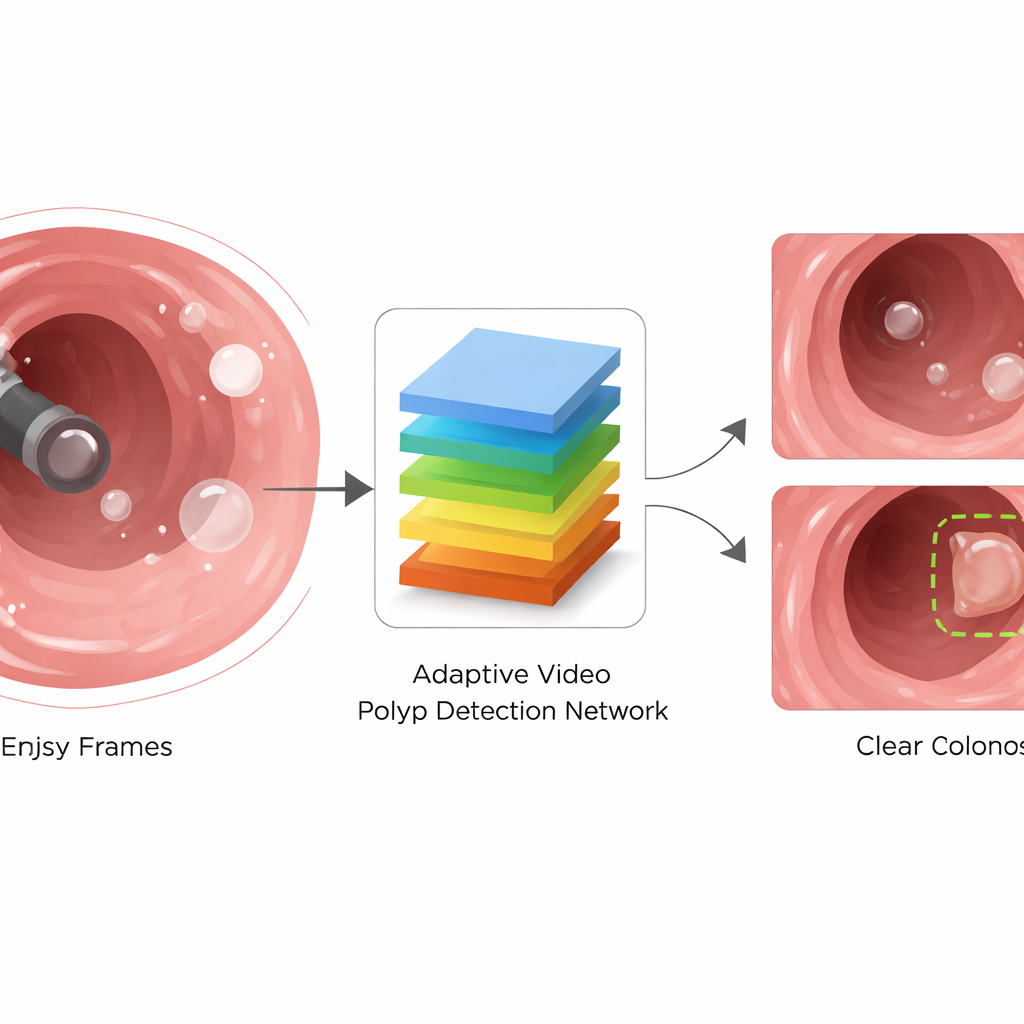

AVPDN: learning motion-robust and scale-adaptive representations for polyp detection in dynamic colonoscopy frames

Why Finding Tiny Growths Matters

Most colorectal cancers begin as small growths called polyps on the lining of the intestine. During a colonoscopy, doctors try to spot and remove these polyps before they turn dangerous. Modern video scopes record everything, but the camera moves quickly, the view is often blurry or shiny, and polyps can be tiny and hard to see. This paper introduces a new computer system that learns to see through the visual chaos of real colonoscopy videos, helping doctors find more polyps accurately and in real time.

The Challenge of a Moving Camera

Colonoscopy is not like taking a still photograph; it is more like filming a shaky, close-up exploration inside the body. As the scope advances, the camera shakes and rotates, the bowel wall contracts, and fluids and air bubbles swirl in front of the lens. These movements create motion blur, bright white reflections, and sudden changes in how big the same structure looks from one frame to the next. Small polyps can look almost identical to the surrounding tissue folds, and they may briefly disappear behind bubbles or glare. Most existing computer vision systems were originally built for natural photos or ordinary videos, where the camera is steadier and objects are easier to separate from the background, so they struggle in this extreme setting.

A Smarter Way to Read Colonoscopy Video

To cope with these problems, the authors propose the Adaptive Video Polyp Detection Network (AVPDN). At its core, AVPDN takes each video frame as an image and passes it through a standard feature extractor that captures edges, textures, and colors. But instead of stopping there, it adds a specialized "enhancement" stage designed specifically for colonoscopy. This stage is built from repeatable blocks that clean up noisy signals, strengthen truly polyp-like patterns, and keep track of polyps of many different sizes. Importantly, the method works frame by frame without needing to analyze long stretches of video over time, which keeps the system fast enough for real-time use.

Filtering Noise While Keeping Important Clues

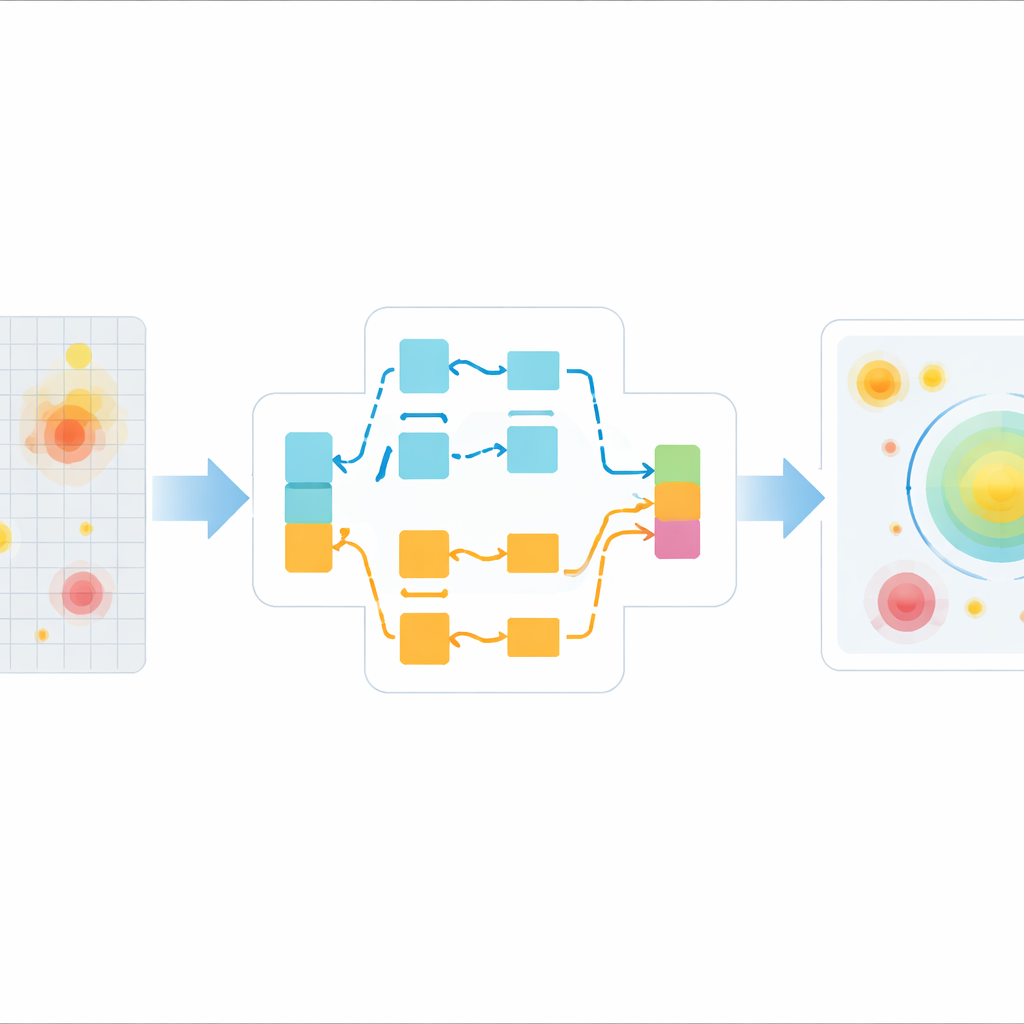

The first key building block is called Adaptive Feature Interaction and Augmentation. In simple terms, it looks at the image features in two different ways at the same time. One branch considers broad connections across the whole image, which helps it understand the overall scene and not miss distant hints of a polyp. The other branch is more selective: it aggressively downplays parts of the image that show weak or inconsistent patterns, such as blur and glare. The system then learns how much to trust each branch for each frame, blending them adaptively. A clever "channel shuffle" step mixes information between different groups of features, encouraging the network to discover richer combinations of texture and shape that distinguish true polyps from harmless folds and spots.
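To make the two ideas in this block concrete, here is a minimal Python sketch of a channel shuffle and of an adaptive blend between two branches. It is an illustration only, not the authors' implementation: the function names, the use of a single sigmoid gate per frame, and the toy list-based features are all assumptions made for this example. The shuffle follows the common reshape-and-transpose formulation, in which channels from different groups are interleaved.

```python
import math

def channel_shuffle(channels, groups):
    """Interleave channels across groups (hypothetical helper).

    Equivalent to reshaping to (groups, per_group), transposing,
    and flattening again.
    """
    n = len(channels)
    if n % groups != 0:
        raise ValueError("channel count must be divisible by groups")
    per_group = n // groups
    # Output position p takes the channel at (p % groups) * per_group + p // groups.
    return [channels[(p % groups) * per_group + p // groups] for p in range(n)]

def blend_branches(global_feat, selective_feat, gate_logit):
    """Blend two branches with a sigmoid gate (assumed form of the weighting)."""
    alpha = 1.0 / (1.0 + math.exp(-gate_logit))  # trust placed in the global branch
    return [alpha * g + (1.0 - alpha) * s
            for g, s in zip(global_feat, selective_feat)]

# Six channels labeled by index, split into two groups of three.
shuffled = channel_shuffle([0, 1, 2, 3, 4, 5], groups=2)

# A gate logit of 0 trusts both branches equally.
blended = blend_branches([1.0, 1.0], [0.0, 0.0], gate_logit=0.0)
```

In a trained network the gate value would be predicted from the frame itself rather than supplied by hand, which is what lets the balance between the two branches change from frame to frame.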

Seeing Polyps at Many Sizes

The second key block is called Scale-Aware Context Integration. Polyps can be very small when the camera is far away and much larger when the scope approaches them, so the system must work across a wide range of sizes. This module looks at the scene through multiple "virtual lenses" at once: some focus on fine detail while others capture a wider neighborhood. By using dilated filters that reach farther without losing resolution, the module gathers both local detail and broad context. It then combines these views so that the network can reliably highlight tiny polyps tucked between folds as well as larger lesions that dominate the field of view, even when the camera is moving quickly.
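The idea of a dilated filter can be shown with a small one-dimensional Python sketch. It is a simplified stand-in for the two-dimensional filters a real detector would use, and the function names, the three-tap kernel, and the averaging used to combine scales are assumptions for illustration, not details taken from the paper. The point is that spacing the filter taps farther apart widens the area the filter sees while the output keeps the same length as the input.

```python
def dilated_conv1d(signal, kernel, dilation):
    """Same-length 1D filtering with zero padding and spaced-out kernel taps."""
    span = (len(kernel) - 1) * dilation   # distance from first tap to last tap
    pad = span // 2
    padded = [0.0] * pad + list(signal) + [0.0] * (span - pad)
    return [
        sum(w * padded[i + j * dilation] for j, w in enumerate(kernel))
        for i in range(len(signal))
    ]

def receptive_field(kernel_size, dilation):
    """Number of input positions covered by one output value."""
    return (kernel_size - 1) * dilation + 1

def multi_scale(signal, kernel, dilations=(1, 2, 4)):
    """Average several dilation rates (one simple, assumed way to fuse views)."""
    views = [dilated_conv1d(signal, kernel, d) for d in dilations]
    return [sum(vals) / len(vals) for vals in zip(*views)]

# A single spike in the middle of a nine-sample signal.
impulse = [0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0]
kernel = [1.0, 1.0, 1.0]

narrow = dilated_conv1d(impulse, kernel, dilation=1)  # responds at positions 3, 4, 5
wide = dilated_conv1d(impulse, kernel, dilation=2)    # responds at positions 2, 4, 6
fused = multi_scale(impulse, kernel)
```

With the same three-tap kernel, dilation rates of 1, 2, and 4 cover 3, 5, and 9 input positions respectively, which is the sense in which the filter "reaches farther" without any downsampling.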

How Well the System Performs

The researchers tested AVPDN on two large public collections of colonoscopy videos that contain tens of thousands of frames from many patients, with polyps of varied shapes, sizes, and appearances. They compared their method against widely used object detectors and several specialized polyp systems. Across all key measures (how often polyps are correctly found, how often false alarms are avoided, and how well the system balances these two goals), AVPDN consistently came out on top. It improved the main accuracy score by a couple of percentage points over strong modern baselines, while still running fast enough for real-time use on current graphics hardware. Careful internal tests showed that each of the two new modules contributed noticeably to this edge.
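The three measures described above are commonly reported in detection work as recall, precision, and the F1 score; this summary does not name them, so that mapping is an assumption. The short Python sketch below shows how such scores are typically computed from counts of correct detections, false alarms, and missed polyps. The function name and the counts are made up for illustration and are not results from the study.

```python
def detection_scores(true_pos, false_pos, false_neg):
    """Return (precision, recall, f1) from detection counts.

    precision: share of reported detections that are real polyps
    recall:    share of real polyps that were detected
    f1:        harmonic mean of the two, which rewards balance
    """
    reported = true_pos + false_pos
    actual = true_pos + false_neg
    precision = true_pos / reported if reported else 0.0
    recall = true_pos / actual if actual else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1

# Illustrative counts only: 90 polyps found, 10 false alarms, 30 polyps missed.
precision, recall, f1 = detection_scores(true_pos=90, false_pos=10, false_neg=30)
```

Because the F1 score is a harmonic mean, a detector cannot score well on it by raising one of the two measures at the expense of the other.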

What This Means for Patients

In plain terms, this work shows that an AI system can be trained to look past the blur, glare, and rapid size changes that make colonoscopy video so difficult, and to tune itself to the telltale patterns of polyps. By cleaning and re-weighting visual information inside the network rather than relying on extra sensors or slower video analysis, AVPDN detects more polyps with fewer misses and fewer false alarms. If integrated into clinical tools, such technology could act as a second set of eyes during procedures, helping doctors notice subtle growths earlier and more reliably, and ultimately reducing the risk that a dangerous polyp is left behind.

Citation: Chen, Z., Lu, S. AVPDN: learning motion-robust and scale-adaptive representations for polyp detection in dynamic colonoscopy frames. Sci Rep 16, 11591 (2026). https://doi.org/10.1038/s41598-026-42286-5

Keywords: colonoscopy, polyp detection, medical imaging AI, video analysis, colorectal cancer screening