Generative adversarial network-based super-resolution reconstruction of remote sensing images

Sharper Views of Our Changing Planet

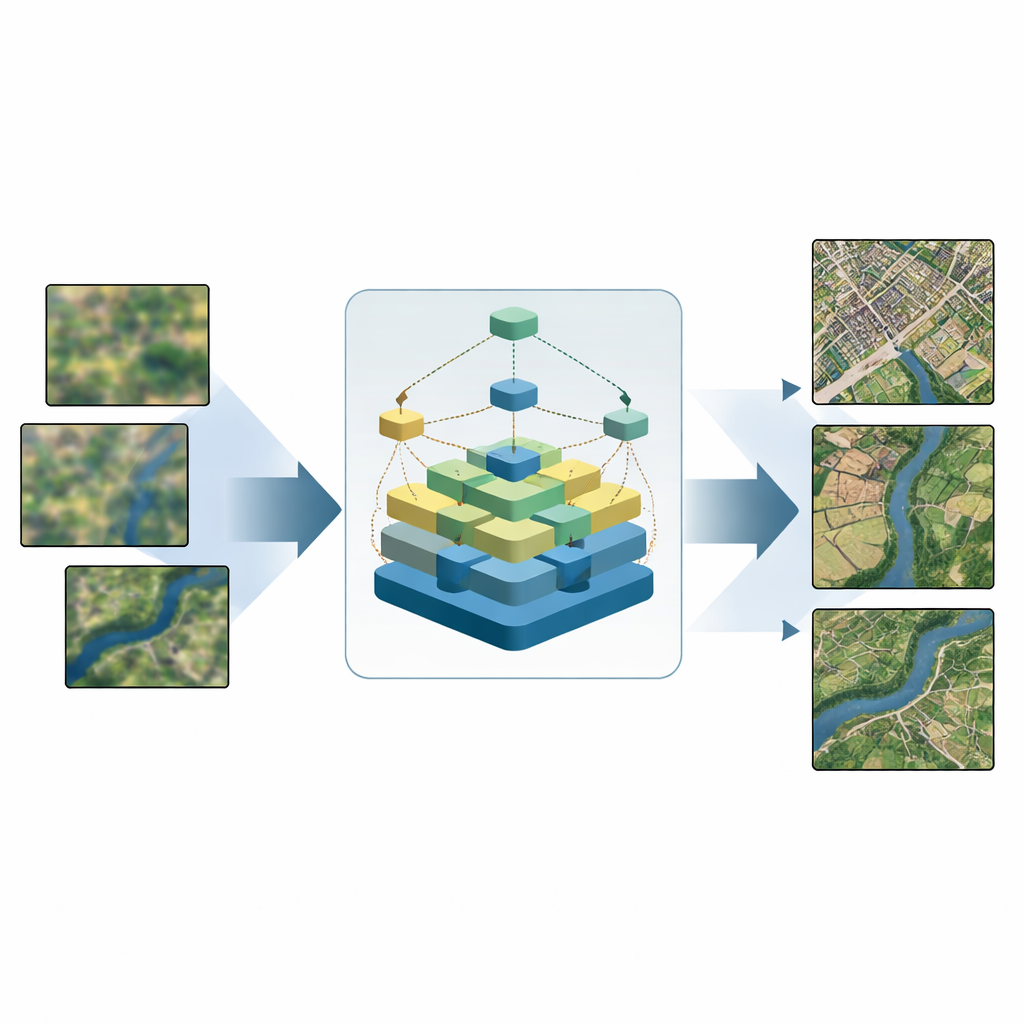

Every day, satellites capture vast mosaics of Earth that guide city growth, disaster response, farming, and environmental protection. Yet many of these images are blurrier than we would like: fine road lines fade, building edges smear, and small features disappear. This study introduces a new computer-vision method, called SDGAN, that turns coarse satellite pictures into clearer, more detailed views while using less computing power—making sharp, timely maps more practical for real-world use.

Why Satellite Images Get Blurry

Modern satellites orbit far above Earth and must peer through a restless atmosphere, all while working within strict size, power, and cost limits. As a result, many raw remote sensing images have limited resolution: small houses merge into blocks of color, narrow rivers lose their shape, and farmland textures become indistinct. Traditional sharpening tricks—like simple zoom and interpolation—can enlarge images but cannot invent convincing detail. More advanced learning-based methods help, but often create strange artifacts, fail on scenes with mixed land types, or demand too much computing power for devices such as drones and field laptops.
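To see why interpolation cannot invent detail, here is a minimal sketch of bilinear upscaling in NumPy. The function name `upscale_bilinear` and the tiny test image are illustrative assumptions, not part of the study. Every output pixel is a weighted average of existing pixels, so the result is larger but contains no new information.

```python
import numpy as np

def upscale_bilinear(img: np.ndarray, factor: int) -> np.ndarray:
    """Enlarge a 2-D grayscale image by an integer factor (illustrative helper)."""
    h, w = img.shape
    # Positions in the source image that each output row/column samples from.
    ys = np.linspace(0, h - 1, h * factor)
    xs = np.linspace(0, w - 1, w * factor)
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, h - 1)  # clamp at the bottom border
    x1 = np.minimum(x0 + 1, w - 1)  # clamp at the right border
    wy = (ys - y0)[:, None]         # vertical blend weights
    wx = (xs - x0)[None, :]         # horizontal blend weights
    # Blend the four nearest source pixels for every output pixel.
    top = img[np.ix_(y0, x0)] * (1 - wx) + img[np.ix_(y0, x1)] * wx
    bottom = img[np.ix_(y1, x0)] * (1 - wx) + img[np.ix_(y1, x1)] * wx
    return top * (1 - wy) + bottom * wy

low_res = np.array([[0.0, 1.0],
                    [1.0, 0.0]])
up = upscale_bilinear(low_res, 4)  # 2x2 image becomes 8x8
```

The enlarged image never leaves the value range of the original, which is exactly why edges look smeared rather than sharpened.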

A Smart Way to Recover Lost Detail

The authors tackle this problem with SDGAN, a “super-resolution” framework that learns to reconstruct fine structures from low-resolution satellite images. At its core is a new building block called the super-dense residual block. Instead of passing visual information straight through a stack of layers, this design arranges many small processing units in a grid and links them in multiple directions—including diagonals. This web of connections lets basic patterns from early layers and richer patterns from deeper layers interact more effectively. In practice, this helps the network recover crisp edges and textures, such as intersecting roads or irregular field boundaries, while keeping the model relatively compact and efficient.
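The grid idea can be illustrated with a toy NumPy sketch. This is not the authors' architecture: the 3-by-3 grid size, the averaging of incoming signals, the random weights standing in for learned filters, and the name `grid_dense_block` are all assumptions made for illustration. It shows only the wiring pattern described above, in which each unit listens to its horizontal, vertical, and diagonal neighbours, and the block's input is added back at the end as a residual shortcut.

```python
import numpy as np

def grid_dense_block(x: np.ndarray, rows: int = 3, cols: int = 3, seed: int = 0) -> np.ndarray:
    """Toy grid of processing units with horizontal, vertical and diagonal links.

    x has shape (channels, height, width). All weights are random stand-ins
    for learned filters; this is a hypothetical illustration only.
    """
    rng = np.random.default_rng(seed)
    channels = x.shape[0]
    outputs = {}
    for i in range(rows):
        for j in range(cols):
            sources = []
            if i > 0:
                sources.append(outputs[(i - 1, j)])      # vertical link
            if j > 0:
                sources.append(outputs[(i, j - 1)])      # horizontal link
            if i > 0 and j > 0:
                sources.append(outputs[(i - 1, j - 1)])  # diagonal link
            if not sources:
                sources.append(x)                        # first unit reads the block input
            merged = np.mean(sources, axis=0)            # assumed fusion: simple average
            # Channel-mixing weights, similar in spirit to a 1x1 convolution.
            weight = rng.normal(scale=0.1, size=(channels, channels))
            mixed = weight @ merged.reshape(channels, -1)
            outputs[(i, j)] = np.maximum(mixed, 0.0).reshape(x.shape)  # ReLU
    # Residual shortcut: add the block input to the last unit's output.
    return x + outputs[(rows - 1, cols - 1)]

features = np.random.default_rng(1).normal(size=(4, 8, 8))
refined = grid_dense_block(features)
```

Because the last unit is reachable from the first by many different paths, shallow and deep features mix without stacking a long chain of layers.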

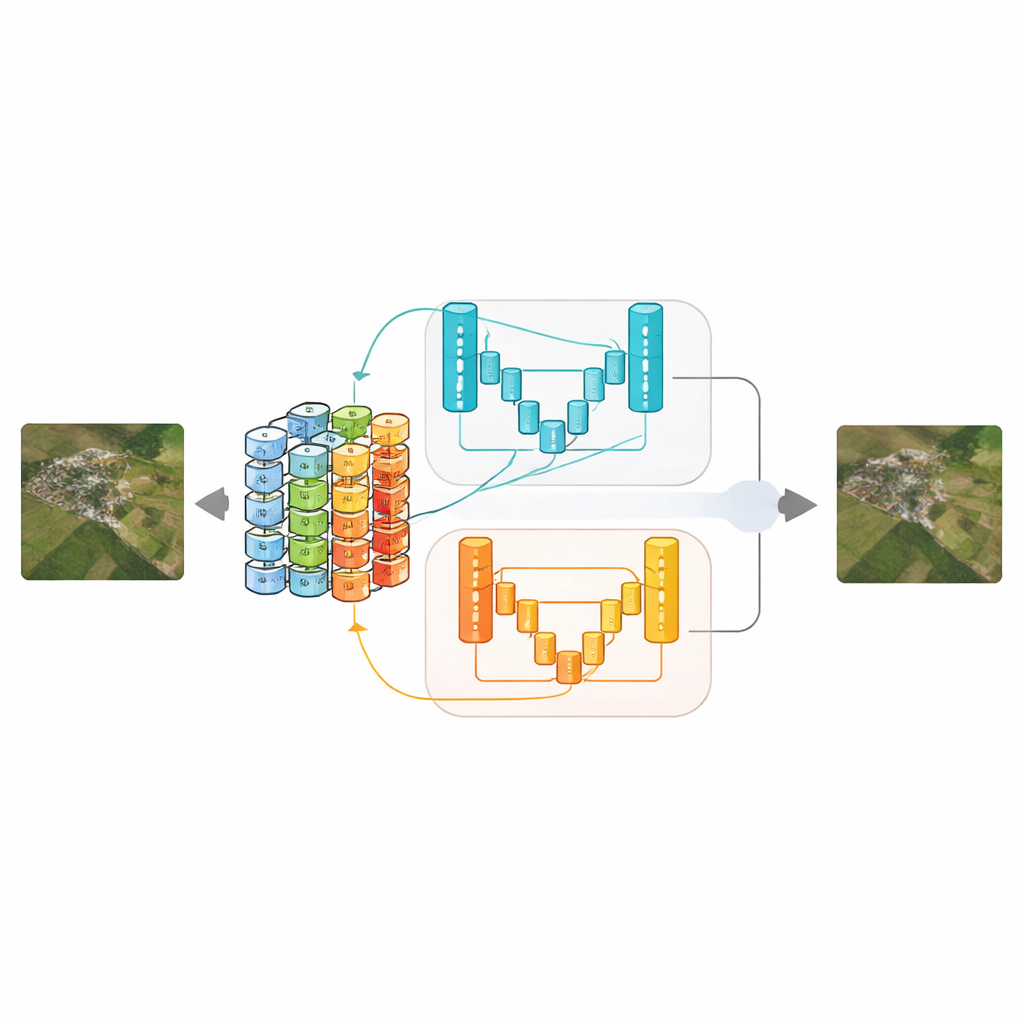

Two Critics Keep the Network Honest

To judge whether the sharpened images look realistic, SDGAN uses not one but two “discriminator” networks based on a streamlined U-shaped design with attention mechanisms. One discriminator examines full-size images and concentrates on tiny details, such as runway stripes or building outlines. The other looks at downsampled versions and focuses on the overall layout—are river systems continuous, do urban blocks and farmland patterns make sense at a glance? By training the generator against both critics at once, the system learns to produce images that are both locally sharp and globally coherent, reducing common problems like fake-looking textures or broken large-scale structures.
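As a rough sketch of how two critics at different scales can be combined, the NumPy example below scores an image once at full size and once after 2x downsampling, then adds the two penalties. Everything here is an assumption for illustration: `edge_critic` is a hand-written stand-in for the learned U-shaped discriminators, and the loss is the common non-saturating GAN generator loss, which this summary does not confirm as the one used in the study.

```python
import numpy as np

def downsample2x(img: np.ndarray) -> np.ndarray:
    """Halve a 2-D image by averaging each 2x2 block of pixels."""
    h, w = img.shape
    h2, w2 = h - h % 2, w - w % 2  # drop an odd trailing row/column
    return img[:h2, :w2].reshape(h2 // 2, 2, w2 // 2, 2).mean(axis=(1, 3))

def edge_critic(img: np.ndarray) -> float:
    """Hypothetical critic: returns a 'looks real' score in (0, 1).

    A real discriminator is a trained network; this stand-in simply rewards
    images whose neighbouring pixels differ more strongly.
    """
    contrast = np.abs(np.diff(img, axis=0)).mean() + np.abs(np.diff(img, axis=1)).mean()
    return float(1.0 / (1.0 + np.exp(-contrast)))

def generator_adversarial_loss(sr, critic_fine, critic_coarse, eps=1e-8):
    """Penalty the generator tries to minimise against both critics at once."""
    p_fine = critic_fine(sr)                    # full-size view: local detail
    p_coarse = critic_coarse(downsample2x(sr))  # downsampled view: overall layout
    return -(np.log(p_fine + eps) + np.log(p_coarse + eps))

flat = np.full((4, 4), 0.5)  # a featureless image
loss = generator_adversarial_loss(flat, edge_critic, edge_critic)
```

The loss falls toward zero only when both critics are convinced, so an image with crisp local texture but a broken overall layout is still penalised.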

Putting the Method to the Test

The team tested SDGAN on three widely used remote sensing collections: UCMerced-LandUse, WHU-RS19, and AID, which together cover dozens of scene types from airports and ports to forests and residential areas. They compared their method with classic convolutional networks, leading generative approaches like SRGAN and Real-ESRGAN, and recent specialized models. Across different zoom factors, SDGAN generally delivered the best combination of sharpness, structural similarity, and perceived naturalness, according to both numerical scores and visual inspection. Importantly, it did so while cutting average processing time by up to about 22 percent compared with a strong prior method, showing that greater clarity does not have to come with a heavy computational bill.
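This summary does not name the numerical scores, but super-resolution studies commonly report peak signal-to-noise ratio (PSNR) and the structural similarity index (SSIM). The sketch below assumes those two metrics and images scaled to the range 0 to 1. The SSIM shown is a simplified whole-image version; standard implementations average the same formula over small sliding windows.

```python
import numpy as np

def psnr(ref: np.ndarray, test: np.ndarray, max_val: float = 1.0) -> float:
    """Peak signal-to-noise ratio in decibels; higher means closer to the reference."""
    mse = np.mean((ref - test) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_val ** 2 / mse))

def global_ssim(ref: np.ndarray, test: np.ndarray, max_val: float = 1.0) -> float:
    """Simplified SSIM computed over the whole image instead of local windows."""
    c1 = (0.01 * max_val) ** 2  # stabilising constants from the usual SSIM definition
    c2 = (0.03 * max_val) ** 2
    mu_x, mu_y = ref.mean(), test.mean()
    var_x, var_y = ref.var(), test.var()
    cov = ((ref - mu_x) * (test - mu_y)).mean()
    numerator = (2 * mu_x * mu_y + c1) * (2 * cov + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return float(numerator / denominator)

reference = np.random.default_rng(0).random((16, 16))
noisy = np.clip(reference + 0.05, 0.0, 1.0)  # a slightly brightened copy
scores = (psnr(reference, noisy), global_ssim(reference, noisy))
```

PSNR measures pixel-by-pixel error, while SSIM compares brightness, contrast, and structure, which is why the two are usually reported together.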

Clearer Images, Better Decisions

For non-specialists, the key takeaway is that SDGAN offers a way to make satellite imagery both clearer and more trustworthy. By carefully preserving edges, textures, and large-scale patterns, it yields pictures that are easier for analysts and automated systems to interpret—whether they are spotting landslides, mapping land use, or tracking floodwaters. Because the method is relatively lightweight, it is also better suited to devices with limited computing power, such as drones used in emergency surveys. The authors suggest that future work could extend SDGAN to handle harsher image degradations, additional sensor types like radar or thermal cameras, and direct links to downstream tasks, pushing us closer to real-time, high-fidelity views of our dynamic planet.

Citation: Wang, L., Liu, L., Yu, Q. et al. Generative adversarial network-based super-resolution reconstruction of remote sensing images. Sci Rep 16, 11971 (2026). https://doi.org/10.1038/s41598-026-41832-5

Keywords: remote sensing, image super-resolution, satellite imagery, deep learning, generative adversarial networks