Clear Sky Science

GPT-4o for Automated Determination of Follow-up Examinations Based on Radiology Reports from Clinical Routine

Why Smarter Follow-Up Scans Matter

When a patient gets a CT or MRI scan, the story does not end with the images. Radiologists must also decide if and when follow-up scans are needed to track tumors, check suspicious spots, or confirm that treatment is working. These choices can mean the difference between catching disease early and exposing patients to unnecessary radiation, cost, and anxiety. This study asked a timely question: can a modern artificial intelligence system, GPT-4o, help standardize these follow-up decisions so that patients receive consistent, guideline-based care?

The Problem of Mixed Messages

Professional societies publish detailed recommendations for when and how to repeat imaging for many cancers and incidental findings. Yet in day-to-day practice, radiologists often disagree on follow-up. Some are quick to order repeat scans; others are more cautious. Previous research has shown that the likelihood of recommending further imaging can vary nearly sevenfold between radiologists looking at similar cases. Many suggested plans do not fully match published guidelines, leading some patients to undergo more scans than needed, while others may miss timely checks. This uneven landscape motivates tools that can gently nudge practice toward more consistent, evidence-based decisions.

How the Study Was Set Up

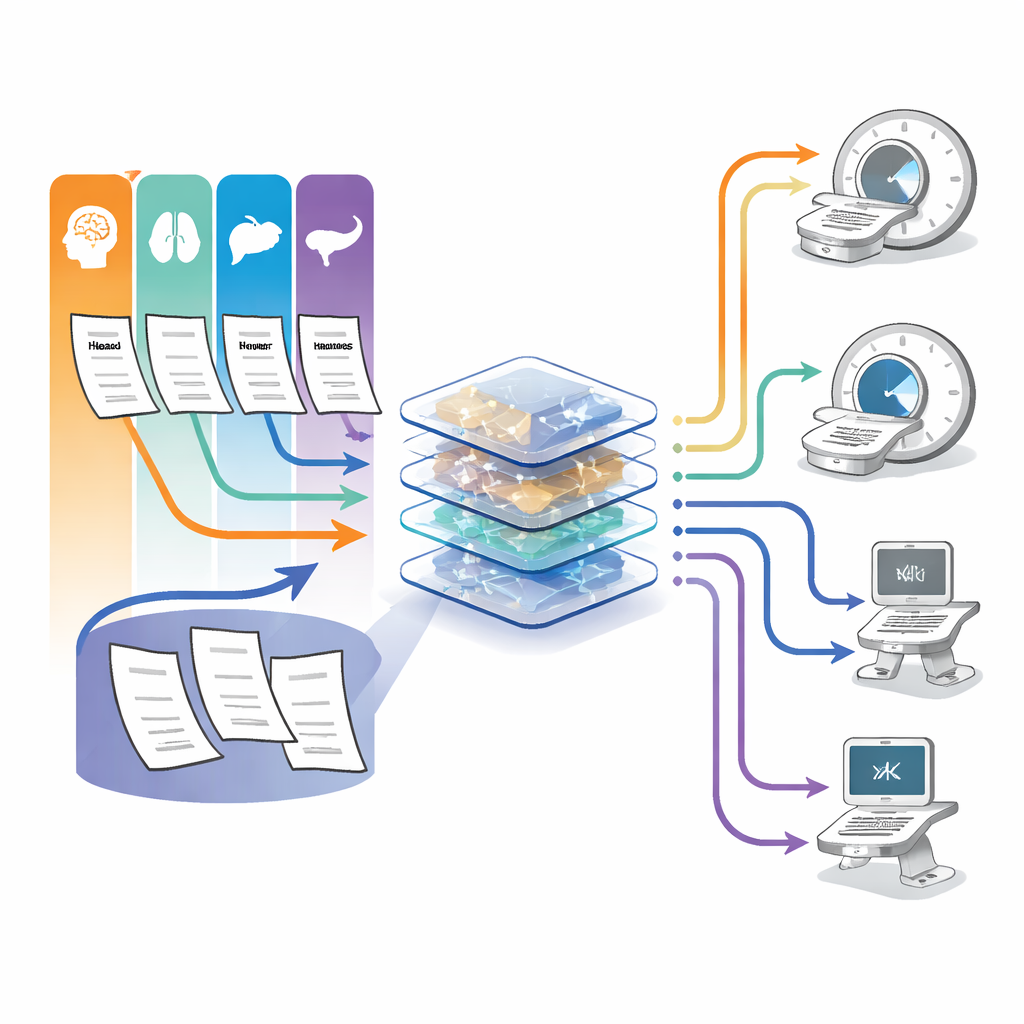

The researchers tested GPT-4o, a large language model designed to understand and generate text, on 100 real radiology cases from two German hospitals. All cases involved adults undergoing CT or MRI scans for cancer-related questions in four key regions: head and neck, liver, lung, and pancreas. For each case, the model received the full written report, including medical history, scan findings, and the radiologist’s conclusion. GPT-4o was given a single task: based on this information, propose the exact follow-up imaging method (such as CT or MRI) and the timing of the next scan. A radiology resident and an experienced board-certified radiologist answered the same question for every case.
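To make the setup concrete, here is a minimal Python sketch of how one report might be turned into a single question for a language model. The `ReportCase` class, the `build_followup_prompt` function, and the prompt wording are assumptions made for illustration; the study's actual prompt is not given here, and no model is called.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReportCase:
    """Hypothetical container for the three report parts named in the text."""
    history: str
    findings: str
    conclusion: str


def build_followup_prompt(case: ReportCase) -> str:
    """Assemble one plain-text prompt (illustrative wording, not the study's)."""
    return "\n".join([
        "You are assisting with follow-up planning for oncologic imaging.",
        "Based on the report below, propose (1) the follow-up imaging "
        "method, such as CT or MRI, and (2) the timing of the next scan.",
        "",
        f"Medical history: {case.history}",
        f"Findings: {case.findings}",
        f"Conclusion: {case.conclusion}",
    ])


# Invented placeholder report, for illustration only.
example = ReportCase(
    history="Placeholder history text.",
    findings="Placeholder findings text.",
    conclusion="Placeholder conclusion text.",
)
prompt = build_followup_prompt(example)
```

Keeping the whole report in one prompt mirrors the description above, in which the model saw the same information as the human readers and answered the same question.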

Measuring Quality Against Guidelines

To judge these recommendations, two senior radiologists, who did not know which suggestions came from whom, compared all answers with major international guidelines from cancer and radiology societies. They rated each proposal on four fronts: whether all relevant findings needing follow-up were covered, whether the chosen imaging technique was appropriate, how accurate the suggested timing was, and an overall quality score on a five-point scale. In effect, the experts were asking: does this plan keep the patient safe, follow the rules, and avoid unnecessary scans?
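The four rating dimensions can be pictured as one small record per recommendation, which is then summarized per reader. The sketch below is an assumption about how such ratings could be tabulated: the field names, the three timing labels, and the four demo ratings are all invented for illustration and are not study data.

```python
from dataclasses import dataclass
from statistics import median

# Hypothetical timing labels; the article only says "fully or partly right".
TIMING_ACCEPTABLE = {"correct", "partly_correct"}


@dataclass(frozen=True)
class Rating:
    reader: str                 # e.g. "model", "resident", "expert"
    findings_covered: bool      # all relevant findings addressed?
    modality_appropriate: bool  # suitable imaging technique chosen?
    timing: str                 # "correct", "partly_correct", or "incorrect"
    global_score: int           # overall quality, 1 (worst) to 5 (best)


def summarize(ratings: list[Rating], reader: str) -> dict[str, float]:
    """Median global score and percentage metrics for one reader."""
    own = [r for r in ratings if r.reader == reader]
    if not own:
        raise ValueError(f"no ratings for reader {reader!r}")
    n = len(own)
    return {
        "median_global": float(median([r.global_score for r in own])),
        "timing_ok_pct": 100 * sum(r.timing in TIMING_ACCEPTABLE for r in own) / n,
        "findings_covered_pct": 100 * sum(r.findings_covered for r in own) / n,
        "modality_ok_pct": 100 * sum(r.modality_appropriate for r in own) / n,
    }


# Invented toy ratings, not taken from the study.
demo = [
    Rating("model", True, True, "correct", 4),
    Rating("model", True, True, "partly_correct", 5),
    Rating("model", True, False, "incorrect", 3),
    Rating("model", False, True, "correct", 4),
]
summary = summarize(demo, "model")
```

A median is a natural summary here because the global score is an ordinal five-point scale, where averages can be misleading.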

How the AI Stacked Up Against Humans

Across all 100 cases, GPT-4o’s overall follow-up quality matched that of the experienced radiologist and surpassed that of the resident. The model’s median global quality score was 4 out of 5, essentially the same as the expert’s and significantly better than the trainee’s. GPT-4o got the timing fully or partly right in 96% of cases, outperforming the resident (75%) and slightly edging out the expert (90%). It also produced the fewest potentially harmful timing errors. The model addressed all findings that needed follow-up in 92% of cases, similar to the resident and clearly better than the expert on this specific measure. For choosing the right type of scan, GPT-4o performed nearly on par with both human readers. Its strongest areas were lung, liver, and pancreas imaging, where guideline pathways are especially well standardized; performance was somewhat lower, for all readers, in the more complex head and neck region.

What This Could Mean for Future Care

The study suggests that GPT-4o can act as a reliable assistant for follow-up imaging decisions, working at roughly the level of an experienced radiologist and better than a trainee in many respects. Used as a decision-support tool rather than a replacement, such a system could help reduce unnecessary scans, cut delays in essential follow-up, and ease the workload on busy radiology departments, all while keeping practice closer to established guidelines. However, the authors stress that human experts must remain in charge: the model can still misinterpret reports, its inner workings are opaque, and the study involved only 100 cancer-related cases from two centers. Larger, prospective trials and secure, locally hosted deployments will be needed before such tools can be safely woven into everyday clinical workflows.

Citation: Kaya, K., Müller, L., Persigehl, T. et al. GPT-4o for Automated Determination of Follow-up Examinations Based on Radiology Reports from Clinical Routine. Sci Rep 16, 12587 (2026). https://doi.org/10.1038/s41598-026-40317-9

Keywords: radiology follow-up, large language models, medical decision support, oncologic imaging, GPT-4o