Clear Sky Science

Explainable multi agent reinforcement learning framework for secure and adaptive communication in UAV swarm based FANETs

Smarter Drone Teams for Tough Situations

Imagine a team of drones rushing into a wildfire, a flood zone, or a search-and-rescue mission. They must share information instantly, dodge attackers trying to jam or trick them, and still explain to human operators why they chose one route over another. This paper presents a new way to make such drone swarms both safer and more understandable, so people can trust them in life‑or‑death situations.

Why Drone Swarms Need More Than Fast Wi‑Fi

Drones in a swarm don’t just fly; they talk constantly, relaying maps, sensor readings, and alerts from one to another. This type of airborne network, called a flying ad hoc network (FANET), is fast and flexible but also fragile. Signals travel through open air, drones move quickly, and there is no central tower in charge. That makes the network an easy target for digital attacks such as jamming signals, faking identities, or silently dropping messages. Existing security tools often depend on slow, centralized servers or opaque “black‑box” algorithms, which is risky when lives, property, or critical infrastructure are at stake.

Teaching Each Drone to Learn and Decide

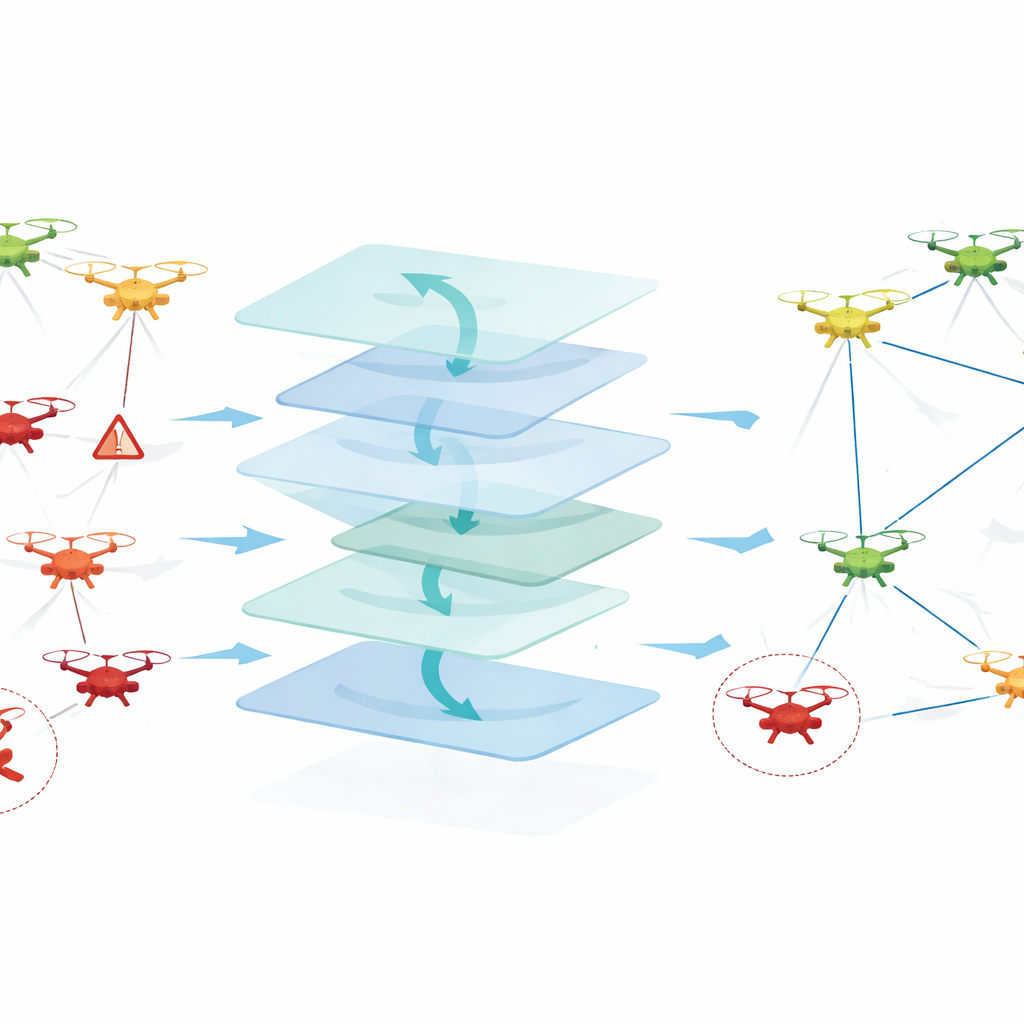

The authors propose an Explainable Multi‑Agent Reinforcement Learning framework—EMARL‑XAI—that turns every drone into a learning agent. Instead of following fixed rules, each drone observes its position, signal quality, battery level, and how trustworthy its neighbors appear to be. Using a cooperative learning method, the swarm gradually discovers which communication paths deliver messages reliably, avoid suspicious drones, and conserve energy. Decisions such as “who should forward this packet next?” emerge from experience rather than being hard‑coded. Crucially, there is no single master node: drones learn and act in a decentralized way, which better fits fast‑changing, hostile skies.
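As a rough illustration of what learning a forwarding choice "from experience" can look like, the sketch below uses plain tabular Q-learning, with each drone learning on its own. This is a deliberate simplification and not the authors' cooperative method, which this summary does not specify. The class name `DroneAgent`, the state labels, the reward values, and all parameter defaults are hypothetical.

```python
import random
from collections import defaultdict


class DroneAgent:
    """Toy next-hop learner (hypothetical; independent tabular Q-learning)."""

    def __init__(self, neighbors, lr=0.1, gamma=0.9, epsilon=0.1, seed=0):
        self.neighbors = list(neighbors)
        self.lr = lr            # learning rate (assumed value)
        self.gamma = gamma      # discount factor (assumed value)
        self.epsilon = epsilon  # exploration probability (assumed value)
        self.q = defaultdict(float)  # (state, neighbor) -> estimated value
        self.rng = random.Random(seed)

    def choose_next_hop(self, state):
        # Explore occasionally, otherwise pick the neighbor with the best estimate.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.neighbors)
        return max(self.neighbors, key=lambda n: self.q[(state, n)])

    def learn(self, state, hop, reward, next_state):
        # Standard Q-learning update toward reward + discounted best next value.
        best_next = max(self.q[(next_state, n)] for n in self.neighbors)
        target = reward + self.gamma * best_next
        self.q[(state, hop)] += self.lr * (target - self.q[(state, hop)])


# Toy experience: neighbor "B" delivers packets (+1), neighbor "C" drops them (-1).
agent = DroneAgent(neighbors=["B", "C"], epsilon=0.0)
state = ("good_signal", "high_battery")  # a coarse, made-up observation
for _ in range(50):
    agent.learn(state, "B", reward=1.0, next_state=state)
    agent.learn(state, "C", reward=-1.0, next_state=state)

print(agent.choose_next_hop(state))  # prints B
```

In a fuller design the observation would also carry position and neighbor trust, and the reward would trade off delivery, delay, and energy use; here those are collapsed into a single number to keep the idea visible.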

Spotting Bad Actors and Staying Connected

Security is woven directly into how the swarm learns. Each drone keeps a running “trust estimate” of nearby drones, based on whether they forward messages, behave consistently, or show signs of attack, such as dropping most packets or presenting many fake identities at once. These trust scores influence future choices: reliable neighbors are favored as relays, while suspicious ones are gradually bypassed and effectively isolated. The framework is tested against a range of realistic threats, including jamming, identity spoofing, Sybil attacks, and blackhole attacks, inside a detailed simulation that couples a network simulator (for radio links and interference) with a drone flight simulator (for movement in 2D and 3D space).
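One simple way to picture a running trust estimate is an exponential moving average over observed forwarding behavior, combined with a cutoff below which a neighbor is no longer used as a relay. The sketch below assumes exactly that; the summary does not give the paper's actual trust formula, so `TrustTable`, the smoothing factor `alpha`, the `threshold`, and the neutral starting score are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class TrustTable:
    """Per-drone view of neighbor trust (hypothetical sketch)."""

    alpha: float = 0.2      # weight of the newest observation (assumed)
    threshold: float = 0.4  # below this, a neighbor is bypassed (assumed)
    initial: float = 0.5    # neutral score for a neighbor not yet observed
    scores: Dict[str, float] = field(default_factory=dict)

    def observe(self, neighbor: str, forwarded: bool) -> float:
        # Blend the old score with the new evidence (1.0 = forwarded, 0.0 = dropped).
        old = self.scores.get(neighbor, self.initial)
        new = (1 - self.alpha) * old + self.alpha * (1.0 if forwarded else 0.0)
        self.scores[neighbor] = new
        return new

    def pick_relay(self, link_quality: Dict[str, float]) -> Optional[str]:
        # Keep only sufficiently trusted neighbors, then rank by trust * link quality.
        ranked = {
            n: q * self.scores.get(n, self.initial)
            for n, q in link_quality.items()
            if self.scores.get(n, self.initial) >= self.threshold
        }
        return max(ranked, key=ranked.get) if ranked else None


table = TrustTable()
for _ in range(10):
    table.observe("honest", forwarded=True)
    table.observe("blackhole", forwarded=False)

# The blackhole has the stronger radio link but is filtered out by its low trust.
print(table.pick_relay({"honest": 0.6, "blackhole": 0.9}))
```

Because the average decays gradually, a single dropped packet barely moves a good neighbor's score, while sustained dropping pushes a neighbor below the cutoff, which matches the "gradually bypassed" behavior described above.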

Making the Drones’ Choices Understandable

Beyond raw performance, the system is designed to explain itself. The explainability module uses well‑known tools from modern AI to show which factors most influenced a decision. For example, a method called SHAP ranks inputs such as trust or signal strength by their impact on a chosen route, while another, LIME, builds simple local “what‑if” models around specific decisions. Visual attention maps highlight which patterns in recent behavior the learning system focused on. Together, these views let human operators see, for instance, that a drone avoided a certain neighbor mainly because its past forwarding behavior looked suspicious, not because of a momentary dip in signal strength. This turns a tangle of neural‑network calculations into a traceable story.
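The idea behind SHAP-style rankings can be shown with an exact Shapley value calculation on a tiny example. The sketch below does not use the SHAP library or the authors' model: `relay_score` is a made-up scoring function, the feature values and baseline are invented, and the brute-force enumeration is only practical for a handful of features.

```python
from itertools import combinations
from math import factorial


def shapley_values(score, x, baseline):
    """Exact Shapley attribution of score(x) - score(baseline) to each feature."""
    names = list(x)
    n = len(names)

    def value(coalition):
        # Features in the coalition take their real value, the rest the baseline.
        mixed = {k: (x[k] if k in coalition else baseline[k]) for k in names}
        return score(mixed)

    phi = {}
    for name in names:
        others = [k for k in names if k != name]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {name}) - value(s))
        phi[name] = total
    return phi


def relay_score(f):
    # Hypothetical: how attractive a neighbor is as the next relay.
    return f["trust"] * (0.7 * f["signal"] + 0.3 * f["battery"])


neighbor = {"trust": 0.1, "signal": 0.8, "battery": 0.9}  # suspicious but strong link
typical = {"trust": 0.8, "signal": 0.7, "battery": 0.9}   # assumed average neighbor

phi = shapley_values(relay_score, neighbor, typical)
for name, contribution in sorted(phi.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"{name}: {contribution:+.4f}")
```

In this toy case the low trust value accounts for almost all of the drop in the neighbor's score, while the slightly better signal contributes a small positive amount and the unchanged battery level contributes nothing. That is the kind of statement an operator would read off a SHAP ranking.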

How the New Approach Performs in Virtual Skies

The researchers pit EMARL‑XAI against classic routing methods and more conventional learning‑based systems. In simulated missions with up to 75 drones and up to 30% of them behaving maliciously, the new framework delivers a higher share of packets, cuts delays, and keeps the rate of wrongly accusing honest drones relatively low. It also learns stable strategies faster than a similar multi‑agent learning system without trust or explanations, and uses less energy per successful delivery than traditional schemes. When the authors systematically remove parts of the design, performance drops: taking out trust sharply increases mistakes about who is malicious, while taking out the explainability tools leaves accuracy mostly intact but causes human evaluators’ confidence to plunge.

What This Means for Real‑World Drone Missions

For a non‑specialist, the main takeaway is that this work brings drone swarms closer to being trustworthy teammates rather than mysterious, fragile gadgets. EMARL‑XAI shows how drones can learn on their own to route messages safely through a shifting, hostile environment, while also leaving behind a clear, human‑readable trail of why they did what they did. Although the study is based on simulations and still needs testing on real hardware, it outlines a path toward drone fleets that are not only smarter and more secure, but also accountable—an essential step if we expect them to help manage disasters, monitor cities, or protect critical infrastructure.

Citation: Alkahtani, H.K., Galiya, Y., Akbayan, B. et al. Explainable multi agent reinforcement learning framework for secure and adaptive communication in UAV swarm based fanets. Sci Rep 16, 11830 (2026). https://doi.org/10.1038/s41598-026-39366-x

Keywords: UAV swarm, secure communication, multi-agent reinforcement learning, explainable AI, wireless network security