Clear Sky Science

Outplaying elite table tennis players with an autonomous robot

Robots Step Onto the Sports Stage

Imagine facing a robot across a table tennis table and discovering that it doesn’t just rally with you: it can beat seasoned competitors. This article introduces Ace, an autonomous table tennis robot that plays at the level of elite human athletes. Beyond being a cool sports gadget, Ace is a glimpse into a future where machines handle lightning-fast, physical interactions with people in everyday settings, from factory floors to homes.

Why Table Tennis Is a Tough Test

Table tennis is far more demanding than it might look on television. In high-level play, the ball can travel faster than 20 meters per second, and players sometimes have less than half a second between strokes to see, decide, and move. On top of speed, the ball’s spin (its rapid rotation) bends its path through the air and changes how it bounces off the table and racket. Spins can exceed 1,000 radians per second, making shots swerve or kick off the table in hard-to-predict ways. Earlier table tennis robots avoided much of this complexity by relying on balls launched from simple machines, using smaller court areas, or largely ignoring spin. Ace instead confronts the full challenge of real matches played with standard equipment and official rules.
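To make the effect of spin concrete, here is a minimal sketch of a ball in flight under gravity, air drag, and a spin-dependent (Magnus) force. It is not the model used for Ace. The ball's mass and diameter are the regulation values, but the drag and Magnus coefficients, the launch height, and the function names are assumptions chosen only for illustration.

```python
import math

# Regulation ball: 40 mm diameter, 2.7 g. The aerodynamic coefficients are
# illustrative assumptions, not values reported for Ace.
MASS = 0.0027          # kg
RADIUS = 0.020         # m
AIR_DENSITY = 1.2      # kg/m^3
DRAG_COEFF = 0.4       # assumed
MAGNUS_COEFF = 0.6     # assumed
GRAVITY = 9.81         # m/s^2
AREA = math.pi * RADIUS ** 2
K_DRAG = 0.5 * AIR_DENSITY * DRAG_COEFF * AREA / MASS
K_MAGNUS = 0.5 * AIR_DENSITY * MAGNUS_COEFF * AREA * RADIUS / MASS


def cross(a, b):
    """Cross product of two 3-vectors."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def acceleration(vel, spin):
    """Gravity plus quadratic drag plus a Magnus term proportional to spin x vel."""
    speed = math.sqrt(sum(c * c for c in vel))
    magnus = cross(spin, vel)
    return (
        -K_DRAG * speed * vel[0] + K_MAGNUS * magnus[0],
        -K_DRAG * speed * vel[1] + K_MAGNUS * magnus[1],
        -GRAVITY - K_DRAG * speed * vel[2] + K_MAGNUS * magnus[2],
    )


def flight_distance(vel, spin, height=0.3, dt=1e-4, max_time=5.0):
    """Integrate until the ball drops to z = 0; return the distance covered in x.

    Axes: x is the direction of travel, z points up. With this convention a
    positive spin about y is topspin and a negative one is backspin.
    """
    pos = [0.0, 0.0, height]
    vel = list(vel)
    t = 0.0
    while pos[2] > 0.0 and t < max_time:
        acc = acceleration(vel, spin)
        for i in range(3):
            vel[i] += acc[i] * dt   # semi-implicit Euler step
            pos[i] += vel[i] * dt
        t += dt
    return pos[0]
```

Calling `flight_distance((10.0, 0.0, 0.0), spin)` with topspin, no spin, and moderate backspin, for example `(0.0, 300.0, 0.0)`, `(0.0, 0.0, 0.0)`, and `(0.0, -150.0, 0.0)`, should give progressively longer distances. Topspin pushes the ball down toward the table, while backspin holds it up.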

Seeing a Fast-Moving Ball in Real Time

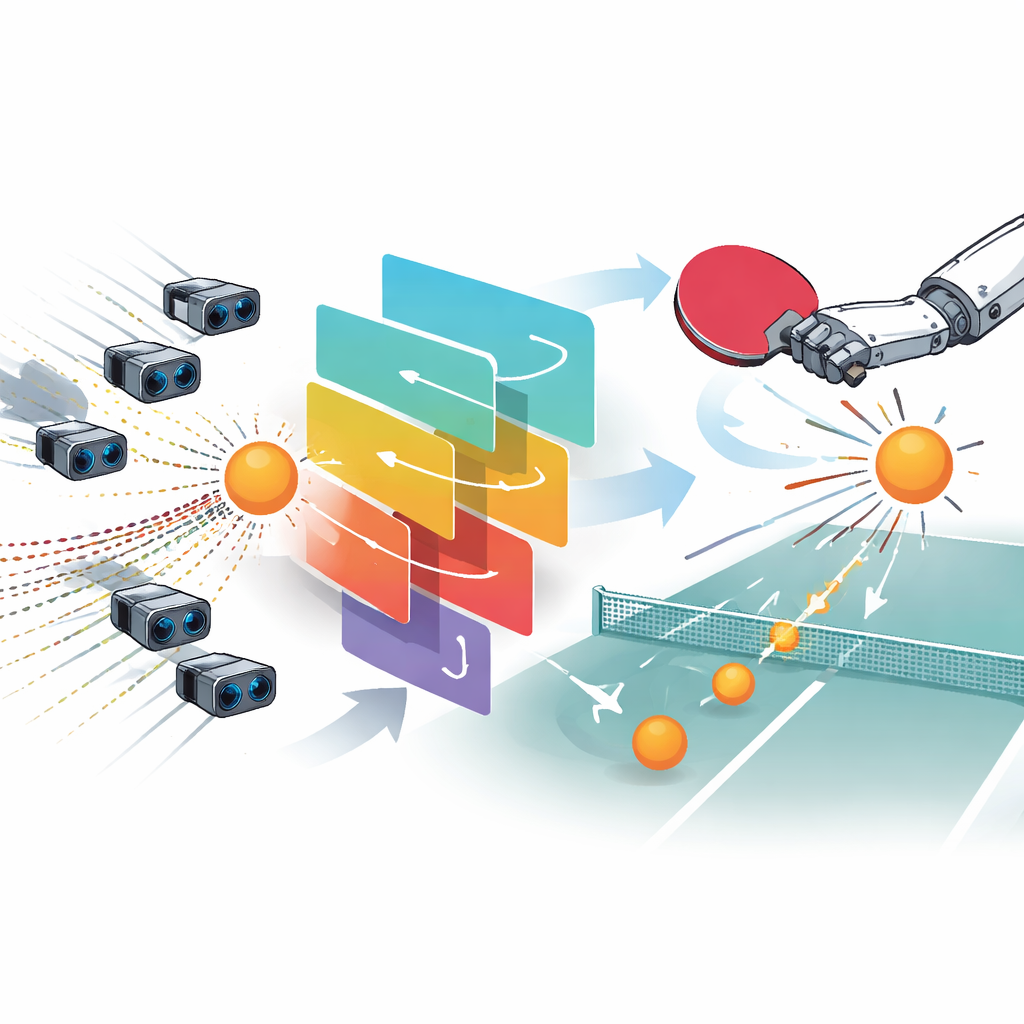

To cope with such speed, Ace needs exceptional vision. The system uses nine conventional cameras placed around an Olympic-sized court to track the ball in three dimensions. These cameras measure the ball’s position about 200 times per second with millimeter-level accuracy and a delay of only a few milliseconds. However, position alone is not enough. Spin is critical in serious play, so Ace also employs three specialized “gaze control” units, each combining a high-speed event-based sensor, steering mirrors, and a telephoto lens. Instead of recording full images, these sensors log only changes in brightness at each pixel, producing streams of tiny “events” that capture motion with extremely low blur. By feeding these events into a neural network and a second, more precise algorithm, Ace estimates how fast and in which direction the ball is spinning, updating that estimate up to several hundred times per second. The system blends these measurements, choosing the best estimate at any moment, and passes them to the control software that decides how the robot should move.
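The article does not say how Ace decides which spin estimate is "best" at a given moment. One plausible rule, sketched below purely as an assumption, is to discard stale estimates and then keep the one with the lowest reported uncertainty. The `SpinEstimate` fields, the `select_spin` function, and the 20-millisecond freshness window are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass(frozen=True)
class SpinEstimate:
    source: str                        # hypothetical label, e.g. "network" or "refined"
    spin: Tuple[float, float, float]   # angular velocity in rad/s
    uncertainty: float                 # hypothetical score; smaller means more trusted
    timestamp: float                   # time of measurement in seconds


def select_spin(
    estimates: Sequence[SpinEstimate],
    now: float,
    max_age: float = 0.02,             # assumed freshness window in seconds
) -> Optional[SpinEstimate]:
    """Return the most trusted estimate that is still fresh, or None."""
    fresh = [e for e in estimates if 0.0 <= now - e.timestamp <= max_age]
    if not fresh:
        return None
    # Prefer low uncertainty; break ties in favor of the newer measurement.
    return min(fresh, key=lambda e: (e.uncertainty, now - e.timestamp))
```

A rule like this lets a fast but rough estimate stand in until a more precise one arrives, which matches the general idea of combining a neural network with a slower, more accurate algorithm.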

How the Robot Chooses and Executes Its Shots

Ace’s “brain” is formed by a set of control policies learned with deep reinforcement learning, a method in which software improves through virtual trial and error. In simulation, the robot repeatedly practices returning individual shots, guided by a reward signal that favors successful returns with specific characteristics such as strong topspin or precise landing spots. A clever twist in the training procedure allows the learning algorithm to use perfect simulated information behind the scenes while the policies themselves see only noisy, realistic sensor readings, helping them transfer smoothly to the real world. During a rally, every 32 milliseconds Ace receives an updated history of ball and robot states, selects one of several learned “skills,” and produces an abstract action. This action is then converted by an optimization routine into a detailed, smooth joint trajectory that respects speed limits and avoids collisions with the table or the robot itself. In parallel, another planner constantly prepares a safe “reset” motion so the arm can quickly recover and be ready for the next shot.
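To illustrate what a smooth, speed-limited joint trajectory can look like, the sketch below uses a standard minimum-jerk (fifth-order polynomial) profile for a single joint. This is a stand-in technique, not the optimization routine described for Ace, and it ignores collisions and coupling between joints. The function names and the 1-millisecond sampling step are assumptions.

```python
import math


def min_duration(delta: float, v_limit: float) -> float:
    """Shortest rest-to-rest duration whose peak speed stays within v_limit.

    A minimum-jerk profile peaks at 1.875 * |delta| / duration at its midpoint.
    """
    return 1.875 * abs(delta) / v_limit


def sample_trajectory(q0: float, q1: float, v_limit: float, dt: float = 0.001):
    """Return (time, position, velocity) samples moving one joint from q0 to q1."""
    delta = q1 - q0
    # Round the duration up to a whole number of steps so the limit still holds.
    steps = max(1, math.ceil(min_duration(delta, v_limit) / dt))
    duration = steps * dt
    samples = []
    for k in range(steps + 1):
        s = k / steps  # normalized time in [0, 1]
        pos = q0 + delta * (10 * s**3 - 15 * s**4 + 6 * s**5)
        vel = delta / duration * (30 * s**2 - 60 * s**3 + 30 * s**4)
        samples.append((k * dt, pos, vel))
    return samples
```

The profile starts and ends at rest, so consecutive motions can be chained without jumps in velocity. A full planner would additionally have to coordinate all joints and check each candidate motion against the table and the robot's own body.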

Custom-Built Body for Athletic Play

To match human agility, the team designed a dedicated robot arm with eight joints—two sliding joints and six rotating ones—providing just enough freedom to position, angle, and swing the racket like a skilled athlete. The arm is made from lightweight, strong metal structures optimized by computer algorithms and manufactured additively, allowing fast motion without losing stiffness. The end of the arm carries a regulation racket and a small cup that holds the ball for one-armed serves, consistent with adaptations allowed in official rules. All actuators are synchronized to respond every millisecond, and the vision system shares the same timing, keeping sensing and movement tightly coordinated. At the lowest level, the robot behaves in a simple and predictable way, with delays kept to a few milliseconds even at top speed, which is crucial for hitting balls that arrive in fractions of a second.

How Ace Performed Against Human Experts

The researchers tested Ace in April 2025 against five elite players (experienced competitors with over a decade of intensive training) and two professionals from Japan’s top league. Matches followed international rules, used standard tables, nets, and balls, and were judged by licensed umpires. Ace played best-of-three matches against the elite group and best-of-five matches against the professionals. It won three of the five matches against elite players and took a game off one of the pros. Data recorded during play show that Ace reliably returns shots of up to about 14 meters per second and handles a wide range of spins, keeping return rates above 75 percent up to substantial spin levels. Unlike the humans, Ace does not rely on especially fast shots to win points. Instead, its point-winning shots look similar in speed and spin to its regular returns, suggesting that consistency, getting the ball back on the table again and again, is its main strength. The robot also tends to hit the ball sooner after it bounces, and its carefully designed serves produce more direct points, or “aces,” against elite players than the humans manage in return.

What This Means Beyond the Ping-Pong Table

Ace shows that a physical robot, not just a computer program, can now challenge human experts in a fast, interactive sport without simplifying the rules. For non-specialists, the key takeaway is not just that a machine can play impressive table tennis; it is that the combination of rapid sensing, learned decision-making, and agile hardware can handle real-world tasks at the limits of human reaction time. The same techniques could eventually help robots work safely alongside people on factory floors, assistive devices coordinate with users’ movements, or new sports-training tools push athletes in ways that were previously impossible. Although modeling human strategy and long-term tactics remains a challenge, Ace marks an important step toward physical AI systems that can share our spaces and respond to us in real time.

Citation: Dürr, P., El Gheche, M., Maeda, G.J. et al. Outplaying elite table tennis players with an autonomous robot. Nature 652, 886–891 (2026). https://doi.org/10.1038/s41586-026-10338-5

Keywords: robot table tennis, physical AI, reinforcement learning, event-based vision, human–robot interaction