Clear Sky Science

Advanced neuronal logic circuit designs using spiking models: a framework for sequential biocomputation

Why Building Computers from Brain Cells Matters

As our laptops and data centers become ever more powerful, they also grow hotter, hungrier for electricity, and harder to shrink. This paper explores a radically different path: using networks of brain-like nerve cells as the building blocks of future computers. The authors show, in computer simulations, how small groups of model neurons can be wired and tuned to behave like familiar digital components, namely logic gates and memory cells, while keeping energy use under control. Their framework could guide the design of future "living chips" built from real neurons, as well as brain-inspired hardware.

From Hot Silicon to Living Circuits

Modern silicon chips are running into physical limits set by heat, power consumption, and how small we can reliably make transistors. At the same time, biological systems, especially neurons, already perform astonishing feats of information processing while using very little energy. Researchers are therefore asking whether we can borrow ideas or even materials from biology to build new kinds of computers. Neuronal networks grown in the lab have already learned to recognize speech and even play simple video games, hinting that cells can be organized into purposeful information processors. However, until now there has been no clear, reusable recipe for making these neural circuits behave like the precise, clocked logic blocks used in digital electronics.

Teaching Spiking Neurons to Speak in Bits

The authors tackle this by designing networks of simulated spiking neurons: mathematical models that mimic how real neurons send brief electrical pulses. They treat the presence of a burst of spikes as a digital "1" and its absence as a "0." By carefully choosing the strengths and timings of the connections between neurons, they build versions of standard logic gates: AND, AND-NOT, NOT, and NAND. These gates are the alphabet of digital logic; NAND alone is enough to construct any Boolean function. A key trick is mixing excitatory connections, which push a neuron toward firing, with inhibitory ones, which suppress its activity. For example, their AND-NOT gate fires only when the "go" input is active while the "stop" input is quiet, closely mirroring how some real neurons weigh incoming signals.
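To make the spike-burst encoding concrete, here is a minimal Python sketch of how excitatory and inhibitory weights can turn a single leaky integrate-and-fire neuron into a logic gate. It is not the authors' model: the neuron type, time step, weights, the tonic bias (used so that NOT and NAND can fire with no input), and the three-spike decoding threshold are all hypothetical choices made for illustration.

```python
"""Toy leaky integrate-and-fire (LIF) logic gates.

Hypothetical illustration only: the time step, membrane constant, weights,
bias and decoding rule are made-up values, not the paper's parameters.
"""

DT = 1.0        # simulation step in ms (assumed)
TAU = 10.0      # membrane time constant in ms (assumed)
V_TH = 1.0      # firing threshold, arbitrary units
STEPS = 100     # length of one read-out window, in steps


def burst(bit, period=5):
    """Encode a bit: a regular spike burst for 1, silence for 0."""
    return [1 if bit and t % period == 0 else 0 for t in range(STEPS)]


def lif(inputs, weights, bias=0.0):
    """Simulate one LIF neuron and return its output spike train.

    inputs  -- list of spike trains (lists of 0/1 of length STEPS)
    weights -- one synaptic weight per input; negative means inhibitory
    bias    -- constant drive per step, letting NOT/NAND fire with no input
    """
    decay = 1.0 - DT / TAU
    v, out = 0.0, []
    for t in range(STEPS):
        v = v * decay + bias + sum(w * s[t] for w, s in zip(weights, inputs))
        if v >= V_TH:
            out.append(1)
            v = 0.0  # reset the membrane after a spike
        else:
            out.append(0)
    return out


def to_bit(train, min_spikes=3):
    """Decode: at least `min_spikes` output spikes in the window reads as 1."""
    return int(sum(train) >= min_spikes)


def and_gate(a, b):
    # Each input alone stays subthreshold; together they cross V_TH.
    return lif([burst(a), burst(b)], [0.35, 0.35])


def and_not_gate(go, stop):
    # "go" alone fires the neuron; "stop" inhibits it strongly.
    return lif([burst(go), burst(stop)], [1.2, -2.0])


def not_gate(a):
    # Tonic bias makes the neuron fire by default; the input silences it.
    return lif([burst(a)], [-2.0], bias=0.3)


def nand_gate(a, b):
    # NOT stage driven by the AND neuron's spikes instead of a raw input.
    return lif([and_gate(a, b)], [-5.0], bias=0.3)


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b,
                  to_bit(and_gate(a, b)),
                  to_bit(and_not_gate(a, b)),
                  to_bit(nand_gate(a, b)))
```

With these toy numbers, one AND input alone leaks away before reaching threshold while two together cross it, and the strongly weighted "stop" input in AND-NOT keeps the membrane below zero. NAND is wired here as a NOT stage fed by the AND neuron's output, which is one plausible arrangement among several; the NOT stage may emit a single spike before inhibition arrives, which the spike-count decoding ignores.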

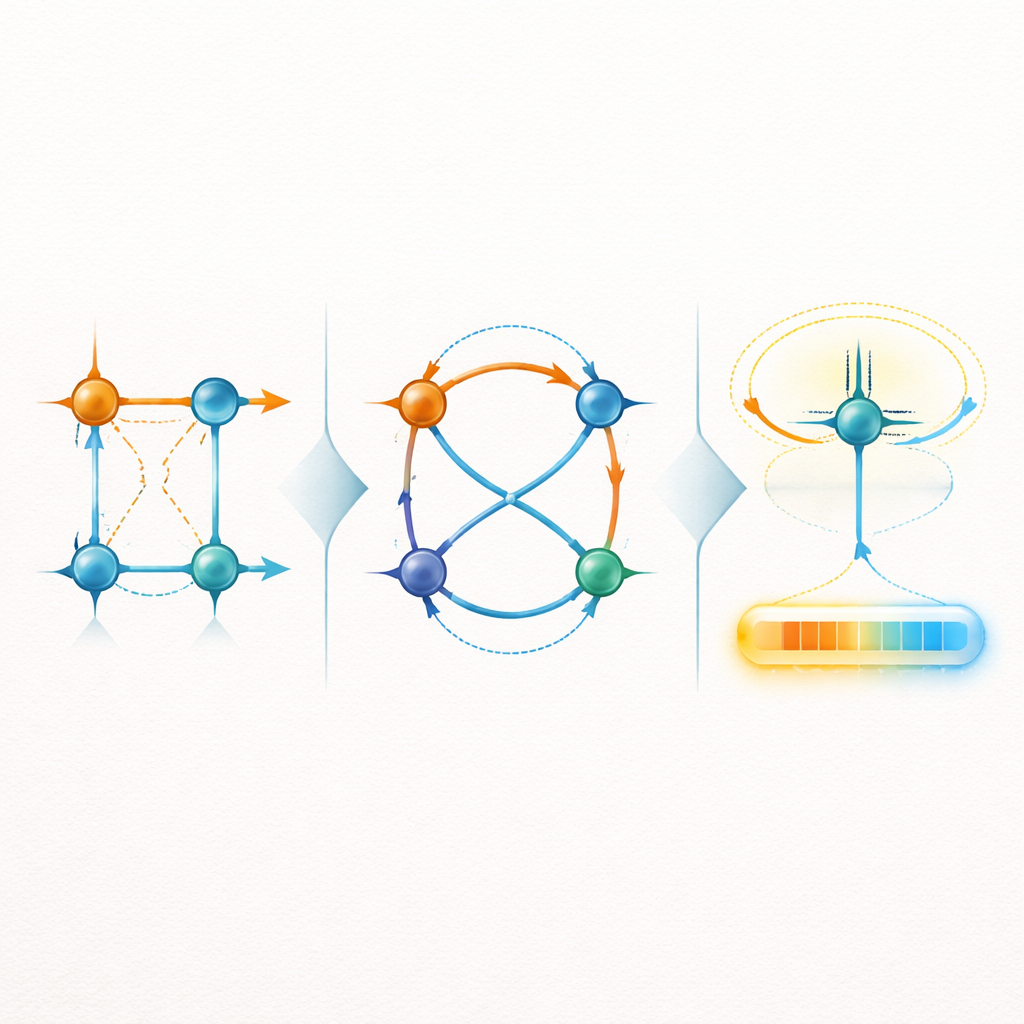

Making Neurons Remember Like Computer Chips

Beyond simple gates, real computers rely on circuits that can remember past inputs. The team shows how to assemble their neuronal gates into classic building blocks of digital memory. They create an SR latch, which stores one bit by feeding the outputs of two gates back into each other, as well as a gated SR latch that responds only when an extra control signal is active. Going further, they design a D flip-flop, a standard memory element that copies its input only at the rising edge of a clock signal. To keep spike timing aligned across these larger networks, they introduce "neuronal buffers": extra neurons that act as adjustable delay lines. The buffers make signals arriving from different paths reach a gate at nearly the same moment, reducing logic errors caused by mistimed spikes.
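The sequential designs described above can be checked at the level of plain bits before worrying about spikes. The Python sketch below is an assumption-laden illustration, not the paper's spiking implementation: it treats each gate as an ideal NAND updated in discrete steps, builds an SR latch from two cross-coupled NAND gates (one standard textbook wiring; the authors' neuronal latch may be wired differently), stacks a gated SR latch and a gated D latch on top, and combines two D latches into a master-slave D flip-flop that changes its output only on a rising clock edge. The helper `buffer_delays` is hypothetical and shows only the arithmetic behind a delay-line buffer: pad each faster path so every input arrives at the same step as the slowest one.

```python
"""Bit-level sketch of latches and a D flip-flop built from NAND gates.

Assumption: gates are ideal NANDs updated in discrete steps. This shows the
logic the neuronal circuits must reproduce, not their spiking implementation.
"""


def nand(a, b):
    return int(not (a and b))


def sr_latch(s_n, r_n, q, q_n, settle=4):
    """Cross-coupled NAND SR latch with active-low set (s_n) and reset (r_n).

    Each gate's output feeds the other gate's input; a few synchronous
    updates let the feedback loop settle. Returns the new (q, q_n).
    """
    for _ in range(settle):
        q, q_n = nand(s_n, q_n), nand(r_n, q)
    return q, q_n


def gated_sr(s, r, en, state):
    """Gated SR latch: S and R reach the latch only while en == 1."""
    return sr_latch(nand(s, en), nand(r, en), *state)


def gated_d(d, en, state):
    """Gated D latch: R is tied to NOT D, so S = R = 1 cannot occur."""
    return gated_sr(d, nand(d, d), en, state)


class DFlipFlop:
    """Master-slave D flip-flop: Q changes only on a rising clock edge."""

    def __init__(self):
        self.master = (0, 1)  # (q, q_n)
        self.slave = (0, 1)

    def step(self, d, clk):
        # Master follows D while the clock is low and freezes when it rises;
        # the slave copies the master while the clock is high.
        self.master = gated_d(d, nand(clk, clk), self.master)
        self.slave = gated_d(self.master[0], clk, self.slave)
        return self.slave[0]


def buffer_delays(path_latency):
    """Hypothetical helper: extra delay (in steps) each path needs so that
    every input reaches the gate together with the slowest one."""
    latest = max(path_latency.values())
    return {name: latest - lat for name, lat in path_latency.items()}


if __name__ == "__main__":
    ff = DFlipFlop()
    for d, clk in [(1, 0), (1, 1), (0, 1), (0, 0), (0, 1)]:
        print(f"D={d} CLK={clk} -> Q={ff.step(d, clk)}")
    print(buffer_delays({"D": 1, "CLK": 3}))  # made-up path latencies
```

In the trace, Q reads 0, 1, 1, 1, 0: D falls to 0 while the clock is still high, yet Q holds 1 until the next rising edge. The buffer helper returns two steps of extra delay for the faster D path and none for the clock path. In a spiking version, a timing mismatch of this kind is what the neuronal buffers are meant to absorb.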

Balancing Brain-Like Activity and Energy Use

A major concern for any biological computing system is metabolic cost: neurons need energy to fire and to reset their internal chemistry. The authors pair their spiking models with an energy model that tracks an abstract measure similar to a cell’s fuel level. They then measure how this energy variable changes as their gates and memory circuits operate. Across simple gates and more complex latches and flip-flops, the simulated energy burden stays within a narrow band, even as circuits grow in size. This suggests that, at least in principle, digital-style logic and storage can be carried out by neurons without runaway energy demands, provided the circuits are designed with timing and excitation–inhibition balance in mind.
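To picture what "stays within a narrow band" means, the Python sketch below tracks a single fuel-like variable for one neuron. It is a generic depletion-and-recovery rule chosen for illustration, not the energy model used in the paper, and the resting level, per-spike cost, and recovery rate are hypothetical. Each spike removes a fixed amount of fuel, and between spikes the level relaxes back toward rest, so steady firing settles into a bounded oscillation instead of draining the cell.

```python
"""Toy 'fuel level' energy variable driven by a spike train.

Assumption: this is NOT the paper's energy model; it is a generic
depletion-and-recovery rule, and all constants are hypothetical.
"""

E_REST = 1.0      # resting fuel level (arbitrary units)
COST = 0.02       # fuel consumed per spike
RECOVERY = 0.05   # fraction of the deficit restored each step


def energy_trace(spikes):
    """Return the fuel level after each time step."""
    e, trace = E_REST, []
    for s in spikes:
        e += RECOVERY * (E_REST - e)   # relax back toward rest
        e -= COST * s                  # pay for a spike, if any
        trace.append(e)
    return trace


def band(trace):
    """Width of the range the fuel level moved through."""
    return max(trace) - min(trace)


if __name__ == "__main__":
    # Regular firing every 5 steps, like a sustained '1' burst.
    spikes = [1 if t % 5 == 0 else 0 for t in range(200)]
    trace = energy_trace(spikes)
    print(f"min={min(trace):.3f} max={max(trace):.3f} band={band(trace):.3f}")
```

Under this toy rule, the deficit just after each spike converges to COST / (1 - (1 - RECOVERY)^5) for one spike every five steps, so raising the cost or the firing rate lowers the floor and widens the band. Keeping a bound of that kind as circuits grow is the property the authors report for their own energy model.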

Steps Toward Living Logic Machines

In plain terms, the paper argues that small networks of neurons can be wired and tuned to behave like the on–off switches and tiny memories inside today’s chips while staying metabolically stable. The work is still virtual (no living neurons were used), but the designs are intended to be transferable to real neuron-on-a-chip platforms and to neuromorphic hardware that imitates spiking neurons in silicon. By offering a library of reusable neuron-based logic components, rules for synchronizing their timing, and estimates of their energy needs, this framework moves neuron-based computers from a vague idea toward an engineered reality, where digital-like precision and biological adaptability might one day coexist in the same computing device.

Citation: Basso, G., Scherer, R. & Barros, M.T. Advanced neuronal logic circuit designs using spiking models: a framework for sequential biocomputation. npj Unconv. Comput. 3, 20 (2026). https://doi.org/10.1038/s44335-026-00066-4

Keywords: neuronal biocomputing, spiking logic circuits, biological memory, neuromorphic hardware, energy-efficient computing