Clear Sky Science · en

Language-based detection of depression with machine learning: systematic review and meta-analysis

Why Your Words Might Reveal Your Mood

Most of us share pieces of our lives in writing every day—through text messages, emails, or online chats. This study asks a striking question: can patterns in those everyday words help flag when someone is struggling with depression? By pulling together more than a decade of research from around the world, the authors examine how well computer programs can spot signs of depression purely from what people say or write, and what it would take for such tools to be used safely in real-world care.

Gathering Clues from Many Conversations

The researchers systematically searched medical and computer science databases and identified 123 studies that tried to detect depression from spoken or written language using machine learning. Together, these studies drew on text from over 35,000 people and nearly 60,000 samples of language. The words came from different places: structured clinical interviews where people were asked about their mood and daily life; short answers to open questions like “How do you feel today?”; therapy chats and counseling text sessions; and everyday messages, emails, or diary-style entries. In all cases, depression status was established independently of the language sample, through standard questionnaires or clinician diagnoses, so the models were tested against a real clinical reference rather than labels inferred from the text itself.

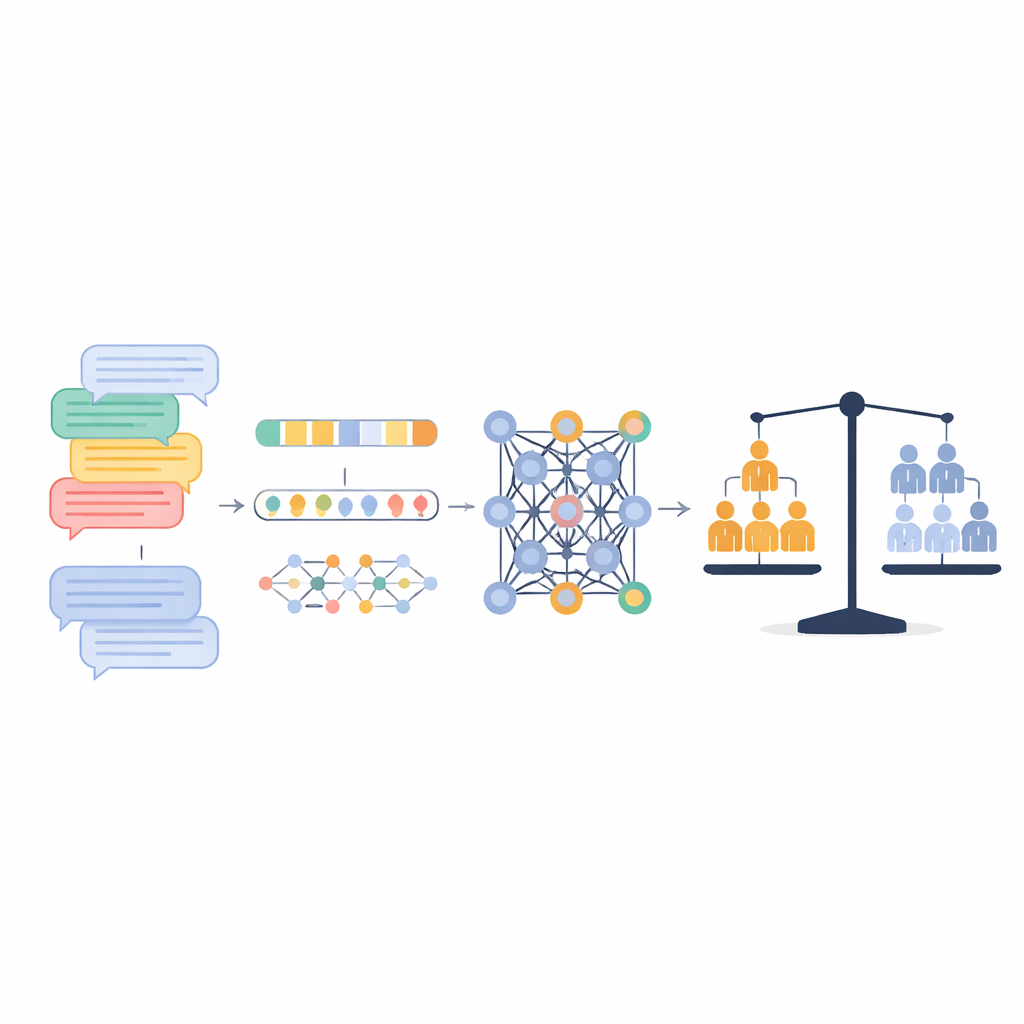

Turning Words into Signals for Computers

To make language usable for algorithms, the studies converted text into numbers in several ways. Some used simple counts of words or phrases, such as how often certain terms appeared. Others relied on dictionaries that group words into psychological categories (for example, negative emotion words or self-focused words), turning each person’s speech into a profile of these categories. More recent work used “embeddings” and large language models like BERT or GPT, which represent words and sentences as lists of numbers, that is, points in a mathematical space whose positions capture subtle shades of meaning and context. On top of these inputs, different types of models were trained, ranging from classic techniques like logistic regression and support vector machines to deep learning systems such as recurrent neural networks and transformer-based architectures.
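To make the first two approaches concrete, here is a minimal sketch of word counting and dictionary-based category profiles. The tiny lexicon, the sample sentence, and the function names are invented for illustration; the dictionaries used in the reviewed studies are far larger and validated, and this is not the code of any particular study.

```python
import re
from collections import Counter

# Hypothetical toy lexicon, for illustration only (an assumption, not a real dictionary).
TOY_LEXICON = {
    "negative_emotion": {"sad", "tired", "hopeless", "worthless", "alone"},
    "self_focus": {"i", "me", "my", "myself"},
}

def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())

def word_counts(text):
    """Count how often each word appears (a 'bag of words')."""
    return Counter(tokenize(text))

def category_profile(text, lexicon=TOY_LEXICON):
    """Return, for each category, the share of tokens that belong to it."""
    tokens = tokenize(text)
    if not tokens:
        return {name: 0.0 for name in lexicon}
    return {
        name: sum(token in words for token in tokens) / len(tokens)
        for name, words in lexicon.items()
    }

# Made-up sample sentence.
sample = "I feel sad and tired, and I keep to myself."
counts = word_counts(sample)
profile = category_profile(sample)
print(counts.most_common(3))
print(profile)
```

Either output can serve as the numeric input to a classifier such as logistic regression; embeddings play the same role but are produced by a trained language model instead of a fixed word list.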

How Well the Machines Did

Across 43 independent datasets suitable for pooling, the models correctly classified people as depressed or not depressed about 80% of the time. Precision (how often a positive result was truly depressed) averaged 78%, and recall (how many depressed cases were correctly found) averaged 76%. AUC, a measure of how well a model separates depressed from non-depressed people across all possible decision cutoffs (0.5 is chance, 1.0 is perfect), was around 0.79, suggesting reasonably strong discriminative ability overall. But performance varied widely from study to study. Systems worked best when they analyzed language from structured clinical interviews that focused directly on mood and symptoms, where accuracy reached about 84%. Performance dropped when the models relied on more free-flowing therapy conversations or everyday chats, where signs of depression are more subtle and mixed with other topics.
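For readers who want to see how these four numbers are calculated, the sketch below computes them for ten made-up people. The labels, scores, and the 0.5 cutoff are illustrative assumptions, not data from the review.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, and recall from 0/1 labels and 0/1 predictions."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }

def auc(y_true, scores):
    """Probability that a randomly chosen depressed person receives a higher
    score than a randomly chosen non-depressed person (ties count as half)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Made-up example: 1 = depressed, 0 = not depressed; scores are model outputs.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.6, 0.3, 0.7, 0.4, 0.2, 0.2, 0.1, 0.1]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # assumed cutoff of 0.5

metrics = classification_metrics(y_true, y_pred)
area = auc(y_true, scores)
print(metrics)  # accuracy 0.8, precision 0.75, recall 0.75
print(area)     # 0.875
```

Accuracy, precision, and recall all depend on the chosen cutoff, while AUC is computed from the raw scores and does not.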

What Matters Most: Context Over Complexity

When the authors dug deeper into why studies differed, one factor consistently stood out: the source of the text. Whether the language came from focused interviews, quick open questions, or natural conversations explained more of the variation in accuracy than the choice of algorithm or feature type. Surprisingly, in the small group of studies that used hand-crafted linguistic dictionaries, these simpler approaches sometimes matched or outperformed more complex deep learning systems. Traditional machine learning methods and cutting-edge transformer models showed similar overall accuracy, hinting that there may be a ceiling imposed by how much information is actually contained in the available snippets of language rather than by the sophistication of the model itself.

Promise, Limits, and Ethical Questions

The authors argue that text-based tools should be seen as early warning and monitoring aids, not replacements for clinicians. Automated systems could help flag people who might benefit from a closer look, reduce the burden of repeated questionnaires, or track changes in mood over time between appointments. But they also highlight serious cautions: language is shaped by culture, gender, and life circumstances, and models trained in one group may fail in another. Many datasets over-represent certain populations and reuse the same interview sources, limiting generalizability. Most studies also reported only simple accuracy, making it hard to judge real-world trade-offs between missing people in need and raising too many false alarms. Issues of privacy, informed consent, and bias are central if ordinary conversation or clinical transcripts are to be analyzed in this way.
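The trade-off between missing people in need and raising false alarms can be illustrated with a small sketch. It uses made-up labels and scores and three arbitrary cutoffs; none of these values come from the reviewed studies.

```python
def missed_and_false_alarms(y_true, scores, threshold):
    """Count depressed people not flagged (missed) and
    non-depressed people flagged (false alarms) at a given cutoff."""
    flagged = [s >= threshold for s in scores]
    missed = sum(1 for t, f in zip(y_true, flagged) if t == 1 and not f)
    false_alarms = sum(1 for t, f in zip(y_true, flagged) if t == 0 and f)
    return missed, false_alarms

# Made-up example: 1 = depressed, 0 = not depressed.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.6, 0.3, 0.7, 0.4, 0.2, 0.2, 0.1, 0.1]

tradeoff = {
    threshold: missed_and_false_alarms(y_true, scores, threshold)
    for threshold in (0.25, 0.5, 0.75)
}
for threshold, (missed, false_alarms) in tradeoff.items():
    print(f"cutoff {threshold}: missed={missed}, false alarms={false_alarms}")
```

In this toy example, lowering the cutoff catches every depressed person but flags two people unnecessarily, while raising it removes the false alarms but misses two cases. A single accuracy figure hides this choice, which is why reporting only accuracy makes real-world value hard to judge.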

What This Means for the Future of Care

For a layperson, the bottom line is that computers are already fairly good—but far from perfect—at picking up signs of depression from the way we speak and write. In carefully designed settings, especially structured interviews, these systems can correctly classify about four out of five people. Yet the study shows that where language comes from and how depression is defined matter as much as, or more than, the latest algorithmic tricks. Before such tools can be safely woven into healthcare, researchers will need more diverse datasets, clearer reporting standards, and designs that keep clinicians in the loop. Used thoughtfully, language-based screening may one day provide a low-friction way to notice when someone is slipping into distress earlier than would otherwise be possible.

Citation: Fisher, H., Jaffe, N.M., Pidvirny, K. et al. Language-based detection of depression with machine learning: systematic review and meta-analysis. npj Digit. Med. 9, 273 (2026). https://doi.org/10.1038/s41746-026-02448-1

Keywords: depression screening, natural language processing, digital mental health, machine learning, clinical interviews