
Potential of large language models for rapid clinical information support: evidence from acute kidney injury knowledge testing

Why this matters for patients and doctors

When doctors face a sick patient, especially someone whose kidneys may be failing, they must make quick, well‑informed decisions. This study asks a striking question: can modern artificial intelligence tools, known as large language models, recall and apply medical facts about acute kidney injury faster and more accurately than real clinicians? And if so, what does that mean for future care?

A common but dangerous kidney problem

Acute kidney injury is a sudden loss of kidney function that often appears in hospital wards and emergency rooms. It can affect about one in ten people admitted to the hospital, and up to half of those in intensive care. If it is missed or treated too late, patients may suffer permanent damage and go on to develop chronic kidney disease, a long‑term condition that affects more than one in ten people worldwide and is linked to higher risk of death, heart disease and reduced quality of life. Because of this, doctors are expected to know how to spot acute kidney injury early and manage it according to established guidelines.

Setting up a man‑versus‑machine challenge

To test how well artificial intelligence handles this topic, the researchers organized an “AI vs. human” challenge at a large internal medicine conference in Germany in 2025. At a self‑service booth, 123 volunteers, ranging from medical students to chief physicians, took the same online quiz. The test consisted of two short patient stories about kidney problems and 15 guideline‑based multiple‑choice questions, all in German. In parallel, 13 publicly available language models from several well‑known providers were given the very same cases and questions in one go, using their standard settings. This design let the team directly compare how accurately and how quickly clinicians and machines handled a focused slice of kidney knowledge.

How humans and machines performed

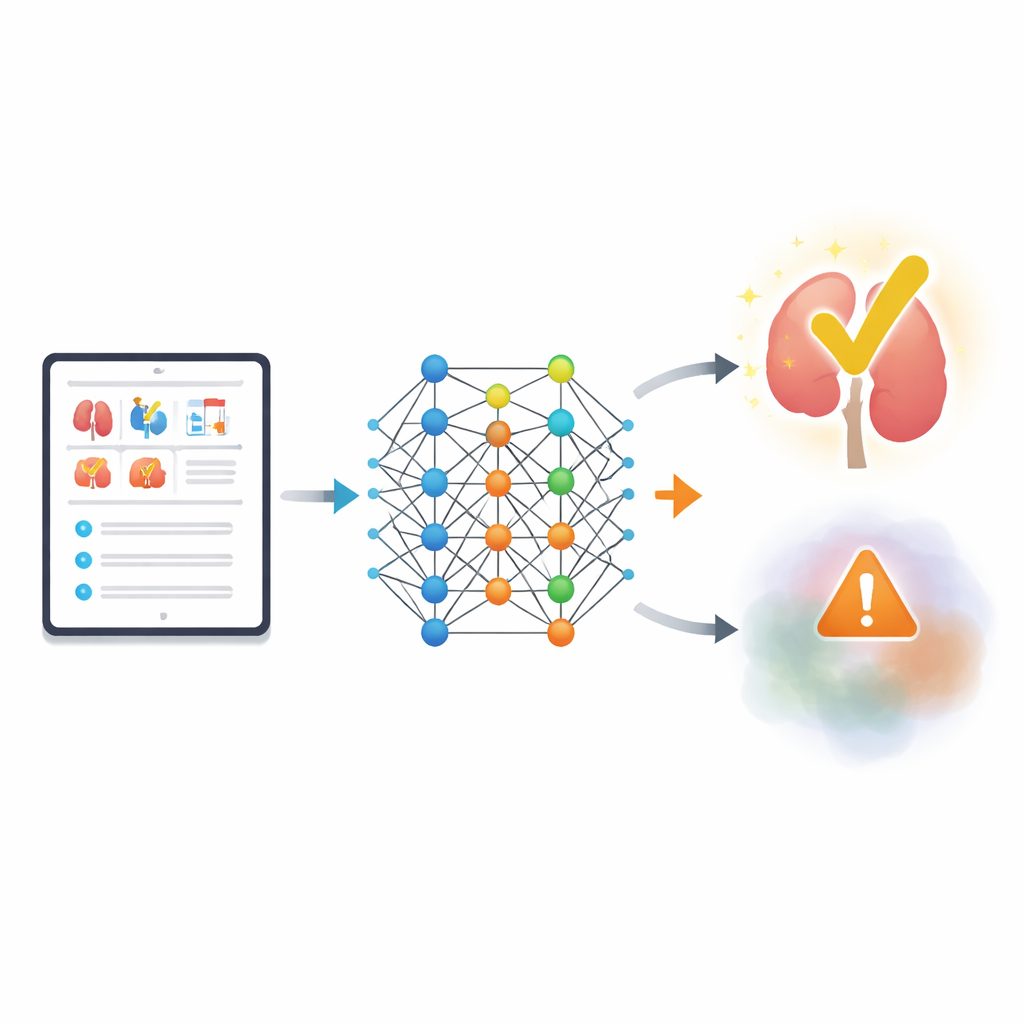

The results were stark. On average, human participants answered fewer than half of the questions correctly, scoring about 7 out of 15 points (roughly 47%). Scores did not differ much between students, residents and senior doctors, though students showed the widest spread. The language models, in contrast, averaged 13.5 out of 15 points, or 90% correct. Several models reached a perfect score, while the weakest still equaled or beat most humans. Only about one in six participants matched the performance of the lowest‑scoring models, and very few came close to the strongest systems. The speed gap was just as notable: one model completed the entire quiz in roughly 30 seconds, while humans needed more than seven minutes on average.
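For readers who want to check the arithmetic behind these comparisons, the short Python sketch below reworks the headline numbers. It uses only the rounded figures quoted in this summary, not the study's underlying data. The variable and function names are illustrative, and the seven-minute human time is treated as a lower bound because the text says "more than seven minutes".

```python
# Rounded figures quoted in the summary above (not the study's raw data).
TOTAL_POINTS = 15
HUMAN_MEAN_SCORE = 7.0        # "about 7 out of 15 points"
MODEL_MEAN_SCORE = 13.5       # "13.5 out of 15 points"
FASTEST_MODEL_SECONDS = 30    # "roughly 30 seconds" for one model
HUMAN_MEAN_SECONDS = 7 * 60   # assumption: lower bound for "more than seven minutes"


def percent_correct(score: float, total: int = TOTAL_POINTS) -> float:
    """Convert a point score into a percentage of the maximum."""
    return 100.0 * score / total


human_pct = percent_correct(HUMAN_MEAN_SCORE)
model_pct = percent_correct(MODEL_MEAN_SCORE)

# Gap in accuracy, expressed in percentage points.
accuracy_gap = model_pct - human_pct

# How many times longer the average human took than the one fast model.
speed_ratio = HUMAN_MEAN_SECONDS / FASTEST_MODEL_SECONDS

print(f"Humans: {human_pct:.1f}% correct")
print(f"Models: {model_pct:.1f}% correct")
print(f"Accuracy gap: {accuracy_gap:.1f} percentage points")
print(f"Speed ratio: at least {speed_ratio:.0f}x")
```

Note that the speed figure compares the average human with a single fast model, so it should be read as a rough illustration of scale and not as an average across all 13 systems.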

Promise and risks of lightning‑fast answers

These findings suggest that large language models could serve as powerful, low‑cost tools for rapid access to medical facts, especially in settings where time and staff are limited, such as emergency rooms, night shifts or rural clinics. The study also hints that how a question is posed matters: in a small follow‑up, one model did even better when asked to respond as if it were an experienced doctor in a life‑or‑death situation. Still, the authors stress that the test measured only recall of guideline‑based facts in a controlled quiz, not full‑blown clinical reasoning, bedside judgment or real‑world patient outcomes.

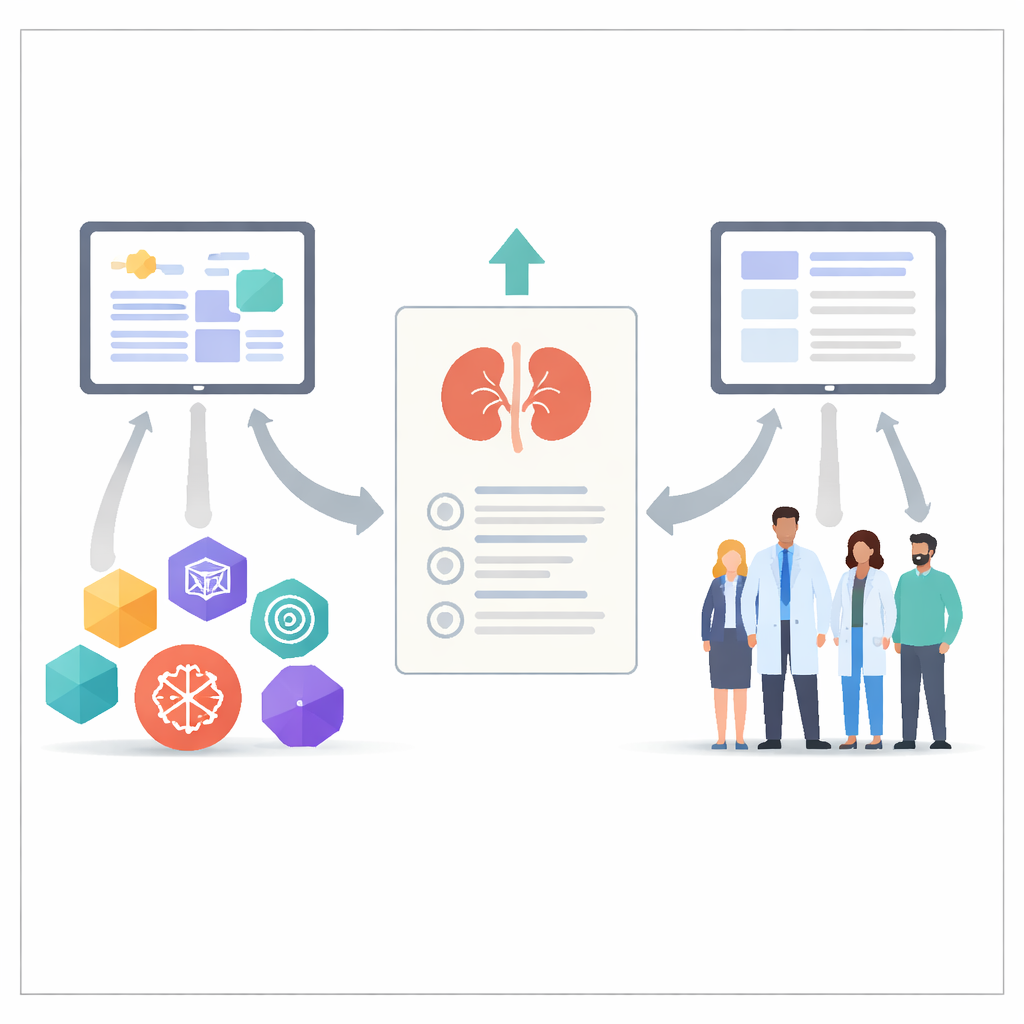

Why human judgment still comes first

The researchers emphasize that today’s language models also have serious weaknesses. They can “hallucinate,” confidently producing false or misleading statements, a risk that may grow in rare or complex cases where guidelines do not give clear answers. They cannot examine a patient, pick up subtle physical clues or convey empathy and trust, all of which are central to good care. Ethical and legal questions also loom large: models change over time, may handle data in opaque ways and cannot assume responsibility for medical decisions. For these reasons, the authors argue that such systems should be used only as supportive tools for knowledge retrieval and decision aid, with clear safeguards, regular testing and strong privacy rules.

Take‑home message for non‑experts

In short, this study shows that modern language models can outperform many doctors and students on a focused written quiz about acute kidney injury, and do so in a fraction of the time. This makes them promising companions for looking up medical facts quickly. But because they can still make confident mistakes and lack human understanding, they are not replacements for clinicians. For the foreseeable future, the best care will come from a blend of fast, well‑designed tools and the careful, empathetic judgment of trained professionals.

Citation: Russ, P., Bedenbender, S., Einloft, J. et al. Potential of large language models for rapid clinical information support: evidence from acute kidney injury knowledge testing. Sci Rep 16, 11224 (2026). https://doi.org/10.1038/s41598-026-46846-7

Keywords: acute kidney injury, large language models, clinical decision support, digital health, nephrology