Clear Sky Science · en

An algorithmic system for arabic fake news detection using neural networks and transformer embeddings with class weighting

Why spotting false stories online matters

In today’s always-connected world, a dramatic headline in Arabic can travel from a little-known Facebook page to millions of phones in minutes. Some of these stories are carefully crafted fakes that can inflame public opinion, distort elections, or sow distrust in institutions. Yet most automated tools for spotting fake news have been built for English. This study tackles that gap by designing and testing an efficient system that can flag misleading Arabic news articles with a level of accuracy approaching that of human fact‑checkers.

Building a realistic picture of Arabic news

To mirror the messy reality of online information, the researchers first assembled a large, mixed collection of 7,474 Arabic news articles published between 2015 and 2025. The texts came from trusted newsrooms, unverified blogs and social media posts, and translated samples from well‑known English fake‑news datasets. Each item was labeled as real or fake, using careful cross‑checking against official sources and Arabic fact‑checking platforms. A subset was double‑checked by three experts, and their strong agreement gave confidence that the labels were reliable. The final dataset reflects how fake stories are actually outnumbered by genuine reporting, a class imbalance that often trips up automated detectors.

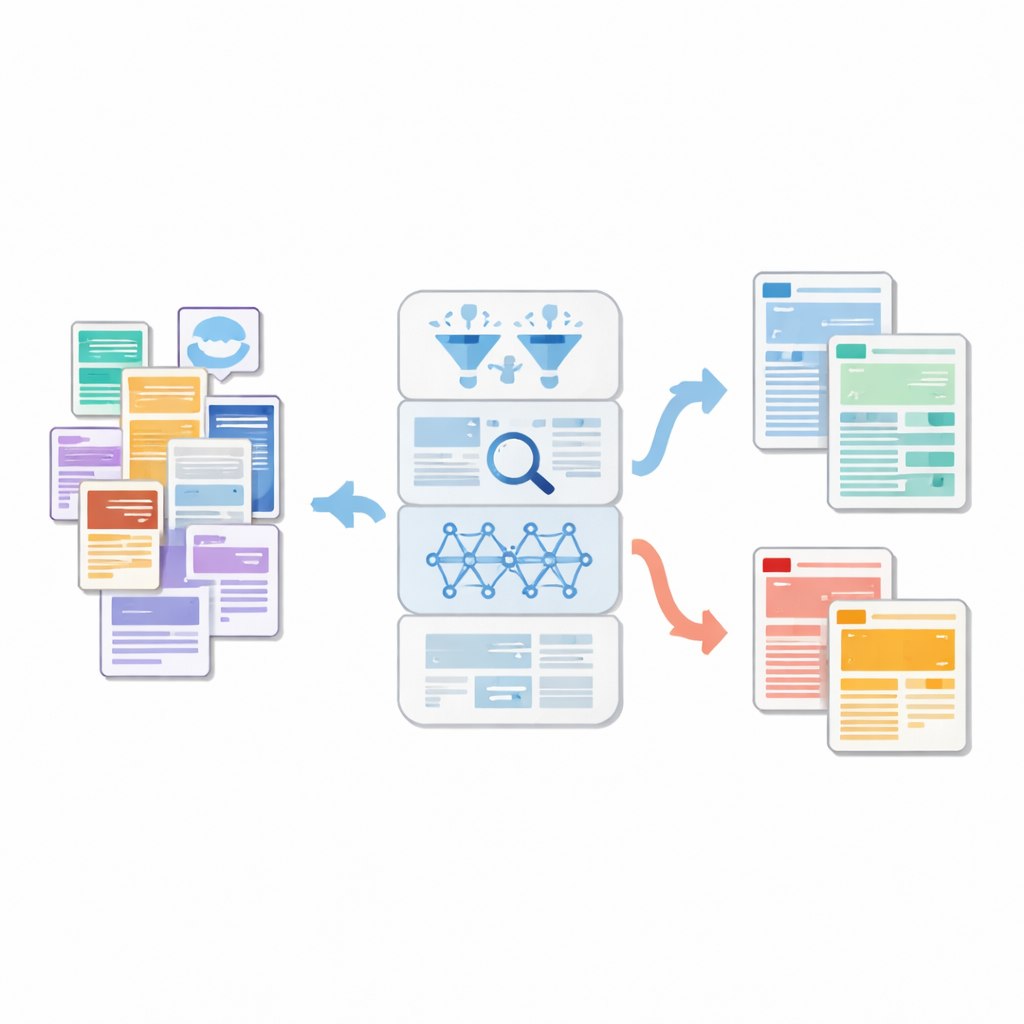

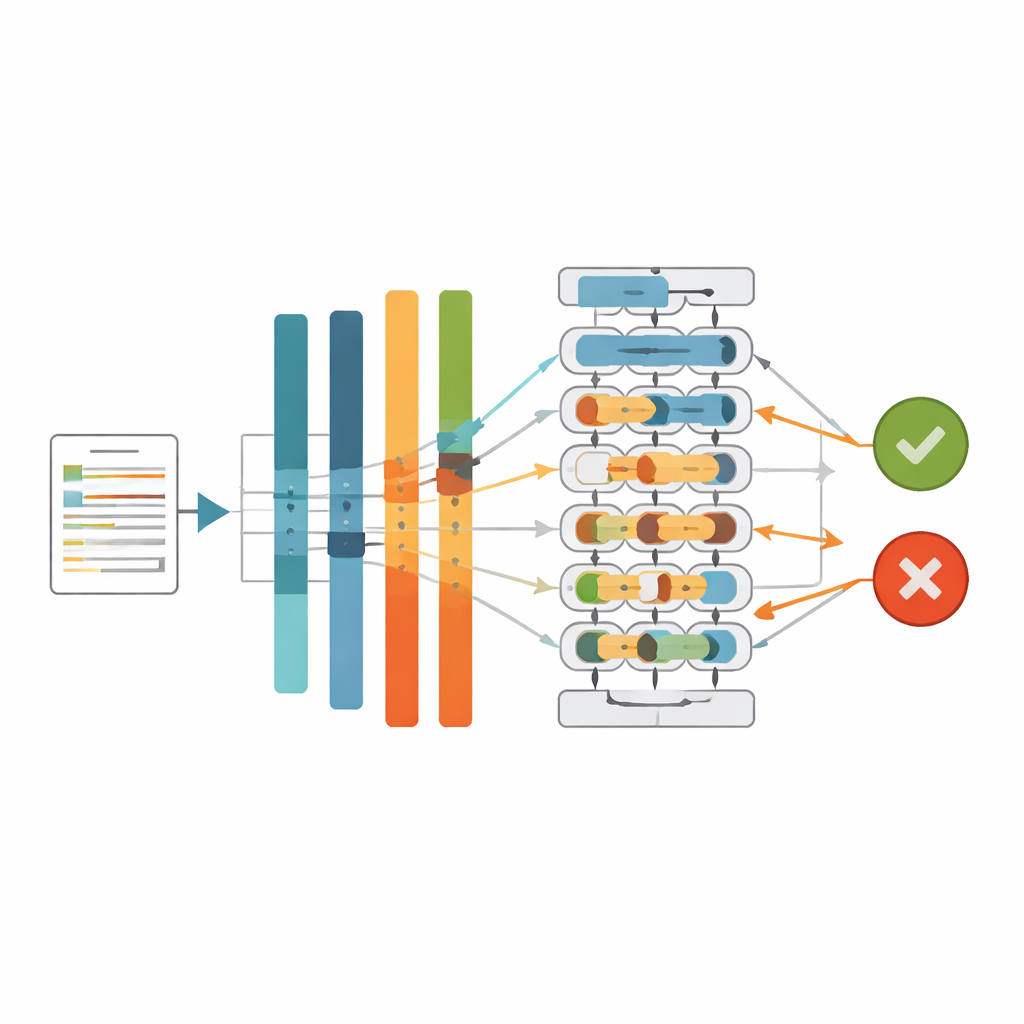

Teaching machines to really read Arabic

Rather than rely on simple word counts, the team turned to a modern family of language models called Transformers, which can capture meaning from context. They used an Arabic model known as CAMeLBERT, trained specifically on Modern Standard Arabic, as a kind of sophisticated reader. Each article was passed through a specialized preprocessing pipeline that cleans away emojis, links, and noisy characters while preserving the linguistic nuances important in Arabic. CAMeLBERT then converted each cleaned article into a dense numerical fingerprint that captures subtle shades of meaning, style, and structure. These fingerprints were fed into a compact deep neural network that learns patterns distinguishing real from fake news.

Fixing the imbalance between real and fake

A key challenge was that real news articles outnumbered fake ones in the dataset, just as they do in daily life. If left unchecked, a model will play it safe and call most stories real, missing dangerous fakes. Many earlier studies tried to fix this by duplicating rare fake examples, inventing synthetic ones, or discarding some real articles, but these tricks can add noise or throw away useful information. Instead, this work focused on an algorithm-level solution called class weighting. During training, mistakes on fake articles are made more “costly” to the model than mistakes on real ones. Without altering the data itself, this pushes the neural network to pay extra attention to the minority fake class and draw a more balanced boundary between true and false stories.

Putting the system to the test

The researchers compared several approaches: traditional machine‑learning models using word‑count features, the same neural network fed by different Arabic Transformer models, and the best Transformer combined with various balancing strategies. CAMeLBERT emerged as the strongest backbone among Arabic Transformers, outperforming alternatives such as AraBERT, MARBERTv2, and AraELECTRA. When paired with class weighting, the CAMeLBERT‑based system correctly classified Arabic news with an accuracy of about 95.5% and an F1‑score—a balance of precision and recall—of about 96.2%. Just as important, the tuned system sharply reduced the most worrying error: fake stories incorrectly treated as real. To open up the “black box,” the team also applied modern explanation tools (LIME and SHAP) that reveal which linguistic cues and patterns in the model’s internal representations tend to push an article toward a fake or real decision.

What this means for everyday readers

From a layperson’s perspective, this study shows that machines can be trained to read Arabic news in a surprisingly nuanced way, spotting subtle stylistic and contextual clues that often separate fabricated posts from professional reporting. By combining a language model tailored to Modern Standard Arabic with a fairness‑aware training strategy, the authors deliver a detector that is both accurate and relatively light‑weight—suited to integration into fact‑checking platforms, newsrooms, and social‑media monitoring tools. While it does not replace human judgment, this system offers a strong foundation for automated Arabic fact‑checking, helping to slow the spread of harmful misinformation and support a healthier information space across the Arabic‑speaking world.

Citation: Saad, M., Abdelrazek, S. & Abdelmaksoud, I.R. An algorithmic system for arabic fake news detection using neural networks and transformer embeddings with class weighting. Sci Rep 16, 12226 (2026). https://doi.org/10.1038/s41598-026-45653-4

Keywords: Arabic fake news, transformer models, neural networks, class imbalance, fact-checking systems