Clear Sky Science

Advancing plant disease classification using an attention-based CNN for intra-dataset and cross-dataset training

Why spotting sick leaves matters

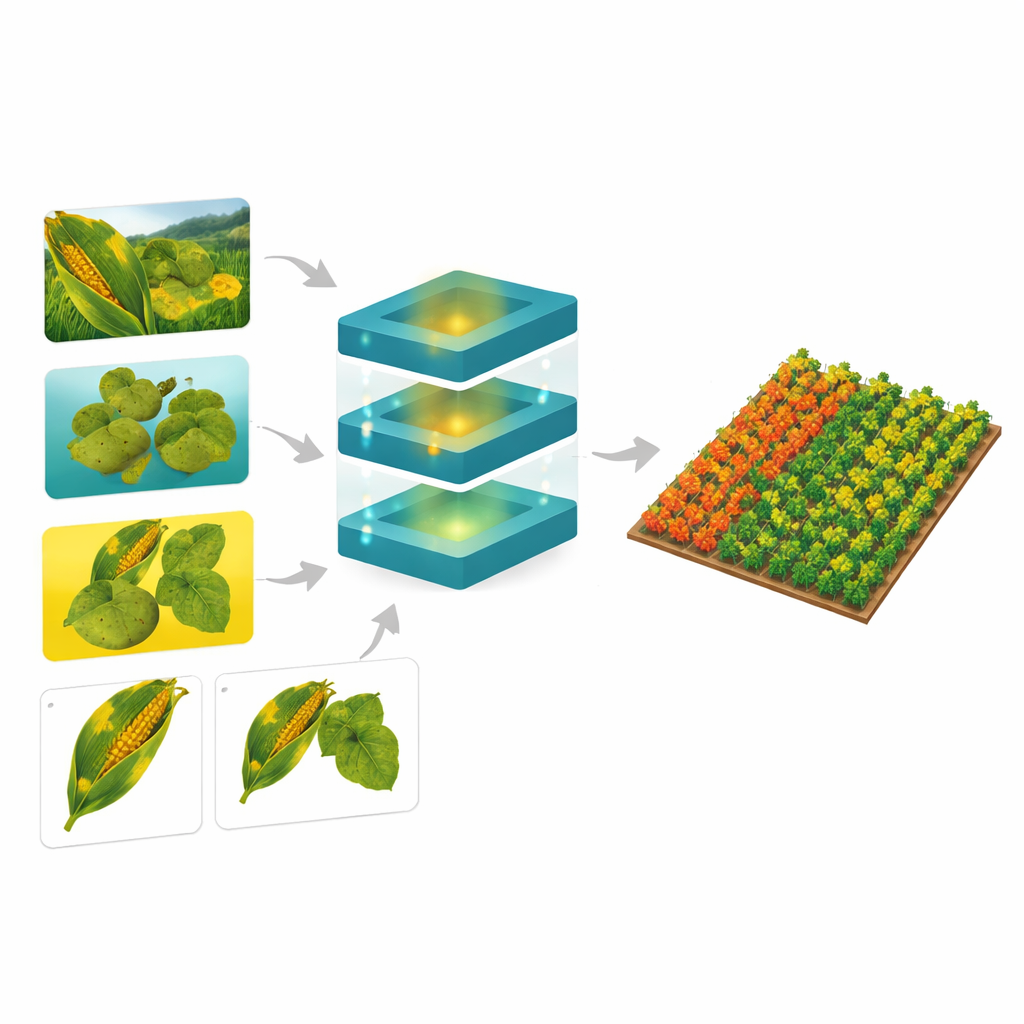

Food crops like corn and potatoes feed hundreds of millions of people, yet leaf diseases can quietly erode yields long before farmers notice visible damage. Today, smartphones and cheap cameras make it easy to collect pictures of crops, but turning those images into reliable early warnings is still hard. This study explores how a smarter kind of image-recognition system can learn to detect plant diseases not just in tidy lab photos, but also in messy real-world images taken in fields under changing light and background conditions.

Teaching computers to read leaves

Modern image-recognition systems often rely on convolutional neural networks, or CNNs, which excel at finding patterns in pictures—edges, spots, shapes and textures. These tools have already shown they can classify plant diseases with impressive accuracy when they are trained and tested on the same carefully curated dataset. However, most earlier work focused on what the authors call “intra-dataset” training: the model practices and takes its exam on very similar images. When such a model meets leaves photographed with a different camera, in a different country, or against a cluttered background, its performance can fall sharply.

Helping the model pay attention

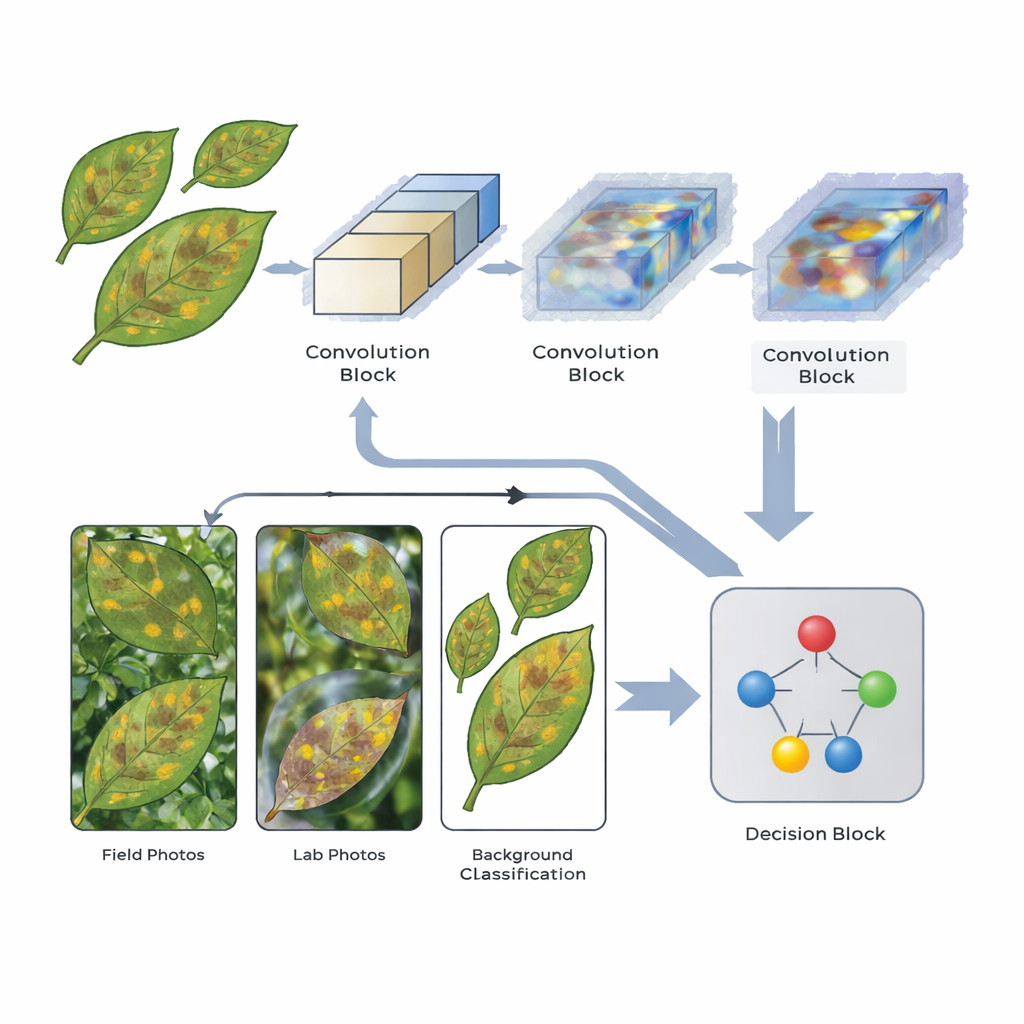

The researchers designed a streamlined CNN that includes special attention mechanisms—components that help the network concentrate on the most informative parts of each image. The model processes each leaf picture through three stages of feature extraction. After the second and third stages, attention layers reweight the internal signals so that patterns linked to disease spots and lesions are emphasized while less relevant details, such as background soil or sky, are toned down. The architecture is intentionally lightweight, using moderate image sizes and limited depth so that it can run on relatively modest hardware, a practical consideration for real-world agricultural use.
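The reweighting idea can be illustrated with a small sketch. The summary does not say which kind of attention the authors used, so the example below assumes a simple channel-attention scheme in the squeeze-and-excitation style, written in plain Python. The function name `channel_attention` and the gate parameters are hypothetical, and the weights are hand-picked for illustration rather than learned.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def channel_attention(feature_maps, gate_weights, gate_bias):
    """Reweight feature maps with one gate per channel.

    feature_maps: list of C channels, each an H x W list of floats.
    gate_weights: C x C matrix (hypothetical; learned in a real network).
    gate_bias:    list of C floats (also hypothetical).
    """
    # Squeeze: global average pooling gives one summary number per channel.
    summaries = [
        sum(sum(row) for row in channel) / (len(channel) * len(channel[0]))
        for channel in feature_maps
    ]
    # Excite: a small dense layer plus a sigmoid yields gates in (0, 1).
    gates = [
        sigmoid(sum(w * s for w, s in zip(weight_row, summaries)) + b)
        for weight_row, b in zip(gate_weights, gate_bias)
    ]
    # Scale: multiply every value in a channel by that channel's gate.
    reweighted = [
        [[value * gate for value in row] for row in channel]
        for channel, gate in zip(feature_maps, gates)
    ]
    return reweighted, gates


# Toy input: channel 0 stands in for lesion texture, channel 1 for background.
features = [
    [[1.0, 2.0], [3.0, 4.0]],
    [[0.5, 0.5], [0.5, 0.5]],
]
weights = [[1.0, 0.0], [0.0, -4.0]]  # hand-picked, not learned
bias = [0.0, 0.0]

reweighted, gates = channel_attention(features, weights, bias)
print("gates per channel:", gates)
```

With these hand-picked weights the first channel keeps most of its signal while the second is strongly damped, which mirrors the article's description of emphasizing lesions and toning down background.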

Testing on many kinds of leaf photos

To see how well this design holds up, the team drew on five publicly available leaf-image collections: the widely used PlantVillage dataset of clean, centered leaves; PlantDoc, which contains more realistic field photos; Digipathos; an NLB set focused on a single corn disease, northern leaf blight; and the Corn Disease & Severity (CD&S) dataset, which offers multiple versions of each image (original field shots, background-removed leaves, and leaves placed on uniform black or white backgrounds). The model learned to recognize several diseases affecting corn and potatoes, as well as healthy leaves, and its performance was evaluated with standard measures such as accuracy, precision, and recall.
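For readers unfamiliar with these measures, the sketch below shows how accuracy, precision, and recall are computed from a list of true labels and a list of predicted labels. It is a minimal illustration in plain Python. The function name `classification_metrics` and the class labels are made up for the example and do not come from the study.

```python
def classification_metrics(true_labels, predicted_labels, positive_class):
    """Return (accuracy, precision, recall) for one class of interest."""
    pairs = list(zip(true_labels, predicted_labels))

    # Accuracy counts every correct prediction, whatever the class.
    correct = sum(1 for t, p in pairs if t == p)

    # Precision and recall are defined relative to one "positive" class.
    tp = sum(1 for t, p in pairs if t == positive_class and p == positive_class)
    fp = sum(1 for t, p in pairs if t != positive_class and p == positive_class)
    fn = sum(1 for t, p in pairs if t == positive_class and p != positive_class)

    accuracy = correct / len(pairs)
    # Precision: of the leaves flagged as this class, how many truly were.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    # Recall: of the leaves truly in this class, how many were found.
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return accuracy, precision, recall


# Illustrative labels only; these are not results from the paper.
truth = ["blight", "blight", "rust", "healthy", "healthy", "blight"]
guess = ["blight", "rust", "rust", "healthy", "blight", "blight"]

acc, prec, rec = classification_metrics(truth, guess, positive_class="blight")
print(f"accuracy={acc:.3f} precision={prec:.3f} recall={rec:.3f}")
```

Accuracy alone can hide problems when some diseases are rare, which is one reason studies like this one report precision and recall alongside it.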

Strong results at home and abroad

When trained and tested within the same dataset, the attention-based network matched or outperformed many earlier methods. It reached 98% accuracy for corn disease classification and 99.38% accuracy for potato disease classification on the PlantVillage images, and 98% accuracy on the CD&S field images. More demanding experiments asked the model to train on one dataset and then classify diseases in another, a “cross-dataset” challenge closer to real-world deployment. Here, the best results came when the system was trained on CD&S images whose backgrounds had been removed. Under this setting it achieved an average accuracy of 82.93% across several other corn datasets, improving on previous cross-dataset studies. The model was less consistent for potato images across datasets, but still provided a first benchmark for that task.
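The cross-dataset protocol can be sketched as a short loop: fit a model on one source collection, score it on every other collection without retraining, and then average the scores. The example below is a toy illustration in plain Python, not the authors' pipeline. A one-feature nearest-centroid classifier stands in for the CNN, and the dataset names and numbers are invented placeholders.

```python
from statistics import mean


def accuracy(model, samples):
    """Fraction of (feature, label) samples the model labels correctly."""
    return sum(1 for x, y in samples if model(x) == y) / len(samples)


def train_nearest_centroid(samples):
    """Toy stand-in for the CNN: label by the closest class mean."""
    by_label = {}
    for x, y in samples:
        by_label.setdefault(y, []).append(x)
    centroids = {label: mean(values) for label, values in by_label.items()}
    return lambda x: min(centroids, key=lambda label: abs(x - centroids[label]))


def cross_dataset_evaluation(train_fn, datasets, source_name):
    """Train on one dataset, then test on every other dataset unchanged."""
    model = train_fn(datasets[source_name])
    per_target = {
        name: accuracy(model, samples)
        for name, samples in datasets.items()
        if name != source_name
    }
    return per_target, mean(list(per_target.values()))


# Invented toy data: one number per "image", not real measurements.
datasets = {
    "source": [(0.1, "healthy"), (0.2, "healthy"),
               (0.8, "diseased"), (0.9, "diseased")],
    "target_a": [(0.0, "healthy"), (1.0, "diseased")],
    "target_b": [(0.3, "healthy"), (0.4, "diseased"),
                 (0.7, "diseased"), (0.6, "healthy")],
}

per_target, average = cross_dataset_evaluation(
    train_nearest_centroid, datasets, source_name="source"
)
print(per_target, average)
```

In this toy setup the model does well on the target that resembles its training data and worse on the one that does not, the same pattern that makes cross-dataset averages lower than intra-dataset scores.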

What this means for smarter farming

For non-specialists, the central message is that how we train disease-detection systems matters as much as what algorithms we use. By helping the network focus on the actual symptoms on leaves instead of the surrounding scenery, attention mechanisms make the system more capable of handling new kinds of images. While the study notes that public datasets still do not capture the full messiness of real farms, its results show that a relatively compact model can generalize better across different image collections. In the long run, such tools could support phone-based or drone-based scouts that warn farmers about emerging problems before they spread, contributing to more precise, less wasteful use of chemicals and better protection of global food supplies.

Citation: Mahapatra, P., Panda, M., Dash, S.K. et al. Advancing plant disease classification using an attention-based CNN for intra-dataset and cross-dataset training. Sci Rep 16, 10925 (2026). https://doi.org/10.1038/s41598-026-45464-7

Keywords: plant disease detection, deep learning, attention CNN, crop health monitoring, cross-dataset generalization