Clear Sky Science

Negotiation-augmented federated reinforcement learning for conflict-free edge–cloud stream scheduling

Why smart apps need smoother traffic behind the scenes

From live traffic maps to factory sensors, many modern apps depend on a constant stream of data that must be processed in milliseconds. To keep up, companies spread computations across nearby edge devices and distant cloud servers. But when many parts of this network make their own choices at once, they can clash, causing digital traffic jams, rising costs, and sluggish responses. This paper explores a new way to coordinate those decisions so streaming apps stay fast, stable, and efficient even under wildly changing demand.

The growing pains of edge and cloud teamwork

Smart cameras, vehicles, and industrial sensors now send endless streams of data that must be analyzed in real time. Edge computers close to users cut delay, while cloud data centers add extra muscle. Yet deciding where each piece of work should run is tricky, because tasks depend on each other and workloads spike without warning. Classic scheduling methods rely on fixed rules or offline planning. They work in calmer settings but struggle when thousands of tasks and machines must adapt every second across multiple regions. Purely centralized control can become a bottleneck, while fully independent local controllers often fight over shared resources.

Learning to schedule, but without stepping on toes

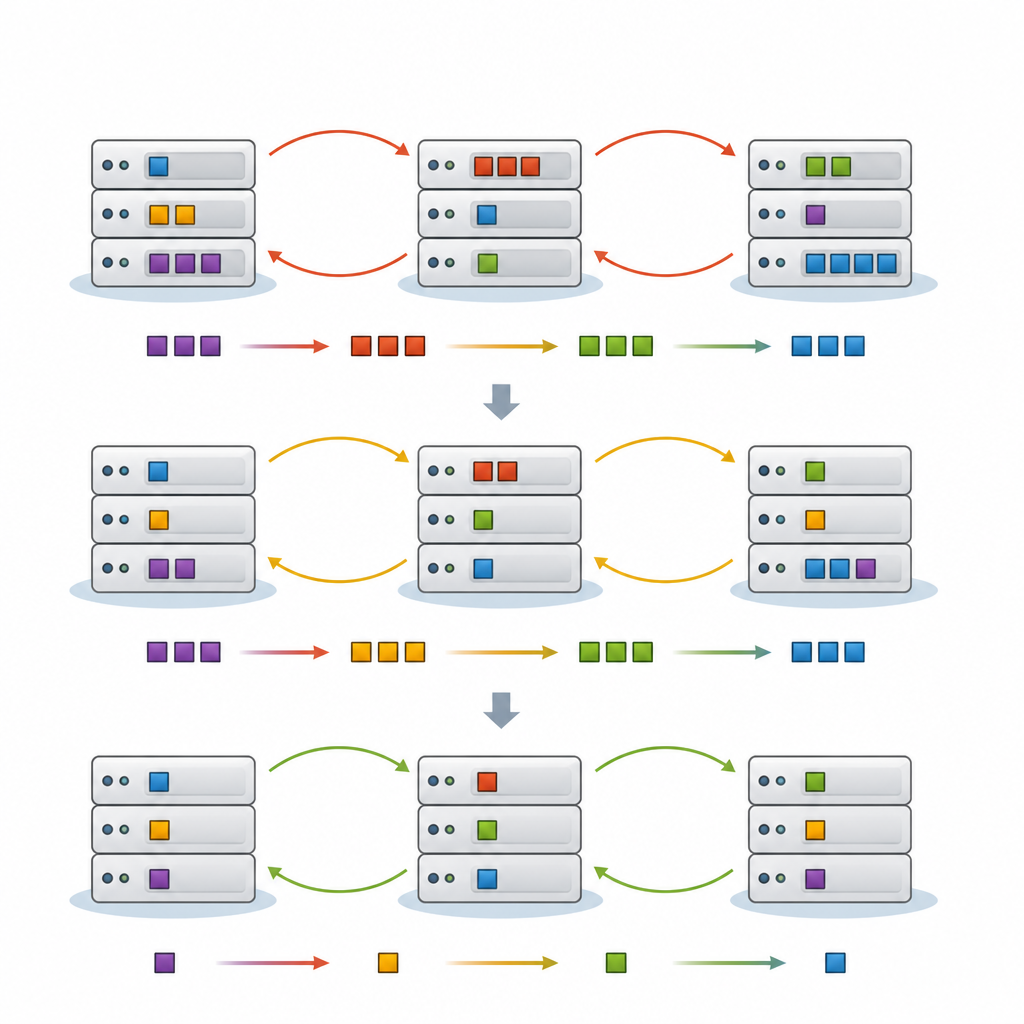

Recent approaches let software agents learn good scheduling rules by trial and error, a technique called reinforcement learning. Federated learning allows many agents to train together while keeping their raw data local, which is important for privacy and bandwidth. However, when each cluster of edge machines learns on its own and only occasionally syncs models, their actions can still conflict. Two clusters might offload to the same cloud servers at once, or shuffle tasks back and forth, creating extra delay and wasted energy. The authors argue that what is missing is an explicit way for these agents to talk to each other and negotiate before they act.
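
To make the clash concrete, here is a minimal Python sketch. It assumes each cluster follows a greedy "offload to the least-loaded cloud server" rule and reads the same snapshot of server load before acting. The server names, load figures, and the helper functions `pick_least_loaded` and `apply_offloads` are hypothetical and are not taken from the paper.

```python
# Hypothetical illustration: two edge clusters decide independently using the
# same snapshot of cloud-server load, with no coordination between them.


def pick_least_loaded(loads: dict[str, float]) -> str:
    """Greedy local rule: offload to the server with the lowest observed load."""
    return min(loads, key=loads.get)


def apply_offloads(loads: dict[str, float],
                   moves: list[tuple[str, float]]) -> dict[str, float]:
    """Return the new loads after each (server, extra_load) move is applied."""
    updated = dict(loads)
    for server, extra in moves:
        updated[server] += extra
    return updated


# Snapshot both clusters observed before acting (illustrative load, in %).
snapshot = {"cloud-a": 40.0, "cloud-b": 55.0, "cloud-c": 60.0}

# Each cluster wants to push 35% worth of load to the cloud.
choice_1 = pick_least_loaded(snapshot)
choice_2 = pick_least_loaded(snapshot)
after = apply_offloads(snapshot, [(choice_1, 35.0), (choice_2, 35.0)])

print(choice_1, choice_2)  # prints: cloud-a cloud-a
print(after)               # cloud-a ends at 110.0, past full capacity
```

Because neither cluster knows what the other is about to do, both send their work to cloud-a and push it beyond full capacity, which is the kind of collision the authors want negotiation to prevent.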

A negotiation table for digital schedulers

The proposed framework, FedNeg-RL, adds a lightweight negotiation layer on top of federated reinforcement learning. Each cluster of edge devices has a representative agent that monitors local load, predicts near-future traffic, and tracks which tasks are most sensitive to delay. Before making changes that might affect shared links or cloud nodes, these representatives exchange brief summaries, such as expected load and the likely impact of their moves, rather than raw data. Using simple argumentation-style protocols, they negotiate a joint plan that avoids clashes, and each cluster then applies the agreed action locally. Over time, the learning process is shaped to favor plans that keep latency low, hold energy use and cost in check, and make conflicts rare.
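
The negotiate-then-act cycle can be sketched in a few lines of Python. This is a simplified stand-in, not the authors' argumentation protocol: it assumes each representative broadcasts a small `Proposal` (how much load it wants to offload and how delay-sensitive its tasks are), and that every agent then applies the same deterministic rule to the same messages, so all of them reach an identical joint plan without a central scheduler. The `Proposal` fields, the 100 percent capacity limit, the priority rule, and the reward weights in `shaped_reward` are all illustrative assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Proposal:
    """Summary a representative shares instead of raw data (hypothetical fields)."""
    cluster: str
    extra_load: float         # load (%) it wants to offload to the cloud
    delay_sensitivity: float  # higher = more latency-critical tasks


def negotiate(loads: dict[str, float], proposals: list[Proposal],
              capacity: float = 100.0) -> dict[str, str | None]:
    """Turn the exchanged proposals into a conflict-free joint plan.

    Every agent runs this same function on the same messages, so they all
    compute the same plan and can then act locally.
    """
    projected = dict(loads)
    plan: dict[str, str | None] = {}
    # Most delay-sensitive clusters choose first; ties are broken by name.
    for p in sorted(proposals, key=lambda q: (-q.delay_sensitivity, q.cluster)):
        fits = [s for s in projected if projected[s] + p.extra_load <= capacity]
        if not fits:
            plan[p.cluster] = None  # no safe target: keep the work local
            continue
        target = min(fits, key=lambda s: (projected[s], s))
        projected[target] += p.extra_load  # later proposals see this commitment
        plan[p.cluster] = target
    return plan


def shaped_reward(latency_ms: float, energy_j: float, cost: float,
                  conflicts: int, w=(1.0, 0.1, 0.5, 10.0)) -> float:
    """Hypothetical weighted reward penalizing latency, energy, cost, conflicts."""
    return -(w[0] * latency_ms + w[1] * energy_j + w[2] * cost + w[3] * conflicts)


snapshot = {"cloud-a": 40.0, "cloud-b": 55.0, "cloud-c": 60.0}
proposals = [Proposal("cluster-1", 35.0, 0.9), Proposal("cluster-2", 35.0, 0.4)]
print(negotiate(snapshot, proposals))
# prints: {'cluster-1': 'cloud-a', 'cluster-2': 'cloud-b'}
```

Here the more delay-sensitive cluster-1 takes cloud-a, and cluster-2, which sees that commitment in the shared calculation, goes to cloud-b, so no server exceeds capacity. The weighted penalty in `shaped_reward` is one common way to fold "low latency, reasonable energy and cost, few conflicts" into a single learning signal; the paper's actual reward may be defined differently.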

Testing the approach in busy virtual cities

To evaluate FedNeg-RL, the authors built detailed simulations of Internet-of-Things-style workloads, including hundreds of interconnected tasks and bursty, hard-to-predict data streams similar to those found in smart city traffic monitoring. They compared their method with rule-based schedulers, evolutionary algorithms, standard local reinforcement learning, pure federated learning, and a single centralized learning agent. Across many scenarios, FedNeg-RL cut the number of disruptive reconfigurations triggered by conflicts by up to 41 percent, reduced tail latency, which tracks the slowest 10 percent of responses, by about 20 to 28 percent, and lowered adaptation overhead by roughly 35 percent. It also spread energy use more evenly and scaled well as the number of tasks and machines grew.
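
High-end, or tail, latency is usually reported as a high percentile of response times. The Python sketch below assumes the "slowest 10 percent" figure corresponds to a 90th-percentile (p90) latency computed by the nearest-rank method, and shows how a percentage reduction like the ones above is calculated. The paper may use a different percentile definition, and the latency lists are made-up illustrative numbers, not results from the study.

```python
import math


def p90(latencies_ms: list[float]) -> float:
    """90th-percentile latency by the nearest-rank method: about 90% of
    responses are at or below this value; only the slowest ~10% exceed it."""
    ordered = sorted(latencies_ms)
    rank = math.ceil(0.9 * len(ordered))  # 1-based nearest rank
    return ordered[rank - 1]


def percent_reduction(before: float, after: float) -> float:
    """Relative improvement, e.g. 40 ms -> 30 ms is a 25% reduction."""
    return 100.0 * (before - after) / before


# Illustrative numbers only (not data from the paper): 10 response times each.
baseline = [12, 14, 15, 15, 16, 18, 20, 22, 40, 95]
negotiated = [12, 13, 14, 15, 15, 16, 18, 19, 30, 70]

b, n = p90(baseline), p90(negotiated)
print(b, n, round(percent_reduction(b, n), 1))  # prints: 40 30 25.0
```

Percentiles are preferred over averages here because a handful of very slow responses can dominate how responsive a streaming app feels, even when the mean looks healthy.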

What this means for future connected systems

In plain terms, FedNeg-RL shows that teaching software controllers not only to learn from experience but also to negotiate with their peers can make shared edge and cloud infrastructure run more smoothly. Instead of scattered, competing decisions, clusters coordinate just enough to keep streaming applications responsive, stable, and efficient, without revealing private data or relying on a single central brain. As real-world deployments grow larger and more complex, such negotiation-aware learning could help ensure that the invisible computing fabric behind smart cities, factories, and services keeps working quietly in the background, even as demands constantly change.

Citation: Kang, X., Hua, C. Negotiation-augmented federated reinforcement learning for conflict-free edge–cloud stream scheduling. Sci Rep 16, 15158 (2026). https://doi.org/10.1038/s41598-026-45004-3

Keywords: edge cloud scheduling, federated reinforcement learning, IoT streaming, multi-agent negotiation, latency reduction