Clear Sky Science

A fatigue driving detection method based on driver posture and facial state analysis

Why tired drivers matter to everyone

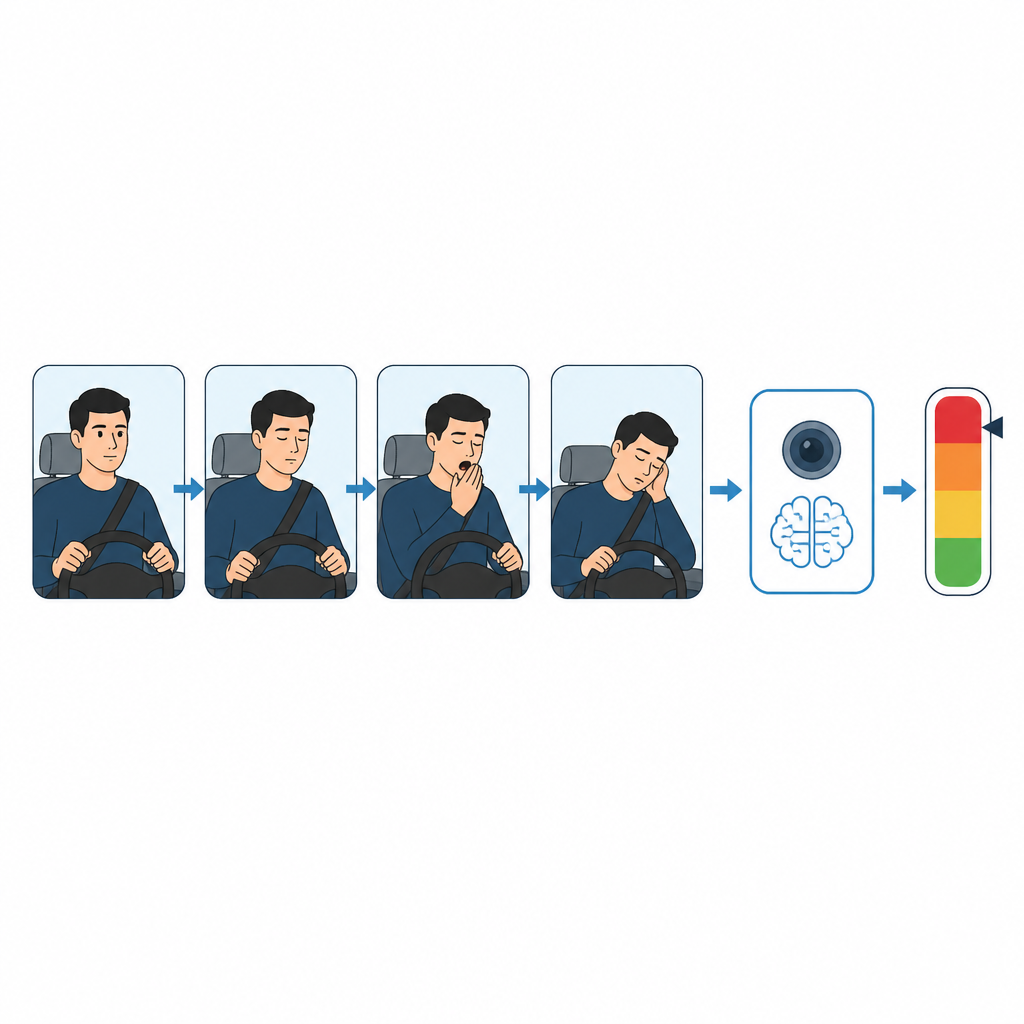

Most of us have felt our eyelids grow heavy on a long trip, but few realize how often that drowsy moment turns into a crash. This paper describes a camera-based system that watches a driver’s face, posture, and hands to spot early signs of fatigue and issue timely warnings, without attaching any sensors to the body or changing how people drive.

Watching the driver, not just the car

Traffic agencies estimate that fatigue plays a role in a large share of serious road accidents worldwide. Traditional warning systems have focused either on signals from the car, such as steering corrections or lane departures, or on medical-style sensors that track brain or heart activity. These methods can be costly, uncomfortable, or unreliable across different drivers and road conditions. The study instead relies on ordinary video from small cameras inside the car to read the driver’s behavior directly, using modern artificial intelligence to interpret what it sees.

Reading eyes, mouth, head, and hands

The researchers first break down what tired driving looks like in everyday terms. Warning signs include slower, longer blinks, repeated yawning, a drooping or tilted head, and hands slipping away from the steering wheel. Using a pose estimation method called AlphaPose, the system tracks 119 keypoints on the driver’s body, especially around the eyes, mouth, head, and wrists. From these points it calculates simple measures, such as how widely the eyes and mouth are open and how far the head has tilted or dropped relative to the neck and eyes. It also checks whether each wrist stays close to the steering wheel over time or drifts away, a hint that the driver is no longer firmly in control.
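To give a feel for what such "simple measures" might look like, here is a minimal Python sketch. It is not the authors' code: the function names, the choice of four landmarks per eye or mouth, the use of the eye line for head tilt, and the `margin` value are all illustrative assumptions. Keypoints are plain `(x, y)` pixel coordinates.

```python
import math

def dist(p, q):
    """Euclidean distance between two (x, y) points."""
    return math.hypot(p[0] - q[0], p[1] - q[1])

def opening_ratio(top, bottom, left, right):
    """Vertical opening divided by horizontal width.

    Works for an eye or a mouth: close to 0 when shut, larger when open.
    Dividing by the width makes the value less sensitive to camera distance.
    """
    width = dist(left, right)
    return dist(top, bottom) / width if width > 0 else 0.0

def head_tilt_degrees(left_eye, right_eye):
    """Angle of the line through both eyes relative to the image horizontal."""
    dx = right_eye[0] - left_eye[0]
    dy = right_eye[1] - left_eye[1]
    return math.degrees(math.atan2(dy, dx))

def wrist_near_wheel(wrist, wheel_center, wheel_radius, margin=1.3):
    """True if the wrist lies within an assumed margin of the wheel rim."""
    return dist(wrist, wheel_center) <= wheel_radius * margin

# Hypothetical keypoints for one frame
eye_open = opening_ratio(top=(5, 0), bottom=(5, 2), left=(0, 1), right=(10, 1))
tilt = head_tilt_degrees(left_eye=(0, 0), right_eye=(10, 10))
on_wheel = wrist_near_wheel(wrist=(0, 0), wheel_center=(3, 4), wheel_radius=5)
print(eye_open, tilt, on_wheel)
```

In a real system these values would be computed for every frame and passed on as a time series rather than judged one frame at a time.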

Seeing from several angles over time

Because a single snapshot can be misleading, the system looks at short video clips rather than isolated frames. For example, one normal blink could resemble sleepy eye closure, and a quick glance at the dashboard might look like nodding off if viewed alone. To avoid such mistakes, the method analyzes changes across at least 20 consecutive frames, tracking whether fatigue clues persist. It also uses three camera views collected in the Driving Monitoring Dataset: a front view of the face, a side view of the upper body, and a close view of the hands. Together these angles make the system more robust when the face is partly hidden by glasses, bright sunlight, motion blur, or head turns.
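The idea of requiring a clue to persist across a window of frames can be sketched in a few lines of Python. The 20-frame window comes from the description above; the class name `PersistenceChecker` and the 70% threshold are assumptions made only for illustration, and the actual system learns temporal patterns rather than applying a fixed rule like this one.

```python
from collections import deque

WINDOW_FRAMES = 20  # window length mentioned in the article

class PersistenceChecker:
    """Flags a cue only if it is present in most of the recent frames."""

    def __init__(self, window=WINDOW_FRAMES, min_fraction=0.7):
        # deque with maxlen drops the oldest frame automatically
        self.flags = deque(maxlen=window)
        self.min_fraction = min_fraction  # assumed threshold, not from the paper

    def update(self, cue_present):
        """Add one frame's observation; return True if the cue persists."""
        self.flags.append(bool(cue_present))
        if len(self.flags) < self.flags.maxlen:
            return False  # not enough history yet
        return sum(self.flags) / len(self.flags) >= self.min_fraction

# A normal blink: eyes closed for 3 of 20 frames, so no alarm is raised
blink = [False] * 10 + [True] * 3 + [False] * 7
checker = PersistenceChecker()
print(any(checker.update(closed) for closed in blink))

# Sustained closure: eyes closed for all 20 frames, so the cue persists
checker = PersistenceChecker()
print([checker.update(True) for _ in range(20)][-1])
```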

Smarter detection under the hood

Behind the scenes, the system has two main parts. First, a fast object detector called YOLOv11n* finds the driver in each frame so that AlphaPose can mark body keypoints accurately. The authors improve this detector with a special hybrid pooling block that helps the network understand both broad context and fine details such as eyes and fingers. Second, the positions and confidence scores of all tracked keypoints are fed into a Long Short-Term Memory (LSTM) network, a type of model well suited to sequences. The LSTM learns how combinations of eye, mouth, head, and hand changes unfold over time when a driver is alert, mildly tired, or severely fatigued, and it outputs the probability of each fatigue level for the current time window.
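For readers curious how an LSTM turns a sequence of keypoint features into fatigue probabilities, the following Python sketch runs a single, untrained LSTM layer over a 20-frame window and applies a softmax over three classes (alert, mildly tired, severely fatigued). Everything here is an assumption for illustration: the class name `TinyLSTMClassifier`, the random weights, the omission of bias terms, and the tiny layer sizes. The authors' actual network architecture and trained weights are not reproduced.

```python
import math
import random

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def matvec(W, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]

def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

class TinyLSTMClassifier:
    """Untrained single-layer LSTM followed by a linear layer and softmax."""

    def __init__(self, n_in, n_hidden, n_classes, seed=0):
        rng = random.Random(seed)
        # One weight matrix per gate (input, forget, output, candidate),
        # each acting on the concatenation [x_t, h_{t-1}]. Biases omitted.
        self.gates = {g: rand_matrix(n_hidden, n_in + n_hidden, rng) for g in "ifog"}
        self.out = rand_matrix(n_classes, n_hidden, rng)
        self.n_hidden = n_hidden

    def predict(self, sequence):
        h = [0.0] * self.n_hidden  # hidden state
        c = [0.0] * self.n_hidden  # cell state (long-term memory)
        for x in sequence:
            z = list(x) + h
            i = [sigmoid(v) for v in matvec(self.gates["i"], z)]
            f = [sigmoid(v) for v in matvec(self.gates["f"], z)]
            o = [sigmoid(v) for v in matvec(self.gates["o"], z)]
            g = [math.tanh(v) for v in matvec(self.gates["g"], z)]
            # Keep part of the old memory, add part of the new candidate
            c = [fv * cv + iv * gv for fv, cv, iv, gv in zip(f, c, i, g)]
            h = [ov * math.tanh(cv) for ov, cv in zip(o, c)]
        return softmax(matvec(self.out, h))

# 20 frames, each with 6 hypothetical features (e.g. x, y, confidence of 2 keypoints)
window = [[0.1 * t, 0.5, 0.9, 0.2, 0.4, 0.8] for t in range(20)]
model = TinyLSTMClassifier(n_in=6, n_hidden=4, n_classes=3)
probs = model.predict(window)
print(dict(zip(["alert", "mild", "severe"], probs)))
```

With random weights the three probabilities are close to uniform and carry no meaning; training on labeled clips is what would make them informative.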

How well the system works in practice

The team tested their approach on a large public dataset of in-car videos, training and evaluating the models on thousands of labeled images and clips. Compared with several leading deep learning methods, including other combinations of convolutional networks, transformers, and LSTMs, the proposed AlphaPose*–LSTM framework reached the highest reported accuracy, precision, and F1-score for classifying fatigue levels. It also ran fast enough for near real-time use: once the first 20 frames are processed, new fatigue estimates can be updated roughly every 48 milliseconds, which is suitable for continuous monitoring in moving vehicles.
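As a rough back-of-the-envelope check on those timing figures, the short Python calculation below converts the 48-millisecond update interval into updates per second and estimates how long the initial 20-frame window takes to fill. The 30 frames-per-second camera rate is an assumption, since the summary does not state it.

```python
UPDATE_INTERVAL_MS = 48   # from the article
WINDOW_FRAMES = 20        # from the article
ASSUMED_CAMERA_FPS = 30   # assumption: a typical in-car camera frame rate

updates_per_second = 1000 / UPDATE_INTERVAL_MS
warmup_seconds = WINDOW_FRAMES / ASSUMED_CAMERA_FPS

print(f"{updates_per_second:.1f} fatigue updates per second")  # 20.8
print(f"{warmup_seconds:.2f} s to collect the first window")   # 0.67
```

Under that assumption, the system would need well under a second of video before producing its first estimate, then refresh it about 20 times per second.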

What this means for everyday driving

In plain terms, the study shows that a car can use cameras and smart software to watch for telltale signs of a tired driver, combining small changes in eyes, mouth, head, and hands into a reliable picture of alertness. While the current system is tuned to daytime conditions and would need adjustment for night or other environments, the results suggest that non-intrusive, vision-based monitoring could become a practical safety feature. By recognizing fatigue early and prompting drivers to take a break, such technology could help prevent many crashes that now happen simply because someone was too tired to stay fully awake at the wheel.

Citation: Hao, Y., Sun, X., Liu, H. et al. A fatigue driving detection method based on driver posture and facial state analysis. Sci Rep 16, 15159 (2026). https://doi.org/10.1038/s41598-026-44994-4

Keywords: driver fatigue, drowsy driving, driver monitoring, computer vision, road safety