Clear Sky Science

Lightweight multiscale behavior recognition for caged laying hens using an enhanced YOLOv8 framework

Why watching hens can safeguard our food

Eggs are a staple on breakfast tables worldwide, yet the hens that lay them often live in crowded cages where early signs of illness or stress are easy to miss. This study shows how a compact artificial intelligence system can watch caged laying hens through cameras, automatically recognize key behaviors linked to health and welfare, and do so fast enough to run on simple farm hardware. The work points toward farms where quiet digital sentinels flag trouble in real time, helping keep birds healthier and egg production more reliable.

How cameras become quiet barn helpers

The researchers first built a practical monitoring platform that could survive the dust, noise, and long days of a commercial chicken house. They installed cameras on each tier of stacked cages at a large farm in Nanjing, China, connecting them to small, low-power Jetson Nano computers and battery packs. The cameras recorded hens from early morning to evening over several weeks, capturing how they moved, ate, and interacted. To make sure the images were useful, the team filtered out frames with heavy shadows, blur, or blocked views, keeping only clear snapshots of typical barn life.

Turning raw video into labeled hen behaviors

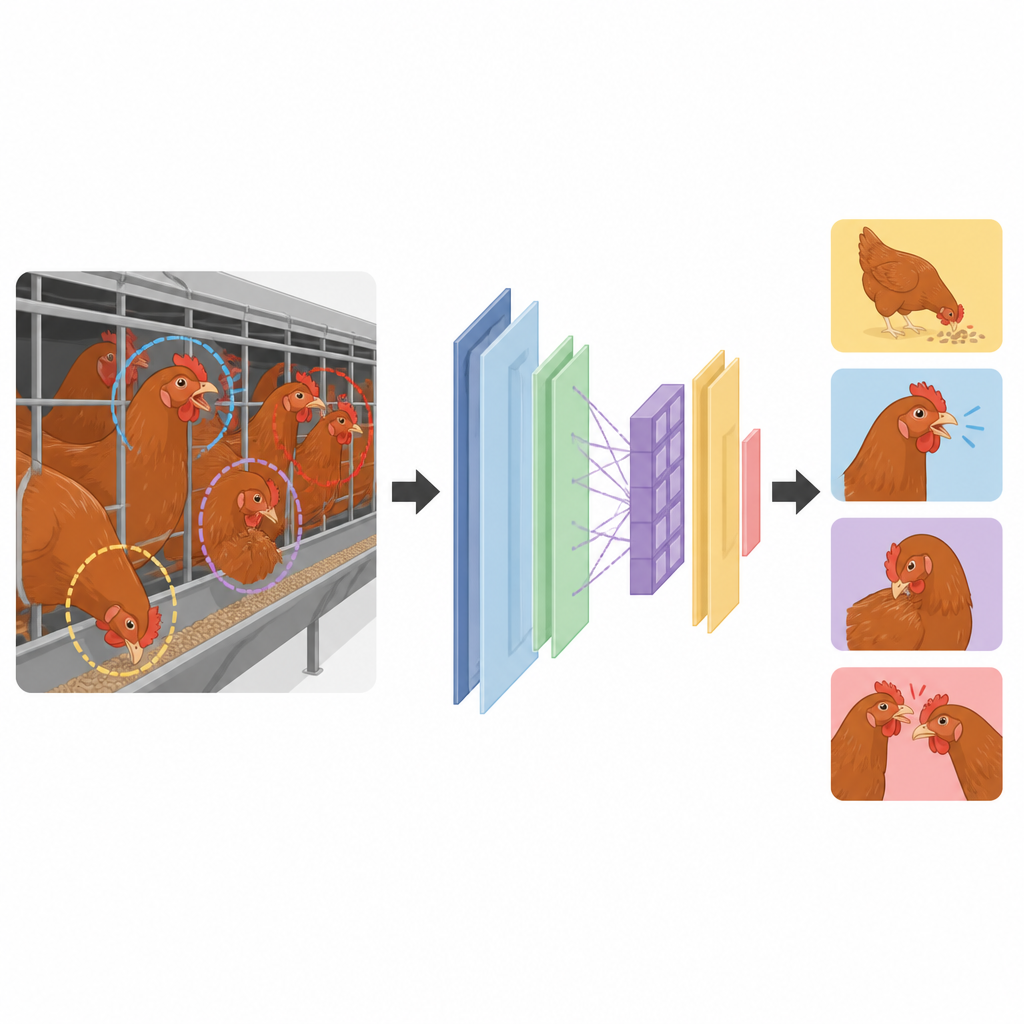

From the thousands of video frames collected, the scientists carefully labeled 2,035 images using open-source software. They focused on four head-related behaviors: normal eating, open-mouth breathing, self-pecking, and pecking another hen. The last three are considered warning signs, linked in earlier work to possible breathing problems, pain, or social stress. In each image, experts drew boxes around the hens’ heads and assigned one of the four categories. The dataset was then split into training, validation, and test sets so that the computer model could learn from one part of the data and be evaluated fairly on images it had never seen.
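For readers curious what such a split looks like in practice, here is a minimal Python sketch. The 70/20/10 proportions, the random seed, and the file names are assumptions made for illustration; this summary does not state the ratios the authors actually used.

```python
import random

def split_dataset(items, train_frac=0.7, val_frac=0.2, seed=0):
    """Shuffle items reproducibly, then cut them into train/val/test lists.

    The fractions are illustrative assumptions, not the paper's values.
    Whatever is left after train and val becomes the test set.
    """
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_train = int(len(shuffled) * train_frac)
    n_val = int(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]
    return train, val, test

# Hypothetical file names standing in for the 2,035 labeled images.
image_names = [f"hen_{i:04d}.jpg" for i in range(2035)]
train, val, test = split_dataset(image_names)
print(len(train), len(val), len(test))
```

Because the three lists never share an image, a score measured on the test list reflects how the model handles pictures it has not seen during training.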

A leaner, sharper digital observer

At the heart of the system is an improved version of a popular object detection model known as YOLOv8. The team redesigned its internal structure so it could spot small, crowded hen heads efficiently without needing a bulky computer. They swapped in lighter building blocks that cut down on repeated calculations, added an attention mechanism that helps the model focus on important regions such as beaks, and used a smart upsampling step to sharpen the outlines of tiny targets. They also introduced a new training recipe that gives extra weight to hard-to-classify examples, such as partially hidden birds, making learning more stable and less biased toward easy cases.
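The idea of giving extra weight to hard examples can be illustrated with a focal-style loss, a widely used technique. This is a stand-in chosen for illustration: the summary does not name the loss function the authors designed, and the `gamma` value and the two probabilities below are assumptions.

```python
import math

def focal_weighted_loss(p_true, gamma=2.0):
    """Cross-entropy for the correct class, scaled by (1 - p_true) ** gamma.

    p_true is the probability the model assigns to the correct label.
    Confident, correct predictions (p_true near 1) are scaled toward zero,
    so uncertain examples dominate the total loss. gamma=0 gives plain
    cross-entropy.
    """
    return -((1.0 - p_true) ** gamma) * math.log(p_true)

# Illustrative probabilities: a clearly visible head vs. a partly hidden one.
easy = focal_weighted_loss(0.95)
hard = focal_weighted_loss(0.30)

# How much more the hard example counts than the easy one,
# with and without the focal weighting.
plain_ratio = math.log(0.30) / math.log(0.95)
focal_ratio = hard / easy
print(f"plain cross-entropy ratio: {plain_ratio:.1f}")
print(f"focal-weighted ratio:      {focal_ratio:.1f}")
```

The second ratio comes out far larger than the first, which is the sense in which such a recipe steers learning toward difficult cases rather than easy ones.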

How well the system understands hens

When tested on the Lukou farm dataset, the upgraded model recognized the target behaviors with high accuracy while remaining small enough for real-time use. It improved overall detection performance compared with the standard YOLOv8 version, yet reduced model size by nearly a quarter and lowered computing needs. The system was especially strong at detecting eating, self-pecking, and mutual pecking, often exceeding 93 percent on common accuracy scores. Open-mouth breathing, a brief and subtle action that can resemble pecking, remained more challenging, showing lower precision even though the model still found many true instances. The authors note that overlapping birds, cage bars, and varying light all make this behavior harder to distinguish in single still images.
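The difference between "lower precision" and "still finding many true instances" (high recall) is easy to see with a small calculation. The counts below are invented for illustration and are not results from the paper.

```python
def precision_recall(tp, fp, fn):
    """Return (precision, recall) from true/false positive and false negative counts.

    precision: of all boxes the model labeled as the behavior, the share that were right.
    recall:    of all real instances of the behavior, the share the model found.
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall

# Invented counts for a behavior that is easy to confuse with pecking:
# 80 correct detections, 40 false alarms, 10 missed instances.
p, r = precision_recall(tp=80, fp=40, fn=10)
print(f"precision = {p:.2f}, recall = {r:.2f}")
```

In this made-up case the model finds most real instances but also raises many false alarms, which is the pattern described for open-mouth breathing.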

What this means for future smart farms

For a lay reader, the key message is that farms can begin to use relatively simple cameras and compact AI models to keep a constant eye on hen welfare, automatically counting how often birds eat, peck themselves, or peck each other. While the system is not perfect and has been tested at only one farm with one breed, it already shows that automatic behavior monitoring can work under real barn conditions and on modest hardware. With more diverse data and models that look at short video clips rather than single frames, such tools could become early warning systems that alert farmers to health problems before they spread, supporting both animal welfare and a stable supply of eggs.
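As a rough sketch of how behavior counts might be tallied from a detector's output, the snippet below sums detections per label. The label names, the `(label, confidence)` format, and the 0.5 confidence cutoff are all hypothetical; the summary does not describe the system's actual output format.

```python
from collections import Counter

# Hypothetical label names for the three warning-sign behaviors.
WARNING_BEHAVIORS = {"open_mouth_breathing", "self_pecking", "mutual_pecking"}

def count_behaviors(detections, min_confidence=0.5):
    """Count detections per label, ignoring those below the confidence cutoff."""
    counts = Counter()
    for label, confidence in detections:
        if confidence >= min_confidence:
            counts[label] += 1
    return counts

# Invented detections standing in for one camera's output over a few frames.
detections = [
    ("eating", 0.91),
    ("self_pecking", 0.77),
    ("eating", 0.42),          # below the cutoff, so not counted
    ("mutual_pecking", 0.88),
    ("eating", 0.95),
]
counts = count_behaviors(detections)
warning_total = sum(counts[b] for b in WARNING_BEHAVIORS)
print(dict(counts), "warning behaviors:", warning_total)
```

A farm system could compare such tallies over time and raise an alert when warning behaviors become unusually frequent.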

Citation: Tang, Y., Wei, J., Xie, B. et al. Lightweight multiscale behavior recognition for caged laying hens using an enhanced YOLOv8 framework. Sci Rep 16, 14936 (2026). https://doi.org/10.1038/s41598-026-43523-7

Keywords: laying hens, animal behavior, computer vision, smart farming, poultry welfare