Clear Sky Science

Advanced hybrid transformer CNN framework for improved skin lesion classification and segmentation

Why spotting skin cancer early matters

Skin cancer is one of the most common cancers worldwide, but it is also among the most treatable when caught early. Doctors increasingly rely on close-up photographs of moles and spots to decide which ones are dangerous. Yet even experts can struggle when images are low contrast, poorly lit, or obscured by hair. This study introduces a new artificial intelligence (AI) system designed to help: it not only decides what kind of skin lesion is present but also outlines the exact area of concern with high precision, even in difficult images.

A two-step helper for doctors

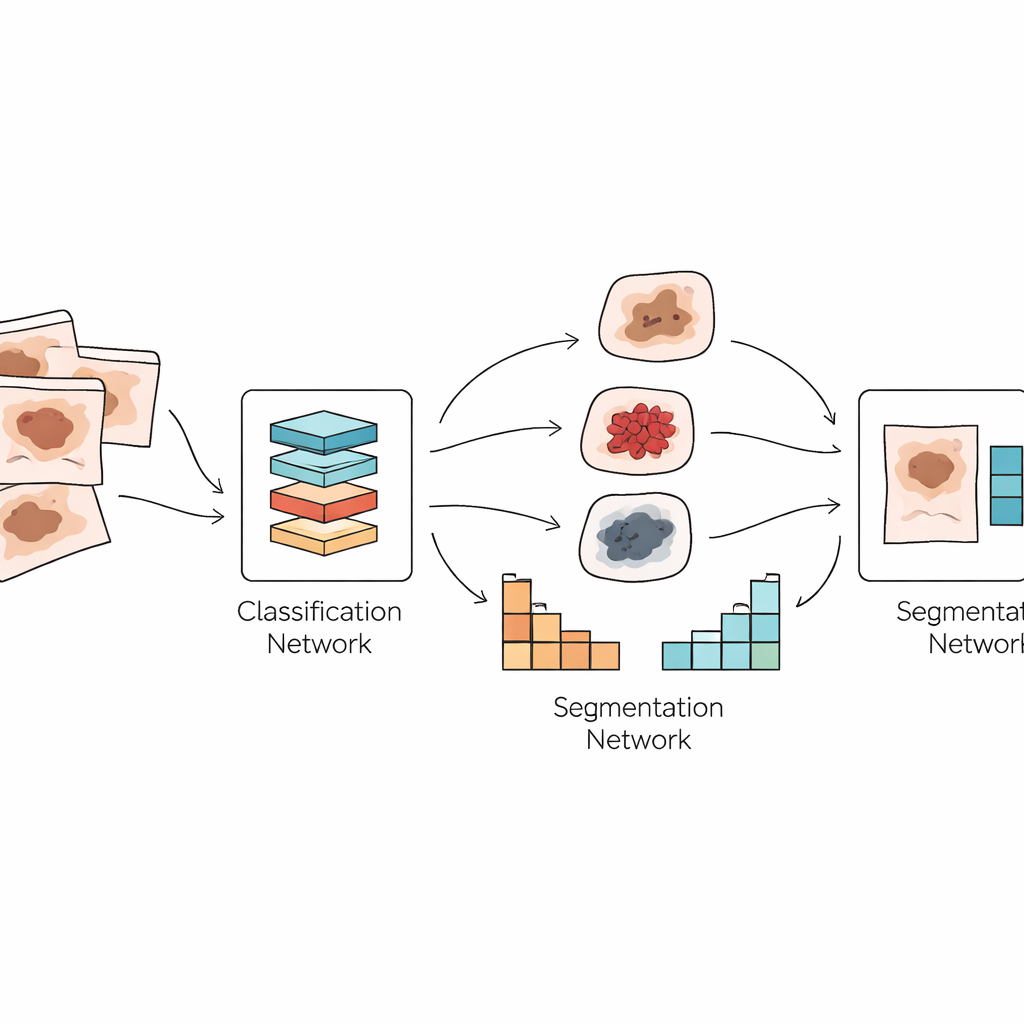

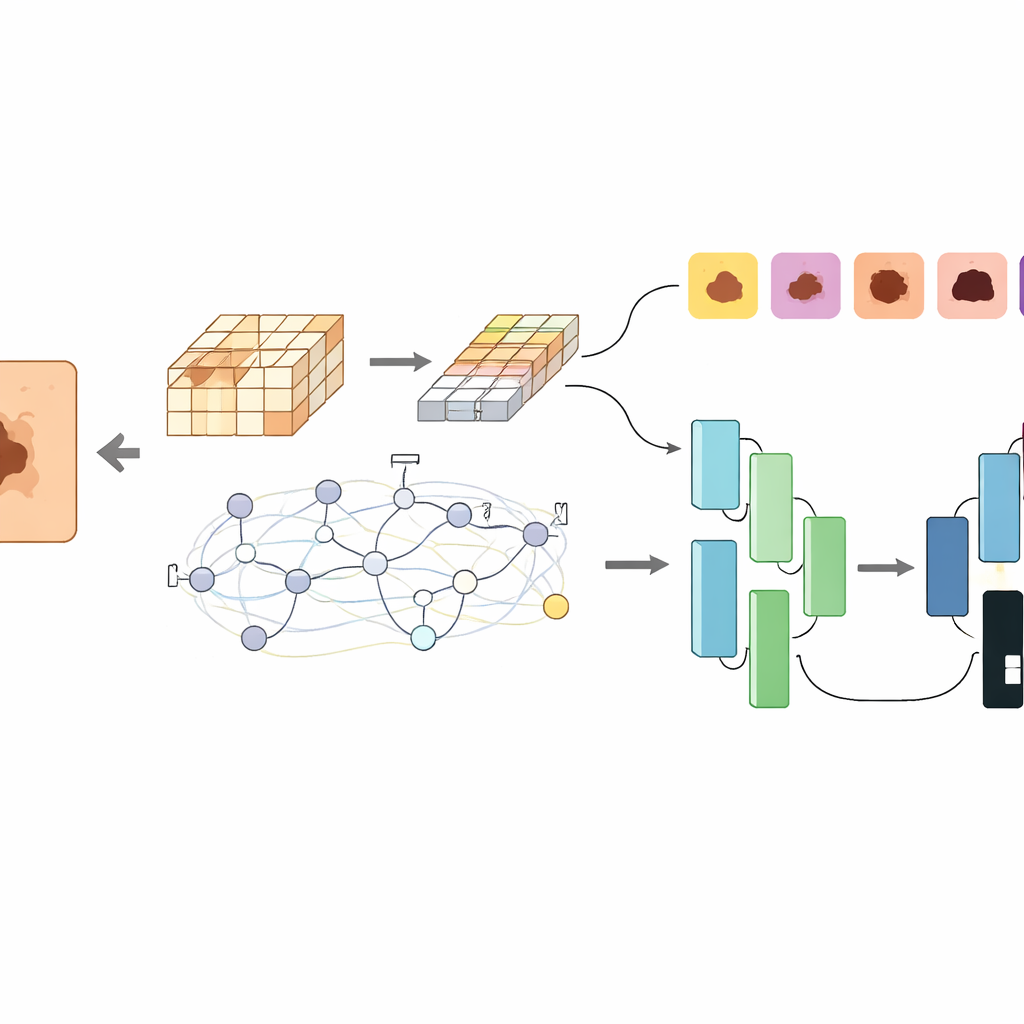

The authors propose a dual system that mirrors how a dermatologist works. First, their model, called GlobalSkinNet, looks at a magnified skin image and decides which type of lesion it is most likely to be, separating benign growths from dangerous cancers such as melanoma. Second, a companion model named SkinFormNet takes the same image and produces a detailed mask that traces the lesion’s boundary pixel by pixel. Together, these two steps provide both a “what is it?” answer and a clear “where is it?” map that could support diagnosis, treatment planning, and follow-up comparisons over time.

New ways of seeing patterns in skin images

Under the hood, both models combine two powerful but different AI techniques. Convolutional neural networks are good at spotting fine textures and edges, like irregular borders or subtle color shifts in a mole. Transformer networks, originally developed for language, excel at understanding global context, such as how different regions of the image relate to one another. GlobalSkinNet uses a “global contextual” transformer that breaks the image into overlapping patches and applies both local and wide-ranging attention, allowing it to capture tiny visual clues while keeping track of the overall lesion shape and surroundings. SkinFormNet uses a transformer-based feature extractor together with a U-shaped decoder that gradually rebuilds a sharp, clean outline of the lesion.
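Here is a minimal Python sketch using NumPy that makes overlapping patches and mixed local and global attention more concrete. It is not GlobalSkinNet. Several details are illustrative assumptions rather than the authors' design: the patch size of 7 and stride of 4, the one-step neighborhood for local attention, the identity projections, and the simple averaging that fuses the two views. The function names are also hypothetical.

```python
import numpy as np

def overlapping_patches(image, patch=7, stride=4):
    """Cut an H x W x C image into flattened, overlapping square patches.

    Because stride < patch, neighboring patches share pixels, so a lesion
    border that falls on one patch's edge still lies inside another patch.
    """
    h, w, c = image.shape
    rows = (h - patch) // stride + 1
    cols = (w - patch) // stride + 1
    tokens = np.empty((rows * cols, patch * patch * c))
    for i in range(rows):
        for j in range(cols):
            y, x = i * stride, j * stride
            tokens[i * cols + j] = image[y:y + patch, x:x + patch].ravel()
    return tokens, (rows, cols)

def local_mask(grid, radius=1):
    """Boolean mask letting each patch see only patches within `radius` grid steps."""
    rows, cols = grid
    ys, xs = np.divmod(np.arange(rows * cols), cols)
    return ((np.abs(ys[:, None] - ys[None, :]) <= radius)
            & (np.abs(xs[:, None] - xs[None, :]) <= radius))

def attention(tokens, mask=None):
    """Scaled dot-product self-attention with identity query/key/value projections.

    Real transformers learn separate projections; they are omitted here for brevity.
    mask[i, j] == False stops patch i from attending to patch j.
    """
    scores = tokens @ tokens.T / np.sqrt(tokens.shape[1])
    if mask is not None:
        scores = np.where(mask, scores, -np.inf)
    scores = scores - scores.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)
    return weights @ tokens, weights

# Toy 32 x 32 RGB "image" standing in for a dermoscopic photo.
image = np.random.default_rng(0).random((32, 32, 3))
tokens, grid = overlapping_patches(image)            # 7 x 7 grid of 147-value patches
local_out, local_w = attention(tokens, local_mask(grid))
global_out, global_w = attention(tokens)
fused = 0.5 * (local_out + global_out)               # hypothetical fusion: a plain average
```

The stride is smaller than the patch size, so a 32 × 32 image yields a 7 × 7 grid of patches. Non-overlapping 8-pixel patches would give only a 4 × 4 grid. In the local pass, a corner patch can attend only to its four-patch neighborhood, including itself. In the global pass, every patch weighs every other one.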

Tested on real-world, messy data

A key strength of this work is how widely it is tested. Many earlier AI studies rely on just one or two tidy datasets, but this system is evaluated on nearly all major public collections of dermoscopic images, including PH2, HAM10000, and the ISIC challenges from 2016 to 2020. These datasets differ in camera type, lighting, image quality, and patient populations, and include both benign and malignant lesions. Despite this variety, GlobalSkinNet reaches around 97–100% accuracy on several datasets and maintains strong sensitivity to melanoma, the deadliest form of skin cancer. SkinFormNet likewise achieves very high overlap between its predicted masks and expert-drawn outlines, often outperforming previous leading methods.
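The "overlap" between a predicted mask and an expert outline, and the sensitivity to melanoma, are standard quantities that take only a few lines to compute. The sketch below uses the Dice coefficient and the Jaccard index (intersection over union), two common segmentation scores. This summary does not say exactly which metrics the authors report. The function names, the toy masks, and the convention of scoring two empty masks as a perfect match are assumptions for illustration.

```python
import numpy as np

def dice(pred, truth):
    """Dice coefficient: 2|A∩B| / (|A| + |B|) for two binary masks."""
    pred, truth = pred.astype(bool), truth.astype(bool)
    total = pred.sum() + truth.sum()
    if total == 0:
        return 1.0  # assumed convention: two empty masks agree perfectly
    return 2.0 * np.logical_and(pred, truth).sum() / total

def iou(pred, truth):
    """Jaccard index: |A∩B| / |A∪B| for two binary masks."""
    pred, truth = pred.astype(bool), truth.astype(bool)
    union = np.logical_or(pred, truth).sum()
    if union == 0:
        return 1.0  # same assumed convention as above
    return np.logical_and(pred, truth).sum() / union

def sensitivity(y_true, y_pred, positive="melanoma"):
    """Share of true `positive` cases that the classifier also labeled `positive`."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    return tp / (tp + fn) if tp + fn else float("nan")

# Expert outline: a 4 x 4 lesion; prediction: the same square shifted one pixel right.
truth = np.zeros((8, 8), dtype=bool)
truth[2:6, 2:6] = True
pred = np.zeros((8, 8), dtype=bool)
pred[2:6, 3:7] = True
print(dice(pred, truth), iou(pred, truth))  # 0.75 0.6
# Two of the three true melanomas are caught, so sensitivity is 2/3.
print(sensitivity(["melanoma", "nevus", "melanoma", "melanoma"],
                  ["melanoma", "nevus", "nevus", "melanoma"]))
```

Shifting the square by a single pixel already drops Dice to 0.75 and IoU to 0.6. This shows how sensitive both scores are to boundary errors. For the same pair of masks, IoU is never higher than Dice.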

Looking inside the model’s decisions

Because medical AI must be trustworthy, the researchers also explore what their system is actually “looking at.” They use a visualization technique that highlights the image regions most influential for a decision. It shows that the model tends to focus on clinically meaningful areas (irregular borders, color variations, and asymmetry in the lesion) while largely ignoring background skin, hairs, and rulers. When the system fails, its errors resemble the cases that humans also find difficult, such as very blurry boundaries or lesions that fade gradually into normal skin. These analyses suggest that the model’s behavior is aligned with how dermatologists reason about risk, rather than relying on spurious cues.
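The summary does not name the visualization method. One widely used technique of this kind is Grad-CAM, which weights a convolutional layer's feature maps by how strongly the class score responds to each map. The NumPy sketch below assumes Grad-CAM purely for illustration. It uses a toy linear "classifier" whose gradients can be written down by hand, so it runs without a deep-learning framework. The feature maps, weights, and function names are hypothetical.

```python
import numpy as np

def grad_cam(activations, gradients):
    """Grad-CAM heatmap for one convolutional layer.

    activations, gradients: arrays of shape (K, H, W) holding the layer's K
    feature maps and the gradient of the chosen class score with respect to them.
    """
    alphas = gradients.mean(axis=(1, 2))             # one importance weight per channel
    cam = np.tensordot(alphas, activations, axes=1)  # weighted sum of maps -> (H, W)
    cam = np.maximum(cam, 0)                         # keep only evidence for the class
    peak = cam.max()
    return cam / peak if peak > 0 else cam           # rescale to [0, 1]

def upsample(cam, factor):
    """Nearest-neighbor upsampling so the heatmap can be laid over the image."""
    return np.kron(cam, np.ones((factor, factor)))

# Toy layer with two 4 x 4 feature maps.
acts = np.zeros((2, 4, 4))
acts[0, 1:3, 1:3] = 1.0   # channel 0 fires on the lesion center
acts[1, 0, :] = 1.0       # channel 1 fires on a hair along the top edge

# Hypothetical linear head: score = sum_k w_k * mean(A_k), so the gradient of
# the score with respect to every pixel of A_k is simply w_k / (H * W).
w = np.array([2.0, -1.0])
grads = np.broadcast_to(w[:, None, None] / 16, acts.shape)

heatmap = grad_cam(acts, grads)
overlay = upsample(heatmap, 8)   # 32 x 32, matching a 32 x 32 input image
```

In this toy case, the heatmap lights up only the lesion center. The hair channel pushes the score down, so its contribution is negative and the ReLU step clips it away. In miniature, a highlighted lesion and an ignored hair are what this kind of map would look like.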

What this means for future skin checks

In plain terms, this study presents an AI assistant that can both label a suspicious mole and neatly color in its shape, even when the picture is noisy or unevenly lit. By fusing two complementary families of neural networks, the framework offers more reliable performance than many earlier single-purpose tools. While it still needs real-world clinical testing and lighter versions for everyday devices, the work points toward a future in which dermatologists can use such systems as a second pair of eyes—helping to catch dangerous cancers earlier, reduce unnecessary biopsies, and ultimately improve patient outcomes.

Citation: Yousaf, N., Amin, J., Butt, W.H. et al. Advanced hybrid transformer CNN framework for improved skin lesion classification and segmentation. Sci Rep 16, 13592 (2026). https://doi.org/10.1038/s41598-026-43376-0

Keywords: skin cancer, dermoscopy, deep learning, medical imaging, lesion segmentation