Clear Sky Science

A dual-branch deep learning framework for emotion recognition from EEG signals

Why Reading Brainwaves Could Help Your Mood

Mental health problems such as stress, anxiety, and depression often creep up before we or those around us fully notice them. What if everyday devices could quietly track our emotional state and flag when we might need a break or support? This paper explores a new way to read patterns in brain activity: a headset records electrical signals from the scalp, and modern artificial intelligence analyzes those signals to recognize emotions quickly, accurately, and with little manual tuning.

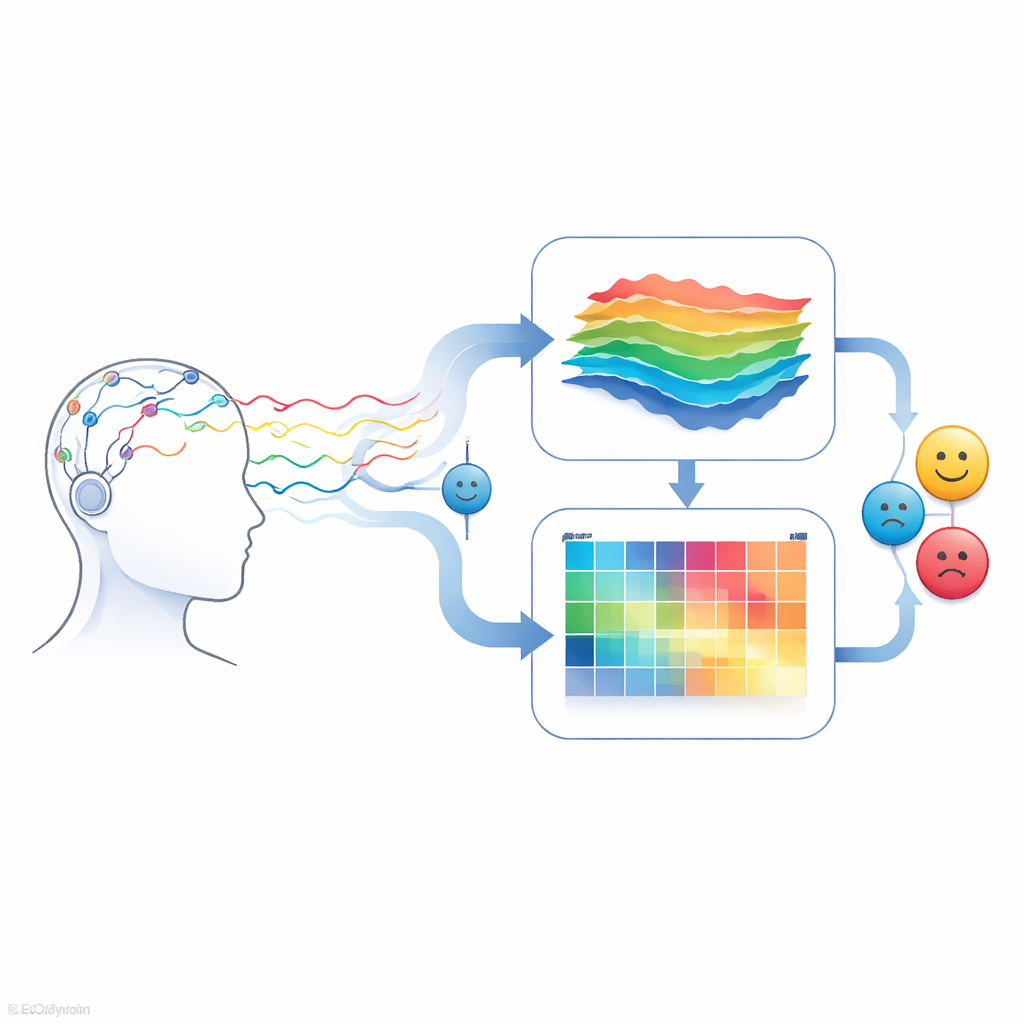

Turning Brain Signals into Emotional Clues

Our brains constantly produce tiny electrical pulses that can be measured by a technology called EEG, short for electroencephalography. These signals change subtly when we feel calm, stressed, or amused. Traditional methods for interpreting EEG data depend heavily on experts hand‑crafting mathematical features from the raw signals, a slow and often fragile process that does not translate well from one study to another. The authors aim to replace this manual work with an end‑to‑end system that learns directly from the data, so that emotion recognition can become more reliable and easier to deploy in real‑world mental health monitoring.

Two Paths for Understanding the Same Signal

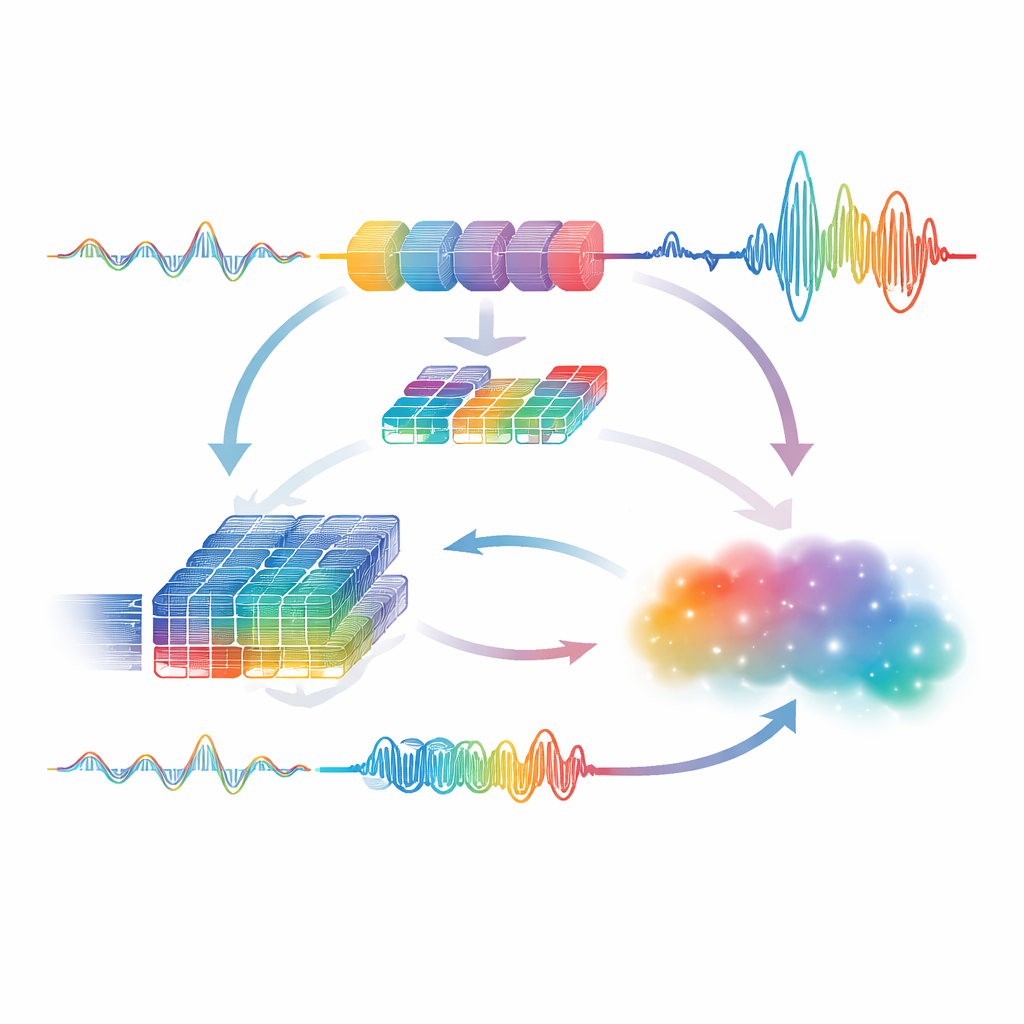

The heart of the study is a dual‑branch deep learning model that looks at the same EEG signal in two complementary ways. One branch focuses on how the signal evolves over time, feeding the raw brainwave sequences into a long short‑term memory (LSTM) network, a type of neural network that excels at tracking patterns and rhythms as they unfold. The other branch converts the same signal into a spectrogram, a map of how strongly each frequency is present from moment to moment, similar in spirit to how sound engineers visualize audio, and passes this map through a convolutional network that is good at picking out local patterns. By letting one branch specialize in timing and the other in frequency, the system captures richer information about how different emotions show up in the brain.
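For readers who want to see the two views concretely, here is a minimal sketch in plain Python of how one EEG channel could be turned into the two kinds of input described above: overlapping windows of raw samples for the temporal (LSTM) branch, and a magnitude spectrogram (a short‑time Fourier transform) for the spectral (convolutional) branch. The 128 Hz sampling rate, one‑second Hann window, half‑window hop, and all function names are illustrative assumptions, not the authors' settings, and the neural networks themselves are left out.

```python
import cmath
import math


def hann(n):
    """Hann taper of length n; softens window edges to reduce spectral leakage."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)) for i in range(n)]


def dft_magnitude(frame):
    """Magnitude of the one-sided discrete Fourier transform (bins 0..N/2)."""
    n = len(frame)
    mags = []
    for k in range(n // 2 + 1):
        acc = sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        mags.append(abs(acc))
    return mags


def temporal_view(signal, win_len, hop):
    """Raw samples cut into overlapping windows: the sequence an LSTM would read step by step."""
    return [signal[s:s + win_len] for s in range(0, len(signal) - win_len + 1, hop)]


def spectrogram(signal, win_len, hop):
    """Short-time Fourier transform magnitude: one row per time frame, one column per frequency bin."""
    window = hann(win_len)
    rows = []
    for segment in temporal_view(signal, win_len, hop):
        tapered = [x * w for x, w in zip(segment, window)]
        rows.append(dft_magnitude(tapered))
    return rows


if __name__ == "__main__":
    fs = 128  # assumed sampling rate (Hz)
    # Four seconds of a pure 10 Hz oscillation, roughly in the EEG alpha band.
    eeg = [math.sin(2 * math.pi * 10 * t / fs) for t in range(4 * fs)]
    win_len, hop = fs, fs // 2  # one-second windows with 50% overlap (assumed)
    frames = temporal_view(eeg, win_len, hop)
    spec = spectrogram(eeg, win_len, hop)
    peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
    # Bin spacing is fs / win_len, here 1 Hz per bin.
    print(len(frames), "time steps;", len(spec[0]), "frequency bins;",
          "peak at", peak_bin * fs / win_len, "Hz")
```

Both views come from the same samples, which is the point of the dual‑branch design: the temporal view keeps the exact ordering of the waveform, while the spectrogram trades some timing detail for a clear picture of which rhythms dominate. On the synthetic 10 Hz test signal, the spectrogram's strongest bin lands at 10 Hz.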

Letting the Two Views Teach Each Other

Rather than treating these two branches as separate experts that only meet at the end, the authors design them to interact in both directions. The frequency‑based features are transformed back into a signal‑like form and sent through an extra temporal network, encouraging the model to check whether spectral patterns also make sense over time. Likewise, the summary produced by the temporal branch is turned into a synthetic signal, converted into a frequency map, and examined again by the spectral branch. This back‑and‑forth exchange helps the model agree on which aspects of the signal truly matter for distinguishing emotional states. Finally, the model fuses these perspectives using simple mathematical operations that highlight where both branches strongly concur before handing the result to a compact classifier that outputs an emotion category.
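The summary does not spell out which "simple mathematical operations" the fusion step uses. As a hedged illustration only, the sketch below assumes three common ways to combine two equal‑length feature vectors: concatenation (keeps both views), an elementwise product (large only where both branches respond strongly, one way to highlight agreement), and an absolute difference (flags disagreement). A single linear layer with softmax stands in for the compact classifier. The feature values, weights, and function names are made up for illustration; in a trained model the weights would be learned from data.

```python
import math


def fuse(temporal_feat, spectral_feat):
    """Combine two equal-length branch outputs (assumed operations, not the paper's exact ones).

    Returns [temporal, spectral, elementwise product, absolute difference] concatenated.
    """
    if len(temporal_feat) != len(spectral_feat):
        raise ValueError("branch features must have the same length")
    product = [a * b for a, b in zip(temporal_feat, spectral_feat)]
    difference = [abs(a - b) for a, b in zip(temporal_feat, spectral_feat)]
    return temporal_feat + spectral_feat + product + difference


def softmax(logits):
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(fused, weights, biases, labels):
    """Compact linear classifier: one weight row and one bias per emotion class."""
    logits = [sum(w * x for w, x in zip(row, fused)) + b for row, b in zip(weights, biases)]
    probs = softmax(logits)
    best = max(range(len(labels)), key=lambda i: probs[i])
    return labels[best], probs


if __name__ == "__main__":
    temporal = [0.9, 0.1]  # toy output of the temporal (LSTM) branch
    spectral = [0.8, 0.2]  # toy output of the spectral (convolutional) branch
    fused = fuse(temporal, spectral)
    labels = ["negative", "neutral", "positive"]
    # Hand-picked toy weights: row 0 reads the difference terms,
    # row 1 is a constant baseline, row 2 reads the product terms.
    weights = [
        [0, 0, 0, 0, 0, 0, 1, 1],
        [0, 0, 0, 0, 0, 0, 0, 0],
        [0, 0, 0, 0, 1, 1, 0, 0],
    ]
    biases = [0.0, 0.1, 0.0]
    label, probs = classify(fused, weights, biases, labels)
    print(label, [round(p, 3) for p in probs])
```

With these toy numbers the third class wins because its weights read the product terms, which are largest where the two branches agree; the labels simply mirror the positive, neutral, and negative categories of the movie‑clip dataset and carry no learned meaning here.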

Testing Across Different Real‑World Settings

To see whether their approach holds up beyond a single carefully controlled experiment, the authors test it on three public datasets that capture emotions and stress in very different situations. The first uses a consumer‑grade headband to record positive, neutral, and negative feelings while people watch movie clips. The second follows volunteers wearing chest and wrist sensors through stressful interviews, calm baselines, and amusing videos. The third observes office workers dealing with time pressure and interruptions over long sessions. Across these varied scenarios, devices, and participants, the dual‑branch model achieves strikingly high accuracy (above 96 percent on the brainwave dataset and essentially perfect scores on the other two) while remaining efficient enough for near real‑time use.

What This Means for Everyday Life

In plain terms, the study shows that combining two simple but complementary views of brain activity, and letting them continually refine each other, can yield an emotion recognition system that is both powerful and practical. Instead of relying on fragile hand‑built features, the framework learns directly from data and stays robust when faced with new people and recording conditions. While more work is needed to adapt it across datasets, shrink it for wearables, and incorporate additional body signals, this dual‑branch approach points toward future tools that could quietly track our emotional well‑being, offering early warnings and personalized support long before a crisis hits.

Citation: Saha, D., Ali, A., Gulvanskii, V. et al. A dual-branch deep learning framework for emotion recognition from EEG signals. Sci Rep 16, 13076 (2026). https://doi.org/10.1038/s41598-026-42998-8

Keywords: EEG emotion recognition, deep learning, mental health monitoring, brain–computer interfaces, stress detection