Clear Sky Science · en

Deep learning for high-resolution material texture enhancement in 3D environments

Why Sharper Surfaces Matter

Whether you are exploring a fantasy world in a video game or walking through a virtual museum in VR, much of what feels “real” comes down to the tiny details on surfaces: the grain in wood, the pits in stone, and the shine of metal. Creating those details at very high resolution is expensive and technically tricky, so many virtual worlds rely on blurry or repeating textures that break the illusion. This paper presents a deep-learning method that automatically sharpens and enriches these material textures, making digital scenes look more lifelike without requiring artists to rebuild everything from scratch.

From Blurry Tiles to Lifelike Materials

In modern 3D graphics, an object’s appearance is controlled by several image-like layers called texture maps. One map defines color, but others control how bumpy, shiny, or metallic a surface appears. Existing upscaling tools mostly focus on color alone and often struggle with very large images, leading to visible seams, repeated patterns, or lost detail. The authors propose a system that improves all key material layers at once—color, height (displacement), metalness, surface direction (normals), and roughness—so the final result not only looks sharper but also behaves more realistically under changing lights and camera angles.

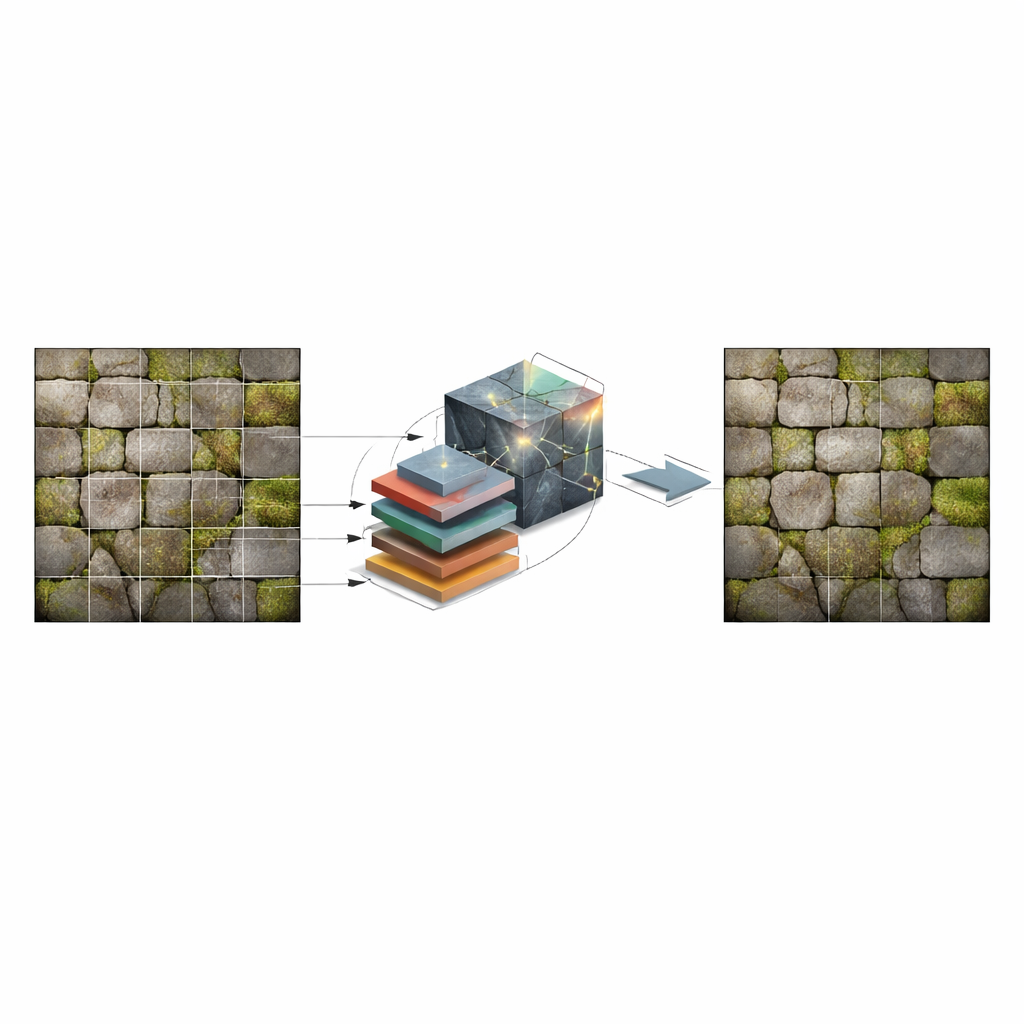

Breaking the Problem into Pieces

A central challenge is that film- and game-quality textures can be huge, easily overwhelming the memory of typical graphics hardware. The new framework tackles this by dividing each large texture into smaller tiles that can be processed independently and then stitched back together without visible borders. Textures are first decompressed and split into tiles, and each tile is then fed through a deep network adapted from ESRGAN, a model that is especially good at inventing plausible fine detail. After enhancement, the tiles are recombined and recompressed, yielding high-resolution textures that fit smoothly into existing production pipelines.
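The tile-and-stitch idea can be illustrated with a minimal Python sketch. It is not the authors' implementation: the tile size, overlap, scale factor, feathered blending window, and the `placeholder_enhance` function (a nearest-neighbour stand-in for the network) are all assumptions chosen for illustration.

```python
import numpy as np

def tile_starts(size, tile, stride):
    """Start offsets so that tiles of length `tile` cover the range [0, size)."""
    if size <= tile:
        return [0]
    starts = list(range(0, size - tile + 1, stride))
    if starts[-1] != size - tile:
        starts.append(size - tile)  # last tile sits flush with the border
    return starts

def placeholder_enhance(patch, scale):
    """Hypothetical stand-in for the network: nearest-neighbour upscaling."""
    return patch.repeat(scale, axis=0).repeat(scale, axis=1)

def feather(length):
    """1D weights that grow from 1 at the tile edge towards the tile centre."""
    ramp = np.arange(length) + 1
    return np.minimum(ramp, ramp[::-1])

def upscale_tiled(texture, enhance=placeholder_enhance,
                  tile=64, overlap=16, scale=4):
    """Upscale an (H, W, C) texture tile by tile and blend the overlaps."""
    h, w, c = texture.shape
    out = np.zeros((h * scale, w * scale, c), dtype=np.float64)
    weight = np.zeros((h * scale, w * scale, 1), dtype=np.float64)
    stride = tile - overlap
    for y in tile_starts(h, tile, stride):
        for x in tile_starts(w, tile, stride):
            up = enhance(texture[y:y + tile, x:x + tile], scale)
            ph, pw = up.shape[:2]
            # Lower weight near tile edges, so neighbouring tiles fade
            # into each other instead of meeting at a hard border.
            win = np.minimum.outer(feather(ph), feather(pw))[..., None]
            ys, xs = y * scale, x * scale
            out[ys:ys + ph, xs:xs + pw] += up * win
            weight[ys:ys + ph, xs:xs + pw] += win
    return out / weight  # every pixel is covered, so weight is never zero

rng = np.random.default_rng(0)
demo_texture = rng.random((100, 150, 3))
demo_result = upscale_tiled(demo_texture)
```

Because only one tile passes through the network at a time, peak memory depends on the tile size rather than on the full texture. The overlap and weighted average are one common way to hide borders; the paper may handle seams differently.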

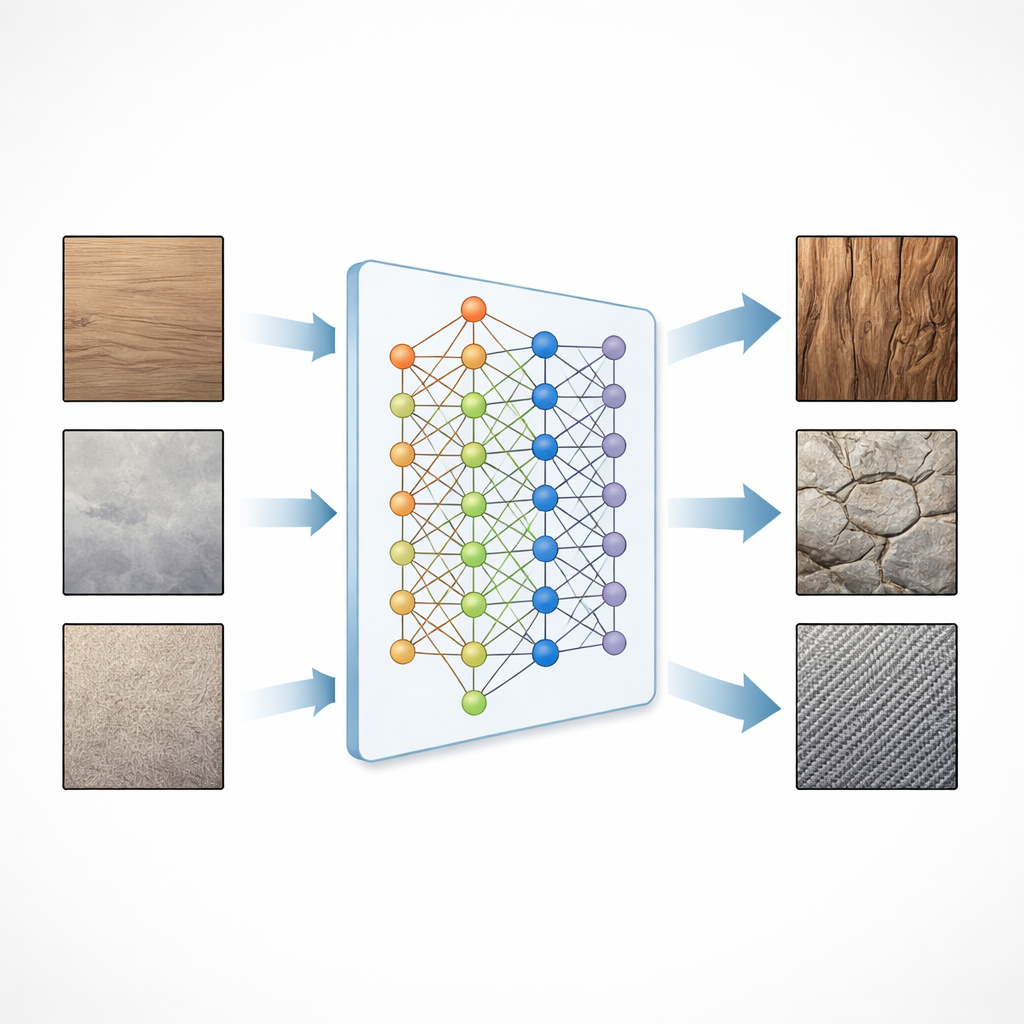

Teaching the Network What Real Surfaces Look Like

To train the system, the authors curated a specialized database of 300 open-source material sets commonly used in games: wood, stone, tiles, metal, fabric, water, lava, asphalt, electronics, and more. Each set includes multiple coordinated maps, giving the network a rich picture of how color, height, shine, and roughness interact on real surfaces. Learning is guided by several complementary goals: matching the original pixels, preserving structures recognized by a separate "perception" network, and competing against a discriminator network that learns to tell real textures from generated ones. Together, these forces push the model to create results that both match measurements and look convincing to the human eye.
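How the three training goals might combine into a single number can be sketched as a weighted sum. This is a simplified illustration under stated assumptions: the weights are hypothetical, `gradient_features` is a toy stand-in for the separate perception network, and the adversarial term is a plain non-saturating GAN loss rather than whatever variant the authors actually use.

```python
import numpy as np

def pixel_loss(pred, target):
    """Mean absolute difference between generated and original pixels."""
    return float(np.mean(np.abs(pred - target)))

def gradient_features(img):
    """Toy stand-in for a perception network: differences between neighbours."""
    return np.concatenate([np.diff(img, axis=0).ravel(),
                           np.diff(img, axis=1).ravel()])

def perceptual_loss(pred, target, features=gradient_features):
    """Mean squared distance between feature representations."""
    return float(np.mean((features(pred) - features(target)) ** 2))

def adversarial_loss(disc_prob_real, eps=1e-8):
    """Generator-side term: small when the discriminator is fooled.

    `disc_prob_real` is the discriminator's estimated probability that
    the generated texture is real.
    """
    return float(-np.mean(np.log(np.asarray(disc_prob_real) + eps)))

def generator_loss(pred, target, disc_prob_real,
                   w_pixel=1.0, w_percep=1.0, w_adv=0.005):
    """Weighted sum of the three goals; the weights are assumptions."""
    return (w_pixel * pixel_loss(pred, target)
            + w_percep * perceptual_loss(pred, target)
            + w_adv * adversarial_loss(disc_prob_real))

rng = np.random.default_rng(1)
target = rng.random((32, 32, 3))
noisy = np.clip(target + 0.1 * rng.standard_normal(target.shape), 0.0, 1.0)
loss_perfect = generator_loss(target, target, disc_prob_real=1.0)
loss_noisy = generator_loss(noisy, target, disc_prob_real=0.3)
```

In this toy setup, a perfect reconstruction that fully fools the discriminator scores close to zero, while a noisy output that the discriminator distrusts scores higher.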

How Well It Works in Practice

The researchers evaluated their method against classic image resizing and popular deep-learning upscalers, including ESRGAN and Real-ESRGAN, using standard test textures that were not seen during training. They measured small color differences between the outputs and the ground-truth textures and also used NVIDIA’s FLIP metric, which estimates how noticeable differences would be to a viewer. Across a range of materials and maps, their system consistently produced lower errors and higher perceived quality, with clearer patterns and fewer artifacts than competing approaches. Although the method is somewhat slower than simpler techniques, it remains practical for both offline rendering and many real-time workflows.
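A simple color-difference comparison against a ground-truth texture could look like the sketch below. The function names are hypothetical and the exact error measure used in the paper is not specified here, so this shows only a generic mean absolute error and a per-pixel error map; it does not implement FLIP.

```python
import numpy as np

def mean_abs_color_error(output, reference):
    """Average absolute per-channel difference between two textures."""
    if output.shape != reference.shape:
        raise ValueError("textures must have the same shape")
    diff = output.astype(np.float64) - reference.astype(np.float64)
    return float(np.mean(np.abs(diff)))

def color_error_map(output, reference):
    """Per-pixel Euclidean distance in color space, shape (H, W)."""
    diff = output.astype(np.float64) - reference.astype(np.float64)
    return np.sqrt(np.sum(diff ** 2, axis=-1))

# Toy data: a mid-grey reference and an all-black "output".
reference = np.full((4, 4, 3), 0.5)
output = np.zeros((4, 4, 3))
score = mean_abs_color_error(output, reference)
per_pixel = color_error_map(output, reference)
```

Lower scores mean the upscaled texture is closer to the reference. The error map shows where the differences are concentrated, which is the kind of information a perceptual metric such as FLIP weights by visibility.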

Limits Today and Paths Forward

The authors note that their model works best on materials similar to those in the training set; very unusual textures can still show minor glitches, and extreme upscaling beyond the target factor yields diminishing gains. The tile-based strategy may also introduce faint seams in rare edge cases, suggesting that further refinement or post-processing could help. Future work could add specialized fine-tuning for new material classes, smarter seam handling, and extra refinement stages aimed at restoring ultra-fine structure for extreme zoom levels.

Sharper Worlds for Players and Creators

In everyday terms, this research offers a smart "magnifying glass" for digital materials. Given existing game or film textures, the system can automatically boost resolution and detail across all the layers that govern how a surface looks and feels, leading to richer, more immersive scenes without ballooning storage or manual labor. As such techniques mature and spread, players and viewers can expect virtual worlds whose walls, floors, fabrics, and landscapes look ever closer to reality—no matter how close they get to the screen.

Citation: Alonso, K., Patow, G. Deep learning for high-resolution material texture enhancement in 3D environments. Sci Rep 16, 12532 (2026). https://doi.org/10.1038/s41598-026-42313-5

Keywords: texture upscaling, 3D graphics, deep learning, game art, virtual reality