Clear Sky Science

Application of smooth OWA operators to classification of retinitis pigmentosa

Why this matters for eyesight

Slowly losing vision from an inherited eye disease is a frightening prospect, and diagnosing these conditions early is crucial for preserving sight. This study explores how modern artificial intelligence can help doctors distinguish between several rare but serious retinal disorders, including retinitis pigmentosa, using detailed photographs of the back of the eye. By finding a smarter way to combine the judgments of many different computer models, the researchers show that computers can spot these diseases far more reliably than any single model can, even when only a small number of patient images are available.

Seeing disease in eye photos

Retinitis pigmentosa is a group of genetic conditions that slowly destroy the light-sensing cells in the retina. People often first notice trouble seeing at night, then lose side vision, and some eventually go blind. Related disorders, such as cone-rod dystrophy and Usher syndrome, can look similar in eye images and may share subtle visual patterns. In this study, specialists at a medical center in Lublin, Poland, collected ultra-widefield images of the retina from 186 patients with these conditions, along with images from healthy eyes obtained from a separate database. The result was a five-way classification task: two genetic subtypes of retinitis pigmentosa, cone-rod dystrophy, Usher syndrome, and healthy controls. The dataset was small and unbalanced, reflecting how rare these diseases are in practice: some categories had many more examples than others, which made the task especially challenging.

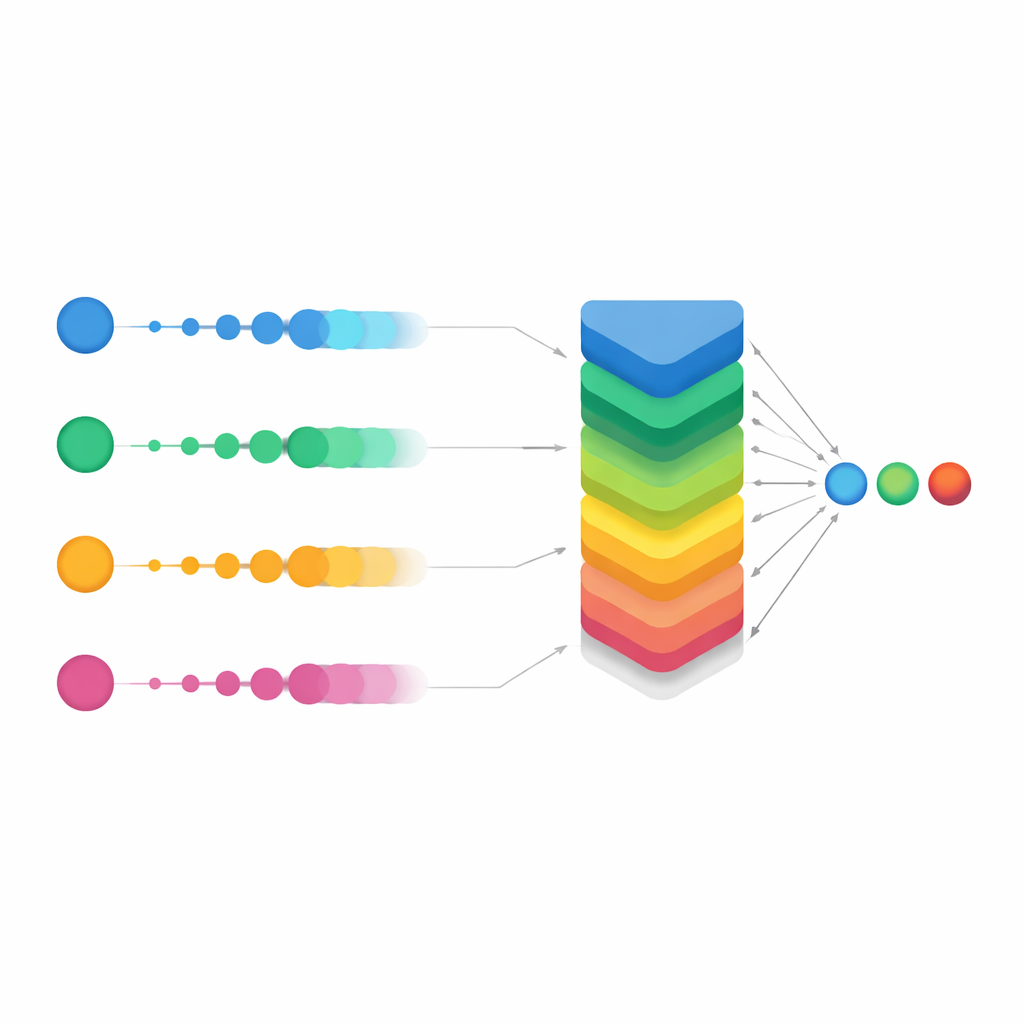

Teaching machines to read the retina

To interpret these images, the authors turned to powerful image-recognition systems originally developed for everyday photos: convolutional neural networks and newer transformer-based models. They tested several architectures, including EfficientNet, ResNet, VGG, Inception, and two versions of the Vision Transformer. Each network was first trained on a large general-purpose image database and then fine-tuned on the retinal images, a strategy known as transfer learning. Standard data augmentation, such as flipping, rotating, and slightly distorting the images, helped the models cope with differences in imaging devices and patient anatomy. Even with these advanced techniques, the best single network correctly classified only about two-thirds of the test cases, and performance was noticeably worse on the rarest disease categories.
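The flipping and rotating step can be pictured with a toy sketch. The code below is only an illustration, not the authors' pipeline: it treats an image as a small grid of numbers, uses quarter-turn rotations instead of the small-angle rotations and distortions a real system would apply, and all function names (`hflip`, `rot90`, `augment`) are hypothetical.

```python
import random
from typing import List

Image = List[List[int]]  # toy stand-in for a grayscale image (rows of pixels)

def hflip(img: Image) -> Image:
    """Mirror the image left to right."""
    return [row[::-1] for row in img]

def rot90(img: Image) -> Image:
    """Rotate the image a quarter turn clockwise."""
    return [list(col) for col in zip(*img[::-1])]

def augment(img: Image, rng: random.Random) -> Image:
    """Return a randomly flipped and rotated copy; the input is left unchanged."""
    out = [list(row) for row in img]
    if rng.random() < 0.5:            # flip about half of the time
        out = hflip(out)
    for _ in range(rng.randrange(4)):  # 0 to 3 quarter turns
        out = rot90(out)
    return out

# Each call yields another plausible view of the same picture,
# so a small dataset can be shown to the network in many variations.
example = [[1, 2],
           [3, 4]]
variant = augment(example, random.Random(0))
```

Because every variant contains exactly the same pixels, only rearranged, the disease label attached to the original image still applies to each augmented copy.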

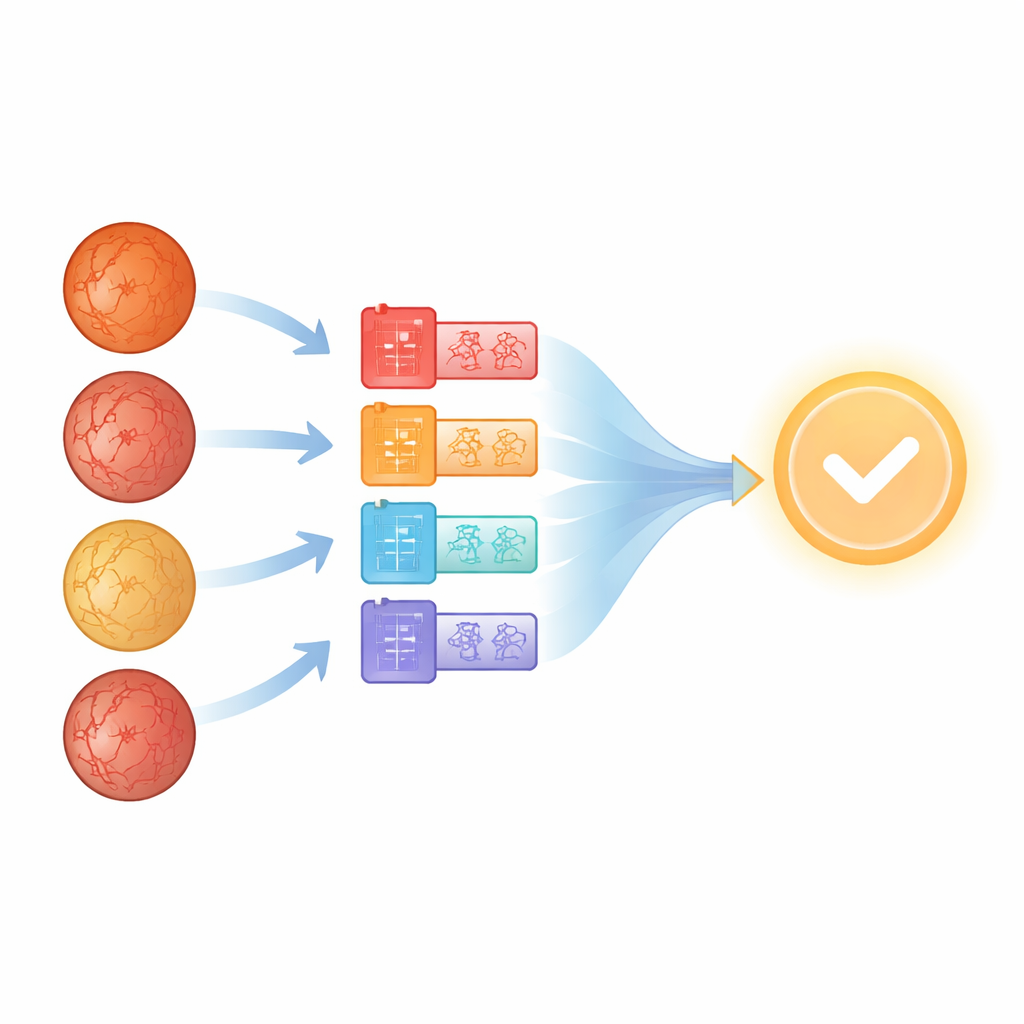

Letting many models vote together

Rather than trusting any single network, the researchers next asked: what if we treated each model as one expert on a panel and combined their opinions? This idea, called aggregation or ensemble learning, is common in machine learning but is often implemented with simple voting or averaging. Here, the team used a more flexible family of tools known as Ordered Weighted Averaging (OWA) operators. For each possible diagnosis, OWA takes the probabilities produced by all the networks, sorts them from highest to lowest, and then blends them with a carefully chosen set of weights. The weights belong to positions in the sorted list rather than to particular models, so placing the larger weights at the top gives more influence to whichever models are most confident about that diagnosis, while still considering the rest. This aggregation alone produced a dramatic jump in overall accuracy, from roughly 68% for the best individual model to over 93% when the networks’ outputs were combined with a basic OWA scheme.
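The sort-then-blend recipe is short enough to write out. The sketch below follows the standard definition of an OWA operator; the three "models", their probabilities, and the weight vector are made-up numbers for illustration and are not taken from the study.

```python
from typing import List, Sequence

def owa(values: Sequence[float], weights: Sequence[float]) -> float:
    """Ordered Weighted Average: weights apply to rank positions, not to sources."""
    if len(values) != len(weights):
        raise ValueError("values and weights must have the same length")
    if min(weights) < 0 or abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must be non-negative and sum to 1")
    ordered = sorted(values, reverse=True)  # highest probability first
    return sum(w * v for w, v in zip(weights, ordered))

def owa_ensemble(probs: Sequence[Sequence[float]],
                 weights: Sequence[float]) -> List[float]:
    """probs[m][c] is model m's probability for class c; returns one score per class."""
    n_classes = len(probs[0])
    return [owa([p[c] for p in probs], weights) for c in range(n_classes)]

# Hypothetical outputs of three models over the five classes.
probs = [
    [0.50, 0.20, 0.10, 0.10, 0.10],
    [0.30, 0.40, 0.10, 0.10, 0.10],
    [0.60, 0.10, 0.10, 0.10, 0.10],
]
weights = [0.5, 0.3, 0.2]  # hypothetical: the top-ranked value counts most

scores = owa_ensemble(probs, weights)
predicted = max(range(len(scores)), key=scores.__getitem__)  # class with top score
```

Two familiar rules fall out as special cases: equal weights reproduce plain averaging, and putting all the weight on the first position simply takes the most confident model's value. The per-class scores need not sum to 1, since each class is sorted separately, so the final diagnosis is read off as the class with the highest score.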

Smoothing the vote for tough cases

The study’s main innovation was a refined version called Smooth OWA. Instead of treating each model’s probability in isolation, Smooth OWA gently "smooths" each value using its neighbors before applying the weighted blend. The smoothing rules are borrowed from classical numerical integration formulas, known as Newton–Cotes quadratures, which are normally used for precise area calculations. Translated into this setting, they help even out unstable or borderline predictions across models. The extra gain over standard OWA was modest, on the order of half a percentage point in accuracy, but it was consistent across repeated tests and pushed performance to about 94%. Crucially, the confusion matrices showed that, compared with the best single model, misclassifications dropped sharply across all five classes, especially for the rarest diseases. In some groups, correct recognition rates climbed from around two-thirds with the best single model to well above 90% with Smooth OWA.
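To make the smoothing step concrete, here is one plausible reading of it, offered as a sketch rather than the paper's exact formula. It assumes that "neighbors" means adjacent values in the sorted list, uses a three-point kernel with weights (1, 4, 1)/6 that mirrors the coefficients of Simpson's rule (one of the Newton–Cotes formulas), and repeats the end values at the edges. The function names, the edge handling, and all numbers are assumptions made for illustration.

```python
from typing import List, Sequence

SIMPSON_KERNEL = (1 / 6, 4 / 6, 1 / 6)  # assumed kernel, coefficients sum to 1

def smooth_sorted(ordered: Sequence[float],
                  kernel: Sequence[float] = SIMPSON_KERNEL) -> List[float]:
    """Blend each sorted value with its neighbors (end values repeated at the edges)."""
    n = len(ordered)
    half = len(kernel) // 2
    smoothed = []
    for i in range(n):
        acc = 0.0
        for k, coeff in enumerate(kernel):
            j = min(max(i + k - half, 0), n - 1)  # clamp index to the valid range
            acc += coeff * ordered[j]
        smoothed.append(acc)
    return smoothed

def smooth_owa(values: Sequence[float], weights: Sequence[float],
               kernel: Sequence[float] = SIMPSON_KERNEL) -> float:
    """Sort, smooth the sorted values, then apply the usual OWA weighted sum."""
    if len(values) != len(weights):
        raise ValueError("values and weights must have the same length")
    ordered = sorted(values, reverse=True)
    return sum(w * v for w, v in zip(weights, smooth_sorted(ordered, kernel)))

# Hypothetical probabilities from three models for one diagnosis.
values = [0.6, 0.5, 0.3]
weights = [0.5, 0.3, 0.2]  # hypothetical OWA weights
result = smooth_owa(values, weights)
```

With these numbers the plain OWA score would be 0.51, while the smoothed version comes out slightly lower, at about 0.503: the top value is pulled a little toward its neighbor and the bottom value is pulled up. That small nudge is consistent with the modest but steady improvement described above.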

What this means for patients and doctors

For a non-specialist, the key message is that no single artificial intelligence model has to be perfect to be clinically useful. By carefully combining several good but imperfect models and gently stabilizing their outputs, the researchers turned a set of "pretty good" tools into one very strong decision aid. Their Smooth OWA approach handled a small, imbalanced dataset and still achieved performance levels that would be hard to reach otherwise. Although the work is still at a research stage and needs to be validated in larger, multi-center studies before guiding real patient care, it suggests a clear path forward: future diagnostic systems for rare eye diseases may rely not on a single algorithm, but on a coordinated "council" of algorithms whose collective judgment is both more accurate and more dependable.

Citation: Rachwał, A., Rachwał, A., Powroźnik, P. et al. Application of smooth OWA operators to classification of retinitis pigmentosa. Sci Rep 16, 11995 (2026). https://doi.org/10.1038/s41598-026-41840-5

Keywords: retinitis pigmentosa, deep learning, retinal imaging, ensemble methods, medical diagnosis AI