Clear Sky Science · en

A multi-task deep learning and radiomics framework for fetal anatomical structure detection and classification in ultrasound imaging

Why Early Baby Brain Scans Matter

Expectant parents and clinicians alike want clear, early answers about a baby’s health. Ultrasound scans in the first three months of pregnancy already offer a window into the developing brain and face, where subtle changes can hint at future problems. But reading these grainy images is hard and depends heavily on the skill of the person holding the probe. This study explores how modern artificial intelligence can act as an extra pair of expert eyes, automatically spotting and classifying tiny structures in the fetus’s head to support earlier, more consistent screening.

Seeing More Than the Human Eye

The researchers focused on nine key brain and facial features that doctors routinely check between 11 and 14 weeks of pregnancy, including parts of the brain, the fluid spaces around it, and facial markers such as the nasal bone and palate. Changes in these features can signal genetic or structural conditions. Even experienced specialists can disagree about what they see on ultrasound, and image quality varies from clinic to clinic. To tackle this, the team gathered a large, diverse set of 4,532 ultrasound exams from nine medical centers, capturing a wide range of equipment, image styles, and fetal positions. This diversity allowed them to build and test an automated system that is less tied to any single hospital or machine.

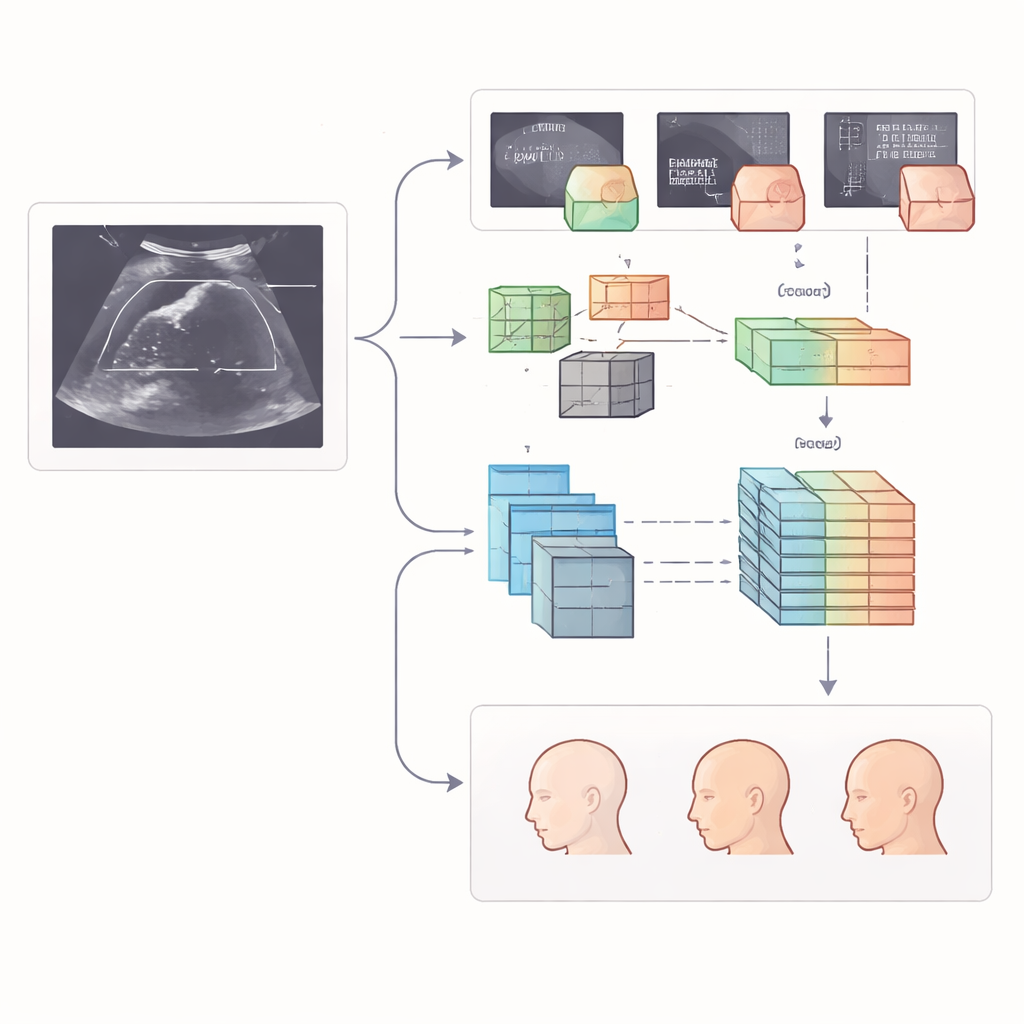

How the Smart Pipeline Works

The heart of the study is a step-by-step digital pipeline that mimics, and then extends, a human examiner’s workflow. First, two advanced image-analysis models scan each ultrasound frame and draw boxes around the nine target structures, such as the midbrain or the nasal bone. One model is a fast detector designed to quickly pick out small objects, while the other relies on a transformer-style architecture that looks at the image in overlapping windows to capture both local detail and the broader context. Once the regions are located, the system studies the contents of each box in two complementary ways: it calculates hundreds of handcrafted “radiomic” measurements that describe texture, brightness, and shape, and it lets a deep neural network learn its own patterns directly from the pixels.
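
To make the two-track analysis of each box more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the authors' code: the function names `crop`, `first_order_features`, and `fuse` are hypothetical, the three statistics stand in for the hundreds of radiomic measurements the study computes, and the deep-learned vector is simply passed in as a list of numbers rather than produced by a real network.

```python
from math import log2
from statistics import mean, pstdev


def crop(image, box):
    """Return the pixels inside box = (x_min, y_min, x_max, y_max), end-exclusive."""
    x0, y0, x1, y1 = box
    return [row[x0:x1] for row in image[y0:y1]]


def first_order_features(patch):
    """Toy stand-in for radiomics: brightness, contrast, and texture of one patch."""
    pixels = [p for row in patch for p in row]
    n = len(pixels)
    counts = {}
    for p in pixels:
        counts[p] = counts.get(p, 0) + 1
    # Shannon entropy of the grey-level histogram (higher = more varied texture)
    entropy = -sum((c / n) * log2(c / n) for c in counts.values())
    return {"mean": mean(pixels), "std": pstdev(pixels), "entropy": entropy, "area": n}


def fuse(handcrafted, deep_embedding):
    """Concatenate handcrafted measurements with a deep-learned feature vector."""
    return list(handcrafted.values()) + list(deep_embedding)


# Hypothetical 4x4 grey-level image and one detected box (assumed values)
image = [
    [0, 0, 0, 0],
    [0, 5, 5, 0],
    [0, 5, 9, 0],
    [0, 0, 0, 0],
]
box = (1, 1, 3, 3)
features = first_order_features(crop(image, box))
fused = fuse(features, [0.1, 0.2])  # [0.1, 0.2] is a placeholder embedding
```

In a real system the placeholder embedding would come from a trained network, and the handcrafted part would cover many more texture and shape descriptors; the point here is only that both kinds of numbers end up side by side in one feature vector per structure.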

Turning Raw Features into Clear Decisions

Collecting thousands of numerical measurements is only useful if they are reliable and not redundant. The authors therefore applied a three-stage filter. They first kept only those features that were stable across different centers and reviewers, then removed strongly overlapping measurements, and finally used a sparsity-promoting method to choose the most informative subset. These refined features, drawn from both radiomics and deep learning, were then fed into a specialized model designed for handling tables of numbers. This model learns how different features interact, allowing it to sort each structure into clinically meaningful categories, such as normal, borderline, or clearly abnormal, based on how it looks in the scan.
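
The three-stage filter can be sketched in plain Python. This is a simplified illustration, not the authors' implementation. It assumes that each feature already has a precomputed stability score between 0 and 1, that "strongly overlapping" means a high Pearson correlation, and that the sparsity-promoting step is an L1-penalized (lasso) regression. The thresholds, the function names, and the toy data are all hypothetical.

```python
from statistics import mean, pstdev


def pearson(a, b):
    """Pearson correlation of two equal-length lists (0.0 if either is constant)."""
    ma, mb = mean(a), mean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / (va * vb) ** 0.5 if va > 0 and vb > 0 else 0.0


def standardize(col):
    m, s = mean(col), pstdev(col)
    return [(x - m) / s if s > 0 else 0.0 for x in col]


def soft_threshold(v, t):
    if v > t:
        return v - t
    if v < -t:
        return v + t
    return 0.0


def lasso_coordinate_descent(cols, y, alpha, n_iter=100):
    """Minimize (1/(2n)) * ||y - Xw||^2 + alpha * ||w||_1 by coordinate descent."""
    n = len(y)
    w = [0.0] * len(cols)
    resid = list(y)  # residual y - Xw, starting from w = 0
    for _ in range(n_iter):
        for j, col in enumerate(cols):
            rho = sum(c * (r + c * w[j]) for c, r in zip(col, resid)) / n
            z = sum(c * c for c in col) / n
            new_w = soft_threshold(rho, alpha) / z if z > 0 else 0.0
            delta = new_w - w[j]
            if delta:
                resid = [r - c * delta for c, r in zip(col, resid)]
            w[j] = new_w
    return w


def select_features(features, stability, y, min_stability=0.75, max_corr=0.9, alpha=0.1):
    # Stage 1: keep only features whose stability score clears the threshold
    stable = [name for name in features if stability[name] >= min_stability]
    # Stage 2: greedily drop features that strongly correlate with one already kept
    kept = []
    for name in stable:
        if all(abs(pearson(features[name], features[k])) <= max_corr for k in kept):
            kept.append(name)
    # Stage 3: lasso keeps only features with a non-zero coefficient
    cols = [standardize(features[name]) for name in kept]
    y_mean = mean(y)
    weights = lasso_coordinate_descent(cols, [v - y_mean for v in y], alpha)
    return [name for name, w in zip(kept, weights) if abs(w) > 1e-8]


# Toy data (assumed): "dup" nearly duplicates "signal", "noise" is unrelated to
# the target, and "unstable" has a low stability score.
features = {
    "signal": [1, 2, 3, 4, 5, 6, 7, 8],
    "dup": [2.1, 3.9, 6.2, 7.8, 10.1, 11.9, 14.2, 15.8],
    "noise": [1, -1, -1, 1, 1, -1, -1, 1],
    "unstable": [8, 1, 7, 2, 6, 3, 5, 4],
}
stability = {"signal": 0.95, "dup": 0.90, "noise": 0.85, "unstable": 0.40}
target = [2 * v + 1 for v in features["signal"]]
selected = select_features(features, stability, target)
```

On this toy input each stage removes one feature: "unstable" fails the stability check, "dup" is dropped as a near copy of "signal", and the lasso step sets the coefficient of "noise" to zero. Real pipelines would typically rely on an established library for the lasso step and tune the thresholds on training data.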

How Well the System Performed

To test whether the approach would hold up beyond the data it was trained on, the team evaluated performance on internal test images and on a separate set of nearly 500 scans from another center. The transformer-based detector proved especially accurate at finding the right anatomical regions, often matching the expert-labeled boxes with very high overlap. When it came to judging whether a structure was normal or not, the combined feature models, which use both radiomics and deep-learned patterns, consistently beat models that relied on either source alone. On the toughest external test set, the best combined models correctly classified structures in about 96% of cases, with a strong ability to pick out subtle abnormalities such as a shortened nasal bone or narrowed fluid spaces behind the brainstem.
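
The "overlap" between a predicted box and an expert-drawn box is commonly measured as intersection over union (IoU): the shared area divided by the total area the two boxes cover together. This summary does not name the exact metric the authors used, so the short Python sketch below shows the standard calculation as an assumption, with a hypothetical function name and made-up box coordinates.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ax0, ay0, ax1, ay1 = box_a
    bx0, by0, bx1, by1 = box_b
    # Width and height of the overlapping rectangle (zero if the boxes are disjoint)
    inter_w = max(0.0, min(ax1, bx1) - max(ax0, bx0))
    inter_h = max(0.0, min(ay1, by1) - max(ay0, by0))
    inter = inter_w * inter_h
    union = (ax1 - ax0) * (ay1 - ay0) + (bx1 - bx0) * (by1 - by0) - inter
    return inter / union if union > 0 else 0.0


# Hypothetical predicted and expert boxes that overlap in one corner
predicted = (0, 0, 2, 2)
expert = (1, 1, 3, 3)
score = iou(predicted, expert)  # 1 shared unit of area out of 7 covered in total
```

A score of 1 means the two boxes coincide exactly and 0 means they do not touch, so "very high overlap" corresponds to values close to 1.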

What This Could Mean for Prenatal Care

From a lay perspective, the message is that computers can now help make early pregnancy scans more consistent and less dependent on a single expert’s judgment. By automatically finding and grading delicate structures in the fetal brain and face, this multi-step system could support earlier, more objective detection of potential problems, even in busy clinics with varying equipment. While the authors note that rare and unusual conditions still pose a challenge, and further real-world testing is needed, their results suggest that carefully designed, multi-center trained AI tools may one day become routine partners in first-trimester screening, offering parents clearer information and clinicians stronger decision support at a critical early stage.

Citation: Zhou, X., Wan, J., Sun, F. et al. A multi-task deep learning and radiomics framework for fetal anatomical structure detection and classification in ultrasound imaging. Sci Rep 16, 11586 (2026). https://doi.org/10.1038/s41598-026-41635-8

Keywords: fetal ultrasound, prenatal screening, deep learning, radiomics, brain and facial development