Clear Sky Science

HSICNet a novel deep learning architecture for hyperspectral image classification in remote sensing and environmental monitoring

Why smarter satellite images matter

From tracking crop health to spotting pollution from space, modern satellites see far more than just red, green, and blue. Hyperspectral cameras split light into hundreds of narrow wavelength bands, many of them beyond visible light, revealing subtle fingerprints of plants, soil, water, and concrete. The catch is that this data is huge and noisy, which makes it hard to process in real time, and labeled examples for training are scarce. This paper introduces HSICNet, a new deep learning system designed to tame that complexity so that hyperspectral images can more reliably power applications in farming, cities, and environmental monitoring.

Seeing beyond ordinary color

Hyperspectral image classification is the task of deciding, for every pixel in an image, what type of material it shows—for example, corn versus soybeans, asphalt versus grass, or healthy versus diseased plants. Because hyperspectral sensors measure hundreds of wavelengths, they provide both rich “spectral” information (how a material reflects light across colors) and “spatial” information (how neighboring pixels relate to each other). Traditional machine learning and early neural networks struggle with this kind of data: there are too many bands, many of them redundant, and relatively few ground-truth labels. Newer attention and transformer-based models can improve accuracy, but they tend to be heavy, slow, and prone to overfitting when labels are scarce.
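
Concretely, a hyperspectral scene is a 3D cube (height × width × bands), and per-pixel classification turns each labeled pixel's spectrum into one training example. The sketch below is illustrative only: it assumes NumPy arrays and the common benchmark convention that label 0 marks unlabeled background, and the helper name `labeled_spectra` is hypothetical.

```python
import numpy as np

def labeled_spectra(cube: np.ndarray, labels: np.ndarray):
    """Turn an (H, W, B) cube and an (H, W) label map into per-pixel samples.

    Assumption: label 0 means "no ground truth"; real classes are numbered from 1.
    Returns X with shape (n_labeled, B) and y with shape (n_labeled,).
    """
    if cube.shape[:2] != labels.shape:
        raise ValueError("cube and label map must share height and width")
    mask = labels > 0          # keep only pixels that have a reference label
    X = cube[mask]             # boolean indexing flattens to (n_labeled, B)
    y = labels[mask] - 1       # shift class ids so they start at 0
    return X, y

# Toy 4x5 scene with 200 bands; only 3 pixels carry labels.
rng = np.random.default_rng(0)
cube = rng.random((4, 5, 200))
labels = np.zeros((4, 5), dtype=int)
labels[0, 0], labels[1, 2], labels[3, 4] = 1, 2, 2
X, y = labeled_spectra(cube, labels)
print(X.shape, y)  # (3, 200) [0 1 1]
```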

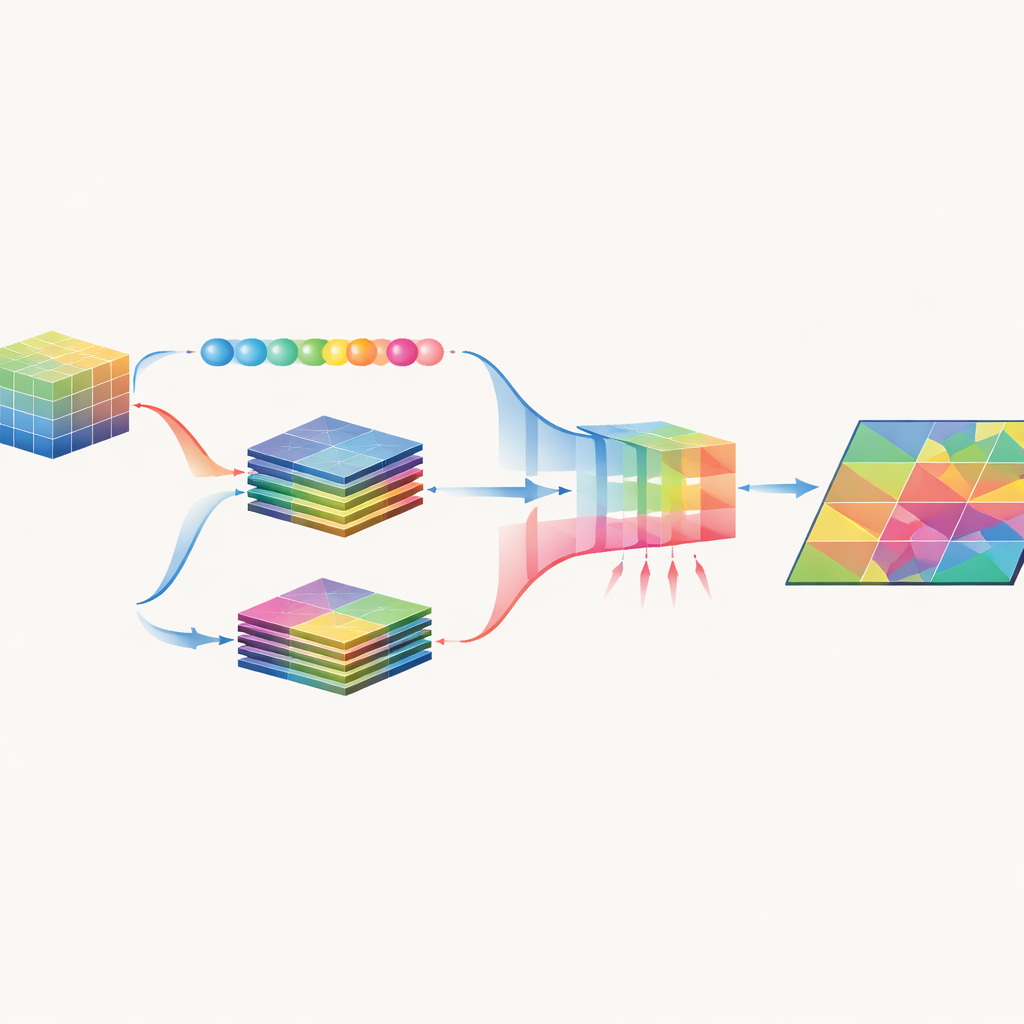

A two-path network for color and context

HSICNet tackles these challenges with a dual-branch architecture purpose-built for hyperspectral data. First, the raw image cube is cleaned by removing noisy bands and then compressed with principal component analysis (PCA), which keeps almost all of the variation in the data while greatly reducing the number of channels. The preprocessed data is then fed into two parallel paths. One path uses one-dimensional convolutions along the spectral axis to learn fine-grained color signatures for each pixel. The other uses two-dimensional convolutions over small image patches to capture textures, shapes, and patterns, such as field boundaries or road grids, that provide spatial context. By separating these roles, the model reduces computation while still learning both what each pixel is made of and how it fits into its surroundings.
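
The preprocessing step can be sketched in plain NumPy: PCA via a singular value decomposition of the mean-centered pixel spectra, then a square neighborhood (patch) cut around each pixel for the spatial path. This is a minimal sketch, not the authors' code: noisy-band removal is assumed to have happened already, and the component count, patch size, and helper names `pca_reduce` and `extract_patch` are hypothetical.

```python
import numpy as np

def pca_reduce(cube: np.ndarray, n_components: int):
    """Project every pixel spectrum of an (H, W, B) cube onto its top principal components."""
    h, w, b = cube.shape
    X = cube.reshape(-1, b).astype(np.float64)   # astype copies, so the input is untouched
    X -= X.mean(axis=0)                          # PCA requires mean-centered data
    # Rows of Vt are the principal axes, ordered by decreasing singular value.
    _, s, Vt = np.linalg.svd(X, full_matrices=False)
    explained = s**2 / np.sum(s**2)              # fraction of variance per component
    reduced = X @ Vt[:n_components].T            # (H*W, n_components)
    return reduced.reshape(h, w, n_components), explained[:n_components].sum()

def extract_patch(cube: np.ndarray, row: int, col: int, size: int):
    """Return a (size, size, C) neighborhood centered on (row, col); edges are reflect-padded."""
    if size % 2 == 0:
        raise ValueError("patch size must be odd so the pixel sits at the center")
    r = size // 2
    padded = np.pad(cube, ((r, r), (r, r), (0, 0)), mode="reflect")
    # Pixel (row, col) sits at (row + r, col + r) in the padded array.
    return padded[row:row + size, col:col + size, :]

# Toy cube: 50 bands that are mixtures of 3 underlying spectra plus a little noise.
rng = np.random.default_rng(1)
abundances = rng.random((10, 12, 3))
endmembers = rng.random((3, 50))
cube = abundances @ endmembers + 0.001 * rng.standard_normal((10, 12, 50))
reduced, kept = pca_reduce(cube, n_components=3)
patch = extract_patch(reduced, row=0, col=0, size=5)
print(reduced.shape, patch.shape)  # (10, 12, 3) (5, 5, 3)
print(f"variance kept: {kept:.4f}")
```

Because the toy bands are driven by only three underlying spectra, three components retain nearly all of the variance, which is the redundancy PCA exploits in real hyperspectral cubes.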

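A forward pass through the two paths can be sketched as follows. This is a simplified illustration under stated assumptions, not HSICNet itself: both paths read the PCA-reduced patch, the spectral path looks only at the center pixel, each path ends with global average pooling, and the filter counts, kernel sizes, and random weights are hypothetical stand-ins for trained ones. As in most deep learning libraries, "convolution" here is computed as cross-correlation.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def relu(x):
    return np.maximum(x, 0.0)

def spectral_branch(spectrum, weights, bias):
    """1D convolution along one pixel's channel axis, then global average pooling.

    spectrum: (C,), weights: (K, k), bias: (K,) -> returns (K,)
    """
    k = weights.shape[1]
    windows = sliding_window_view(spectrum, k)       # (C - k + 1, k)
    maps = relu(windows @ weights.T + bias)          # (C - k + 1, K)
    return maps.mean(axis=0)

def spatial_branch(patch, weights, bias):
    """2D convolution over a (P, P, C) patch, then global average pooling.

    weights: (F, C, k, k), bias: (F,) -> returns (F,)
    """
    k = weights.shape[-1]
    windows = sliding_window_view(patch, (k, k), axis=(0, 1))  # (P-k+1, P-k+1, C, k, k)
    maps = relu(np.einsum("ijcuv,fcuv->ijf", windows, weights) + bias)
    return maps.mean(axis=(0, 1))

def dual_branch_features(patch, params):
    """Concatenate spectral features of the center pixel with spatial features of its patch."""
    center = patch[patch.shape[0] // 2, patch.shape[1] // 2]   # (C,)
    spec = spectral_branch(center, params["w1d"], params["b1d"])
    spat = spatial_branch(patch, params["w2d"], params["b2d"])
    return np.concatenate([spec, spat])

rng = np.random.default_rng(2)
C, P = 30, 7   # hypothetical: 30 PCA components, 7x7 patches
params = {
    "w1d": rng.standard_normal((8, 5)) * 0.1,         # 8 spectral filters of length 5
    "b1d": np.zeros(8),
    "w2d": rng.standard_normal((16, C, 3, 3)) * 0.1,  # 16 spatial filters of size 3x3xC
    "b2d": np.zeros(16),
}
features = dual_branch_features(rng.random((P, P, C)), params)
print(features.shape)  # (24,)
```
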
Letting the network focus on what matters

After spectral and spatial features are extracted, HSICNet does not simply stack them together. Instead, it applies an attention-based fusion module inspired by “squeeze-and-excitation” designs. Each feature channel is first summarized by its global average (the “squeeze”), and the network learns to turn these summaries into a set of weights that boost useful channels and suppress redundant or noisy ones (the “excitation”). This produces a compact, fused representation that emphasizes truly discriminative spectral–spatial patterns. Finally, a small fully connected classifier converts these fused features into land-cover labels for every pixel, and the results are evaluated with standard measures of overall accuracy, accuracy averaged across classes (so rare classes count as much as common ones), and agreement with the ground truth beyond what chance alone would produce.
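
A generic squeeze-and-excitation step followed by a softmax classifier can be sketched in NumPy. The sketch assumes the spectral and spatial feature maps have already been concatenated along the channel axis on a shared grid of positions (how HSICNet aligns them is not described here); the bottleneck ratio `r`, the layer sizes, and the random weights are placeholders rather than the paper's settings.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def squeeze_excite(maps, w_down, w_up):
    """Squeeze-and-excitation reweighting of feature channels.

    maps:   (positions, C) feature maps, e.g. a flattened spatial grid
    w_down: (C // r, C) bottleneck weights; w_up: (C, C // r)
    """
    z = maps.mean(axis=0)                              # squeeze: one global average per channel
    s = sigmoid(w_up @ np.maximum(w_down @ z, 0.0))    # excitation: one weight in (0, 1) per channel
    return maps * s, s                                 # scale each channel by its weight

def classify(fused, w_fc, b_fc):
    """Pool the reweighted maps and apply a fully connected softmax classifier."""
    logits = w_fc @ fused.mean(axis=0) + b_fc
    p = np.exp(logits - logits.max())                  # subtract the max for numerical stability
    return p / p.sum()

rng = np.random.default_rng(3)
C, r, n_classes = 24, 4, 16                   # hypothetical sizes
maps = np.abs(rng.standard_normal((25, C)))   # 25 positions, e.g. a 5x5 grid
reweighted, weights = squeeze_excite(
    maps, rng.standard_normal((C // r, C)) * 0.1, rng.standard_normal((C, C // r)) * 0.1)
probs = classify(reweighted, rng.standard_normal((n_classes, C)) * 0.1, np.zeros(n_classes))
print(weights.round(2))
print(probs.argmax(), probs.sum())
```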

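In the hyperspectral literature these measures are usually overall accuracy (OA), average per-class accuracy (AA), and Cohen's kappa coefficient. Assuming those are the measures meant here, all three can be computed from a confusion matrix; the helper names below are illustrative.

```python
import numpy as np

def confusion_matrix(y_true, y_pred, n_classes):
    """Rows are reference classes, columns are predicted classes."""
    cm = np.zeros((n_classes, n_classes), dtype=np.int64)
    np.add.at(cm, (np.asarray(y_true), np.asarray(y_pred)), 1)
    return cm

def oa_aa_kappa(y_true, y_pred, n_classes):
    """Overall accuracy, average (per-class) accuracy, and Cohen's kappa.

    Assumes every class appears at least once in y_true.
    """
    cm = confusion_matrix(y_true, y_pred, n_classes)
    n = cm.sum()
    oa = np.trace(cm) / n                               # share of all pixels classified correctly
    aa = (np.diag(cm) / cm.sum(axis=1)).mean()          # every class weighted equally
    pe = (cm.sum(axis=0) @ cm.sum(axis=1)) / n**2       # agreement expected by chance
    kappa = (oa - pe) / (1 - pe)
    return oa, aa, kappa

y_true = [0] * 8 + [1] * 2
y_pred = [0] * 8 + [0, 1]
oa, aa, kappa = oa_aa_kappa(y_true, y_pred, n_classes=2)
print(f"OA {oa:.3f}, AA {aa:.3f}, kappa {kappa:.3f}")  # OA 0.900, AA 0.750, kappa 0.615
```

The gap between OA and AA comes from the rare class 1, which is only half right; kappa further discounts the agreement that always guessing the common class would already earn.
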
How well does it work in the real world?

The authors tested HSICNet on three widely used benchmark scenes recorded by airborne sensors: Indian Pines (mixed crops and vegetation), Pavia University (urban surfaces and vegetation), and Salinas (high-resolution agricultural fields). Across all three, HSICNet reached around 99% overall accuracy and near-perfect agreement with reference labels, outperforming strong baselines such as support vector machines, random forests, 3D convolutional networks, residual networks, and attention-enhanced or transformer-based hybrids. Importantly, it did so with far fewer parameters, lower training time, and faster per-image predictions, making it more attractive for use on devices with limited computing power or for near-real-time processing of satellite and drone data.
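
A quick parameter count shows why separate spectral and spatial layers can be much lighter than 3D convolutions: a 3D kernel learns a weight for every (row, column, band) offset jointly, while the 1D and 2D kernels learn only the band offsets or only the spatial offsets. The channel widths and kernel sizes below are hypothetical, and the totals are not the paper's reported parameter counts; only the scaling is the point.

```python
import math

def conv_params(c_in: int, c_out: int, kernel: tuple) -> int:
    """Weights plus biases of one convolution layer with the given kernel extent."""
    return c_in * c_out * math.prod(kernel) + c_out

c_in = c_out = 32   # hypothetical channel widths
k, d = 3, 7         # hypothetical 3x3 spatial window and 7-band spectral window

joint_3d = conv_params(c_in, c_out, (k, k, d))   # one layer spans rows, columns, and bands
spectral_1d = conv_params(c_in, c_out, (d,))     # spectral path: bands only
spatial_2d = conv_params(c_in, c_out, (k, k))    # spatial path: rows and columns only
print(joint_3d, spectral_1d + spatial_2d)               # 64544 16448
print(round(joint_3d / (spectral_1d + spatial_2d), 2))  # 3.92
```

Since each output position costs roughly one multiply-add per weight, a similar ratio applies to per-layer compute when the output sizes match.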

Why this approach is promising

To a non-specialist, HSICNet can be seen as a carefully streamlined pipeline that learns to read hyperspectral “fingerprints” of the Earth’s surface without being bogged down by unnecessary detail. By reducing the data sensibly, splitting color and context into separate paths, and then recombining them with a learned focus on what matters most, the model achieves both high accuracy and speed. This combination suggests that future remote sensing systems could classify crops, map urban materials, or monitor environmental change directly from incoming hyperspectral streams, bringing more precise and timely information to farmers, planners, and environmental scientists.

Citation: Purnachand, K., Samrin, R., Guttikonda, J.B. et al. HSICNet a novel deep learning architecture for hyperspectral image classification in remote sensing and environmental monitoring. Sci Rep 16, 11166 (2026). https://doi.org/10.1038/s41598-026-40509-3

Keywords: hyperspectral imaging, remote sensing, deep learning, land cover mapping, environmental monitoring