Clear Sky Science

Everyday Activity Science and Engineering Table Setting Dataset

Why setting the table can teach robots

Setting a table seems like a simple chore, but it actually packs in rich clues about how people move, plan, and think. This study turns that everyday act into a detailed laboratory experiment, creating a large public dataset that can help scientists build smarter assistive robots and better tools for understanding human behavior.

Capturing a simple task in rich detail

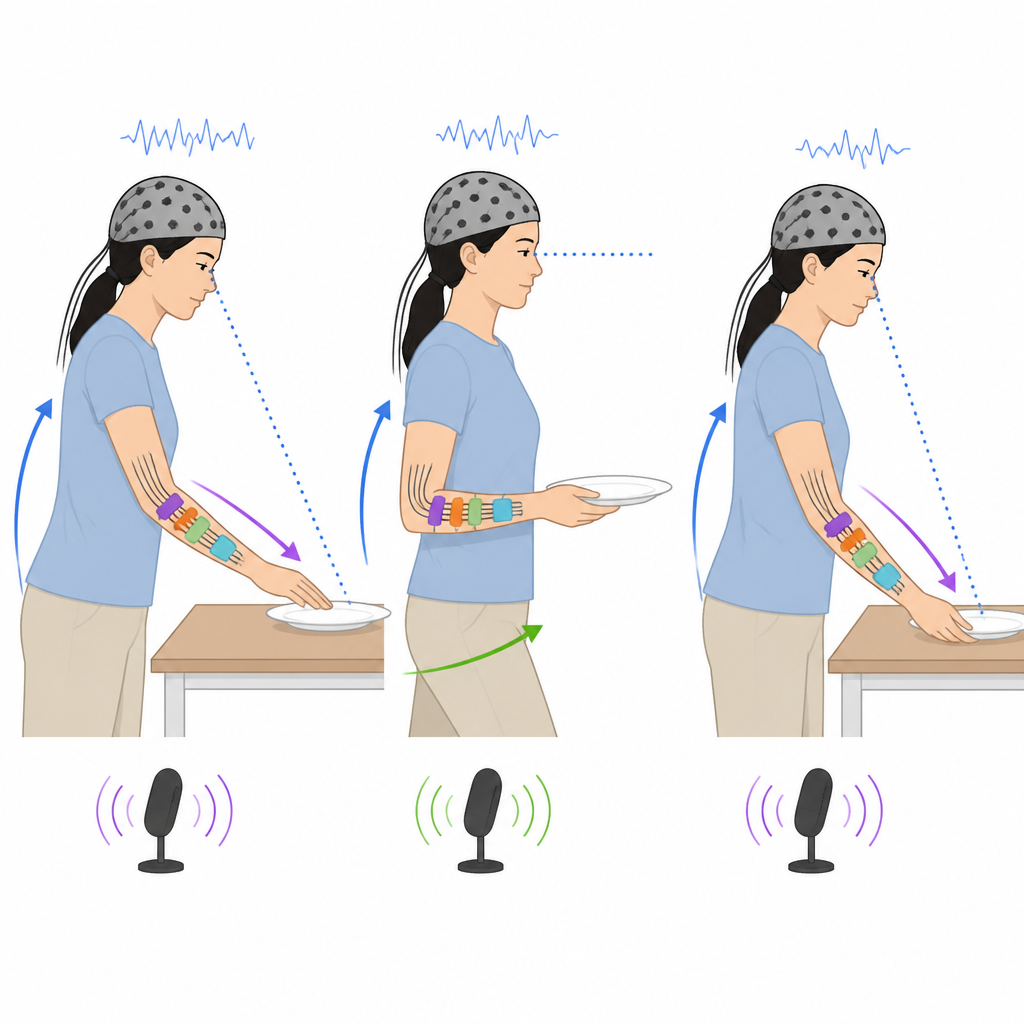

In this work, researchers asked volunteers to set a dining table in a lab kitchen while being recorded by a suite of sensors. The activity itself was familiar: arranging plates, cups, and cutlery for informal breakfasts and formal lunches for different numbers of guests. What makes the study special is not the task, but how carefully it is measured. Each participant wore lightweight gear that tracked their full body motion, eye movements, arm muscles, skin response, and brain activity, while microphones and multiple cameras watched and listened to the scene. This combination gives a dense, time-synchronized picture of both what people do and how their bodies respond while doing it.

Listening in on thoughts and plans

To go beyond motions alone, the team also asked participants to describe what they were doing. In some trials they spoke out loud as they worked, explaining choices such as which items to select and where to place them on the table. In others, they first performed the task in silence and later watched a video of themselves, commenting on their actions after the fact. These spoken reports were recorded and transcribed, then coded with tags that capture different kinds of thinking, such as planning, noticing problems, or explaining reasons. Combined with the sensor data, this lets researchers link inner thought processes to visible movements and decisions.

From raw recordings to usable data

Gathering so much information is technically demanding. The study used 22 devices, including motion capture cameras, wearable sensors, microphones, and eye trackers, all controlled by a central computer system. The authors carefully synchronized the timing of every data stream so that, for example, a grasp of a plate seen on video lines up with the corresponding spike in muscle activity and any changes in brain or skin signals. They cleaned the recordings, fixed dropped video frames, trimmed all signals to a common time span, and stored them in accessible formats. The team also developed special tools and an extended annotation scheme that breaks each trial into phases, specific actions, and fine-grained motions for different body parts, as well as logs for the objects being handled.
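As a rough illustration of the trimming step described above, the sketch below cuts several recorded streams down to the time window that all of them cover. It is not the authors' pipeline: the function name `trim_to_common_span`, the stream names, and the sample values are hypothetical. It assumes every stream is already sorted by timestamp and expressed on one shared clock.

```python
from typing import Dict, List, Tuple

# One sample is (timestamp in seconds on a shared clock, measured value).
Sample = Tuple[float, float]


def trim_to_common_span(streams: Dict[str, List[Sample]]) -> Dict[str, List[Sample]]:
    """Keep only the samples that fall inside the window covered by every stream.

    Assumes each stream is sorted by timestamp (an assumption of this sketch).
    """
    if not streams or any(len(s) == 0 for s in streams.values()):
        raise ValueError("every stream needs at least one sample")

    start = max(s[0][0] for s in streams.values())  # latest first sample
    end = min(s[-1][0] for s in streams.values())   # earliest last sample
    if start > end:
        raise ValueError("streams do not overlap in time")

    return {
        name: [(t, v) for t, v in samples if start <= t <= end]
        for name, samples in streams.items()
    }


# Hypothetical data: a muscle-activity stream that starts earlier and ends
# later than a video-derived stream.
streams = {
    "emg": [(0.0, 0.1), (0.5, 0.4), (1.0, 0.9), (1.5, 0.3), (2.0, 0.2)],
    "video": [(0.5, 1.0), (1.0, 2.0), (1.5, 3.0)],
}
trimmed = trim_to_common_span(streams)
for name, samples in trimmed.items():
    print(name, [t for t, _ in samples])
```

After trimming, both streams span 0.5 to 1.5 seconds, so an event seen in one can be looked up at the same moment in the other. Real recordings would also need clock alignment and resampling, which this sketch leaves out.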

What is inside the table setting collection

The resulting resource, called the Everyday Activity Science and Engineering Table Setting Dataset, contains 78 recorded sessions, 50 of which are analyzed in detail in this article. Together they add up to around 300 hours of biosignals and about 260 hours of labeled activity segments. The dataset stands out compared with earlier efforts because it balances three hard-to-combine goals: a fairly large number of participants, many kinds of sensors, and detailed, multi-layered annotations for a realistic household task. To check that the signals are informative, the authors ran baseline machine learning experiments that used muscle, motion, brain, and acceleration data to automatically recognize different stages of the task, showing performance clearly better than random guessing, especially when full body motion was included.
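To make "better than random guessing" concrete, the sketch below computes two common chance-level references for a labeled sequence and compares them with a classifier's accuracy. The stage labels and predictions are invented for illustration and are not taken from the dataset. The helper names `chance_levels` and `accuracy` are likewise hypothetical.

```python
from collections import Counter
from typing import Sequence, Tuple


def chance_levels(labels: Sequence[str]) -> Tuple[float, float]:
    """Return (uniform chance, majority-class rate) for a label sequence."""
    counts = Counter(labels)
    uniform = 1.0 / len(counts)                    # guess each class equally often
    majority = max(counts.values()) / len(labels)  # always guess the commonest class
    return uniform, majority


def accuracy(true: Sequence[str], pred: Sequence[str]) -> float:
    """Fraction of positions where the prediction matches the true label."""
    if len(true) != len(pred):
        raise ValueError("label sequences must have the same length")
    return sum(t == p for t, p in zip(true, pred)) / len(true)


# Hypothetical task-stage labels and model predictions (not real results).
true_stages = ["fetch", "fetch", "place", "place", "place", "adjust", "fetch", "place"]
pred_stages = ["fetch", "place", "place", "place", "place", "place", "fetch", "place"]

uniform, majority = chance_levels(true_stages)
acc = accuracy(true_stages, pred_stages)
print(f"uniform chance: {uniform:.2f}")
print(f"majority-class rate: {majority:.2f}")
print(f"model accuracy: {acc:.2f}")
```

The majority-class rate is usually the stricter reference when some stages are much more common than others, since a model can match uniform chance simply by always predicting the commonest stage.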

Why this matters for everyday helpers

For a layperson, the benefit of this work is that it builds a shared, open resource for future systems meant to work with people in natural settings, such as kitchen assistants, rehabilitation aids, or smart homes. By making high-quality recordings of a simple but realistic daily activity freely available, along with clear documentation and code, the authors give researchers a common testbed for studying how people organize their actions and how machines might learn to interpret them. In short, this article shows how something as ordinary as setting the table can become a powerful lens on human behavior, and a stepping stone toward more helpful, human-aware technology.

Citation: Meier, M., Hartmann, Y., El Ouahabi, Y. et al. Everyday Activity Science and Engineering Table Setting Dataset. Sci Data 13, 721 (2026). https://doi.org/10.1038/s41597-026-07077-7

Keywords: table setting, human activity, multimodal dataset, cognitive robotics, biosignals