Clear Sky Science

A multimodal retinal image dataset for diabetic retinopathy detection using foundation models

Why catching eye damage early matters

For people living with diabetes, damage to the light‑sensing tissue at the back of the eye can silently progress for years before vision noticeably blurs. By the time symptoms appear, some harm may be permanent. Doctors know that regular eye scans can spot trouble early, but reading thousands of images by hand is slow and costly. This study introduces a large, carefully labeled collection of retinal images designed to help artificial intelligence (AI) systems learn to detect diabetic eye disease more accurately and reliably, with the goal of bringing earlier warning to many more patients.

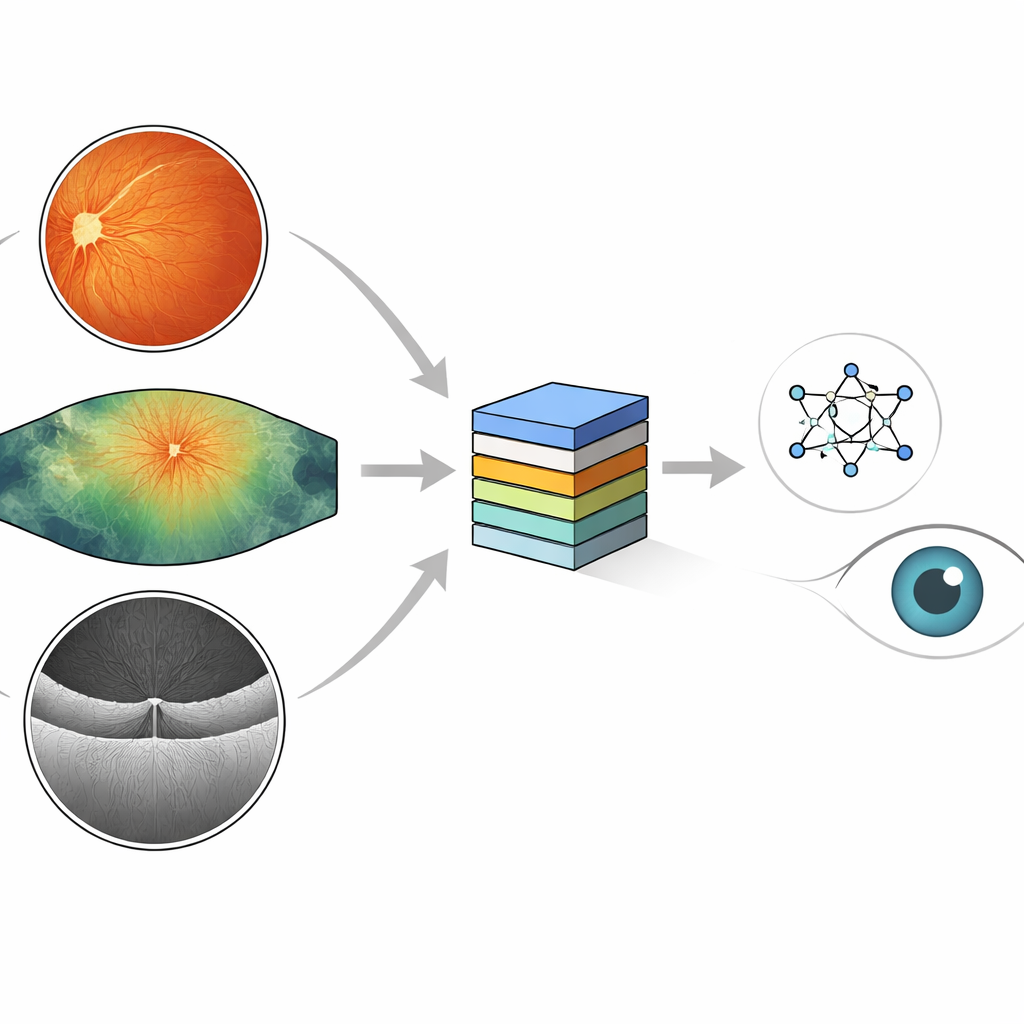

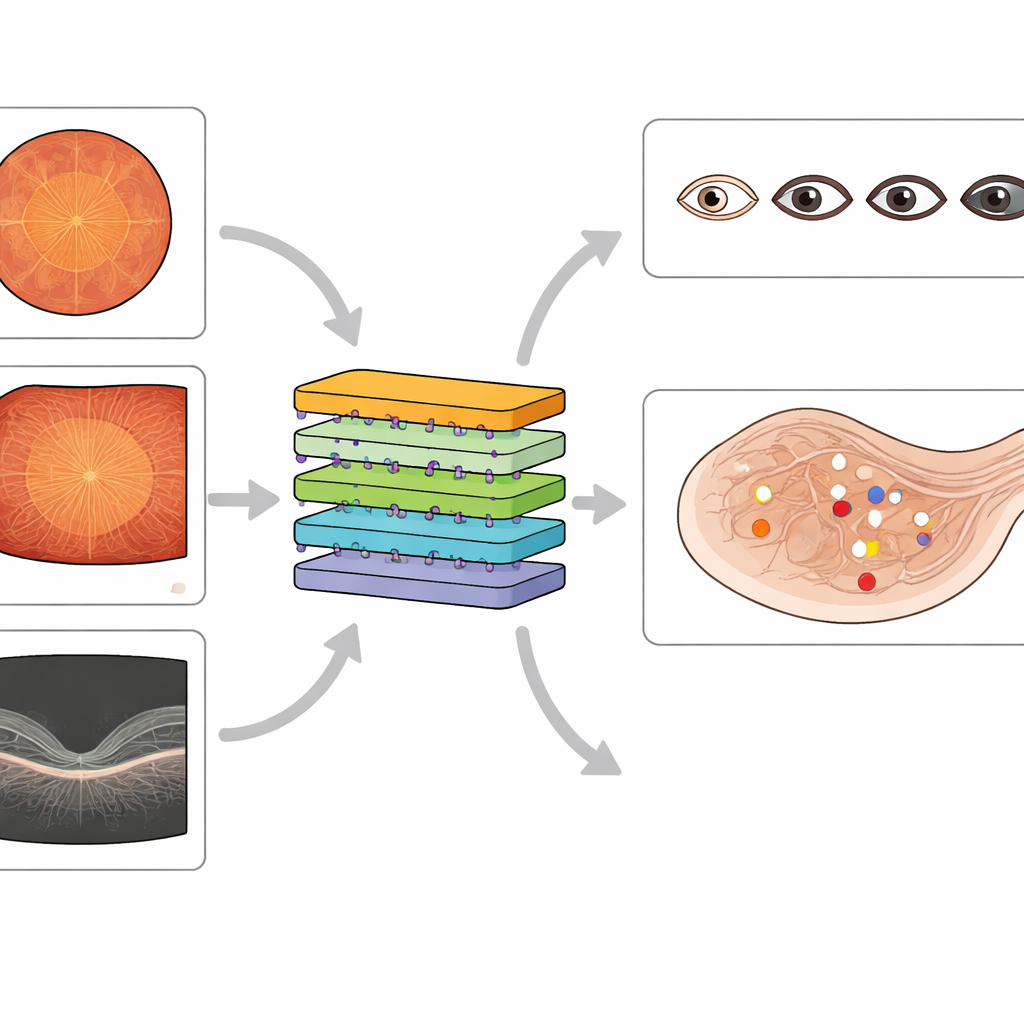

Different camera views of the same eye problem

Eye doctors use several kinds of cameras to look for diabetes‑related damage. Standard color photographs of the back of the eye show a round, reddish view where tiny blood spots, fatty deposits, and new fragile vessels can appear. Ultra‑widefield images capture a much larger area, including the far edges of the retina where early damage may hide. A third tool, optical coherence tomography (OCT), slices through the retina in cross‑section, revealing swelling and pockets of fluid linked to vision‑threatening macular edema. Each method reveals different pieces of the same disease process, and together they give a fuller picture of eye health.

What is new about this image collection

Existing public datasets have fueled many AI systems for diabetic eye screening, but most focus on just one imaging method and offer only coarse labels, such as a single overall disease grade. Some contain noisy labels or miss important lesion types, and many lack good coverage of widefield images or detailed information about macular swelling. The new MMRDR dataset aims to fill these gaps. It gathers 24,460 images across three modalities (standard color photos, ultra‑widefield images, and OCT scans) and attaches rich expert annotations. For color and widefield images, doctors graded overall disease on a five‑step scale and recorded seven specific lesion types, such as tiny bulges in blood vessels, hemorrhages, and retinal detachment. For OCT scans, they classified macular edema as absent, present outside the center, or directly affecting the center of vision.

How the images were curated and labeled

The authors drew the standard color photographs from an existing public competition dataset and obtained the widefield and OCT images from a large Chinese eye hospital, focusing on people with diabetes. They removed low‑quality images with blur, poor lighting, or missing central regions so that the remaining scans were clinically useful. Four experienced ophthalmologists and a senior retinal specialist developed clear grading rules based on international guidelines. The specialist first created a reference set of images. The other graders then practiced on this set until their decisions closely matched the specialist’s. After that, they independently labeled thousands of images and referred uncertain cases back to the specialist. The resulting collection combines thorough labeling with strong agreement between doctors, making it a trustworthy teaching set for AI.

Testing today’s AI on tomorrow’s eye data

The team then used the dataset to evaluate several types of advanced AI models. They tested large vision‑language models originally trained on general images and text, standard image classifiers trained on everyday photographs, and newer "foundation" models already tuned on eye images. Across the board, models struggled most with ultra‑widefield images, where the much larger view and more complex patterns reduced accuracy compared with standard color photos. Models designed specifically for eye images showed better transfer from standard to widefield views than general multimodal systems did, hinting that knowledge of retinal structure really matters. When the researchers fine‑tuned one large multimodal model on MMRDR, its performance improved markedly, showing that this dataset can teach even very flexible AI systems to handle eye disease more competently.

What this means for future eye care

In plain terms, this work delivers a high‑quality teaching library for computers learning to spot diabetic eye damage. By combining three complementary imaging methods and detailed expert labels, the MMRDR dataset lets researchers build and fairly compare AI tools that grade disease severity, pinpoint individual lesions, and track macular swelling. Although these images alone will not prevent blindness, they provide a crucial foundation for more dependable automated screening systems. Such systems could catch sight‑threatening changes earlier and bring expert‑level eye care within reach of many more people living with diabetes.

Citation: Tang, Z., Wang, L., Guo, Z. et al. A multimodal retinal image dataset for diabetic retinopathy detection using foundation models. Sci Data 13, 639 (2026). https://doi.org/10.1038/s41597-026-07005-9

Keywords: diabetic retinopathy, retinal imaging, medical AI, foundation models, ocular OCT