Clear Sky Science

Sen2GF3Floods: A Benchmark Multi-Source Flood Dataset with Dual-Temporal and Active Learning Annotation

Why Smarter Flood Maps Matter

Floods are among the most destructive natural disasters, yet when rivers overflow or cities are swamped by sudden rain, emergency teams still struggle to see exactly where the water has spread. This article presents Sen2GF3Floods, a new, large-scale collection of satellite images and computer-ready flood maps designed to help artificial intelligence (AI) quickly and reliably trace floodwaters. By pairing optical satellite views taken before a disaster with radar views taken during it, and by using an active learning scheme that cuts the cost of labeling flooded areas, the work aims to make near-real-time, high-quality flood maps much more widely available.

Seeing Water from Space in New Ways

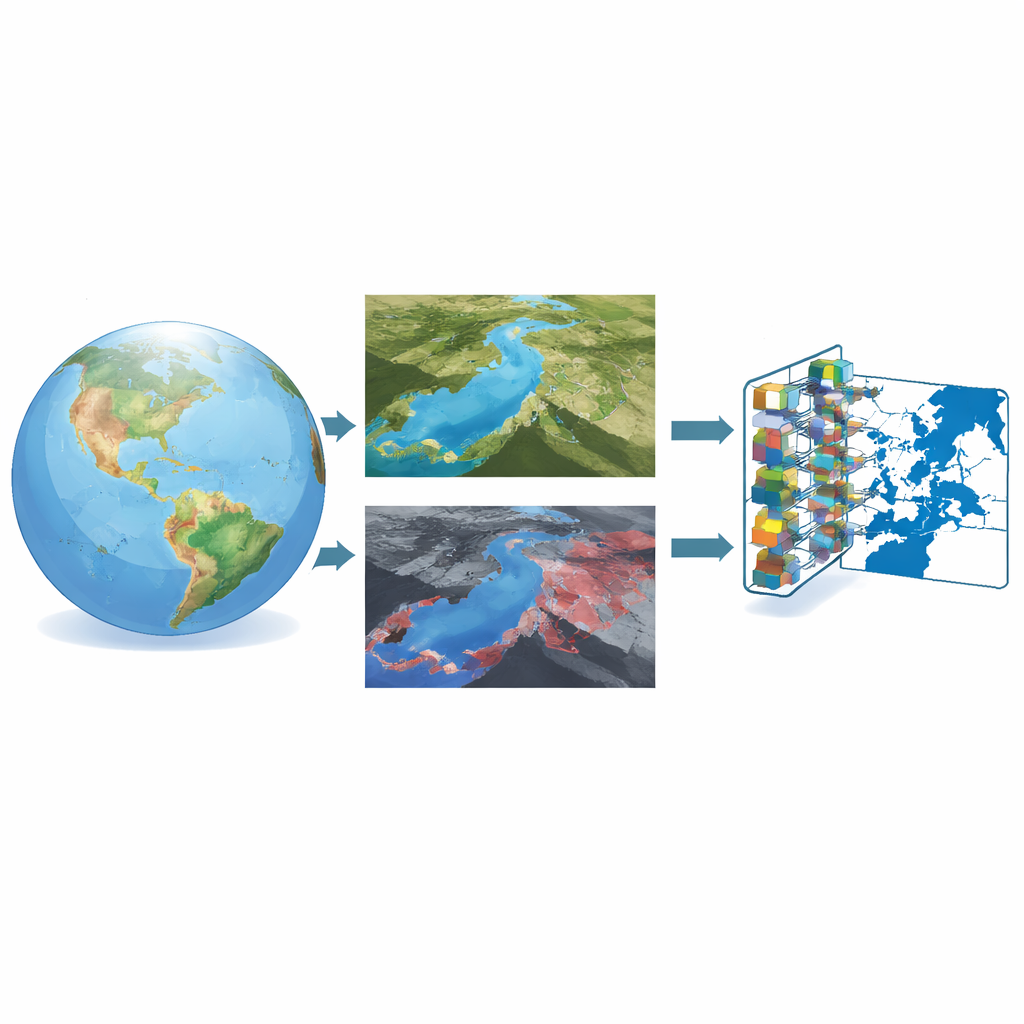

Flood mapping from space has long relied on two main kinds of satellite data. Optical images, similar to very detailed photographs, can clearly show rivers, fields, and city blocks—but clouds and heavy rain can block the view just when floods happen. Radar images, created by bouncing microwave signals off the ground, can see through clouds and work day or night, but they are noisy and harder for people to interpret. The researchers behind Sen2GF3Floods combine the strengths of both: clear optical images taken before a flood and radar images captured during the flood. The optical pictures provide a detailed snapshot of how the landscape normally looks, while the radar images reveal where water actually spreads when disaster hits.

Building a Rich Library of Flood Events

To be useful for modern AI techniques, a flood dataset must be large, diverse, and carefully labeled, and that is what Sen2GF3Floods sets out to offer. It gathers satellite patches from nine major flood events across China, spanning rivers, farmlands, cities, and mountains. For each location, the team collected four optical bands (visible color plus near-infrared) from Europe’s Sentinel-2 satellites and two radar bands from China’s Gaofen-3 mission, all at 10-meter resolution. These images were cut into more than 21,000 small tiles that machine-learning models can digest. Each tile comes with a simple map marking which pixels were flooded and which were not, so algorithms can learn the subtle differences between permanent water, temporary flooding, shadows, and dry land.

Letting the Computer Help Decide What Experts Should Label

A major bottleneck in creating such datasets is drawing accurate flood outlines by hand. To reduce this burden, the authors designed a three-step, dual-temporal annotation approach. First, they automatically generated a rough map of permanent water from the pre-flood optical image and a rough map of water extent from the radar image acquired during the flood, then compared the two to estimate where new water appeared. Next, human experts refined these rough maps using high-resolution background imagery, correcting tricky areas such as paddy fields and narrow channels. Finally, they trained a segmentation network (a model that assigns a class to every pixel) to predict floods over the unlabeled tiles and measured where it was most uncertain. Only those "hard" tiles, where the model struggled, were sent back to experts for careful labeling. This loop of training, measuring uncertainty, and targeted correction allowed the team to grow the dataset while keeping manual work in check.
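For readers who think in code, the first and last steps of this loop can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: water masks are small nested lists of 0s and 1s, the function names `new_water`, `mean_entropy`, and `pick_hard_tiles` are hypothetical, and average binary entropy is used as the uncertainty score because the article does not say which measure the team chose.

```python
import math

def new_water(pre_water, flood_water):
    """Flag pixels that are water in the flood-time mask but not in the pre-flood mask."""
    return [
        [1 if after and not before else 0 for before, after in zip(row_pre, row_flood)]
        for row_pre, row_flood in zip(pre_water, flood_water)
    ]

def mean_entropy(prob_tile):
    """Average binary entropy (in bits) of the per-pixel flood probabilities of one tile."""
    total, count = 0.0, 0
    for row in prob_tile:
        for p in row:
            p = min(max(p, 1e-7), 1 - 1e-7)  # clamp so log2 never sees 0
            total += -(p * math.log2(p) + (1 - p) * math.log2(1 - p))
            count += 1
    return total / count

def pick_hard_tiles(prob_tiles, budget):
    """Return the ids of the `budget` tiles whose predictions are most uncertain."""
    ranked = sorted(prob_tiles, key=lambda tile_id: mean_entropy(prob_tiles[tile_id]), reverse=True)
    return ranked[:budget]

# Step 1: compare the two rough water masks (toy 2x3 example).
pre_water = [[1, 0, 0], [1, 0, 0]]    # permanent water before the flood
flood_water = [[1, 1, 0], [1, 1, 1]]  # water seen by radar during the flood
rough_flood = new_water(pre_water, flood_water)

# Step 3: rank unlabeled tiles by model uncertainty (made-up probabilities).
predictions = {
    "tile_a": [[0.98, 0.02], [0.99, 0.01]],  # confident
    "tile_b": [[0.55, 0.45], [0.60, 0.50]],  # close to a coin flip
    "tile_c": [[0.90, 0.20], [0.85, 0.10]],  # in between
}
hard = pick_hard_tiles(predictions, budget=1)
```

In this toy run, `rough_flood` marks only the pixels that turned wet during the event, and `hard` contains the tile whose probabilities sit closest to 0.5, which is the one an expert would be asked to label next.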

Testing How Well Machines Learn from the Data

With the dataset assembled, the researchers evaluated several leading image-segmentation models, including U-Net, U-Net++, DeepLabV3+, DANet, and SegFormer. Across the board, the models performed very well, correctly classifying the vast majority of pixels and capturing both broad floodplains and fine river branches. U-Net++ provided the best overall balance of accuracy and completeness. Experiments also probed deeper questions: How many labeled tiles are truly needed before accuracy stops improving much? Which combinations of optical and radar bands work best? And can a model trained on Gaofen-3 radar transfer to another radar satellite, Sentinel-1, without retraining from scratch? The results show that using both color and near-infrared optical bands together with dual radar channels yields the strongest flood maps, that performance plateaus once around a thousand labeled tiles are available, and that models trained on Gaofen-3 can indeed be applied to Sentinel-1 with promising results.
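The article does not name the scores behind "accuracy and completeness". Benchmarks of this kind commonly report pixel accuracy, precision, recall, and intersection over union (IoU) for the flood class, so the sketch below assumes those four. The function name `flood_metrics` and the tiny example masks are hypothetical.

```python
def flood_metrics(truth, predicted):
    """Compare two binary flood masks (nested lists of 0/1) pixel by pixel."""
    tp = fp = fn = tn = 0
    for row_truth, row_pred in zip(truth, predicted):
        for t, p in zip(row_truth, row_pred):
            if t and p:
                tp += 1  # flooded and predicted flooded
            elif p:
                fp += 1  # dry but predicted flooded
            elif t:
                fn += 1  # flooded but missed
            else:
                tn += 1  # dry and predicted dry
    total = tp + fp + fn + tn
    return {
        "pixel_accuracy": (tp + tn) / total,
        "precision": tp / (tp + fp) if tp + fp else 0.0,  # how much predicted flood is real
        "recall": tp / (tp + fn) if tp + fn else 0.0,     # how much real flood was found
        "iou": tp / (tp + fp + fn) if tp + fp + fn else 0.0,
    }

truth = [[1, 1, 0, 0], [1, 0, 0, 0]]
predicted = [[1, 0, 0, 0], [1, 1, 0, 0]]
scores = flood_metrics(truth, predicted)
```

Recall plays the role of "completeness" here: a model can score high pixel accuracy on mostly dry tiles while still missing narrow river branches, which is why overlap scores such as IoU are usually reported alongside it.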

What This Means for Future Flood Response

In plain terms, the Sen2GF3Floods project delivers a high-quality "training gym" for flood-detecting AI. By fusing clear views of the landscape from before a flood with radar snapshots taken during the event, and by using an active learning scheme that focuses expert effort where it matters most, the authors have built a dataset that lets computers recognize floods quickly and reliably across many terrains. This foundation should help emergency managers and scientists generate rapid, large-area flood maps with less manual labor, even when clouds block the sky or when data must come from different satellites. Over time, expanding this approach to more cities and farming regions could turn satellite streams into practical, near-real-time tools for protecting people and infrastructure from rising waters.

Citation: Chen, W., Zhu, Y., Han, W. et al. Sen2GF3Floods: A Benchmark Multi-Source Flood Dataset with Dual-Temporal and Active Learning Annotation. Sci Data 13, 540 (2026). https://doi.org/10.1038/s41597-026-06929-6

Keywords: flood mapping, remote sensing, satellite imagery, deep learning, disaster response