Clear Sky Science

ULTRA-MoCap: A Multimodal IMU and sEMG Dataset for Upper Body Joint Kinematics Analysis

Why Tracking Everyday Arm Movements Matters

Every time you reach for a shelf, swing your arms while walking, or lift a bag, your shoulder, elbow, and wrist perform a precisely coordinated dance. Understanding this motion in detail could transform physical rehabilitation, sports training, and how we control assistive robots or exoskeletons. Yet most existing data about arm movement comes either from bulky camera setups in specialized labs or from wearables that capture only part of what the body is doing. This article introduces ULTRA-MoCap, a new open dataset that brings these pieces together, combining motion-capture cameras, tiny motion sensors, and muscle-activity recordings to give a richer picture of how the upper limb moves.

Bringing Multiple Sensing Worlds Together

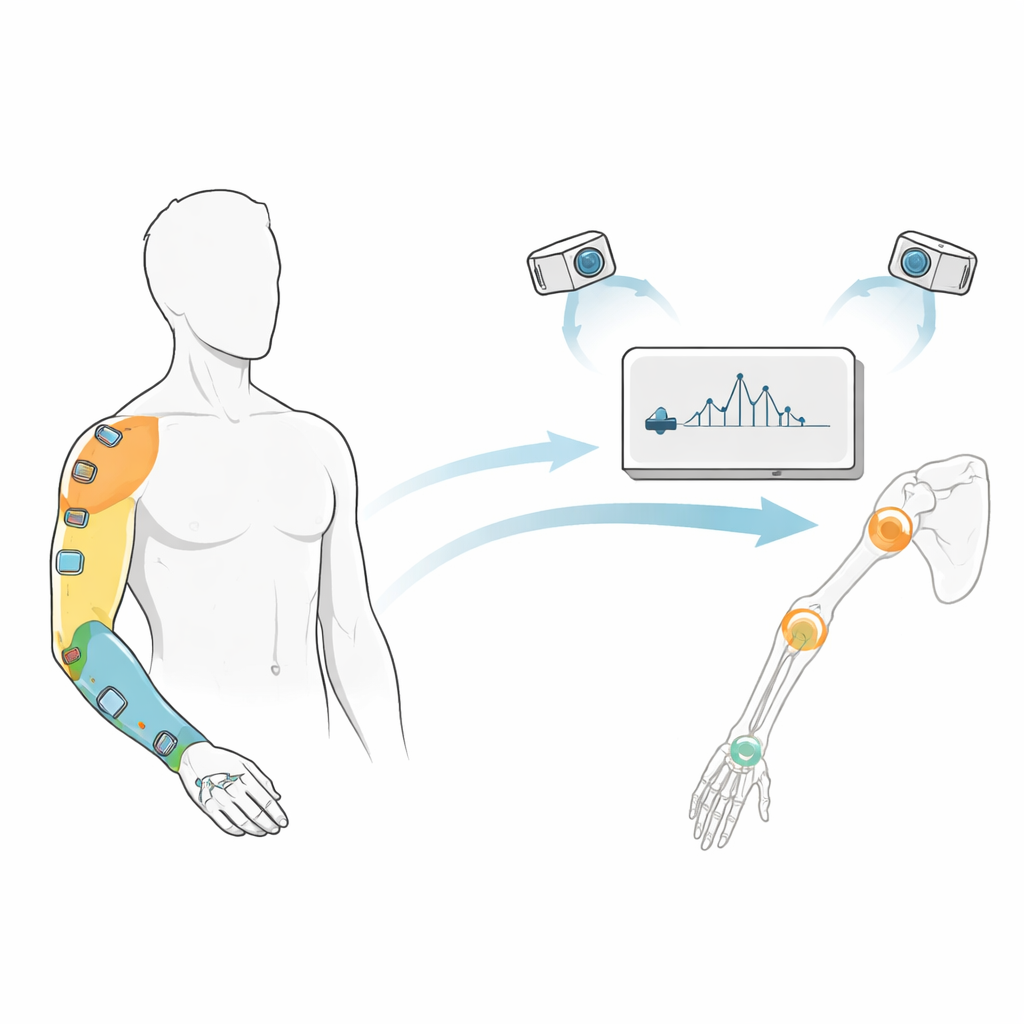

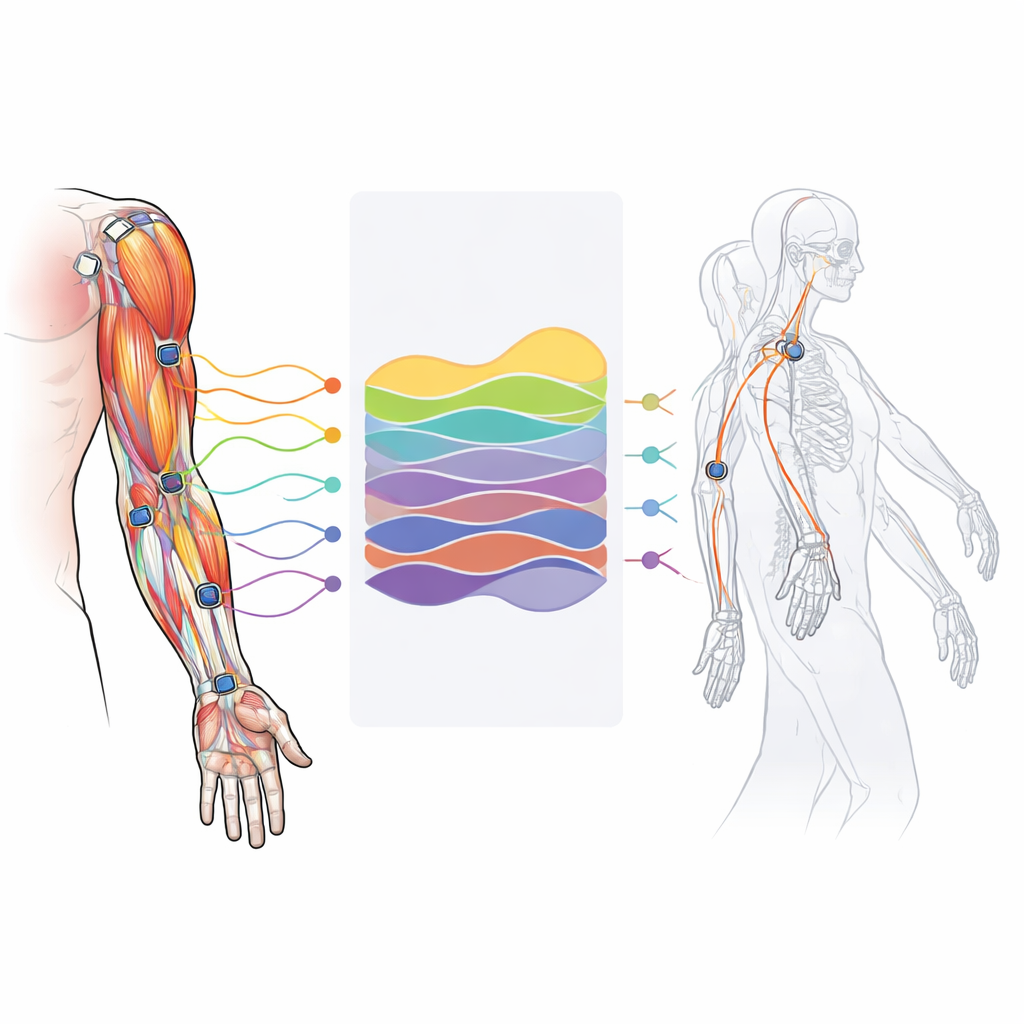

Most studies of arm motion rely on just one window into the body: how joints move, how muscles fire, or how the limb accelerates through space. ULTRA-MoCap stands out by recording all three at once. It synchronizes a high-end camera-based motion-capture system with six small inertial measurement units (IMUs) that track movement on the hand, wrist, forearm, and upper arm, plus surface electromyography (sEMG) sensors that measure electrical activity in key muscles such as the biceps, triceps, and deltoid. This combination allows researchers to see how muscle activity, limb motion, and joint angles line up in time, offering a detailed, dynamic portrait of upper-body movement.
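Lining up streams in time usually means placing recordings sampled at different rates onto one shared clock. The sketch below shows one common way to do that, linear interpolation. It is only an illustration: the article does not state the sensors' sampling rates or how the authors synchronized them, so the sample times are made up and `resample_linear` is a hypothetical helper name.

```python
from bisect import bisect_left

def resample_linear(times, values, target_times):
    """Linearly interpolate (times, values) onto target_times.

    `times` must be strictly increasing. Targets outside the recorded
    range are clamped to the nearest endpoint value.
    """
    out = []
    for t in target_times:
        i = bisect_left(times, t)
        if i == 0:
            out.append(values[0])
        elif i == len(times):
            out.append(values[-1])
        else:
            t0, t1 = times[i - 1], times[i]
            v0, v1 = values[i - 1], values[i]
            w = (t - t0) / (t1 - t0)  # fraction of the way from t0 to t1
            out.append(v0 + w * (v1 - v0))
    return out

# Assumed example: a slow stream (e.g. a joint angle in degrees)
# resampled at the timestamps of a faster stream.
slow_times = [0.0, 0.5, 1.0]
slow_values = [0.0, 10.0, 20.0]
fast_times = [0.25, 0.75]
aligned = resample_linear(slow_times, slow_values, fast_times)
print(aligned)  # [5.0, 15.0]
```

Once every stream shares the same timestamps, a muscle burst and the joint motion it produces can be compared sample by sample.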

How the Data Were Collected from Real People

The dataset is built from experiments involving thirteen healthy adults, each carefully screened to rule out existing arm or shoulder injuries. Participants wore sixty reflective markers for motion capture and six wireless sensor units on their right arm. They performed five common upper-limb exercises: swinging both arms, reaching across the body, repeatedly bending and straightening the elbows, rotating the shoulders, and raising the arms overhead to different heights. Each trial lasted thirty seconds at self-chosen speeds ranging from slow to very fast, with rest breaks to avoid fatigue that might distort the muscle signals. The result is a wide variety of movements that still follow clear, repeatable patterns, much like natural daily activities.

From Raw Markers to Virtual Joints

To turn clouds of camera markers into meaningful joint angles, the authors used a detailed computer model of the upper body that represents bones and joints much like a virtual skeleton. They first “scaled” this model to fit each person’s body dimensions using a calibration pose, then ran an inverse-kinematics process that finds the joint positions and angles most consistent with the observed marker paths at every moment. Careful quality checks ensured that the virtual markers stayed within a few centimeters of the real ones, and that the calculated shoulder, elbow, and wrist motions looked anatomically reasonable over thousands of frames. The final dataset includes both these processed joint angles and the original sensor and marker recordings, all organized with consistent file names and formats so that others can easily reuse them.
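The idea behind inverse kinematics can be shown with a toy example: a flat, two-segment arm with one marker at the elbow and one at the wrist. The sketch below searches for the shoulder and elbow angles whose model markers best match the observed ones. It is not the authors' pipeline, which uses a full upper-body model; the segment lengths, function names, and the simple gradient-descent solver are all assumptions chosen for illustration.

```python
import math

UPPER_ARM = 0.30  # segment lengths in metres (illustrative values)
FOREARM = 0.25

def model_markers(q1, q2):
    """Forward kinematics: elbow and wrist marker positions in the plane."""
    ex = UPPER_ARM * math.cos(q1)
    ey = UPPER_ARM * math.sin(q1)
    wx = ex + FOREARM * math.cos(q1 + q2)
    wy = ey + FOREARM * math.sin(q1 + q2)
    return (ex, ey), (wx, wy)

def marker_error(q, observed):
    """Sum of squared distances between model and observed markers."""
    total = 0.0
    for (mx, my), (ox, oy) in zip(model_markers(*q), observed):
        total += (mx - ox) ** 2 + (my - oy) ** 2
    return total

def solve_ik(observed, q_start, step=0.5, iters=2000, h=1e-6):
    """Minimise marker_error by gradient descent with a numerical gradient."""
    q = list(q_start)
    for _ in range(iters):
        grad = []
        for i in range(2):
            q_hi = q.copy()
            q_lo = q.copy()
            q_hi[i] += h
            q_lo[i] -= h
            diff = marker_error(q_hi, observed) - marker_error(q_lo, observed)
            grad.append(diff / (2 * h))  # central difference
        q = [qi - step * gi for qi, gi in zip(q, grad)]
    return q

# Synthetic "observed" markers generated from known angles (radians),
# so the recovered angles can be compared with the truth.
true_q = (0.6, 0.9)
observed = model_markers(*true_q)

# Start from a nearby guess, as one would from the previous frame.
q = solve_ik(observed, q_start=(0.3, 0.6))
residual = marker_error(q, observed)
print([round(a, 3) for a in q], residual)
```

The leftover `residual` plays the role of the quality check described above: if model markers sit far from the real ones, the fitted angles should not be trusted.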

Testing Data Quality with Machine Learning

To show that the signals are not only clean but also informative, the authors trained a deep-learning model to recognize which of the five exercises was being performed, based solely on short, two-second slices of sensor data. Using only IMU motion data, the model correctly identified the exercise more than 94 percent of the time, and performance climbed slightly higher when IMU and muscle signals were combined. Muscle data alone proved harder to generalize across different people, reflecting natural variation in how individuals recruit their muscles, but worked extremely well when the model was personalized to a single subject. These results suggest that ULTRA-MoCap is well suited for both general-purpose algorithms that must work on new users and personalized systems that adapt to one individual.
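Two steps in this kind of test are easy to picture in code: cutting a trial into two-second slices, and holding out one person at a time to see whether a model works on new users. The sketch below illustrates both. The 100 Hz sampling rate, the 50 percent overlap between slices, and the leave-one-subject-out scheme are assumptions, since the article does not give those details; the function names are hypothetical.

```python
def sliding_windows(samples, rate_hz, window_s=2.0, overlap=0.5):
    """Cut a recording into fixed-length, possibly overlapping windows."""
    size = int(round(window_s * rate_hz))
    hop = max(1, int(round(size * (1.0 - overlap))))
    last_start = len(samples) - size
    return [samples[s:s + size] for s in range(0, last_start + 1, hop)]

def leave_one_subject_out(subject_ids):
    """Yield (held_out, training_subjects) pairs, one per subject."""
    unique = sorted(set(subject_ids))
    for held_out in unique:
        yield held_out, [s for s in unique if s != held_out]

# One thirty-second trial at an assumed 100 Hz sampling rate.
trial = list(range(3000))
windows = sliding_windows(trial, rate_hz=100)
print(len(windows), len(windows[0]))  # 29 200

# Thirteen participants, each held out once for testing.
folds = list(leave_one_subject_out(range(1, 14)))
print(len(folds))  # 13
```

Training on twelve people and testing on the thirteenth measures how well a model generalizes, while training and testing within one person's windows corresponds to the personalized setting.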

What This Resource Means for the Future

In everyday terms, ULTRA-MoCap is like having a richly instrumented “black box recorder” for the arm, capturing how bones, muscles, and wearable sensors behave together during realistic motions. Because the dataset and supporting code are publicly available, researchers can use it to design smarter rehabilitation exercises, improve control of robotic exoskeletons, refine virtual-reality interactions, or explore how to do more with fewer or simpler sensors. The study concludes that this multi-layered view of upper-limb movement fills a key gap in existing resources and should accelerate progress toward wearable technologies that understand and assist our arm motions in natural, intuitive ways.

Citation: Fritsche, O., Camacho, S., Hossain, M.S.B. et al. ULTRA-MoCap: A Multimodal IMU and sEMG Dataset for Upper Body Joint Kinematics Analysis. Sci Data 13, 622 (2026). https://doi.org/10.1038/s41597-026-06687-5

Keywords: upper limb motion, wearable sensors, electromyography, motion capture dataset, rehabilitation technology