Clear Sky Science · en

Multimodal ion-gated transistor based on 2D superionic conductor for in-memory computing in deep learning

Smarter Chips Inspired by the Brain

Modern artificial intelligence works wonders but gulps down energy, largely because today’s chips constantly shuttle data back and forth between memory and processors. This paper describes a new kind of tiny electronic device that behaves a bit more like a brain cell connection, or synapse. By handling both calculation and signal shaping in the same spot, these devices could make future AI hardware faster and far more energy-efficient, potentially benefiting everything from smartphones to edge sensors in cars and medical wearables.

Why Current AI Hardware Wastes Effort

Most AI algorithms rely on two core steps: multiplying and adding numbers to combine signals, and then passing the result through a curved “activation” step that decides which patterns matter. Conventional chips perform these steps in separate blocks. Data must be converted between digital and analog forms and moved repeatedly across the chip, which costs time and power. Engineers would like a single physical device that can both store the strength of a connection and apply the activation-like nonlinearity, but typical electronic components only do one of these jobs well. When they are pushed to do both, they tend to become unstable or inaccurate.
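The two core steps described above can be sketched in a few lines of Python. This is only an illustration, not code from the paper: the function name `neuron_output`, the example numbers, and the choice of a sigmoid as the "curved" activation are all assumptions made here.

```python
import math


def neuron_output(inputs, weights, bias=0.0):
    """Combine signals with a weighted sum, then apply a curved activation.

    The sigmoid is an illustrative choice; the article does not name
    a specific activation function.
    """
    # Step 1: multiply each input by its weight and add up the products.
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    # Step 2: squash the sum through a smooth nonlinear curve (sigmoid).
    return 1.0 / (1.0 + math.exp(-total))


# Hypothetical inputs and weights, chosen only for demonstration.
print(neuron_output([1.0, 0.5, -1.0], [0.2, 0.4, 0.1]))
```

In a conventional chip, step 1 and step 2 run in separate blocks, which is the source of the data movement the article describes.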

A New Kind of Transistor Building Block

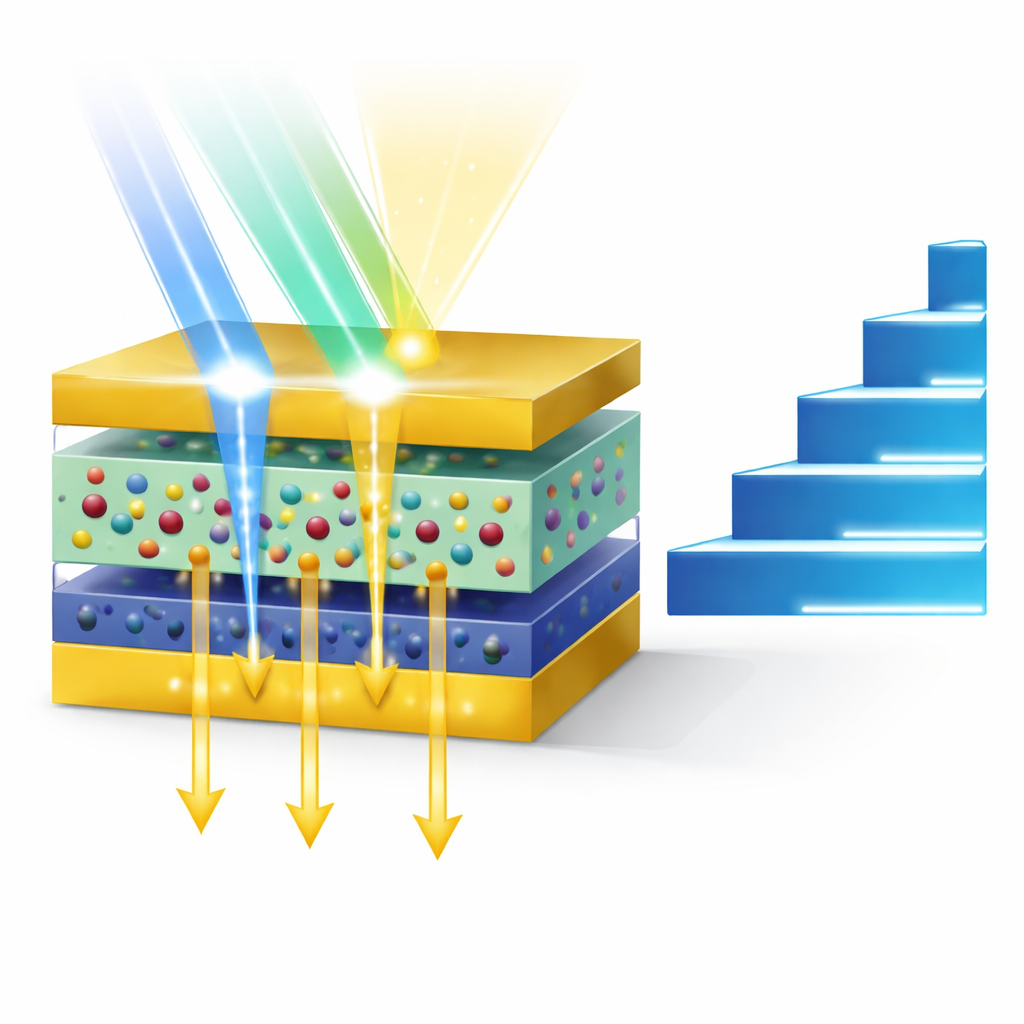

The authors introduce a transistor built from ultra-thin, layered crystals that solves this puzzle in a surprising way. The device uses a two-dimensional material called CdPS₃-Li as a special ion-conducting layer, stacked with another two-dimensional semiconductor, MoS₂, that carries electrical current. The CdPS₃-Li layer contains lithium ions that can move easily in some directions but not others, and it also hosts empty sites (vacancies) that can trap charge. When an electrical pulse is applied, lithium ions drift to the boundary with the MoS₂ layer and stay there, strongly changing how well the device conducts. When light shines on the device instead, charges created in the MoS₂ layer are pulled into those vacancies, leading to rich, time-dependent responses.

Turning Light and Electricity into Brain-Like Signals

Because of this design, the same transistor can naturally support two very different but complementary behaviors. Under electrical pulses, it offers many stable resistance levels that change smoothly and predictably, acting like adjustable weights in a neural network. These levels are non-volatile, meaning they remain even after the pulse ends, and the device can reliably step through dozens of distinct states without wearing out. Under light pulses, however, the current rises and then decays in a curved way that can be closely fitted by mathematical functions commonly used as activation curves in deep learning. By tuning the strength, duration, and number of light pulses, the researchers can shape how quickly the response fades, effectively “programming” different activation profiles in hardware.
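To make the idea of "dozens of distinct states" concrete, the sketch below snaps an ideal network weight to the nearest of a fixed number of levels, as a device with a limited set of stable resistance states would have to. The function name `quantize_weight`, the default of 32 levels, the weight range of -1 to 1, and the even spacing between levels are all assumptions for illustration; the article does not give these figures.

```python
def quantize_weight(weight, num_levels=32, w_min=-1.0, w_max=1.0):
    """Snap a weight to the nearest of num_levels evenly spaced values.

    Evenly spaced levels and the default range are assumptions,
    not measured device characteristics.
    """
    if num_levels < 2:
        raise ValueError("num_levels must be at least 2")
    # Keep the weight inside the range the device can represent.
    clipped = min(max(weight, w_min), w_max)
    # Distance between neighbouring levels.
    step = (w_max - w_min) / (num_levels - 1)
    # Index of the closest level, then convert back to a weight value.
    index = round((clipped - w_min) / step)
    return w_min + index * step


# A hypothetical trained weight and the value a 32-level device could store.
print(quantize_weight(0.3337))
```

The more levels a device can hold reliably, the smaller the gap between the ideal weight and the stored one.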

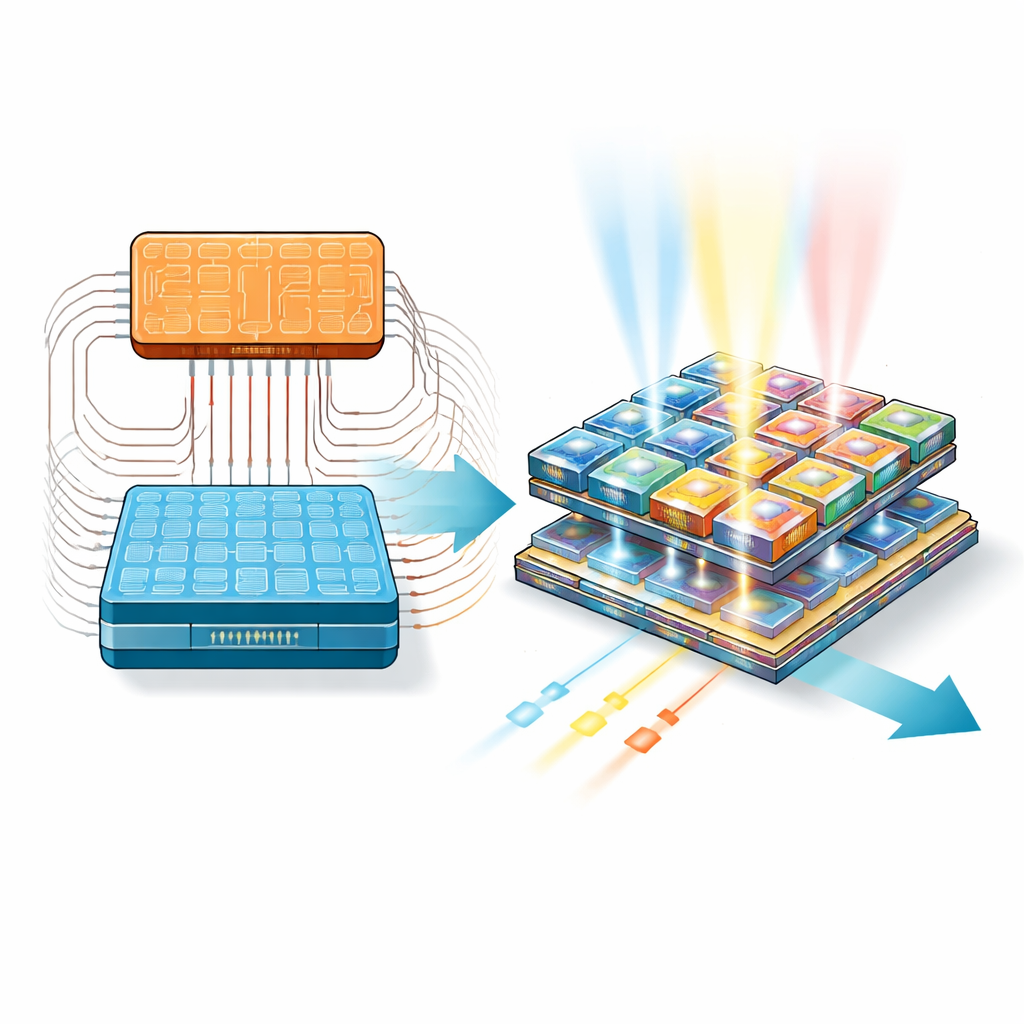

From Single Devices to Working AI Arrays

To show that this is more than a lab curiosity, the team built arrays of these transistors and wired them up to run parts of a real neural network. One array performs the multiply-and-accumulate step: the stored resistance of each device represents a weight, and applying voltages across a grid lets the currents naturally add up according to Ohm’s and Kirchhoff’s laws. A second, smaller array is exposed to carefully timed light pulses so that its fading currents implement the activation step. By coordinating these two modules with conventional control electronics, the researchers trained a network to recognize handwritten digits, reaching an accuracy above 97 percent. That figure is comparable to purely digital systems, but it is achieved with components that inherently combine memory and processing.
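The way a grid of devices adds up currents can be mimicked in software. The sketch below assumes an idealized array in which each device passes a current equal to its conductance times the applied voltage, and all devices in a column share one output wire. The function name `crossbar_currents` and the example values are hypothetical, and real arrays have wire resistance and device variation that this ignores.

```python
def crossbar_currents(voltages, conductances):
    """Return the total current on each column of an idealized device grid.

    voltages[i] is applied to row i; conductances[i][j] is the stored
    state of the device at row i, column j. Idealized model only.
    """
    num_cols = len(conductances[0])
    currents = [0.0] * num_cols
    for v, row in zip(voltages, conductances):
        for j, g in enumerate(row):
            # Each device contributes voltage times conductance; the shared
            # column wire sums the contributions from every row.
            currents[j] += v * g
    return currents


# Two input voltages driving a 2x2 grid of stored conductances.
print(crossbar_currents([1.0, 2.0], [[1.0, 0.0], [0.5, 2.0]]))  # [2.0, 4.0]
```

Each column current is a weighted sum of the inputs, so the physics of the array performs the multiply-and-accumulate step in one shot rather than one multiplication at a time.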

What This Means for Everyday Technology

To a non-specialist, the key message is that these new ion-based, light-sensitive transistors act much more like biological synapses than traditional silicon switches. They can remember connection strengths, respond differently to electrical versus optical cues, and do so with extremely low energy per operation. While they are not ready to replace the chips inside your phone tomorrow, they point toward a future in which AI hardware is denser, more efficient, and more brain-like. Such advances could eventually enable powerful learning systems that fit into small, battery-powered devices, bringing smarter sensing and decision-making closer to where data are generated.

Citation: Tong, B., Du, T., Du, J. et al. Multimodal ion-gated transistor based on 2D superionic conductor for in-memory computing in deep learning. Nat Commun 17, 4127 (2026). https://doi.org/10.1038/s41467-026-70587-w

Keywords: neuromorphic computing, in-memory computing, ion-gated transistor, 2D materials, deep learning hardware