Clear Sky Science

Validity and fairness of the PISA 2018 Global Competence assessment: an argument-based evaluation via explanatory item response models

Why this study matters for everyday life

Today’s teenagers grow up in a world where news, friends, and future jobs cross national borders. Schools are trying to prepare them to navigate different cultures, sift through online information, and work with people unlike themselves. In 2018 the Programme for International Student Assessment (PISA) attempted to measure this “global competence.” This study asks two simple but important questions: can we trust those test scores to show which students are genuinely globally competent, and are the scores fair to different groups of students?

Looking closely at a worldwide school test

PISA’s 2018 global competence test was taken by 15-year-olds in many countries and was treated as a key indicator of how well education systems prepare young people for an interconnected world. Yet researchers and educators have worried that global competence is hard to pin down and may be colored by Western views and cultural biases. This paper zooms in on the Canadian students who took the test and carefully inspects the questions and results. The author uses an argument-based approach to validity, which checks a chain of claims one link at a time: first whether answers are scored consistently, then whether scores would look similar across different test versions, then whether they agree with other signs of global competence, and finally whether they treat girls and boys fairly.

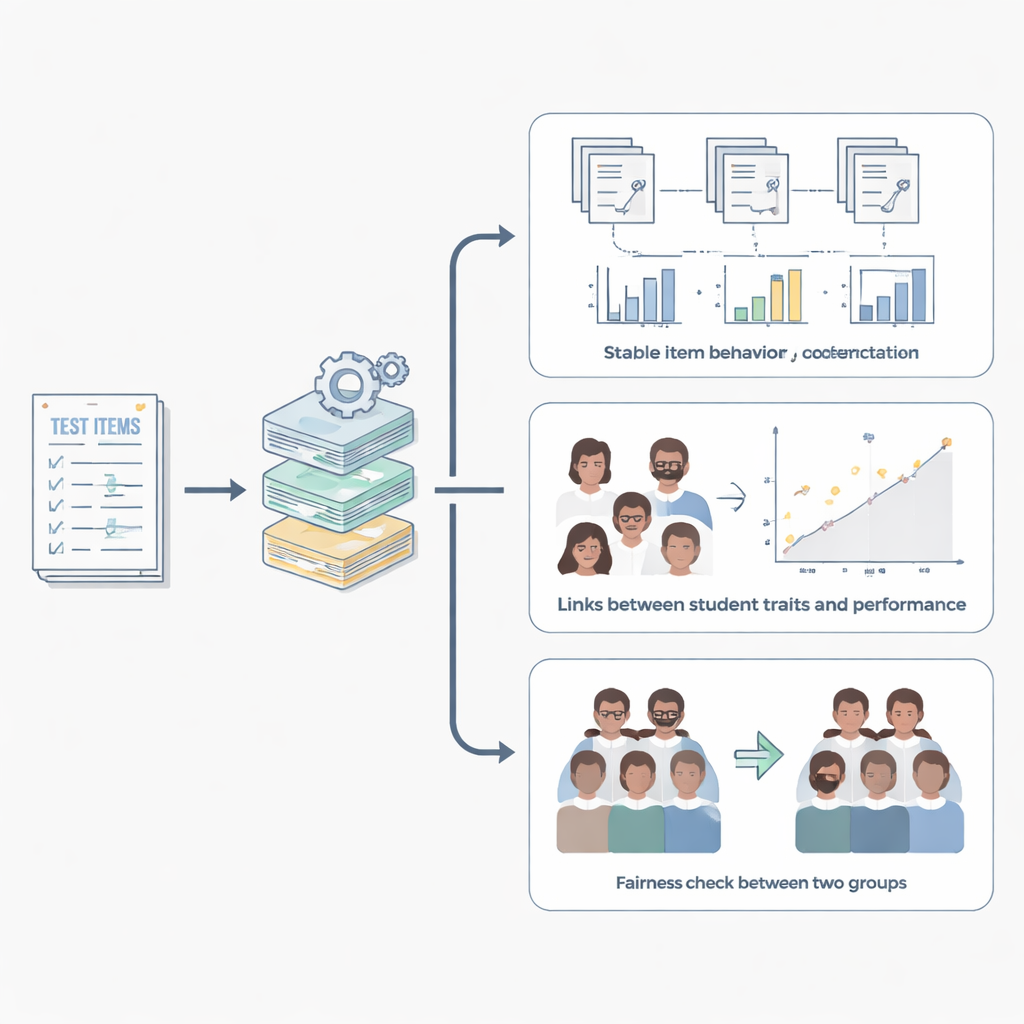

How the test and students were analyzed

The researcher used explanatory item response models, a modern family of statistical methods that looks not only at whether students get items right or wrong but also at how features of the test and features of the students affect the difficulty of each question. PISA’s global competence items are grouped into small story-based sets called “testlets” and are delivered in different booklets, or forms. The study analyzed each group of booklets separately, filled in small amounts of missing data with cautious imputation, and then combined the results across groups using meta-analysis. Alongside test scores, it drew on students’ answers to survey questions about their confidence in dealing with global issues, respect for people from other cultures, awareness of cross-cultural communication, and attitudes toward immigrants.
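For readers curious about the mechanics, the core idea of an explanatory item response model can be sketched in a few lines of Python. This is a simplified Rasch-type illustration, not the model specification used in the study: the function name, the covariate and item-feature inputs, and all numbers are hypothetical. A student’s chance of answering correctly rises with their ability and any person-level survey covariates, and falls as the item’s difficulty, shifted by features such as its testlet or booklet, goes up.

```python
import math


def explanatory_rasch_prob(theta, covariates, covariate_effects,
                           base_difficulty, feature_effects):
    """Probability of a correct answer under a simplified explanatory Rasch model.

    theta             -- the part of the student's ability not explained by covariates (logits)
    covariates        -- the student's survey scores (hypothetical inputs)
    covariate_effects -- one regression weight per covariate (hypothetical)
    base_difficulty   -- the item's baseline difficulty (logits)
    feature_effects   -- difficulty shifts from the item's testlet, booklet, etc.
    """
    # Person side: residual ability plus weighted person covariates.
    person_logit = theta + sum(g * x for g, x in zip(covariate_effects, covariates))
    # Item side: baseline difficulty plus shifts from item features.
    item_logit = base_difficulty + sum(feature_effects)
    # Logistic link turns the ability-minus-difficulty gap into a probability.
    return 1.0 / (1.0 + math.exp(-(person_logit - item_logit)))


# A student slightly above average, with a one-unit higher survey score,
# facing an item placed in a slightly harder booklet (all values made up).
print(round(explanatory_rasch_prob(0.5, [1.0], [0.3], 0.2, [0.1]), 3))  # prints 0.622
```

When every effect is zero and ability equals difficulty, the function returns exactly 0.5, the point at which an item is “matched” to the student; a positive covariate weight pushes the probability above that, and a harder booklet pulls it below.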

What the study found about score quality

The analysis showed that grouping questions into story-based testlets did not, by itself, distort how hard items appeared to be: once overall ability was taken into account, placing questions together in a shared scenario did not strongly sway results. Items did, however, tend to be slightly harder in some booklets than in others, so which form a student received could nudge their score up or down a little. At the student level, those who reported higher confidence in handling global issues, more respect for cultural diversity, and greater sensitivity to intercultural communication tended to perform better on the cognitive tasks, and these links were generally stable across booklets. Not every related trait behaved as expected: some measures of global-mindedness or awareness of world issues had weak or even slightly negative connections with test performance, underscoring how complex and multi-layered global competence is.
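The study combined results from separate booklet groups with meta-analysis. One common way to do that is a fixed-effect inverse-variance average, sketched below together with Cochran’s Q and the I² statistic as rough checks on whether an effect is stable across booklets. Whether the study used this particular variant (rather than, say, a random-effects model) is an assumption here, and the function name and example numbers are hypothetical.

```python
import math


def pool_fixed_effect(estimates, std_errors):
    """Fixed-effect inverse-variance meta-analysis of one coefficient.

    estimates  -- the coefficient estimated separately in each booklet group
    std_errors -- the matching standard errors
    Returns (pooled estimate, pooled standard error, Cochran's Q, I-squared).
    """
    # More precise estimates (smaller standard errors) get more weight.
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * b for w, b in zip(weights, estimates)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    # Cochran's Q: weighted spread of the group estimates around the pooled value.
    q = sum(w * (b - pooled) ** 2 for w, b in zip(weights, estimates))
    df = len(estimates) - 1
    # I-squared: share of that spread beyond what sampling error alone would produce.
    i_squared = max(0.0, (q - df) / q) if q > 0 else 0.0
    return pooled, pooled_se, q, i_squared


# Hypothetical effect of one survey scale, estimated in three booklet groups.
pooled, se, q, i2 = pool_fixed_effect([0.30, 0.25, 0.35], [0.05, 0.06, 0.05])
print(f"pooled={pooled:.3f} se={se:.3f} Q={q:.2f} I2={i2:.2f}")
```

With these made-up numbers, Q falls below its degrees of freedom, so I² is zero: the pattern one would expect for an effect that is stable across booklets.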

Checking fairness between girls and boys

The study also examined whether particular questions gave girls or boys an unfair advantage once overall ability was controlled. For most items, gender differences were tiny and inconsistent, meaning the questions behaved similarly for both groups. A handful of questions did show moderate or large differences, more often favoring girls and occasionally boys. Although few, these differences were consistent enough across test forms to warrant closer review. Crucially, there was no sign that the test as a whole was stacked against either gender, though some individual questions could be refined or replaced in future versions.
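How big is a “moderate” or “large” difference? Gender differential item functioning (DIF) is often expressed as a gender-by-item effect on the log-odds scale after controlling for ability, and items are then sorted by size. The sketch below assumes one widely used convention, the ETS A/B/C categories converted to logits (roughly 0.43 and 0.64, from the ETS delta cutoffs of 1.0 and 1.5 divided by 2.35). The study’s own cutoffs may differ, and the sketch skips the significance tests that the ETS rules also require. The sign convention, function names, and example values are hypothetical.

```python
def classify_dif(logit_dif, moderate=0.43, large=0.64):
    """Label one item's DIF size and direction (assumed: positive favours girls)."""
    size = abs(logit_dif)
    if size < moderate:
        label = "negligible"
    elif size < large:
        label = "moderate"
    else:
        label = "large"
    if logit_dif > 0:
        favours = "girls"
    elif logit_dif < 0:
        favours = "boys"
    else:
        favours = "neither"
    return label, favours


def consistent_across_booklets(dif_by_booklet, moderate=0.43):
    """Flag an item whose DIF is at least moderate, with the same sign, in every booklet."""
    if not dif_by_booklet:
        return False
    all_positive = all(d >= moderate for d in dif_by_booklet)
    all_negative = all(d <= -moderate for d in dif_by_booklet)
    return all_positive or all_negative


# Hypothetical DIF estimates for three items.
print(classify_dif(0.05))
print(classify_dif(-0.50))
print(consistent_across_booklets([0.70, 0.66, 0.81]))
```

Requiring the same sign and size in every booklet mirrors the idea that an item deserves review only when its advantage for one group recurs across test forms rather than appearing once by chance.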

What this means for using global competence scores

For readers outside the testing world, the bottom line is that PISA’s 2018 global competence scores for Canadian students are mostly sound: they capture a real ability connected to how young people think about and respond to global and intercultural situations, and they do so in broadly fair ways. At the same time, the study highlights that test design details—such as which booklet a student receives and how survey traits are defined—can subtly shape results. It shows that measuring something as rich as global competence is possible but demands constant attention to how questions are written, how they are grouped, and how they work for different kinds of students.

Citation: Yavuz, E. Validity and fairness of the PISA 2018 Global Competence assessment: an argument-based evaluation via explanatory item response models. Humanit Soc Sci Commun 13, 570 (2026). https://doi.org/10.1057/s41599-026-06979-6

Keywords: global competence, PISA 2018, educational assessment, test fairness, item response modeling