A generalizable eye disease detection method based on Zero-Shot Learning

Why early eye warnings matter

Many serious eye problems begin with changes so subtle that even trained specialists can struggle to see them. Mild diabetic retinopathy, an early complication of diabetes, is one such silent warning sign. Catching it early can prevent vision loss, but detection demands careful inspection of retinal photographs, and training today’s artificial intelligence (AI) systems to do that job demands a huge number of expertly labeled images. This study introduces a new kind of AI that can learn to spot early disease even when doctors have never labeled any examples of that specific condition, potentially opening the door to faster, more affordable eye screening worldwide.

A new way to teach machines without answers

The researchers build on a concept called zero-shot learning, where an AI system learns to recognize something new without having seen labeled examples of it before. Instead of memorizing disease labels, the system mimics how clinicians reason: it looks for related diseases that share visual patterns and transfers what it learns from them. Here, the team focused on mild diabetic retinopathy (DR1) but trained their method without a single DR1 image labeled as such. To make that possible, they assembled a massive resource of retinal photographs, called the LCFP-14M dataset, integrating more than a million images and eleven eye disease categories drawn from many public databases. This rich mix of images provides the visual “experience” from which the AI can infer patterns of disease.

Finding a look‑alike disease

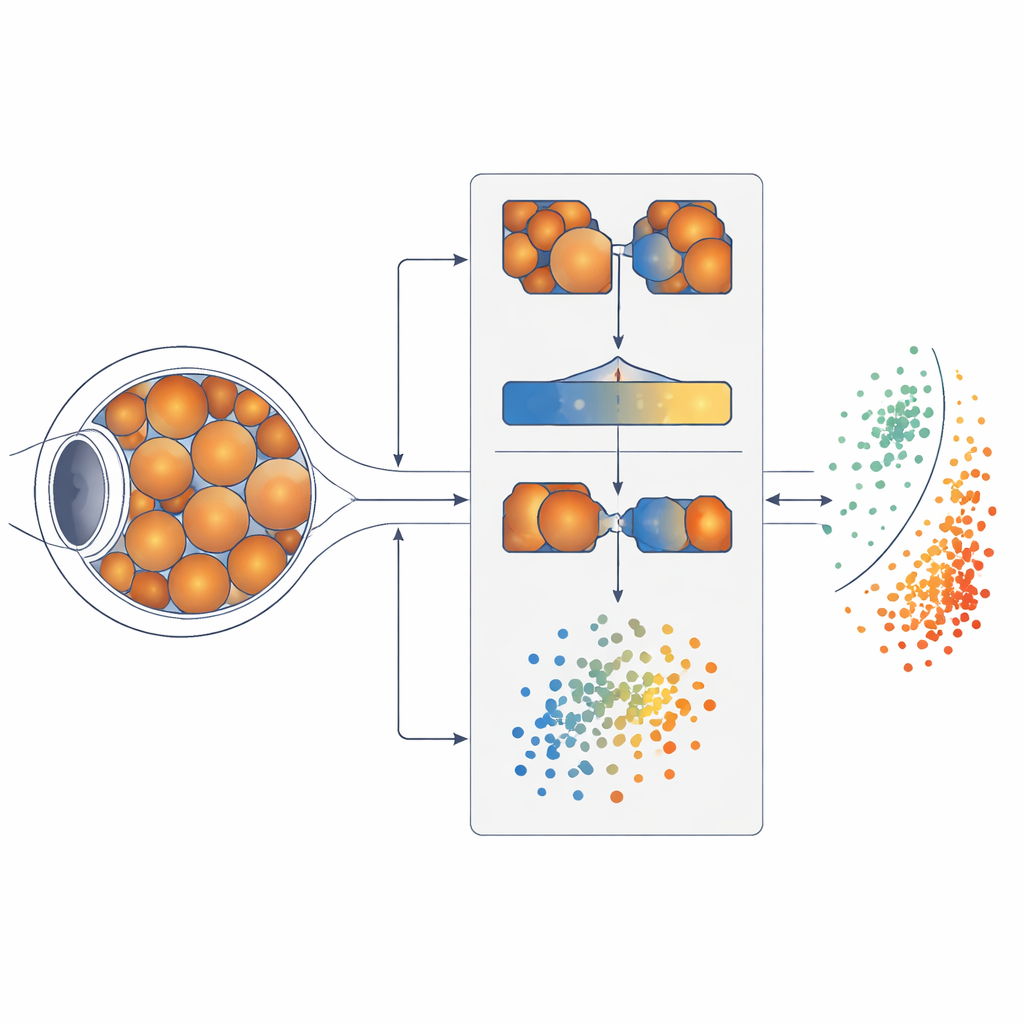

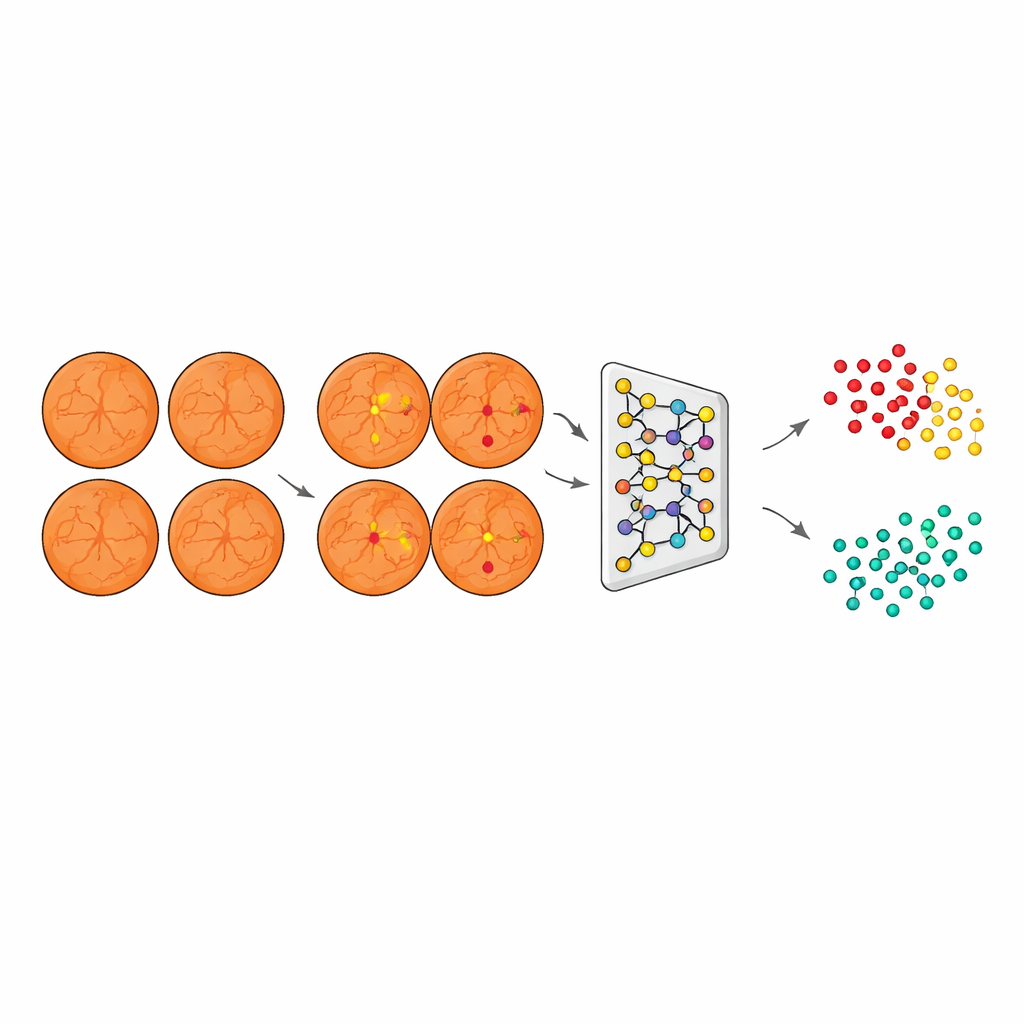

To decide where to borrow knowledge from, the system first measures how similar different eye diseases look to one another. The team used a Siamese neural network, a pair of identical AI models that inspect two retinal images at a time and learn to say whether they likely belong to the same disease. By comparing thousands of image pairs, the model built a map of how closely eleven diseases resemble mild diabetic retinopathy. It discovered that degenerative myopia, a condition involving stretching and thinning of the back of the eye, produced retinal images most strongly correlated with those early diabetic changes. In human terms, degenerative myopia became the “closest cousin” that could teach the system what to watch for.
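
For readers who think in code, the ranking idea can be sketched in a few lines. This is only an illustration under stated assumptions. It presumes each image has already been turned into a numeric embedding (in the study that job belongs to the trained Siamese network, which is not reproduced here). It also uses cosine similarity averaged over image pairs as a stand-in for the network's learned similarity score. The vectors, the candidate disease names other than degenerative myopia, and the function names are all hypothetical.

```python
import math
from itertools import product


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm


def mean_pair_similarity(target, candidate):
    """Average cosine similarity over every (target, candidate) image pair."""
    pairs = list(product(target, candidate))
    return sum(cosine(u, v) for u, v in pairs) / len(pairs)


def rank_diseases(target, candidates):
    """Return (disease, score) pairs sorted from most to least similar."""
    scores = {
        name: mean_pair_similarity(target, embeddings)
        for name, embeddings in candidates.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Toy embeddings, invented for illustration (not data from the study).
dr1 = [[0.9, 0.1, 0.2], [0.8, 0.2, 0.1]]
candidates = {
    "degenerative_myopia": [[0.85, 0.15, 0.25], [0.9, 0.2, 0.1]],
    "glaucoma": [[0.1, 0.9, 0.3], [0.2, 0.8, 0.4]],
    "cataract": [[0.2, 0.1, 0.9], [0.1, 0.3, 0.8]],
}

ranking = rank_diseases(dr1, candidates)
closest = ranking[0][0]  # name of the most similar "teacher" disease
```

With these made-up vectors the top-ranked candidate is the one whose embeddings point in nearly the same direction as the target's, which is the role degenerative myopia played in the study.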

Teaching the system what tiny lesions look like

Once a suitable “teacher” disease was found, the next task was to show the AI which specific spots on the retina matter most for early diabetic damage. Using a second model called U‑Net, the researchers trained a segmentation system on established datasets where experts had marked three key signs: tiny bulges in blood vessels known as microaneurysms, small hemorrhages, and pale cotton wool spots. Although not all are unique to the mild stage, together they trace an early trail of damage in the retinal circulation. U‑Net learned to highlight just these lesions on images, turning raw photographs into focused maps where important warning signs stand out while less-relevant details fade into the background.
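
The "focused map" idea can be pictured with a small sketch. The article does not say exactly how the segmentation output is combined with the photograph, so the approach below is an assumption: lesion pixels keep their original brightness and everything else is dimmed by a hypothetical `background_gain` factor. The tiny 3×3 image and mask are invented, and the mask stands in for what a trained U‑Net would produce.

```python
def emphasize_lesions(image, mask, background_gain=0.2):
    """Keep lesion pixels at full intensity and dim all other pixels.

    image: 2D list of pixel intensities in [0, 1]
    mask:  2D list of 0/1 flags, 1 where a lesion was segmented
    background_gain: assumed dimming factor for non-lesion pixels
    """
    same_rows = len(image) == len(mask)
    same_cols = all(len(r) == len(m) for r, m in zip(image, mask))
    if not (same_rows and same_cols):
        raise ValueError("image and mask must have the same shape")
    return [
        [pixel if flag else pixel * background_gain
         for pixel, flag in zip(row, mask_row)]
        for row, mask_row in zip(image, mask)
    ]


# Toy grayscale patch and a hypothetical lesion mask.
image = [
    [0.5, 0.6, 0.4],
    [0.7, 0.9, 0.3],
    [0.2, 0.8, 0.5],
]
mask = [
    [0, 0, 0],
    [0, 1, 0],
    [0, 1, 0],
]

focused = emphasize_lesions(image, mask)
```

In the result, the two masked pixels in the middle column are unchanged while the surrounding pixels are scaled down, so the marked lesions dominate whatever model looks at the image next.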

Clustering unseen disease into healthy and not‑healthy

Armed with this lesion-focused view, the system then processed images from patients with degenerative myopia, but with diabetic-style lesions emphasized and disease-specific artifacts suppressed. A third model, based on a ResNet network combined with an agglomerative clustering algorithm, converted these segmented images into compact numerical descriptions and grouped them into two natural clusters. Crucially, the algorithm did this without any labels telling it which eyes were healthy or diseased; it simply organized images based on shared visual patterns. When the team later compared these clusters with true clinical labels on an independent test set, one cluster aligned with DR1 and the other with non-diseased eyes, showing that the AI had effectively “discovered” mild diabetic retinopathy on its own.
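
The grouping step can be illustrated with a bare-bones agglomerative clustering routine. This is a sketch, not the authors' implementation: the feature vectors below are invented two-number stand-ins for what a ResNet would extract, and average linkage with Euclidean distance is an assumption, since the article does not name the linkage rule.

```python
import math


def euclidean(u, v):
    """Straight-line distance between two feature vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def average_linkage(points, c1, c2):
    """Mean distance between all members of two clusters (index lists)."""
    total = sum(euclidean(points[i], points[j]) for i in c1 for j in c2)
    return total / (len(c1) * len(c2))


def agglomerative(points, n_clusters=2):
    """Start with one cluster per point and repeatedly merge the closest
    pair until n_clusters remain. Returns one cluster label per point."""
    clusters = [[i] for i in range(len(points))]
    while len(clusters) > n_clusters:
        best = None  # (distance, index_a, index_b) of the closest pair
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                d = average_linkage(points, clusters[a], clusters[b])
                if best is None or d < best[0]:
                    best = (d, a, b)
        _, a, b = best
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]  # b > a, so index a is still valid
    labels = [0] * len(points)
    for label, members in enumerate(clusters):
        for i in members:
            labels[i] = label
    return labels


# Toy feature vectors: three images near one corner, three near another.
features = [
    [0.10, 0.20], [0.15, 0.25], [0.12, 0.18],
    [0.90, 0.80], [0.85, 0.90], [0.95, 0.85],
]
labels = agglomerative(features)
```

No labels go in, yet the first three and last three points end up in separate groups. Deciding which group means "diseased" is a separate step, which the study did by comparing the clusters with clinical labels afterward.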

How well the new approach stacks up

To judge whether this zero‑shot system was practically useful, the researchers compared it to more conventional “few‑shot” deep learning models that were allowed to see a small number of labeled examples. They tested popular architectures such as ResNet, VGG, MobileNet, and AlexNet, all trained on limited amounts of labeled data and then evaluated on an external dataset called EyePACS. The zero‑shot model, despite never being shown labeled DR1 images during training, reached an accuracy of about 83% and a high area‑under‑the‑curve score, outperforming most of these supervised competitors—especially in precision, meaning the eyes it flagged as diseased were usually truly at risk. Ablation experiments, where individual components were removed, confirmed that both the disease‑similarity step and the lesion‑segmentation step were essential for this strong performance.
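
The three scores mentioned here (accuracy, precision, and area under the curve) are simple to compute once predictions are in hand. The sketch below uses invented labels and scores, not results from the study, and a 0.5 decision threshold that is purely an assumption. AUC is computed as the fraction of diseased/healthy pairs in which the diseased eye receives the higher score, with ties counted as half.

```python
def confusion(y_true, y_pred):
    """Return counts of true/false positives and negatives."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, tn, fp, fn


def accuracy(y_true, y_pred):
    """Share of all eyes that were classified correctly."""
    tp, tn, fp, fn = confusion(y_true, y_pred)
    return (tp + tn) / (tp + tn + fp + fn)


def precision(y_true, y_pred):
    """Share of flagged eyes that were truly diseased."""
    tp, _, fp, _ = confusion(y_true, y_pred)
    return tp / (tp + fp) if (tp + fp) else 0.0


def auc(y_true, scores):
    """Probability that a random diseased eye outscores a random healthy one."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Invented example: 1 = diseased, 0 = healthy; scores are model confidences.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
scores = [0.9, 0.8, 0.4, 0.3, 0.6, 0.2, 0.1, 0.7]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # assumed 0.5 threshold

acc = accuracy(y_true, y_pred)    # 0.75
prec = precision(y_true, y_pred)  # 0.75
area = auc(y_true, scores)        # 0.9375
```

Precision is the number that matters most for the claim in this section: it measures how often an eye flagged as diseased really is, independent of how many diseased eyes were missed.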

What this means for future eye care

In everyday terms, this work shows that an AI can learn to spot early diabetic eye damage by “reasoning by analogy” from related diseases and expert‑defined visual clues, rather than relying on thousands of hand‑labeled examples of the exact condition of interest. That could be a game‑changer for screening programs in parts of the world where expert labeling is expensive or rare, or for newly recognized eye diseases that lack large curated datasets. While the method still faces challenges—such as extending beyond fundus photos to other imaging technologies and making its decisions more transparent to doctors and patients—it points toward a future where machines help clinicians catch subtle eye disease earlier, using far less labeled data than today’s systems require.

Citation: Pan, C., Wang, Y., Jiang, Y. et al. A generalizable eye disease detection method based on Zero-Shot Learning. Commun Med 6, 249 (2026). https://doi.org/10.1038/s43856-026-01439-3

Keywords: diabetic retinopathy, retinal imaging, zero-shot learning, medical AI, eye disease screening