Clear Sky Science

A domain-adaptive deep contrastive network for magnetic resonance imaging-driven bladder cancer classification

Why smarter scans matter

Bladder cancer is common and can be life-threatening, but not all bladder tumors behave the same way. Some stay on the surface of the bladder wall, while others burrow into the muscle and require far more aggressive treatment. Doctors use magnetic resonance imaging (MRI) to help judge how deep a tumor has grown, yet these images can be hard to read and vary from hospital to hospital. This study introduces a new artificial intelligence (AI) system designed to read bladder MRI scans more consistently and accurately across different hospitals and scanners.

Two kinds of tumors, two very different paths

For patients, a key question is whether their tumor is confined to the inner lining of the bladder (non-muscle-invasive) or has invaded the muscle layer (muscle-invasive). The answer shapes everything from surgery type to chemotherapy and radiation plans. Today, doctors rely on a mix of endoscopy, tissue sampling, and imaging to decide, but MRI scans can show tumors with fuzzy borders, odd shapes, and small sizes that make judgment difficult. On top of that, scanners from different manufacturers and hospitals produce images with slightly different appearances, which can confuse standard computer models.

Building an AI that travels well

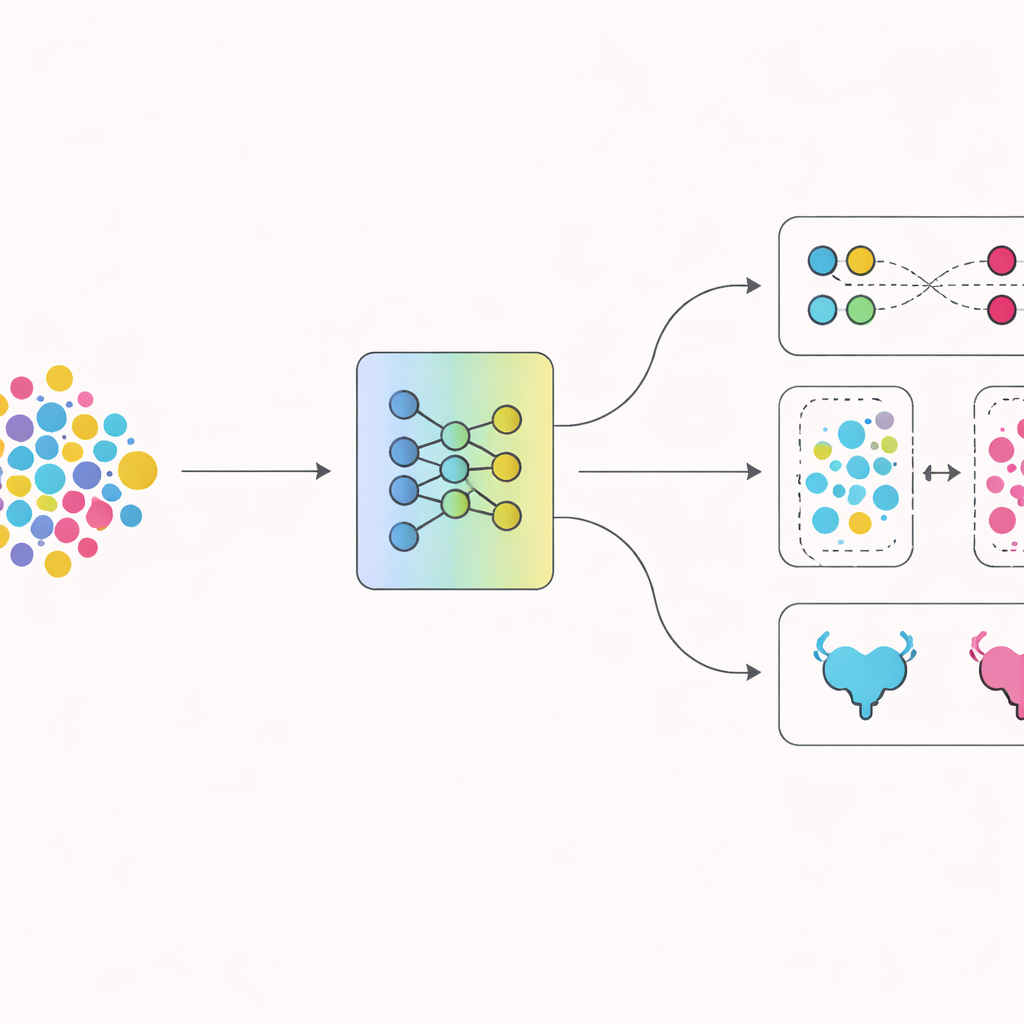

The authors developed a system called DADCNet that tries to overcome these hurdles. They trained it on MRI scans from 279 bladder cancer patients treated at four different medical centers, each using different scanner brands and settings. Instead of learning only from one hospital and then being tested on another, the network is fed images from both the "home" centers it learns from and the "new" centers it will encounter later. Inside the model, a feature-extraction stage transforms raw images into numerical patterns, while a special "domain adaptation" module nudges those patterns to look similar across hospitals. This helps the AI focus on the medical content of the image rather than quirks of the scanner.
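To make the idea of "nudging patterns to look similar across hospitals" concrete, here is a minimal sketch of one common way to measure the gap between two sets of features: the maximum mean discrepancy (MMD). The article does not say which alignment method DADCNet uses, so MMD is an assumption chosen for illustration, and the names `rbf_kernel`, `mmd2`, `home`, `new_shifted`, and `new_aligned` are hypothetical. The features are random numbers standing in for what a real feature extractor would produce.

```python
import numpy as np

def rbf_kernel(a, b, gamma):
    # Pairwise squared Euclidean distances between rows of a and rows of b.
    sq = (a ** 2).sum(axis=1)[:, None] + (b ** 2).sum(axis=1)[None, :] - 2.0 * a @ b.T
    # Clip tiny negative values caused by floating-point rounding.
    return np.exp(-gamma * np.maximum(sq, 0.0))

def mmd2(source, target, gamma=0.1):
    """Biased estimate of the squared MMD between two feature sets.

    Illustrative stand-in for a domain-alignment penalty; not necessarily
    the method used in the paper.
    """
    k_ss = rbf_kernel(source, source, gamma).mean()
    k_tt = rbf_kernel(target, target, gamma).mean()
    k_st = rbf_kernel(source, target, gamma).mean()
    return k_ss + k_tt - 2.0 * k_st

# Synthetic 8-dimensional "features" (assumed data, not from the study).
rng = np.random.default_rng(0)
home = rng.normal(0.0, 1.0, size=(200, 8))         # features from a "home" center
new_shifted = rng.normal(1.5, 1.0, size=(200, 8))  # "new" center with a scanner-like shift
new_aligned = rng.normal(0.0, 1.0, size=(200, 8))  # "new" center after ideal alignment

gap_shifted = mmd2(home, new_shifted)
gap_aligned = mmd2(home, new_aligned)
print(f"gap before alignment: {gap_shifted:.3f}")
print(f"gap after alignment:  {gap_aligned:.3f}")
```

In a training loop, a penalty of this kind would be added to the classification loss so that the feature extractor is rewarded for shrinking the gap between centers.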

Teaching the system to see clear differences

A second innovation lies in how the network is trained to separate the two disease types. Beyond simply rewarding correct answers, the authors use a technique that encourages images of the same kind of tumor to cluster tightly together in the model’s internal space, while pushing apart images from different tumor types. Over time, this contrast-based training helps the model carve out a cleaner boundary between non-muscle-invasive and muscle-invasive cancers. Visualization tools confirm that the internal representations of the two groups, which start out tangled together, gradually pull apart into distinct clusters as training progresses.
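The pull-together, push-apart idea can be sketched with a supervised contrastive loss. This is a generic formulation under stated assumptions, not necessarily the exact loss used in DADCNet; the function name, the `temperature` value, and the toy two-dimensional features are all hypothetical.

```python
import numpy as np

def supervised_contrastive_loss(features, labels, temperature=0.1):
    """Generic supervised contrastive loss (illustrative sketch).

    Lower values mean same-label samples sit close together and
    different-label samples sit far apart in feature space.
    """
    # Normalize so that dot products are cosine similarities.
    z = features / np.linalg.norm(features, axis=1, keepdims=True)
    sim = z @ z.T / temperature
    n = len(labels)
    not_self = ~np.eye(n, dtype=bool)
    # Exclude each sample's similarity with itself from the softmax.
    sim = np.where(not_self, sim, -np.inf)
    row_max = sim.max(axis=1, keepdims=True)
    log_prob = sim - row_max - np.log(np.exp(sim - row_max).sum(axis=1, keepdims=True))
    # Positives: other samples that share the anchor's label.
    positives = (labels[:, None] == labels[None, :]) & not_self
    pos_count = positives.sum(axis=1)
    per_anchor = -np.where(positives, log_prob, 0.0).sum(axis=1) / np.maximum(pos_count, 1)
    # Average over anchors that have at least one positive.
    return per_anchor[pos_count > 0].mean()

# Toy features forming two tight groups (assumed data).
features = np.array([[1.0, 0.1], [1.0, -0.1], [0.9, 0.0],
                     [-1.0, 0.1], [-1.0, -0.1], [-0.9, 0.0]])
separated = np.array([0, 0, 0, 1, 1, 1])  # labels match the two groups
tangled = np.array([0, 1, 0, 1, 0, 1])    # labels mixed across the groups

loss_separated = supervised_contrastive_loss(features, separated)
loss_tangled = supervised_contrastive_loss(features, tangled)
print(loss_separated < loss_tangled)
```

When the labels line up with the two groups the loss is small; when the tumor types are tangled within the groups it is much larger, which is the signal that drives the clusters apart during training.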

Putting the new approach to the test

The researchers compared their system with several well-known deep learning models that are widely used for medical imaging. Using repeated tests on different splits of the data, DADCNet achieved higher accuracy and a better balance between correctly flagging muscle-invasive tumors and avoiding false alarms. When either part of the design was switched off (the cross-center adaptation or the contrast-based training), performance dropped and became less stable, underscoring that both pieces mattered. Additional experiments mimicking real-world use, where the AI is trained on some hospitals and tested on a completely different one, showed that DADCNet handled these shifts more gracefully than other models.
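The evaluation described here can be sketched in two pieces: holding out one hospital at a time, and scoring the balance between catching muscle-invasive tumors (sensitivity) and avoiding false alarms (specificity). The code below is a hedged illustration only. The center names, the toy labels, and the convention that 1 means muscle-invasive are assumptions, and no model is trained.

```python
import numpy as np

def leave_one_center_out(centers):
    """Yield (held_out_center, train_indices, test_indices) for each center."""
    for held_out in np.unique(centers):
        test_mask = centers == held_out
        yield held_out, np.flatnonzero(~test_mask), np.flatnonzero(test_mask)

def sensitivity_specificity(y_true, y_pred):
    """Assumed convention: 1 = muscle-invasive, 0 = non-muscle-invasive."""
    tp = np.sum((y_true == 1) & (y_pred == 1))
    fn = np.sum((y_true == 1) & (y_pred == 0))
    tn = np.sum((y_true == 0) & (y_pred == 0))
    fp = np.sum((y_true == 0) & (y_pred == 1))
    return tp / (tp + fn), tn / (tn + fp)

# Hypothetical center assignment for eight scans from four hospitals.
centers = np.array(["A", "A", "B", "B", "C", "C", "D", "D"])
folds = list(leave_one_center_out(centers))
for held_out, train_idx, test_idx in folds:
    print(f"test on center {held_out}: train={train_idx}, test={test_idx}")

# Toy predictions (assumed) to show how the two scores are computed.
y_true = np.array([1, 1, 0, 0, 1, 0])
y_pred = np.array([1, 0, 0, 0, 1, 1])
sens, spec = sensitivity_specificity(y_true, y_pred)
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}")
```

Splitting by hospital rather than by patient is what makes the test a fair stand-in for deployment at a center the model has never seen.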

Seeing where the AI "looks"

To reassure clinicians, the team probed which regions of each MRI scan the network relies on when making its predictions. Heat maps revealed that the model focuses mainly on the tumor itself and the nearby muscle layer, the same regions radiologists inspect when deciding whether the cancer has invaded muscle. This alignment between human and machine attention, together with the clear separation of tumor types in the model’s internal space, suggests that the system is not just memorizing superficial patterns but is learning medically meaningful clues.

What this means for patients

In plain terms, this work shows that carefully designed AI can read bladder MRI scans with high accuracy, even when those scans come from different hospitals and machines. By learning to ignore technical differences between centers and to sharpen the contrast between early and advanced disease, DADCNet could support doctors in making more confident, consistent decisions about how aggressively to treat bladder cancer. Although larger studies and broader testing are still needed, this approach points toward future imaging tools that travel well from hospital to hospital and help ensure that patients receive the right level of care based on a more reliable reading of their scans.

Citation: Huang, J., Hu, H., Sun, M. et al. A domain-adaptive deep contrastive network for magnetic resonance imaging-driven bladder cancer classification. npj Digit. Med. 9, 305 (2026). https://doi.org/10.1038/s41746-026-02499-4

Keywords: bladder cancer MRI, muscle invasive bladder cancer, medical imaging AI, domain adaptation, contrastive learning