Clear Sky Science

Real-time reconstruction of 3D bone models via very-low-dose protocols

Sharper surgery with gentler imaging

Every year, tens of millions of people undergo orthopedic procedures on their bones and joints. Surgeons increasingly rely on custom 3D models of a patient’s bones to plan operations and guide precise cuts, but today those models usually require a bulky CT scanner, high radiation doses, and hours of expert labor. This study introduces a faster, gentler way: using just two low-dose X-ray images and artificial intelligence to rebuild a detailed 3D picture of the knee in about half a minute, potentially bringing high-end planning and guidance to more operating rooms and patients.

Why current bone scans fall short

Traditional 3D bone models start with a CT scan, which creates hundreds of X-ray slices that computers assemble into a full volume. While powerful, this approach carries three big drawbacks. First, it bathes patients in relatively high radiation, a serious concern for children, pregnant women, and anyone needing repeated scans. Second, CT machines are large, expensive, and awkward to fit into crowded operating rooms; setting them up during surgery can take 20–30 minutes and disrupt staff. Third, once the scan is done, experts still spend hours tracing the outline of each bone on specialized software to build a usable 3D model. Altogether, that makes CT-based models great for select cases, but impractical for real-time use in the middle of an operation.

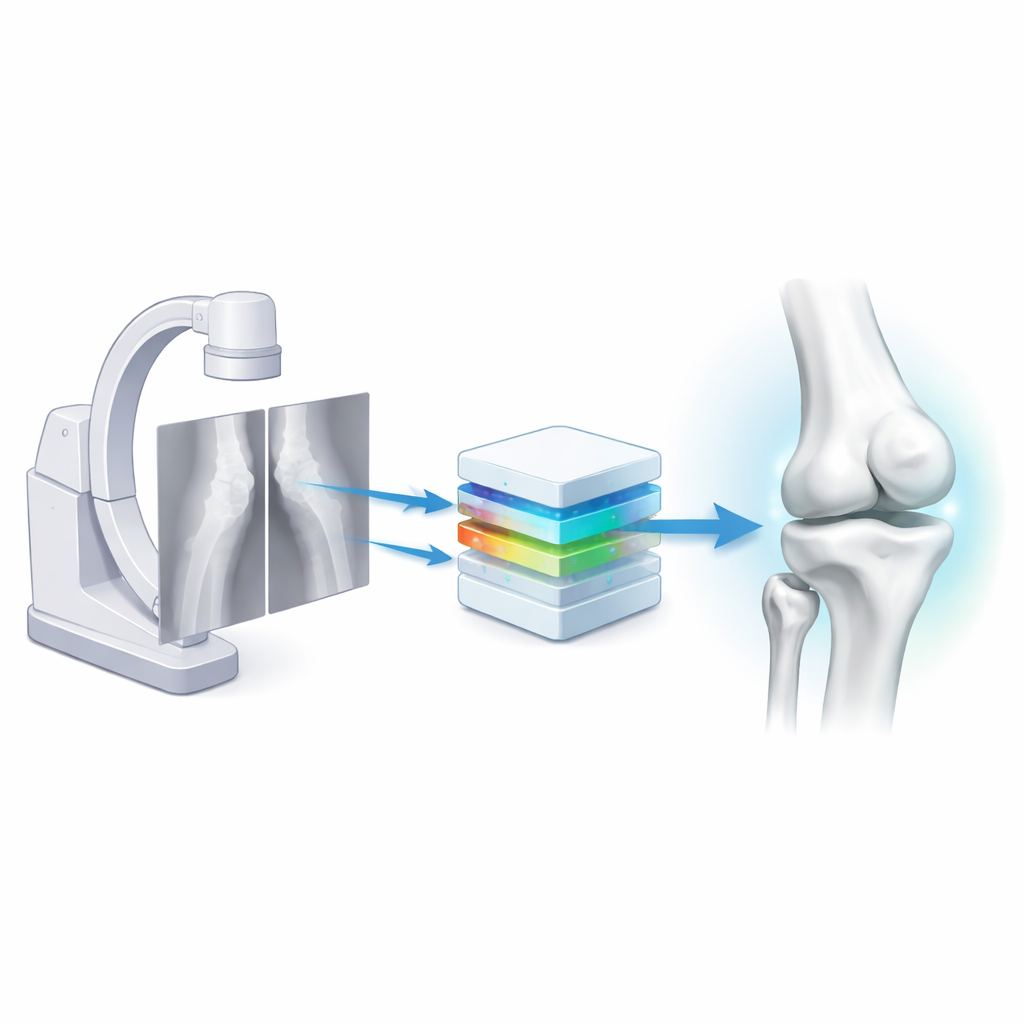

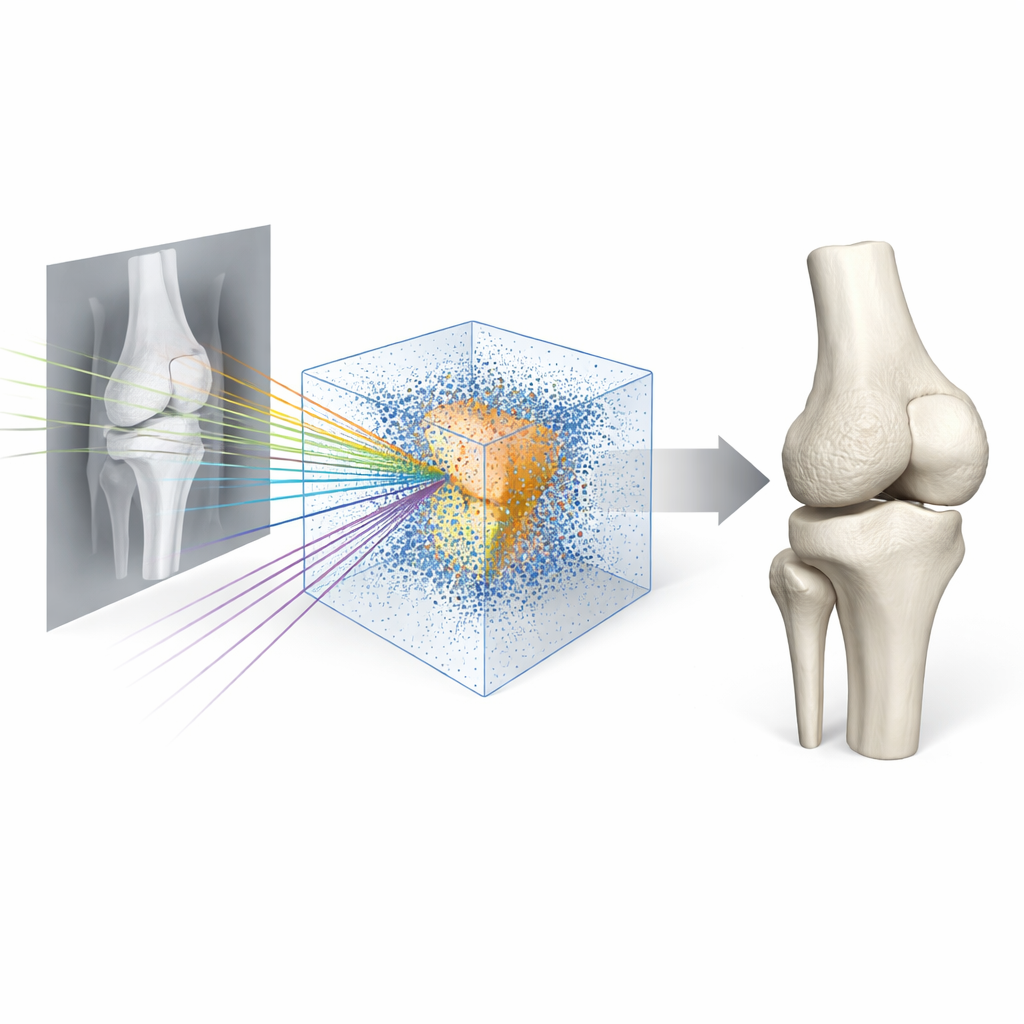

Turning two simple X-rays into a full 3D knee

The researchers set out to replace this slow pipeline with a nimble one based on biplanar X-rays—two standard views of the knee taken at right angles, using common fluoroscopy equipment like a C-arm. They built a deep-learning system called Semi-Supervised Reconstruction with Knowledge Distillation (SSR-KD). Instead of directly predicting a visible surface, the system learns an “occupancy field”: for any point in 3D space, it estimates whether that point lies inside or outside each of the four main knee bones—the femur, tibia, fibula, and patella. After the network has filled this invisible 3D grid with inside–outside decisions, a standard graphics algorithm converts the field into smooth 3D bone surfaces. On modern hardware, the entire process—from receiving the two X-rays to producing four detailed bone models—takes roughly 25 seconds.
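
The occupancy-field idea can be sketched in a few lines. This is a toy illustration, not the authors' code: `toy_occupancy` is a hypothetical stand-in for the trained network (here just a soft sphere), and the boundary search is a simplified substitute for a surface-extraction algorithm such as marching cubes, which the summary above does not name.

```python
import math

def toy_occupancy(x, y, z, radius=0.6):
    """Hypothetical stand-in for the trained network.

    Returns a value in [0, 1]: close to 1 inside a sphere, close to 0 outside.
    """
    dist = math.sqrt(x * x + y * y + z * z)
    return 1.0 / (1.0 + math.exp(20.0 * (dist - radius)))

def sample_grid(occupancy_fn, n=16, threshold=0.5):
    """Query the field at n^3 points in [-1, 1]^3; return indices judged 'inside'."""
    inside = set()
    for i in range(n):
        for j in range(n):
            for k in range(n):
                x, y, z = (-1.0 + 2.0 * t / (n - 1) for t in (i, j, k))
                if occupancy_fn(x, y, z) >= threshold:
                    inside.add((i, j, k))
    return inside

def boundary_voxels(inside):
    """Keep inside voxels that touch at least one outside voxel (a crude surface)."""
    offsets = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]
    return {
        v for v in inside
        if any((v[0] + dx, v[1] + dy, v[2] + dz) not in inside for dx, dy, dz in offsets)
    }

inside = sample_grid(toy_occupancy)
surface = boundary_voxels(inside)
print(len(inside), "inside voxels,", len(surface), "on the surface")
```

In a real system the field would be queried once per bone, and a proper meshing step would turn the inside/outside boundary into a smooth triangle surface rather than a set of voxels.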

Teaching the AI with limited expert time

High-quality training data is usually the bottleneck for medical AI. Here, manually outlining a single leg’s bones in CT took experts about four hours, so doing that for hundreds of cases would be unrealistic. The team instead collected 605 knee CT scans but painstakingly labeled just 120 of them, using the remaining 485 as unlabeled data. They first trained one network to reconstruct bones directly from CT scans, a task that is easier because the 3D information is fully present. That CT-based model then served as a teacher: for unlabeled cases, it produced “pseudo” 3D bone information that guided the X-ray-based student network. By combining this cross-modal teaching with a semi-supervised training scheme, the student learned to infer invisible 3D structure from only two views, performing well even when only a few dozen cases had expert-labeled bones.
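
One way to picture the teacher-student setup is as a training loss with two parts: one that compares the student with expert labels, and one that compares it with the teacher's pseudo-labels on unlabeled cases. The sketch below is only an illustration under assumptions: the cross-entropy loss, the `distill_weight` parameter, and all function names are hypothetical, and the actual training scheme in the study may differ.

```python
import math

def binary_cross_entropy(pred, target, eps=1e-7):
    """Mean cross-entropy between predicted and target inside/outside probabilities."""
    total = 0.0
    for p, t in zip(pred, target):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        total += -(t * math.log(p) + (1.0 - t) * math.log(1.0 - p))
    return total / len(pred)

def combined_loss(student_labeled, expert_labels,
                  student_unlabeled, teacher_soft, distill_weight=0.5):
    """Supervised term on labeled cases plus a distillation term on unlabeled ones.

    distill_weight is an assumed hyperparameter, not a value from the study.
    """
    supervised = binary_cross_entropy(student_labeled, expert_labels)
    distill = binary_cross_entropy(student_unlabeled, teacher_soft)
    return supervised + distill_weight * distill

# Student predictions at two 3D points of a labeled case, with expert labels.
labeled_pred, expert = [0.9, 0.1], [1.0, 0.0]
# Teacher (CT-based) pseudo-labels at two points of an unlabeled case.
teacher = [0.8, 0.2]

agree = combined_loss(labeled_pred, expert, [0.8, 0.2], teacher)
disagree = combined_loss(labeled_pred, expert, [0.2, 0.8], teacher)
print(agree < disagree)
```

The loss is lower when the X-ray student agrees with the CT teacher, so minimizing it pushes the student toward the teacher's 3D judgments even for cases no expert has labeled.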

How well the new models match reality

To see whether these quick reconstructions are good enough for real surgery, the team evaluated them in several ways. Against expert-created CT models, SSR-KD’s average surface error was under one millimeter, with the large femur and tibia often within 0.8 millimeters, similar to the native resolution of the CT scans themselves. A panel of ten orthopedic experts and medical engineers rated 3D knee models built from either CT or the two-X-ray method, without knowing which was which, judging shape, fine detail, and clinical usefulness. The scores were essentially indistinguishable, and experts generally felt the AI-based models were suitable for planning complex bone cuts. In a more hands-on test, surgeons performed simulated high tibial osteotomies on 3D-printed bones, using custom cutting guides designed from either CT-based or AI-reconstructed bones. Guides based on the new method took no longer to use and achieved comparable scores for fit, stability, and accuracy.
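
An average surface error like the one quoted above can be computed in several ways. The summary does not say which definition the study used, so the sketch below assumes one common choice, the average symmetric surface distance between two sets of surface points, and uses made-up coordinates in millimeters.

```python
import math

def nearest_distance(point, cloud):
    """Distance from one point to the closest point of another surface."""
    return min(math.dist(point, q) for q in cloud)

def average_surface_distance(surface_a, surface_b):
    """Average symmetric surface distance between two point sets (same units as input)."""
    a_to_b = [nearest_distance(p, surface_b) for p in surface_a]
    b_to_a = [nearest_distance(q, surface_a) for q in surface_b]
    return (sum(a_to_b) + sum(b_to_a)) / (len(a_to_b) + len(b_to_a))

# Toy data: a small patch of "reference" surface and a copy shifted by 0.5 mm.
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (1.0, 1.0, 0.0)]
reconstructed = [(x, y, z + 0.5) for x, y, z in reference]

error_mm = average_surface_distance(reference, reconstructed)
print(error_mm)  # 0.5
```

Measuring in both directions matters: a reconstruction that covers only part of the bone could look accurate if distances were taken from its points alone.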

Limits, challenges, and future uses

The approach is not flawless. Smaller bones like the kneecap and fibula, which can be hidden in the X-ray views, showed slightly higher errors. Very complex cases, such as knees with large metal implants that distort the anatomy, remain challenging, and the current study relied heavily on simulated X-rays derived from CT rather than on large numbers of real clinical images. Still, the method proved robust across different hospitals and maintained clinically useful accuracy even when the two X-ray views were taken less than a right angle apart—a realistic constraint in the operating room. The same framework could potentially be adapted to other joints with relatively simple shapes, like the elbow, though more intricate regions with many small bones would likely require further advances.

What this could mean for patients

In plain terms, this work shows that a computer can learn to “see” a 3D knee from just two quick, low-dose X-rays, well enough to support precise surgical planning and custom guides that once demanded a full CT scan and hours of expert effort. If validated on large sets of real-world X-rays and extended beyond the knee, such technology could lower radiation exposure, cut costs, and bring personalized 3D bone models to more hospitals—including those without advanced scanners—making careful, image-guided bone surgery more widely available.

Citation: Lin, Y., Sun, H., Li, Y. et al. Real-time reconstruction of 3D bone models via very-low-dose protocols. npj Digit. Med. 9, 353 (2026). https://doi.org/10.1038/s41746-026-02389-9

Keywords: orthopedic surgery, 3D bone models, low-dose X-ray, medical AI, knee imaging