Clear Sky Science

DermaScanAI an explainable hybrid deep learning framework for automated skin lesion classification using dual attention and metadata fusion

Why smarter mole checks matter

Skin cancer is one of the most common cancers, and spotting it early can save lives. Yet many people wait months or years before having a suspicious mole checked, and even experienced doctors can find it hard to judge tricky cases. This study introduces DermaScanAI, a computer system designed to help doctors sort harmless spots from dangerous ones by looking at detailed skin images and basic patient information, while also showing clearly what it "looked at" to reach its decision.

Teaching computers to read skin images

Dermatologists often use a handheld device called a dermoscope to capture close-up images of moles and other spots. These images reveal tiny color and texture patterns that can hint at cancer, but they also contain distractions such as hairs, uneven lighting and differences between cameras. DermaScanAI begins by cleaning the images: it digitally removes hairs, evens out brightness and color, and scales the lesion area to a standard size. It then expands the training data by flipping, rotating and slightly altering the colors of each image, so that the computer sees many realistic variations of every lesion and copes better with real-world variety.
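To make these cleaning and augmentation steps concrete, here is a minimal NumPy sketch. It is not the authors' pipeline: the helper names (`gray_world`, `resize_nearest`, `augment`) are hypothetical, color balancing uses the simple "gray-world" assumption, resizing uses nearest-neighbour sampling, the 224-pixel target size and jitter ranges are illustrative guesses, and hair removal is left out entirely.

```python
import numpy as np

# Hypothetical preprocessing/augmentation helpers for an H x W x 3 RGB image
# with float values in [0, 1]. Hair removal is deliberately omitted.

def gray_world(image: np.ndarray) -> np.ndarray:
    """Even out color casts: rescale each channel so all channel means match."""
    means = image.reshape(-1, 3).mean(axis=0)
    scale = means.mean() / np.maximum(means, 1e-8)
    return np.clip(image * scale, 0.0, 1.0)

def resize_nearest(image: np.ndarray, size: int) -> np.ndarray:
    """Nearest-neighbour resize to size x size (a stand-in for proper interpolation)."""
    h, w = image.shape[:2]
    rows = (np.arange(size) * h / size).astype(int)
    cols = (np.arange(size) * w / size).astype(int)
    return image[rows][:, cols]

def augment(image: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Return one random variant: optional flips, a 90-degree rotation, mild color jitter."""
    out = image
    if rng.random() < 0.5:
        out = out[:, ::-1]                       # mirror left-right
    if rng.random() < 0.5:
        out = out[::-1, :]                       # mirror top-bottom
    out = np.rot90(out, k=int(rng.integers(0, 4)), axes=(0, 1))
    gain = rng.uniform(0.9, 1.1, size=3)         # per-channel color change
    shift = rng.uniform(-0.05, 0.05)             # global brightness change
    return np.clip(out * gain + shift, 0.0, 1.0)

rng = np.random.default_rng(0)
raw = rng.random((450, 600, 3))                  # synthetic stand-in for a dermoscopic image
clean = resize_nearest(gray_world(raw), 224)
variants = [augment(clean, rng) for _ in range(4)]
print(clean.shape, len(variants))                # (224, 224, 3) 4
```

Each call to `augment` yields a different but plausible version of the same lesion, which is the point of augmentation: the label stays fixed while orientation and color vary.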

Seeing both fine detail and the bigger picture

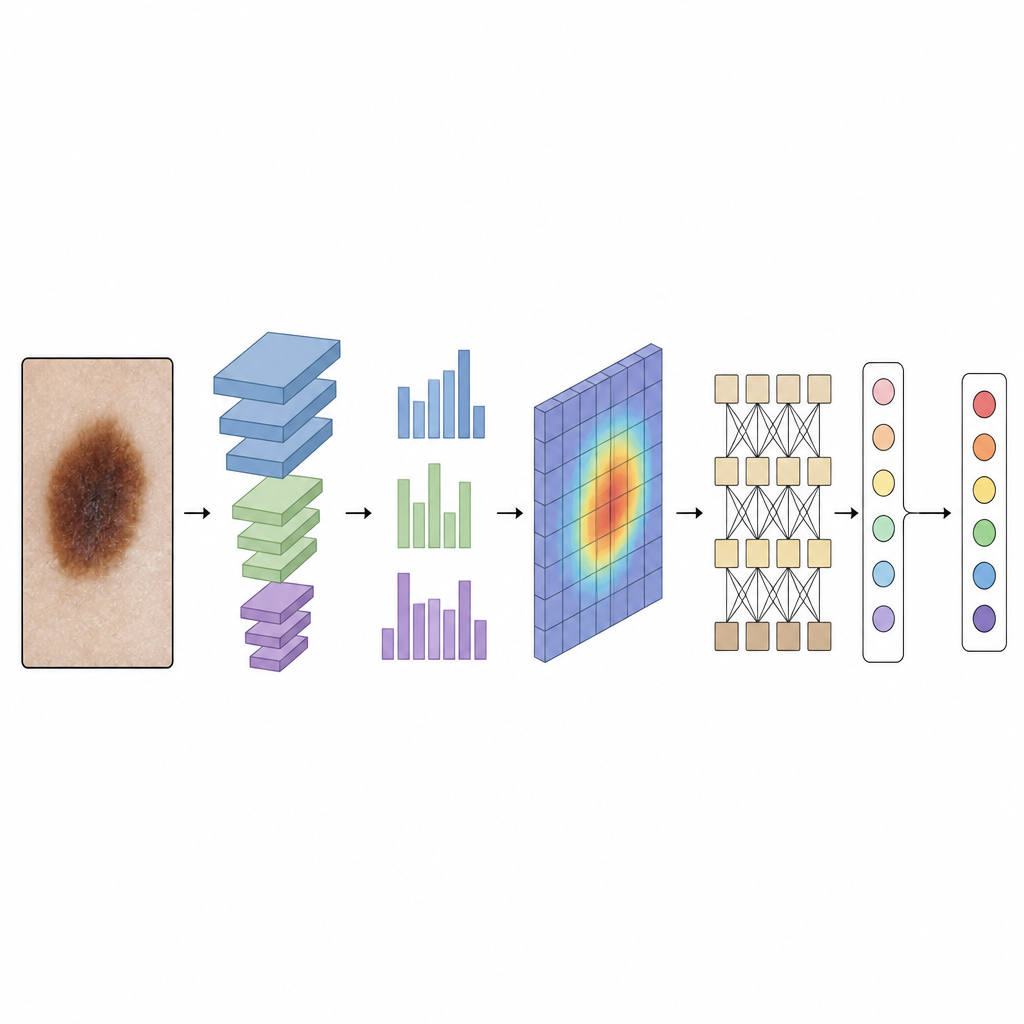

Many earlier computer tools for skin cancer focused either on small local patterns or on overall shape alone, which can mislead them when lesions of different types look alike in places. DermaScanAI combines both kinds of pattern recognition to avoid this problem. First, a modern convolutional network, a type of image-processing engine, picks out fine details such as edges, color dots and texture at several scales, from tiny patches to larger regions. On top of this, two attention modules help the system "turn up the volume" on the most informative feature channels (the network's internal pattern detectors) and image areas, while quieting unhelpful background regions.
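The summary does not give the exact design of the attention modules, so the sketch below assumes a CBAM-style pair: channel attention that reweights each feature channel, followed by spatial attention that reweights each image location. The weights are random placeholders rather than trained values, and the spatial step is simplified to a 1x1 mix of the per-pixel channel mean and max instead of CBAM's usual 7x7 convolution.

```python
import numpy as np

def sigmoid(x: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-x))

def channel_attention(feat, w1, w2):
    """CBAM-style channel attention on a C x H x W feature map.
    w1 has shape (C // r, C) and w2 has shape (C, C // r) for a reduction ratio r."""
    def mlp(v):                                   # shared two-layer MLP with ReLU
        return w2 @ np.maximum(w1 @ v, 0.0)
    avg = feat.mean(axis=(1, 2))                  # one average per channel
    mx = feat.max(axis=(1, 2))                    # one maximum per channel
    weights = sigmoid(mlp(avg) + mlp(mx))         # shape (C,), each in (0, 1)
    return feat * weights[:, None, None], weights

def spatial_attention(feat, a, b, bias):
    """Simplified spatial attention: a 1x1 mix of the per-pixel channel mean and max
    (CBAM uses a 7x7 convolution here)."""
    mask = sigmoid(a * feat.mean(axis=0) + b * feat.max(axis=0) + bias)   # H x W
    return feat * mask[None, :, :], mask

rng = np.random.default_rng(1)
C, H, W, r = 32, 7, 7, 8
feat = np.maximum(rng.normal(size=(C, H, W)), 0.0)    # ReLU-like CNN activations
w1 = rng.normal(scale=0.1, size=(C // r, C))
w2 = rng.normal(scale=0.1, size=(C, C // r))
refined, ch_w = channel_attention(feat, w1, w2)
refined, sp_mask = spatial_attention(refined, a=1.0, b=1.0, bias=-1.0)
print(refined.shape, ch_w.shape, sp_mask.shape)       # (32, 7, 7) (32,) (7, 7)
```

Because every attention weight lies between 0 and 1, the modules can only dampen or preserve features, never amplify them in absolute terms; "turning up the volume" on a region really means turning down everything else relative to it.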

Adding global context and patient clues

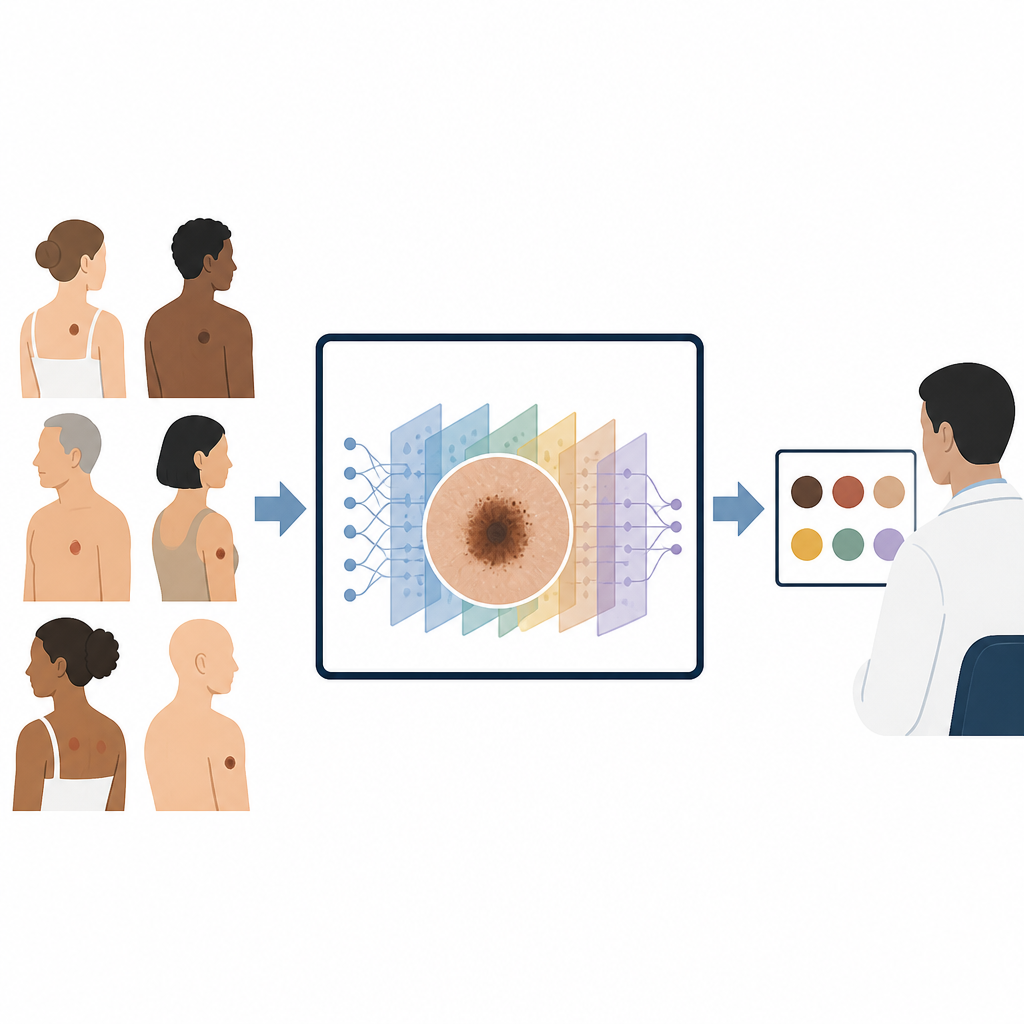

After focusing on the most important image regions, DermaScanAI passes the refined features to a lightweight transformer module. This part of the model is good at linking distant parts of the image, so it can relate the center of a mole to its border and surrounding skin. At the same time, the system brings in simple patient information such as age, sex and body location of the lesion. These clues reflect real clinical practice, where a dark spot on a young person’s leg can carry a different risk than a similar spot on an older person’s back. By fusing image features with these basic details before making a decision, the model mimics how a dermatologist thinks about context.
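A rough sketch of these two ideas follows: single-head self-attention lets every location in the feature map compare itself with every other location, and the pooled image vector is then concatenated with encoded patient metadata before a final classifier. The metadata encoding (age divided by 100, one-hot sex, one-hot body site), the short list of body sites and the random weights are all hypothetical; the paper's transformer is likely deeper and multi-headed.

```python
import numpy as np

SITES = ["back", "lower extremity", "trunk", "face", "unknown"]   # hypothetical subset
N_CLASSES = 7                                                     # HAM10000 has seven lesion types

def softmax(x, axis=-1):
    e = np.exp(x - x.max(axis=axis, keepdims=True))               # subtract max for stability
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(tokens, wq, wk, wv):
    """Single-head scaled dot-product self-attention over N tokens of width D."""
    q, k, v = tokens @ wq, tokens @ wk, tokens @ wv
    scores = q @ k.T / np.sqrt(k.shape[1])                        # N x N: every location vs every other
    return softmax(scores, axis=-1) @ v

def encode_metadata(age, sex, site):
    """Hypothetical encoding: scaled age, one-hot sex, one-hot body site."""
    sex_vec = [float(sex == "male"), float(sex == "female")]
    site_vec = np.zeros(len(SITES))
    site_vec[SITES.index(site)] = 1.0
    return np.concatenate([[age / 100.0], sex_vec, site_vec])

def predict(feature_map, meta, wq, wk, wv, w_out):
    c, h, w = feature_map.shape
    tokens = feature_map.reshape(c, h * w).T                      # one C-dim token per location
    image_vec = self_attention(tokens, wq, wk, wv).mean(axis=0)   # pool to one image vector
    fused = np.concatenate([image_vec, meta])                     # fuse image and patient features
    return softmax(w_out @ fused)                                 # probabilities over lesion types

rng = np.random.default_rng(2)
C, D = 32, 16
fmap = rng.normal(size=(C, 7, 7))
wq, wk, wv = (rng.normal(scale=0.1, size=(C, D)) for _ in range(3))
meta = encode_metadata(age=45, sex="female", site="back")
w_out = rng.normal(scale=0.1, size=(N_CLASSES, D + meta.size))
probs = predict(fmap, meta, wq, wk, wv, w_out)
print(probs.shape)   # (7,)
```

Concatenation before the classifier is the simplest form of fusion; it lets the final layer weigh, for example, "older patient, lesion on the back" together with the image evidence.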

Making decisions that doctors can inspect

A major barrier to using artificial intelligence in clinics is that many systems feel like black boxes. To address this, DermaScanAI includes an explainability module. Using a technique called Grad-CAM++, it produces heatmaps that highlight which parts of the lesion most influenced its decision. A second method, SHAP, estimates how much each input, including the patient metadata, pushed the result toward one diagnosis or another. When these heatmaps are laid over the original images and compared with expert-drawn lesion outlines, they show strong overlap, suggesting the system is focusing on medically meaningful regions rather than random noise.
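The sketch below shows how a Grad-CAM++ heatmap can be computed once a network's last-layer activations and the gradients of the class score are available, using the closed-form weighting common in open-source Grad-CAM++ implementations (it assumes an exponential class score). It then measures overlap with an expert outline as intersection-over-union; the summary does not say which overlap measure the authors used, so IoU and the 0.5 threshold are assumptions. SHAP is not shown. In practice the heatmap is upsampled to image size before comparison; the toy example keeps both at 4x4.

```python
import numpy as np

def grad_cam_pp(activations, grads, eps=1e-8):
    """Grad-CAM++ map from K x H x W activations A_k and gradients dY_c/dA_k,
    using the closed-form alpha that assumes an exponential class score."""
    g2, g3 = grads ** 2, grads ** 3
    sum_a = activations.sum(axis=(1, 2), keepdims=True)          # K x 1 x 1
    alpha = g2 / (2.0 * g2 + sum_a * g3 + eps)
    alpha = np.where(grads != 0, alpha, 0.0)
    weights = (alpha * np.maximum(grads, 0.0)).sum(axis=(1, 2))  # one weight per channel
    cam = np.maximum(np.tensordot(weights, activations, axes=1), 0.0)
    return cam / cam.max() if cam.max() > 0 else cam             # scale to [0, 1]

def overlap_iou(heatmap, mask, threshold=0.5):
    """Intersection-over-union between a thresholded heatmap and a binary lesion mask."""
    pred, mask = heatmap >= threshold, mask.astype(bool)
    union = np.logical_or(pred, mask).sum()
    return float(np.logical_and(pred, mask).sum() / union) if union else 1.0

# Toy example: channel 0 fires in the top-left and supports the class,
# channel 1 fires in the bottom-right and opposes it.
A = np.zeros((2, 4, 4)); A[0, :2, :2] = 1.0; A[1, 2:, 2:] = 1.0
G = np.full((2, 4, 4), 0.5); G[1] = -0.5
cam = grad_cam_pp(A, G)
expert_mask = np.zeros((4, 4)); expert_mask[:2, :2] = 1
print(overlap_iou(cam, expert_mask))   # 1.0
```

Only positive gradients contribute to the channel weights, so regions that argue against the predicted class do not light up in the heatmap.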

How well the system performs

The researchers tested DermaScanAI on HAM10000, a large public collection of more than 10,000 dermoscopic images covering seven types of skin lesions, including melanoma, basal cell carcinoma, common moles and several benign conditions. After training and cross-validation across multiple data splits, the model reached about 94.8% overall accuracy, a macro-average F1-score near 92% and an area under the ROC curve of 0.962, indicating a strong ability to separate lesion types. It also remained reasonably accurate when trained on only a fraction of the data, and it compared favorably with other leading systems built solely on convolutional networks or transformers while using fewer computational resources.
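The three headline numbers measure different things. Accuracy is the share of all images labeled correctly; macro-average F1 averages the per-class F1 scores so that rare lesion types count as much as common moles; and ROC AUC is the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative one. The sketch below computes each on toy data. It assumes the multi-class AUC is a one-vs-rest macro average, which the summary does not state, and it does not reproduce the paper's results.

```python
import numpy as np

def accuracy(y_true, y_pred):
    return float(np.mean(y_true == y_pred))

def macro_f1(y_true, y_pred, n_classes):
    """Unweighted mean of per-class F1; a class with no true or predicted cases scores 0."""
    f1s = []
    for c in range(n_classes):
        tp = np.sum((y_pred == c) & (y_true == c))
        fp = np.sum((y_pred == c) & (y_true != c))
        fn = np.sum((y_pred != c) & (y_true == c))
        denom = 2 * tp + fp + fn
        f1s.append(2 * tp / denom if denom else 0.0)
    return float(np.mean(f1s))

def binary_auc(scores, labels):
    """Chance a random positive outscores a random negative (ties count as half)."""
    pos, neg = scores[labels == 1], scores[labels == 0]
    diff = pos[:, None] - neg[None, :]
    return float((np.sum(diff > 0) + 0.5 * np.sum(diff == 0)) / (len(pos) * len(neg)))

def macro_ovr_auc(probs, y_true):
    """One-vs-rest AUC for each class, then averaged (assumed averaging scheme)."""
    return float(np.mean([binary_auc(probs[:, c], (y_true == c).astype(int))
                          for c in range(probs.shape[1])]))

y_true = np.array([0, 0, 1, 1, 2, 2])
y_pred = np.array([0, 1, 1, 1, 2, 0])
print(accuracy(y_true, y_pred))            # 4 of 6 correct
print(macro_f1(y_true, y_pred, 3))         # mean of 0.5, 0.8 and 2/3
```

The gap between accuracy and macro F1 in such results usually reflects weaker performance on the rarer classes, since accuracy is dominated by the most common lesion type.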

What this means for future skin checks

In plain terms, this work shows that a carefully designed computer model can sort different types of skin lesions with high reliability, use simple patient information to refine its judgment and show doctors where it is "looking" on the skin. DermaScanAI is not yet a stand-alone diagnostic tool: it still needs testing across many hospitals, cameras and patient groups before it can support real clinical decisions. But it offers a clear blueprint for future skin-cancer aids that are both accurate and transparent, potentially helping doctors catch dangerous lesions earlier while giving patients and clinicians more confidence in how those decisions are made.

Citation: Murali, P., Mazumder, D.H. DermaScanAI an explainable hybrid deep learning framework for automated skin lesion classification using dual attention and metadata fusion. Sci Rep 16, 15130 (2026). https://doi.org/10.1038/s41598-026-46011-0

Keywords: skin cancer, dermoscopy, deep learning, medical imaging AI, explainable AI