Clear Sky Science

ClarityTrack for multi object tracking via hierarchical association and environment specific cost matching

Why following many moving things is hard

From self-driving cars to security cameras and sports broadcasts, modern cameras are expected to keep track of many people or objects at once. But real life is messy: people cross paths, disappear behind others, or blur as they move. This paper introduces ClarityTrack, a new way to keep digital "eyes" on multiple moving targets more reliably, even in crowded streets or fast dance scenes.

How computers usually follow objects

Most tracking systems first detect objects in each video frame, then try to link those detections over time to form smooth paths. They rely on two main hints: motion (where something is predicted to move next) and appearance (how it looks, via visual fingerprints learned by deep networks). Existing methods usually mix these two hints using a fixed recipe, for example always weighting motion and appearance in the same proportion. That works in simple scenes, but breaks down when the crowd gets dense, motion becomes unpredictable, or camera blur changes how people look.
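The fixed recipe described above can be sketched in a few lines of Python. This is a minimal illustration, not the code of any particular tracker: the dictionary keys (`predicted_box`, `box`, `embedding`), the function names, and the choice of box overlap (IoU) as the motion hint and cosine distance as the appearance hint are all assumptions made for the example. The brute-force search stands in for the faster assignment solvers that real trackers use and is only practical for a handful of objects.

```python
from itertools import permutations

def iou(a, b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0

def cosine_distance(u, v):
    """1 - cosine similarity; returns 1.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = sum(x * x for x in u) ** 0.5
    norm_v = sum(y * y for y in v) ** 0.5
    if norm_u == 0 or norm_v == 0:
        return 1.0
    return 1.0 - dot / (norm_u * norm_v)

def fixed_blend_cost(track, det, w_motion=0.5):
    """Blend motion and appearance costs with one fixed weight (assumed 0.5)."""
    motion_cost = 1.0 - iou(track["predicted_box"], det["box"])
    appearance_cost = cosine_distance(track["embedding"], det["embedding"])
    return w_motion * motion_cost + (1.0 - w_motion) * appearance_cost

def best_assignment(tracks, dets, w_motion=0.5):
    """Brute-force minimum-cost one-to-one matching of tracks to detections.

    Returns (track_index, detection_index) pairs, or [] when there are
    fewer detections than tracks (a simplification for this sketch).
    """
    best, best_cost = None, float("inf")
    for perm in permutations(range(len(dets)), len(tracks)):
        cost = sum(fixed_blend_cost(tracks[i], dets[j], w_motion)
                   for i, j in enumerate(perm))
        if cost < best_cost:
            best, best_cost = perm, cost
    return list(enumerate(best)) if best is not None else []

# Two tracked people and two new detections listed in the opposite order.
tracks = [
    {"predicted_box": (0, 0, 10, 10), "embedding": (1.0, 0.0)},
    {"predicted_box": (20, 0, 30, 10), "embedding": (0.0, 1.0)},
]
dets = [
    {"box": (21, 0, 31, 10), "embedding": (0.1, 0.9)},
    {"box": (1, 0, 11, 10), "embedding": (0.9, 0.1)},
]
print(best_assignment(tracks, dets))
```

Because `w_motion` never changes, the same blend is applied whether the scene is an empty street or a packed crossing, which is the limitation the next section discusses.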

Why one fixed recipe is not enough

Imagine watching a crowded crosswalk: positions overlap, so motion-based distance becomes unreliable, but clothing and height can still separate people. Now picture a dance performance: everyone wears similar outfits and moves erratically, so appearance and motion cues are both unstable. The paper shows that traditional trackers ignore this variety, treating every frame as if the same mixture of motion and appearance should work. They also tend to just add the two pieces of evidence together without checking whether they actually agree, which can silently produce identity swaps and broken paths.

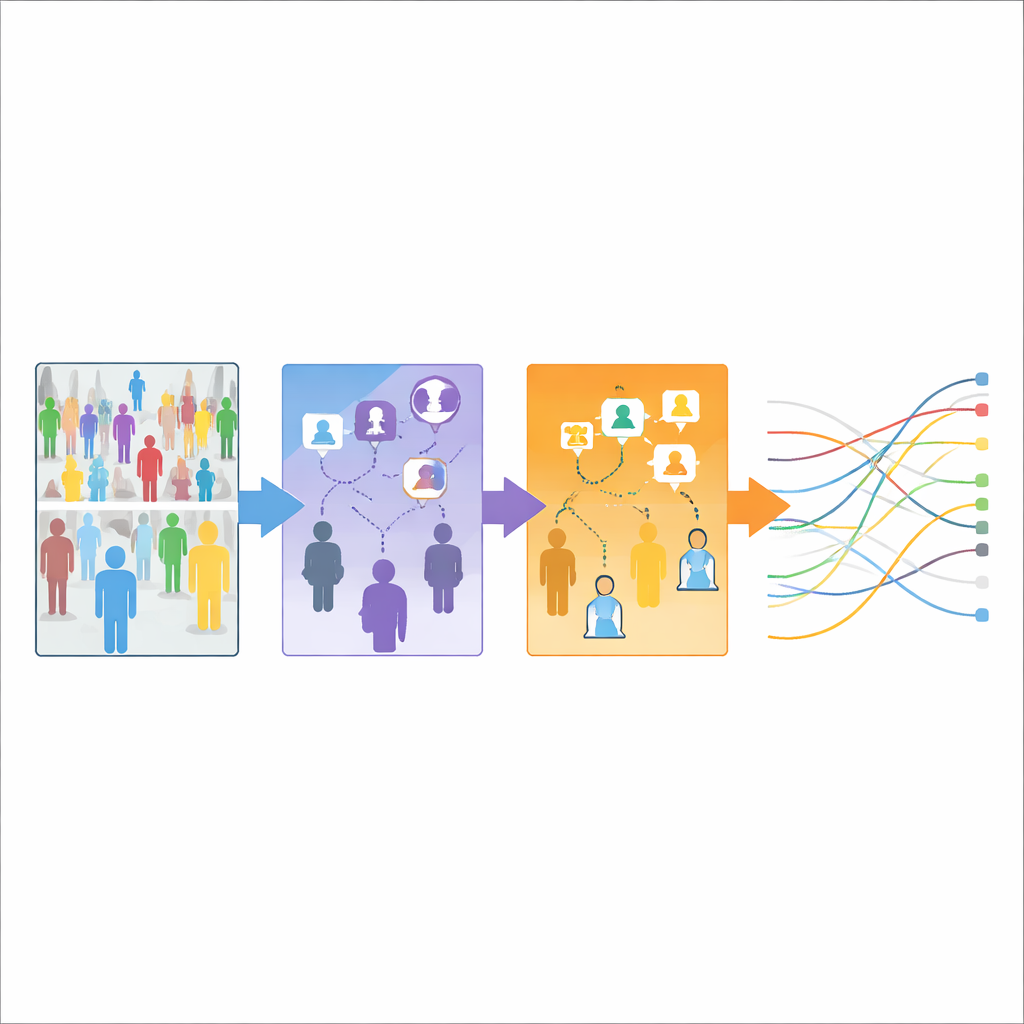

A three-step strategy for clearer tracking

ClarityTrack tackles these problems with a rule-based design built from three modules that work in sequence. First, Balanced Cascade Association splits detections into high- and low-confidence groups. For high-confidence detections it blends motion and appearance evenly, taking advantage of both. For low-confidence ones, it falls back to a cautious, motion-only match to avoid being misled by blurry or occluded images. Second, Condition-Aware Matching with Weights recognizes that different video environments behave differently. It pre-learns separate parameter sets for three kinds of scene: balanced scenes, very crowded scenes, and scenes with unstable, highly non-linear motion. For each potential match between a tracked object and a new detection, it decides on the fly whether to keep the neutral 50:50 blend or switch to an environment-tuned blend that favors either motion or appearance, and it makes that switch only when clear quality conditions are met.
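The first two modules can be illustrated with a small Python sketch. It is written under several assumptions and is not the authors' implementation: the weights in `ENV_WEIGHTS`, the thresholds `high_thresh`, `quality_gate`, and `max_cost`, the specific quality condition (at least one cue is clearly reliable), and the greedy matcher are all hypothetical choices made to keep the example short. Similarities are assumed to be precomputed scores between 0 and 1, keyed by (track id, detection id).

```python
# Hypothetical weight placed on the motion cost for each environment type.
ENV_WEIGHTS = {"balanced": 0.5, "crowded": 0.3, "unstable_motion": 0.4}

def split_by_confidence(dets, high_thresh=0.6):
    """Separate detections into high- and low-confidence groups."""
    high = [d for d in dets if d["score"] >= high_thresh]
    low = [d for d in dets if d["score"] < high_thresh]
    return high, low

def motion_weight(env, motion_sim, appearance_sim, quality_gate=0.7):
    """Keep the neutral 50:50 blend unless a cue passes the (assumed) quality gate."""
    if max(motion_sim, appearance_sim) < quality_gate:
        return 0.5
    return ENV_WEIGHTS.get(env, 0.5)

def greedy_match(track_ids, det_ids, cost_fn, max_cost):
    """Match cheapest pairs first; a simple stand-in for an optimal solver."""
    candidates = sorted((cost_fn(t, d), t, d) for t in track_ids for d in det_ids)
    used_tracks, used_dets, matches = set(), set(), []
    for cost, t, d in candidates:
        if cost > max_cost or t in used_tracks or d in used_dets:
            continue
        matches.append((t, d))
        used_tracks.add(t)
        used_dets.add(d)
    unmatched = [t for t in track_ids if t not in used_tracks]
    return matches, unmatched

def cascade_associate(track_ids, dets, motion_sim, app_sim,
                      env="balanced", high_thresh=0.6, max_cost=0.7):
    """Stage 1: blended cost on confident detections. Stage 2: motion only."""
    high, low = split_by_confidence(dets, high_thresh)

    def blended(t, d):
        m, a = motion_sim[(t, d)], app_sim[(t, d)]
        w = motion_weight(env, m, a)
        return w * (1.0 - m) + (1.0 - w) * (1.0 - a)

    def motion_only(t, d):
        return 1.0 - motion_sim[(t, d)]

    first, remaining = greedy_match(track_ids, [d["id"] for d in high],
                                    blended, max_cost)
    second, unmatched = greedy_match(remaining, [d["id"] for d in low],
                                     motion_only, max_cost)
    return first + second, unmatched

# Toy crowded scene: detection "a" is sharp, detection "b" is blurry.
dets = [{"id": "a", "score": 0.9}, {"id": "b", "score": 0.3}]
motion_sim = {(1, "a"): 0.8, (2, "a"): 0.1, (1, "b"): 0.2, (2, "b"): 0.7}
app_sim = {(1, "a"): 0.9, (2, "a"): 0.2, (1, "b"): 0.1, (2, "b"): 0.0}
matches, unmatched = cascade_associate([1, 2], dets, motion_sim, app_sim,
                                       env="crowded")
print(matches, unmatched)
```

In this toy run, track 1 is linked to the sharp detection using the blended cost, and track 2 is then linked to the blurry detection using motion alone, so its unreliable appearance score never enters the decision.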

Checking whether motion and looks tell the same story

The third module, Motion-Appearance Consistency Check, acts like a referee between motion and appearance. For each possible match, it examines whether the predicted position and the visual similarity both look good, only one looks good, or neither does. When both agree, it slightly lowers the matching cost to encourage that connection. When they contradict each other, it raises the cost to discourage a likely mistake. When motion fails but appearance is very clear, it gently supports reconnecting an object that has reappeared after occlusion or sudden movement. These adjustments are tuned differently for each environment type so that the system stays cautious in very crowded scenes but more willing to re-link dancers in chaotic motion.
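As a rough illustration of this referee logic, the Python sketch below adjusts a matching cost according to whether the two cues agree. The thresholds `good` and `very_clear`, the adjustment sizes in `ADJUST`, and the decision to leave the cost unchanged when neither cue looks good are assumptions made for the example; this summary does not give the paper's actual values.

```python
# Hypothetical per-environment adjustments: more cautious in crowds,
# more willing to reconnect when motion is chaotic.
ADJUST = {
    "balanced": {"bonus": 0.05, "penalty": 0.10, "reconnect": 0.05},
    "crowded": {"bonus": 0.02, "penalty": 0.15, "reconnect": 0.02},
    "unstable_motion": {"bonus": 0.05, "penalty": 0.05, "reconnect": 0.10},
}

def consistency_adjusted_cost(base_cost, motion_sim, appearance_sim,
                              env="balanced", good=0.6, very_clear=0.85):
    """Nudge a matching cost up or down based on motion/appearance agreement.

    Similarities are assumed to lie in [0, 1]; higher means a better match.
    """
    params = ADJUST.get(env, ADJUST["balanced"])
    motion_ok = motion_sim >= good
    appearance_ok = appearance_sim >= good

    if motion_ok and appearance_ok:
        # Both cues agree: encourage the link.
        cost = base_cost - params["bonus"]
    elif not motion_ok and appearance_sim >= very_clear:
        # Motion failed but appearance is very clear: gently support
        # reconnecting an object that reappeared.
        cost = base_cost - params["reconnect"]
    elif motion_ok != appearance_ok:
        # The cues contradict each other: discourage a likely mistake.
        cost = base_cost + params["penalty"]
    else:
        # Neither cue looks good: left unchanged in this sketch.
        cost = base_cost
    return min(1.0, max(0.0, cost))

# The same evidence is treated differently depending on the environment.
print(consistency_adjusted_cost(0.4, 0.1, 0.9, env="crowded"))
print(consistency_adjusted_cost(0.4, 0.1, 0.9, env="unstable_motion"))
```

With these placeholder values, a reappearing dancer gets a larger cost reduction than a reappearing pedestrian in a dense crowd, mirroring the idea that the adjustments are tuned per environment.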

How well the new approach works

The authors tested ClarityTrack on three widely used benchmarks: MOT17, representing typical street scenes; MOT20, representing extremely crowded sidewalks; and DanceTrack, filled with groups of dancers performing complex moves. Across these datasets, ClarityTrack matched or beat the best existing online trackers in key measures of tracking quality, especially those that judge how well identities are maintained over time. Importantly, most of these gains come from smarter data association rather than heavier neural networks, and the system still runs at or above real-time speeds for typical scenes.

What this means for everyday technology

For non-experts, the main takeaway is that ClarityTrack shows how simple, transparent rules, when carefully tuned to the environment, can rival or improve on more opaque, one-size-fits-all approaches. By separating high- and low-confidence detections, adapting to the type of scene, and explicitly checking whether motion and appearance agree, the method keeps track of who is who more reliably in everything from street crowds to dance floors. This kind of environment-aware tracking could make camera-based systems safer and more trustworthy in the messy, ever-changing real world.

Citation: Lee, SE., Yang, HS., Jung, SH. et al. ClarityTrack for multi object tracking via hierarchical association and environment specific cost matching. Sci Rep 16, 10581 (2026). https://doi.org/10.1038/s41598-026-45425-0

Keywords: multi-object tracking, computer vision, video surveillance, crowd analysis, autonomous driving