Clear Sky Science

Lightweight FMCW radar framework for human activity recognition under limited data conditions

Watching Daily Life Without a Camera

Imagine a home that can quietly notice if an older adult has fallen, or if someone has stopped moving for an unusual length of time—without cameras, microphones, or wearable gadgets. This paper presents a new way to use small radar sensors and efficient artificial intelligence to recognize everyday human activities in a bedroom-like setting, even when only a small amount of training data is available. The goal is to build practical, privacy-preserving monitoring systems that can eventually run on low-cost devices in real homes, hospitals, and care centers.

Why Radar Instead of Cameras or Wearables?

Many current activity-monitoring systems rely on wearable devices such as smartwatches or on video cameras placed around a room. Wearables can be uncomfortable, need charging, and are often forgotten or removed, especially during sleep or bathing. Cameras raise obvious privacy concerns and can fail in poor lighting or when a person is behind furniture. Radar offers a different option: it sends out radio waves and measures their reflections to infer movement and position. Millimeter-wave radar can work in the dark, through some obstacles, and without revealing a person’s appearance, making it an appealing technology for respectful, continuous monitoring of daily life.

Turning Invisible Waves Into Useful Patterns

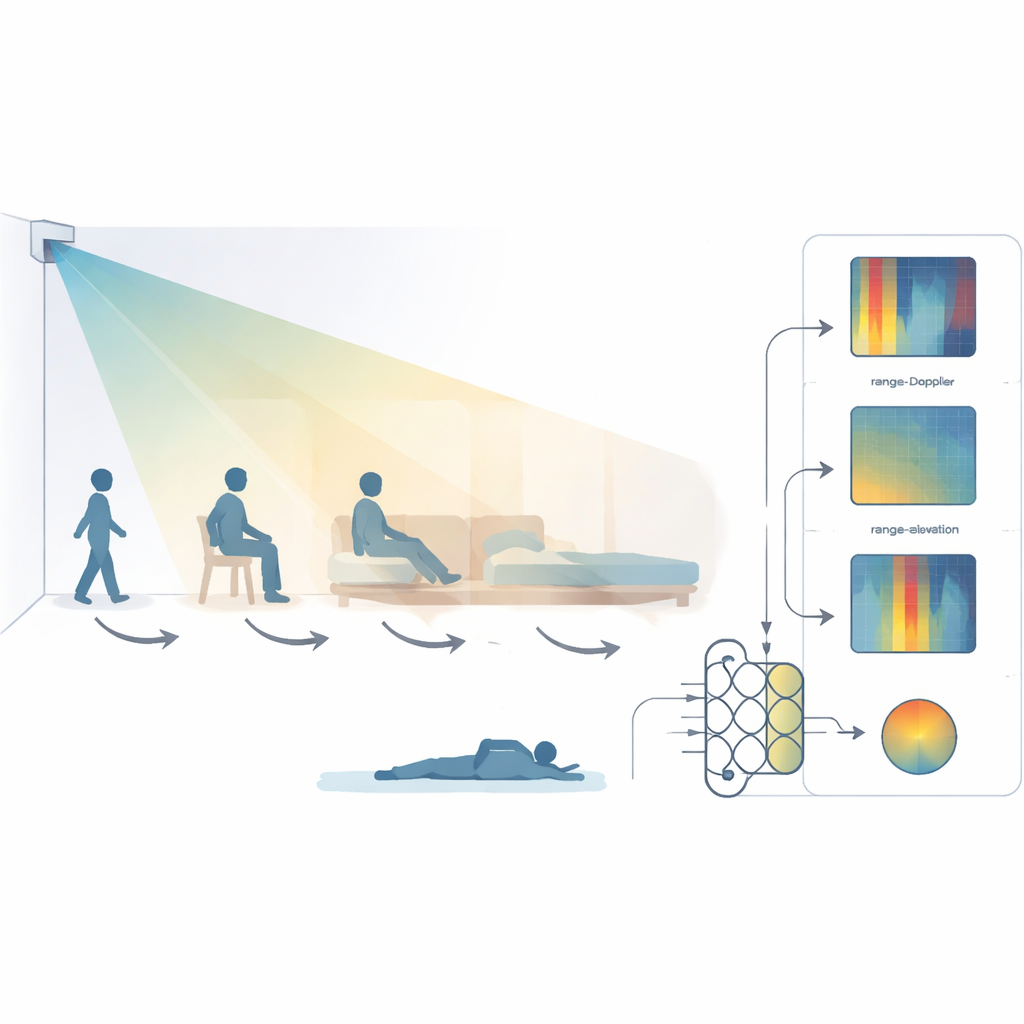

The researchers used a compact 60 GHz radar sensor mounted high in a bedroom-like room and tilted down to cover a bed, a chair, and the floor. Three volunteers took part in 16 recording sessions covering seven activity categories: an empty room, walking, sitting on the bed, sitting on a chair, lying on the bed, lying on the floor, and transitions between these states. Instead of turning the radar signals into pictures, as many earlier studies do, the team kept the data in a form that directly reflects physical quantities: distance from the radar, speed of motion, and horizontal and vertical angle. From each radar frame they extracted three kinds of feature maps, range–Doppler (motion versus distance), range–azimuth (horizontal angle versus distance), and range–elevation (vertical angle versus distance), and stacked them into compact multi-dimensional vectors that preserve how a person’s body moves in space over time.
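
To make the idea of a range–Doppler map concrete, here is a minimal sketch in Python of how one might be computed from a single radar frame. It is not the authors' processing chain, which this summary does not describe. It assumes the frame arrives as complex samples indexed `frame[chirp][sample]`, and it uses a naive transform so the example needs no external library (a real system would use an FFT routine). The names `dft`, `range_doppler_map`, `synthetic_frame`, and `peak_bin` are hypothetical.

```python
import cmath
import math


def dft(x):
    """Naive discrete Fourier transform of a complex sequence (O(N^2))."""
    n = len(x)
    return [
        sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def range_doppler_map(frame):
    """Turn frame[chirp][sample] into magnitudes indexed [doppler_bin][range_bin].

    Doppler rows are reordered so that zero velocity sits at row n_chirps // 2.
    """
    n_chirps = len(frame)
    # Range transform: one DFT per chirp, along the samples within the chirp.
    range_profiles = [dft(chirp) for chirp in frame]
    n_range = len(range_profiles[0])
    # Doppler transform: one DFT per range bin, across the chirps.
    columns = [
        dft([range_profiles[c][r] for c in range(n_chirps)])
        for r in range(n_range)
    ]
    offset = (n_chirps + 1) // 2  # moves negative velocities to the top rows
    return [
        [abs(columns[r][(d + offset) % n_chirps]) for r in range(n_range)]
        for d in range(n_chirps)
    ]


def synthetic_frame(n_chirps, n_samples, range_bin, doppler_bin):
    """Assumed test signal: a single ideal reflector at the given bins."""
    return [
        [
            cmath.exp(2j * math.pi * (range_bin * s / n_samples
                                      + doppler_bin * c / n_chirps))
            for s in range(n_samples)
        ]
        for c in range(n_chirps)
    ]


def peak_bin(rd_map):
    """Return (doppler_row, range_column) of the strongest cell."""
    cells = ((d, r) for d in range(len(rd_map)) for r in range(len(rd_map[0])))
    return max(cells, key=lambda dr: rd_map[dr[0]][dr[1]])
```

For a frame built with `synthetic_frame(8, 16, range_bin=5, doppler_bin=2)`, the strongest cell lands in range column 5 and Doppler row 6, which is two rows above the zero-velocity row. Range–azimuth and range–elevation maps follow the same pattern, with the second transform taken across receive antennas rather than across chirps.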

Lightweight Intelligence for Small Devices

To read these radar patterns, the authors built a deep-learning model that is deliberately small and efficient. It combines a streamlined version of a popular image-recognition network (ResNet-18) with a bidirectional long short-term memory module, which is a type of recurrent neural network. Specialized “depthwise separable” convolutions greatly cut the number of calculations and parameters while still capturing important spatial details, and the recurrent part learns how activities unfold over short time windows of half a second. To make the system more resilient despite having data from only three people, the team applied realistic data augmentation: shifting the apparent position or speed slightly, scaling and biasing signal intensity, flipping motion direction, and adding gentle noise—mimicking how real homes and real people vary from one moment to the next.
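
The augmentation steps listed above could look roughly like the following Python sketch, applied to one range–Doppler map stored as `rd_map[doppler_bin][range_bin]`. The function names, parameter names, and default magnitudes are all assumptions chosen for illustration; the summary does not give the values the authors used.

```python
import random


def shift_2d(grid, d_shift, r_shift):
    """Shift a 2D grid by whole bins; cells shifted in from outside become 0."""
    n_rows, n_cols = len(grid), len(grid[0])
    return [
        [
            grid[d - d_shift][r - r_shift]
            if 0 <= d - d_shift < n_rows and 0 <= r - r_shift < n_cols
            else 0.0
            for r in range(n_cols)
        ]
        for d in range(n_rows)
    ]


def augment_range_doppler(rd_map, rng, max_shift=1, scale_range=(0.9, 1.1),
                          max_bias=0.05, flip_prob=0.5, noise_std=0.01):
    """Return a randomly perturbed copy of rd_map; the input is not modified."""
    # Shift apparent speed (rows) and apparent position (columns) slightly.
    out = shift_2d(rd_map,
                   rng.randint(-max_shift, max_shift),
                   rng.randint(-max_shift, max_shift))

    # Reverse the Doppler axis so approaching and receding motion swap.
    # (The mirror is exact when the zero-velocity row is the middle row.)
    if rng.random() < flip_prob:
        out = out[::-1]

    # Scale and bias the intensity, then add gentle Gaussian noise.
    scale = rng.uniform(*scale_range)
    bias = rng.uniform(-max_bias, max_bias)
    return [
        [scale * value + bias + rng.gauss(0.0, noise_std) for value in row]
        for row in out
    ]
```

Passing a seeded generator such as `random.Random(0)` makes the perturbations repeatable, which helps when comparing training runs.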

How Well Does It Recognize Everyday Actions?

The framework was tested using two strict evaluation strategies. In cross-scene testing, the model was trained on most of the recorded sessions and tested on scenes it had never seen before. In leave-one-person-out testing, it was trained on two people and tested entirely on the third, probing how well it generalizes to new individuals. On the challenging seven-activity task, the system reached about 92% accuracy and an F1-score of nearly 90% when scenes were held out, and about 90% accuracy on unseen people. When the task was simplified to four core activities (walking, sitting on the bed, sitting on a chair, and lying on the bed), accuracy climbed to around 99% in cross-scene tests. Notably, this performance matched or surpassed that of larger, more computationally demanding neural networks, while using fewer than one million parameters and a model size of under 7 megabytes.
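
The leave-one-person-out protocol and the two reported metrics can be sketched in a few lines of Python. This is an illustration under assumptions, not the authors' evaluation code: the session and person identifiers are made up, and the F1-score is computed as a macro average over classes, which the summary does not specify.

```python
from collections import Counter


def leave_one_person_out(sessions):
    """Yield (held_out_person, train_session_ids, test_session_ids).

    `sessions` is a list of (session_id, person_id) pairs.
    """
    people = sorted({person for _, person in sessions})
    for held_out in people:
        train = [sid for sid, person in sessions if person != held_out]
        test = [sid for sid, person in sessions if person == held_out]
        yield held_out, train, test


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores (assumed averaging scheme)."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted class gains a false positive
            fn[t] += 1  # true class gains a false negative
    labels = set(y_true) | set(y_pred)
    scores = []
    for label in labels:
        denom = 2 * tp[label] + fp[label] + fn[label]
        scores.append(2 * tp[label] / denom if denom else 0.0)
    return sum(scores) / len(scores)
```

Splitting by person rather than by frame matters here: frames from the same person are highly correlated, so a random split would let the model see the test subject during training and would overstate how well it handles new individuals.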

What This Could Mean for Future Smart Homes

In plain terms, the study shows that a small radar unit and a compact AI model can reliably distinguish common indoor activities, even with limited training data and without invading privacy. By working directly with physically meaningful radar features and carefully chosen augmentation tricks, the authors achieve both accuracy and efficiency, making their approach suitable for embedded hardware at the edge rather than bulky servers in the cloud. As datasets are expanded to include more people and behaviors, this kind of radar-based monitoring could underpin future smart bedrooms, hospital rooms, and assisted-living spaces that quietly keep an eye on safety and well-being while respecting the dignity and privacy of the people they serve.

Citation: Fard, A.S., Mashhadigholamali, M., Zolfaghari, S. et al. Lightweight FMCW radar framework for human activity recognition under limited data conditions. Sci Rep 16, 9650 (2026). https://doi.org/10.1038/s41598-026-44815-8

Keywords: radar-based activity recognition, FMCW millimeter-wave radar, smart home monitoring, lightweight deep learning, ambient assisted living