Clear Sky Science

Classification of health product defect reports by deep learning

Why catching bad medicines faster matters

Most of us assume that the medicines and health products we use are safe and made to strict quality standards. Yet around the world, hundreds of drug products are recalled every year because of contamination, incorrect ingredients, or misleading labels. Each faulty product is a potential threat to patients. Regulators must quickly read and interpret thousands of defect reports to decide which ones demand urgent action. This paper describes how a deep-learning system was built to help health authorities classify these reports faster and more consistently, so they can focus attention on problems with the greatest risk to public health.

How product problems are reported today

When a possible defect is found in a medicine or other health product, a short written report is sent to regulators. These reports can describe many issues: glass shards in a vial, the wrong ingredient in a pill, packaging that leaks, or labels that could lead to dosing mistakes. In Singapore, the Health Sciences Authority uses a standard medical dictionary, adapted for local needs, to group each report into one of several specific categories, such as contamination with microbes or advertising that breaks the rules. The category assigned to a report helps determine how serious the problem is and how quickly it must be handled. At present, trained officers read every report and assign a label by hand. This work is slow and complex, and its results can be inconsistent, especially as the number of reports grows.

Teaching a computer to read defect reports

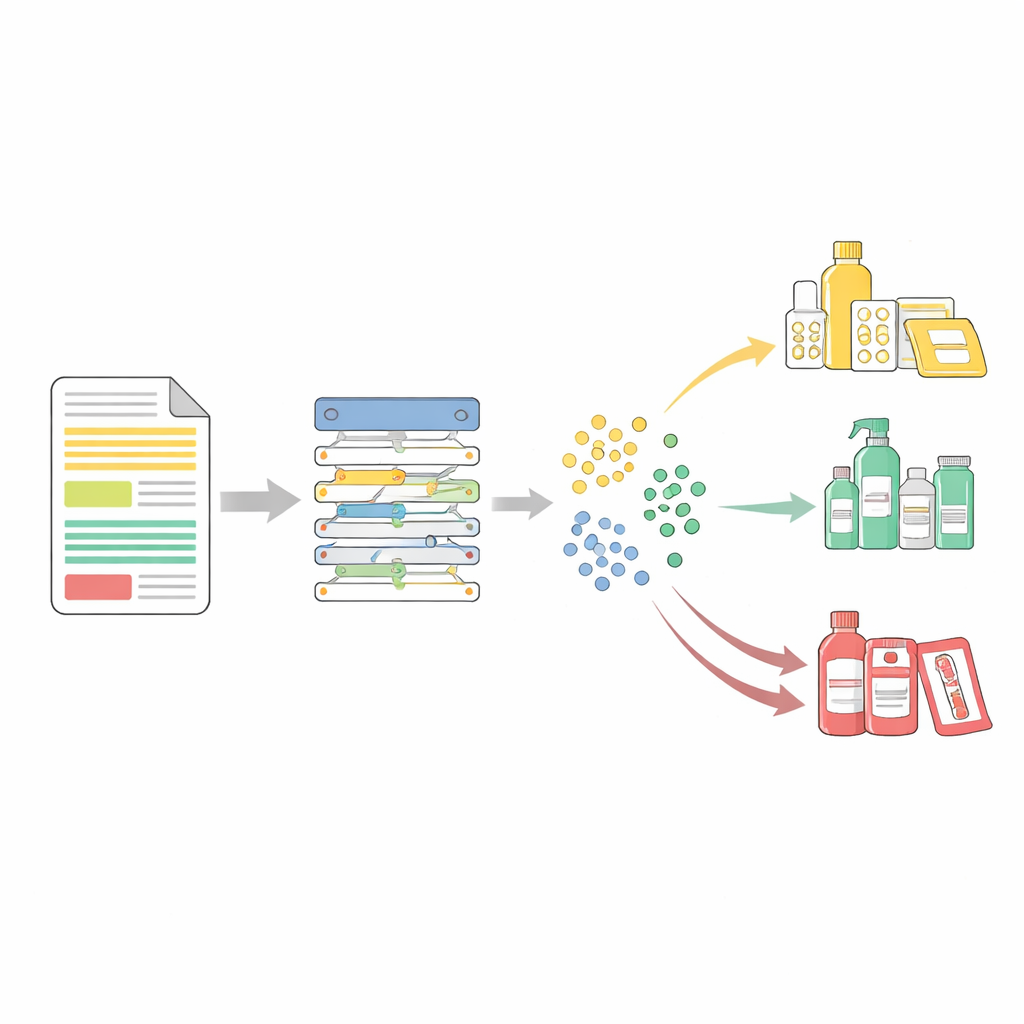

The researchers set out to build an artificial intelligence system that could support these officers rather than replace them. They collected 13,830 defect reports received between 2010 and 2021, covering medicines, vaccines, supplements, and cosmetics. A team of experienced pharmacists carefully reviewed and labeled each report using 21 of the most common defect categories, which together covered more than 99% of all cases. The team then used a popular language model called BERT, which is designed to understand the meaning of words in context, as the core of their system. By fine-tuning BERT on this labeled collection, they created a tool—called MedDefects‑BERT—that could read a report’s title and description and predict the most likely defect category.
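To make the final prediction step concrete, here is a minimal sketch of how a classifier of this kind typically turns one raw score per category into probabilities and picks the most likely label. It is an illustration only, not the authors' code: the category names and scores are invented, and the fine-tuned model that would produce the scores from a report's title and description is not shown.

```python
import math
from typing import Dict, Tuple


def softmax(scores: Dict[str, float]) -> Dict[str, float]:
    """Convert raw per-category scores into probabilities that sum to 1."""
    highest = max(scores.values())  # subtract the max for numerical stability
    exps = {label: math.exp(value - highest) for label, value in scores.items()}
    total = sum(exps.values())
    return {label: value / total for label, value in exps.items()}


def predict(scores: Dict[str, float]) -> Tuple[str, float]:
    """Return the most likely category and its probability."""
    probs = softmax(scores)
    best = max(probs, key=probs.get)
    return best, probs[best]


# Hypothetical raw scores for one report (names and numbers are made up).
example_scores = {
    "microbial contamination": 3.1,
    "product deposits": 1.2,
    "labelling issue": -0.4,
}
label, confidence = predict(example_scores)
print(label, round(confidence, 3))
```

The probability attached to the chosen label is the kind of number that can later serve as a confidence score.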

How well the system performs

When tested on reports it had not seen before, MedDefects‑BERT matched the experts’ top choice 86% of the time. When the system was allowed to suggest its three most likely categories, the list included the correct one 96% of the time. This matters because an officer can review a short list of suggestions rather than start from scratch. The system worked better for categories with more training examples, which is typical for machine learning. Even so, allowing up to three suggested labels raised performance above 70% for every category, including rarer ones. The model’s confidence scores (numbers between 0 and 1 indicating how sure it is) were strongly linked to how often it was right. By setting a confidence cut-off, the team showed they could raise accuracy to about 91% on “certain” predictions while flagging a modest fraction of cases as “uncertain” for closer human review.
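The two ideas in this section, counting a prediction as correct if the true label appears among the top few suggestions and separating "certain" from "uncertain" predictions with a confidence cut-off, can be sketched in a few lines. The example below is a toy illustration under stated assumptions: the four predictions, the category names, and the cut-off of 0.6 are all invented and are not the study's data or settings.

```python
from typing import Dict, List, Tuple

Prediction = Dict[str, float]  # category name -> confidence between 0 and 1


def top_k_labels(probs: Prediction, k: int) -> List[str]:
    """Return the k categories with the highest confidence, best first."""
    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)
    return [label for label, _ in ranked[:k]]


def top_k_accuracy(preds: List[Prediction], truths: List[str], k: int) -> float:
    """Fraction of reports whose true label is among the top k suggestions."""
    hits = sum(truth in top_k_labels(p, k) for p, truth in zip(preds, truths))
    return hits / len(truths)


def split_by_confidence(
    preds: List[Prediction], truths: List[str], cutoff: float
) -> Tuple[float, float]:
    """Return (accuracy on 'certain' cases, fraction flagged 'uncertain')."""
    certain = [(p, t) for p, t in zip(preds, truths) if max(p.values()) >= cutoff]
    uncertain_fraction = (len(preds) - len(certain)) / len(preds)
    if not certain:
        return float("nan"), uncertain_fraction
    correct = sum(top_k_labels(p, 1)[0] == t for p, t in certain)
    return correct / len(certain), uncertain_fraction


# Invented toy predictions for four reports.
toy_preds = [
    {"microbial": 0.90, "labelling": 0.07, "deposits": 0.03},
    {"microbial": 0.20, "labelling": 0.70, "deposits": 0.10},
    {"microbial": 0.45, "labelling": 0.15, "deposits": 0.40},
    {"microbial": 0.50, "labelling": 0.30, "deposits": 0.20},
]
toy_truths = ["microbial", "labelling", "deposits", "microbial"]

print(top_k_accuracy(toy_preds, toy_truths, k=1))
print(top_k_accuracy(toy_preds, toy_truths, k=2))
print(split_by_confidence(toy_preds, toy_truths, cutoff=0.6))
```

Raising the cut-off generally trades coverage for accuracy: fewer predictions count as "certain", but those that do are more often right, and the rest go to a human reviewer.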

Looking inside the model’s decisions

The authors also tackled a key concern with AI in safety-critical fields: transparency. They used visualization tools to show that reports belonging to the same defect type cluster together in the model’s internal “map” of document meanings, while misclassified reports sit at the edges between clusters. At the level of individual words, they applied a method called SHAP to highlight which terms in a report pushed the model toward a given category. For example, words related to fungi or mold strongly influenced predictions of microbial contamination, while terms like “sediment” or “precipitation” supported a category linked to deposits in products. These explanations give officers a quick way to see why the model made a suggestion and to judge whether it makes sense in context.
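SHAP builds on Shapley values, which credit each word with its average contribution to the model's score across all the orders in which words could be added. The sketch below computes exact Shapley values for a three-word toy example. It does not use the SHAP library or the study's model: the scoring function, its weights, and the words are hypothetical stand-ins, and it assumes each word appears only once.

```python
from itertools import permutations
from typing import Callable, Dict, FrozenSet, List


def shapley_values(
    tokens: List[str], score: Callable[[FrozenSet[str]], float]
) -> Dict[str, float]:
    """Exact Shapley values: average each token's marginal contribution
    over every possible ordering of the tokens (assumes unique tokens)."""
    totals = {token: 0.0 for token in tokens}
    orderings = list(permutations(tokens))
    for order in orderings:
        present: FrozenSet[str] = frozenset()
        for token in order:
            with_token = present | {token}
            totals[token] += score(with_token) - score(present)
            present = with_token
    return {token: total / len(orderings) for token, total in totals.items()}


# Hypothetical stand-in for the model's "microbial contamination" score.
TOY_WEIGHTS = {"mold": 0.6, "visible": 0.1, "capsule": 0.05}


def toy_score(present: FrozenSet[str]) -> float:
    """Made-up score: per-word weights plus a bonus when two words co-occur."""
    total = sum(TOY_WEIGHTS[token] for token in present)
    if {"mold", "visible"} <= present:
        total += 0.2  # invented interaction between the two words
    return total


attributions = shapley_values(["visible", "mold", "capsule"], toy_score)
for word, value in sorted(attributions.items(), key=lambda item: -item[1]):
    print(f"{word}: {value:.3f}")
```

In this toy case the interaction bonus is shared equally between "mold" and "visible", and the attributions add up to the full score. Exact enumeration is only practical for a handful of words, which is why tools such as SHAP rely on approximations for real reports.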

Making the system smarter and more efficient

To further improve performance without adding heavy computing costs, the team used a technique known as deep prompt tuning. Instead of changing all of the model’s internal settings, they added small trainable “prefixes” to each layer that gently steer the model toward this specific task. Combining traditional fine‑tuning with these prompts boosted the system’s accuracy in more than half of the defect categories and improved its overall ability to detect cases correctly. Tests on newer reports from 2022 showed that the system’s accuracy held up over time, suggesting that its understanding of defect reports did not quickly go out of date.
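A rough back-of-the-envelope calculation shows why such prefixes count as "small". The sketch below assumes a common form of deep prompt tuning in which each layer receives one trainable key vector and one trainable value vector per prefix position. The figures are assumptions for illustration, not details from the study: the dimensions are those of the standard BERT-base model (12 layers, hidden size 768, roughly 110 million parameters), and the prefix length of 20 is hypothetical.

```python
def prefix_parameter_count(num_layers: int, hidden_size: int, prefix_length: int) -> int:
    """Trainable prefix parameters when every layer gets a key vector and a
    value vector (each of size hidden_size) for each prefix position."""
    keys_and_values = 2
    return num_layers * prefix_length * keys_and_values * hidden_size


# Assumed figures: standard BERT-base dimensions and a hypothetical prefix length.
BERT_BASE_LAYERS = 12
BERT_BASE_HIDDEN = 768
BERT_BASE_TOTAL_PARAMS = 110_000_000  # approximate published size
PREFIX_LENGTH = 20  # hypothetical choice, not taken from the study

prefix_params = prefix_parameter_count(BERT_BASE_LAYERS, BERT_BASE_HIDDEN, PREFIX_LENGTH)
share = prefix_params / BERT_BASE_TOTAL_PARAMS
print(f"prefix parameters: {prefix_params:,}")
print(f"share of full model: {share:.2%}")
```

Under these assumptions the prefixes add well under 1% to the number of values the model has to learn, which is consistent with the idea of steering the model without heavy extra computing cost.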

What this means for patients and regulators

The study shows that a well-designed language model can significantly help regulators sift through large volumes of health product defect reports, standardize how cases are categorized, and highlight high-risk problems more quickly. Because the system also explains which words and passages drove its suggestions, human experts remain firmly in control of final decisions. With further refinement—such as handling multiple defect types in one report and expanding to rarer categories—similar tools could strengthen medicine quality surveillance worldwide, reduce delays in recalling dangerous products, and ultimately offer better protection for patients.

Citation: Sancenon, V., Huang, Y., Zou, L. et al. Classification of health product defect reports by deep learning. Sci Rep 16, 13528 (2026). https://doi.org/10.1038/s41598-026-43961-3

Keywords: drug safety, medicine quality, deep learning, regulatory surveillance, natural language processing