Clear Sky Science

The most important features in generalized additive models might be groups of features

Why groups can matter more than single clues

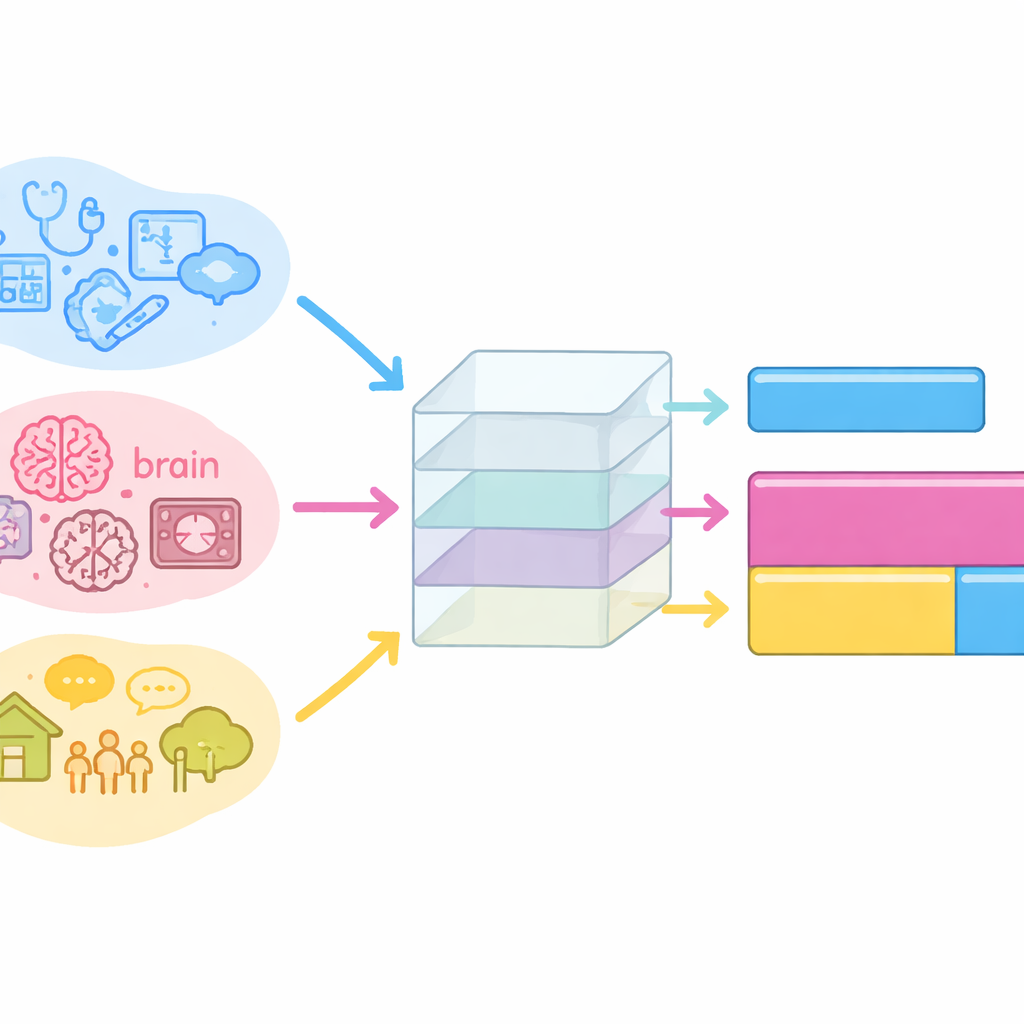

Modern predictive models often sift through hundreds of measurements, from brain scans to neighborhood statistics, to forecast health outcomes. We usually ask which single factor matters most: age, a lab test, or maybe a brain region. This paper argues that this lens is too narrow. In many real medical problems, what really drives predictions is the combined signal from groups of related features, not any one feature alone. The authors propose a fast way to measure how important such groups are in a widely used class of transparent models, and they show that this group perspective uncovers medical insights that would otherwise be missed.

Looking beyond single risk factors

Most interpretability tools today rank individual features by how much they influence a model’s predictions. That works reasonably well when features are independent. But in health data, many variables move together: trauma experiences cluster, brain networks co-activate, and social conditions co-occur. When features are highly correlated, a model often spreads the signal across them, giving each a modest score even if, together, they carry strong predictive power. Focusing only on single factors can therefore hide the true drivers of risk, or even lead to dropping useful measurements during feature selection.
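The signal-splitting effect can be illustrated with a minimal sketch that is not taken from the paper. It assumes a plain least-squares linear model, two identical copies of one feature, and a target equal to twice that feature. NumPy's `np.linalg.lstsq` returns the minimum-norm solution when the columns are redundant, so the weight is shared evenly between the two copies.

```python
import numpy as np

# Toy data (an assumption for illustration): one underlying signal, recorded twice.
rng = np.random.default_rng(0)
x = rng.standard_normal(200)
X = np.column_stack([x, x])  # two perfectly correlated (identical) features
y = 2.0 * x                  # the target depends on the shared signal only

# For redundant columns, lstsq returns the minimum-norm least-squares solution,
# which divides the total weight equally between the two copies.
coef, *_ = np.linalg.lstsq(X, y, rcond=None)
print("per-feature weights:", coef)
print("combined weight:", coef.sum())
```

Each copy ends up with half of the total weight, so a ranking of single features would understate a signal that the pair carries in full.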

A simple way to measure group influence

The authors focus on Generalized Additive Models (GAMs), a transparent family that includes linear models and a popular variant called Explainable Boosting Machines. These models predict outcomes by adding up separate contribution curves, one for each feature and, optionally, for feature interactions. Existing methods for measuring group influence, such as Shapley-based scores or grouped permutation tests, can be accurate but are often computationally heavy because they require many masked versions of the data or repeated model retraining. In contrast, the new method defines a group’s importance as the average magnitude of the combined contribution from all its features (and interactions) across the training data. Thanks to the additive structure of the model, this only requires summing existing component functions, so it is fast, works after the model is trained, and allows groups that overlap or are defined post hoc.

Testing the idea in controlled settings

To understand how group importance behaves, the authors design synthetic experiments where they control both the relationship between features and the target, and the amount of correlation. In one setup, two perfectly correlated features each carry half of an additive signal; as expected, their group importance is roughly the sum of their individual scores. In another, two independent features push the prediction in opposite directions; their group importance falls below the sum of their individual scores, because their effects sometimes cancel. When the same opposing features are made highly correlated, the cancellation becomes stronger and the group importance shrinks dramatically, even though each feature still looks individually influential. These experiments show that the proposed measure naturally reflects how correlated features reinforce or oppose each other when acting together.
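The three scenarios can be imitated with a small simulation. This is a sketch under stated assumptions, not a reproduction of the authors' experiments: no model is fitted, the contribution curves are hand-specified linear functions, the inputs are standard normal, and the correlation of 0.95 is an arbitrary choice. It reports the group importance as a fraction of the sum of the two individual importances, with importance taken as mean absolute contribution.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 100_000
x1 = rng.standard_normal(n)
z = rng.standard_normal(n)  # independent of x1

def mean_abs(v):
    """Average magnitude of a contribution vector."""
    return float(np.abs(v).mean())

def ratio(f1, f2):
    """Group importance divided by the sum of the two individual importances."""
    return mean_abs(f1 + f2) / (mean_abs(f1) + mean_abs(f2))

# Case 1: perfectly correlated features, each carrying half of the signal.
same = ratio(0.5 * x1, 0.5 * x1)

# Case 2: independent features pushing in opposite directions.
independent = ratio(x1, -z)

# Case 3: opposing features that are highly correlated (rho is an assumed value).
rho = 0.95
x2 = rho * x1 + np.sqrt(1 - rho**2) * z
correlated = ratio(x1, -x2)

print(f"reinforcing, perfectly correlated: {same:.3f}")
print(f"opposing, independent:             {independent:.3f}")
print(f"opposing, highly correlated:       {correlated:.3f}")
```

Under these assumptions the ratio is 1 in the first case, close to 1/√2 in the second, and much smaller in the third, which matches the qualitative pattern described above.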

What real data say about mental health and surgery risks

The authors then turn to two medical case studies. In a large adolescent dataset combining brain imaging and behavioral questionnaires, they predict a depressive symptom profile known as negative valence. When they group features into domains such as life and trauma events, personality traits, neuropsychological tests, sleep, and brain networks, the group analysis reveals that life and trauma events and personality traits are the strongest drivers, with the neuropsychological battery also ranking high. Many trauma-related questions are highly correlated and each receives low individual importance, but the trauma group as a whole emerges as the most informative. Brain network measures, previously downplayed because of low single-feature scores, also form a meaningful group.

In a second study of more than 100,000 hip replacement patients, they compare traditional risk factors like age, sex, and comorbidities to a group capturing community-level social determinants of health. The community group, which bundles neighborhood income, social support, digital access, education, and walkability, becomes the single most important predictor of 90-day mortality, outweighing even age and comorbidities.

Why this matters for fair and useful models

By showing that groups of related variables can be more predictive than any one variable alone, this work challenges the habit of reading model explanations as ranked lists of single features. The proposed method makes it practical to quantify how much whole domains—such as trauma history, cognitive function, or neighborhood context—contribute to predictions, even when their components are many and correlated. For clinicians, policymakers, and data scientists, this offers a more holistic and realistic view of what a model has learned, highlighting, for example, that lived experiences and community environment can rival or exceed classic clinical risk factors. In short, group importance provides a clearer window into complex health data, helping avoid misleading interpretations and supporting better, more transparent decision making.

Citation: Bosschieter, T., França, L., Wolk, J. et al. The most important features in generalized additive models might be groups of features. Sci Rep 16, 14371 (2026). https://doi.org/10.1038/s41598-026-43928-4

Keywords: feature importance, interpretable machine learning, generalized additive models, healthcare analytics, social determinants of health