Clear Sky Science

Long short-term attention memory (LSTAM): a global-feature-integrated model for joint moment prediction in human rehabilitation

Smarter Help for Healing Movement

When someone is learning to walk again after an injury or surgery, doctors need to know how hard each joint in the leg is working. Traditionally, that has required bulky lab equipment and careful measurements, which are hard to use in everyday life. This study introduces a new computer model that can estimate those hidden joint forces using lightweight body‑worn sensors, opening the door to more practical, personalized rehabilitation.

Hidden Forces Inside Everyday Steps

Every time we take a step, our hip, knee, and ankle generate turning forces called joint moments. These forces are crucial for understanding whether a movement is safe, efficient, or risky for further injury. But joint moments are hard to measure directly; labs usually rely on motion‑capture cameras, force plates in the floor, and detailed body models. That makes the process expensive and largely confined to research centers, even though clinicians and device designers badly need this information to tune braces, exoskeletons, and prosthetic limbs.

Listening to Muscles Instead of the Floor

To get around this limitation, researchers have turned to signals that can be measured on the skin. Small sensors record electrical activity from muscles (surface EMG) and track how joints bend over time. Earlier machine‑learning methods used these signals to predict joint moments with some success, but they struggled with a key problem: the raw data are full of short‑term jitters, noise from sensor motion, and brief spikes that do not reflect the overall pattern of how someone moves. Models that chase these small fluctuations can miss the bigger, more meaningful trends that describe how a person’s hip is really behaving across many steps.

A New Way to See the Big Picture in Noisy Signals

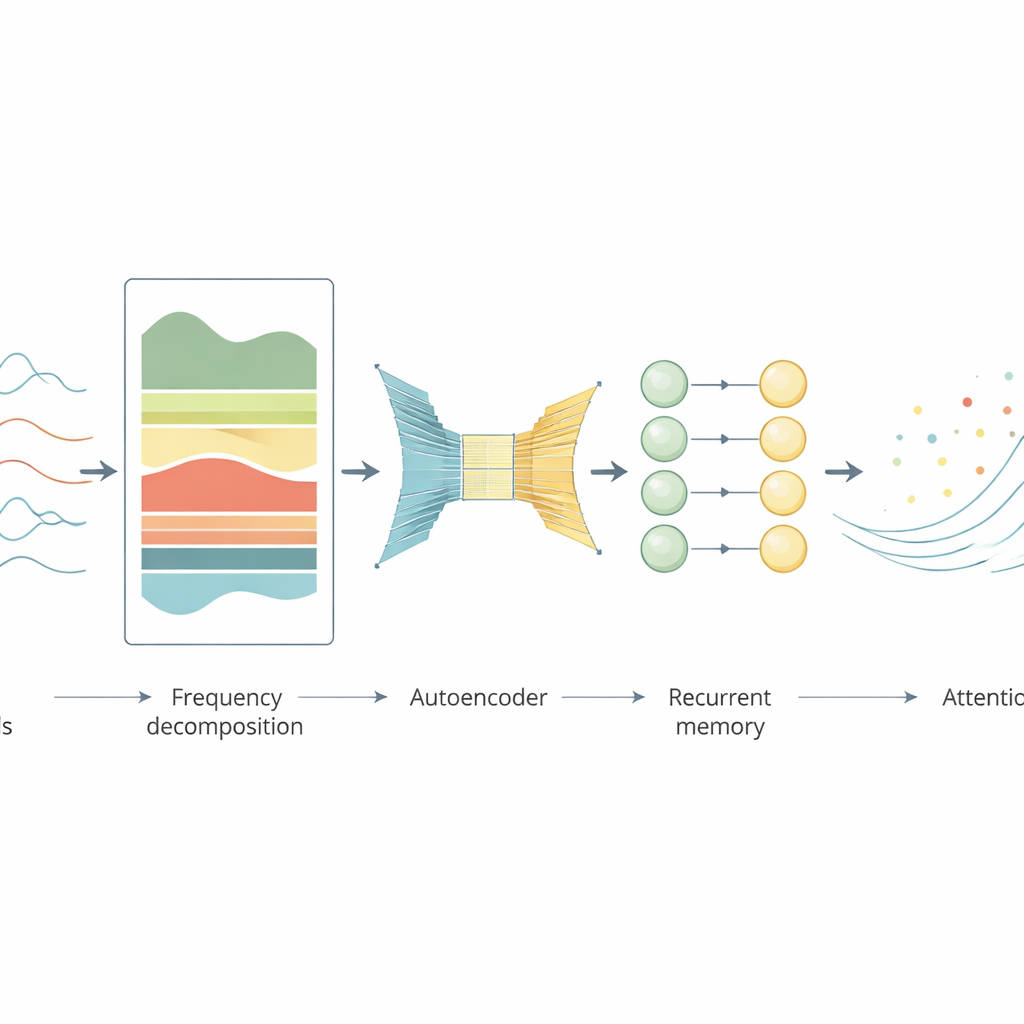

The authors propose a model they call long short‑term attention memory, or LSTAM, designed to focus on the long‑range structure of movement while downplaying distracting details. First, instead of working only with the original time‑based signals, the model converts them into a frequency view, using a mathematical transform that works much as audio can be split into bass and treble. In this form, steady patterns in muscle coordination stand out, while brief bursts of noise become less important. Next, an autoencoder (a kind of smart compressor built from simple neural layers and convolution filters) learns to represent the data in a cleaner, lower‑dimensional form that preserves important patterns and blurs away unnecessary local wiggles.
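To make the frequency view concrete, here is a minimal Python sketch. It is not the authors' implementation: it assumes the transform behaves like a discrete Fourier transform (the article does not name the exact tool), and the toy signal, the injected spike, and the function name `dft_magnitudes` are hypothetical. It illustrates only the general idea that a steady rhythm concentrates in one frequency bin, while a brief spike is spread thinly across all of them.

```python
import cmath
import math


def dft_magnitudes(signal):
    """Return normalized DFT magnitudes for frequency bins 0..N//2."""
    n = len(signal)
    mags = []
    for k in range(n // 2 + 1):
        total = sum(
            x * cmath.exp(-2j * math.pi * k * t / n)
            for t, x in enumerate(signal)
        )
        mags.append(abs(total) / n)
    return mags


# Hypothetical toy "muscle" signal: a steady rhythm with 4 cycles
# over 64 samples, plus one brief spike standing in for sensor noise.
n = 64
signal = [math.sin(2 * math.pi * 4 * t / n) for t in range(n)]
signal[10] += 1.5  # short-lived artifact at a single sample

mags = dft_magnitudes(signal)

# Skip bin 0 (the constant offset) and find the strongest rhythm.
dominant_bin = max(range(1, len(mags)), key=lambda k: mags[k])
largest_other = max(m for k, m in enumerate(mags) if k != dominant_bin)

print("dominant bin:", dominant_bin)
print("dominant magnitude:", round(mags[dominant_bin], 3))
print("largest other magnitude:", round(largest_other, 3))
```

In this toy case the spike is obvious in the time domain but contributes only a small, roughly equal amount to every frequency bin, so the steady rhythm dominates the spectrum.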

Memory and Focus for Moving Bodies

After the signals are cleaned and compressed, the information feeds into a long short‑term memory network, a type of recurrent model that is good at tracking how things evolve over time. On top of this, the authors add an attention mechanism, which allows the model to automatically “look harder” at the most informative moments and frequency components, such as key phases of a step when muscles switch on or off, and to pay less attention to unimportant intervals. Together, these stages transform raw, noisy sensor traces into a compact description of a person’s movement that is well suited to predicting hip joint moments.
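The idea of "looking harder" at some moments can be sketched with a generic dot-product attention step. This is an illustrative assumption, not the specific attention design used in LSTAM: the hidden states, the query vector, and the function names below are hypothetical stand-ins for what a recurrent network might produce at three time steps.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(hidden_states, query):
    """Weight each time step by its similarity to the query, then average."""
    scores = [
        sum(h_d * q_d for h_d, q_d in zip(h, query))
        for h in hidden_states
    ]
    weights = softmax(scores)
    dim = len(query)
    context = [
        sum(w * h[d] for w, h in zip(weights, hidden_states))
        for d in range(dim)
    ]
    return weights, context


# Hypothetical hidden states for three time steps; the middle one
# stands in for an informative phase of the step.
hidden_states = [[0.1, 0.0], [2.0, 1.0], [0.2, -0.1]]
query = [1.0, 1.0]  # hypothetical learned query vector

weights, context = attention_pool(hidden_states, query)
print("attention weights:", [round(w, 3) for w in weights])
print("context vector:", [round(c, 3) for c in context])
```

Because the weights always sum to 1, giving more weight to one time step necessarily means giving less to the others, which is how attention can downplay uninformative intervals.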

Putting the Model to the Test

The team evaluated LSTAM using a public dataset of fourteen healthy volunteers walking on a treadmill, on level ground, and on ramps at many different speeds. For each person, the model was trained on data from just one treadmill session and then asked to predict hip moments in the remaining trials. Across subjects, LSTAM consistently produced predictions that closely tracked laboratory‑computed joint moments, with smaller errors and higher agreement than several advanced alternatives, including standard LSTM networks, temporal convolution models, and recent transformer‑based approaches. The authors also ran “ablation” tests, selectively removing pieces such as the frequency step, the autoencoder, or attention. Each removal degraded performance, showing that all three ingredients were important for capturing the global patterns in these biological signals.
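The article does not name the metrics behind "smaller errors and higher agreement". As an assumption, the sketch below uses two common choices for comparing a predicted curve with a reference curve: root-mean-square error (RMSE) and the Pearson correlation coefficient. The numbers are made-up toy values, not results from the study.

```python
import math


def rmse(pred, ref):
    """Root-mean-square error: typical size of the prediction error."""
    return math.sqrt(
        sum((p - r) ** 2 for p, r in zip(pred, ref)) / len(ref)
    )


def pearson_r(pred, ref):
    """Pearson correlation: how closely the two curves rise and fall together."""
    n = len(ref)
    mean_p = sum(pred) / n
    mean_r = sum(ref) / n
    cov = sum((p - mean_p) * (r - mean_r) for p, r in zip(pred, ref))
    spread_p = math.sqrt(sum((p - mean_p) ** 2 for p in pred))
    spread_r = math.sqrt(sum((r - mean_r) ** 2 for r in ref))
    return cov / (spread_p * spread_r)


# Hypothetical toy curves: a lab-computed hip moment and a model's
# prediction that is off by 0.1 at every sample.
reference = [0.0, 0.5, 1.0, 0.5, 0.0, -0.5]
prediction = [0.1, 0.4, 0.9, 0.6, 0.1, -0.4]

error = rmse(prediction, reference)
agreement = pearson_r(prediction, reference)
print("RMSE:", round(error, 3))
print("Pearson r:", round(agreement, 3))
```

A lower RMSE means the prediction stays closer to the reference in absolute terms, while a correlation near 1 means the two curves share the same shape even if they are slightly offset.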

What This Could Mean for Rehab Care

In plain terms, this work shows that it is possible to estimate invisible forces at the hip using only a handful of muscle and angle sensors, without heavy lab equipment. It also shows that these estimates become more accurate when the model is taught to pay attention to long‑term trends instead of short‑lived noise. Such a tool could help therapists monitor recovery, adjust exercise programs, or control smart rehabilitation devices like exoskeletons and prosthetic limbs so that they move in harmony with a patient’s own muscles. Although the study focused on healthy adults and walking tasks, the same approach could eventually support more complex activities and patient groups, making high‑quality movement assessment more accessible outside specialized motion labs.

Citation: Xiong, B., Guo, Y., Lou, J. et al. Long short-term attention memory (LSTAM): a global-feature-integrated model for joint moment prediction in human rehabilitation. Sci Rep 16, 13835 (2026). https://doi.org/10.1038/s41598-026-42722-6

Keywords: electromyography, gait analysis, rehabilitation technology, deep learning, wearable sensors