Clear Sky Science

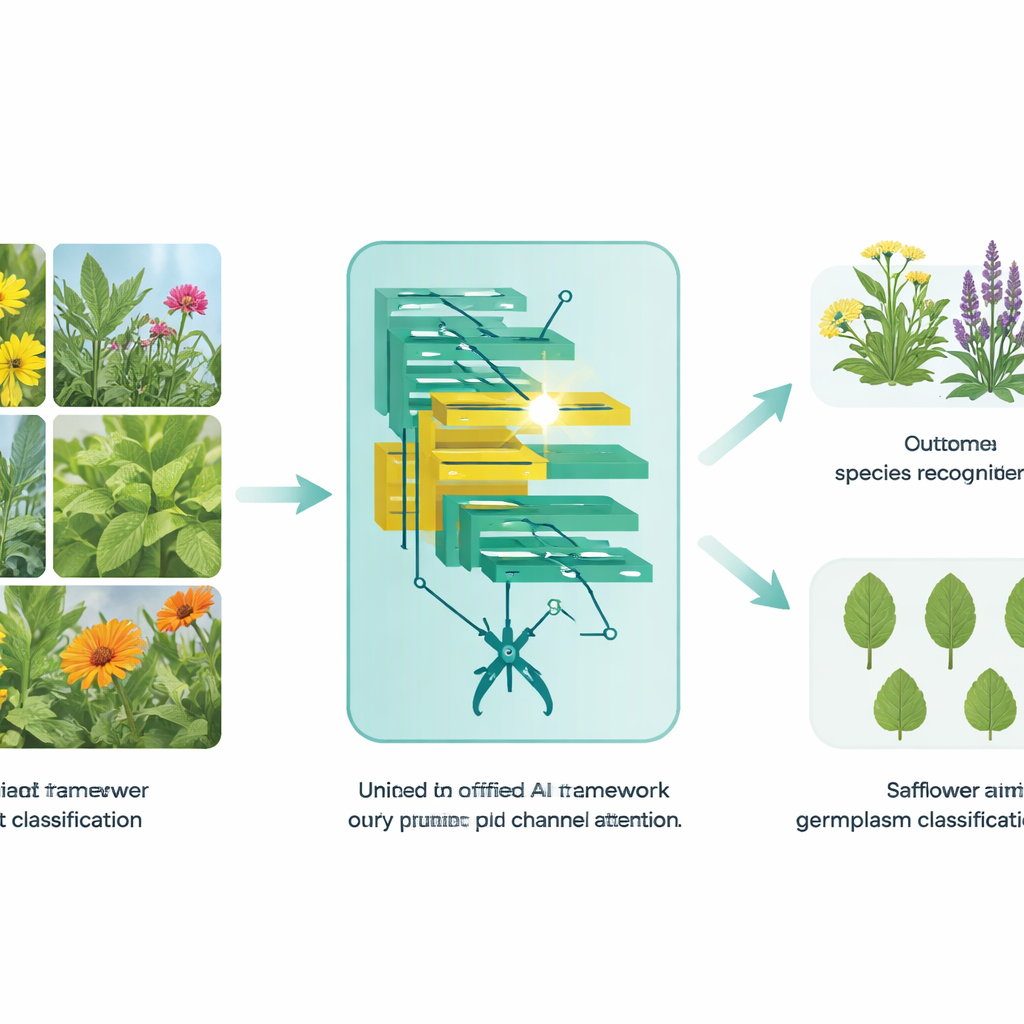

A unified EfficientSwinB-based framework for medicinal plant recognition and safflower germplasm classification

Why smarter plant ID matters

From kitchen herbs to traditional remedies, many plants that support our health look surprisingly alike. Telling them apart by eye requires years of training, and mistakes can affect medicine quality, farming decisions, and efforts to protect biodiversity. This study introduces a new artificial intelligence (AI) framework that can recognize medicinal plants from everyday photos and even distinguish very closely related safflower varieties from their leaves. By making plant identification faster and more reliable, the work points toward practical tools for pharmacists, farmers, and conservationists.

From leaf snapshots to trusted answers

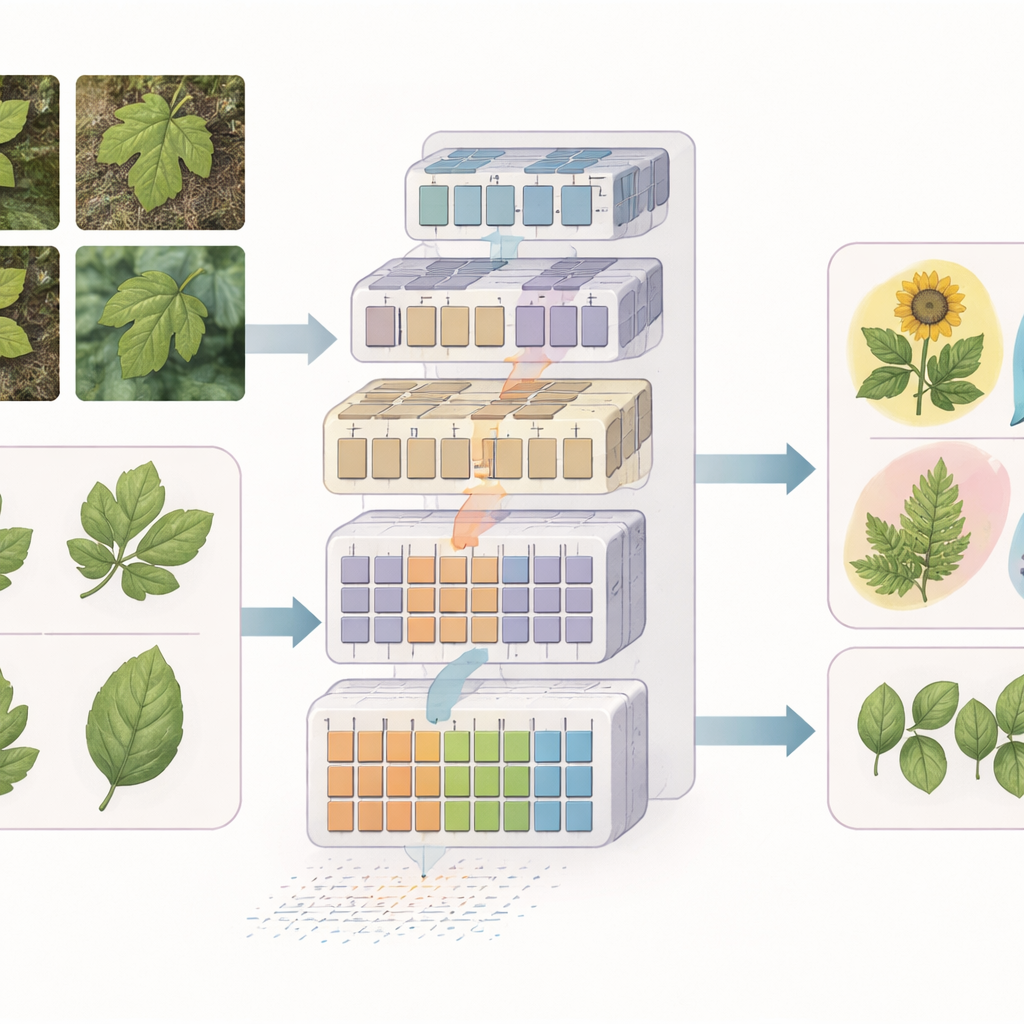

The authors focus on one of the hardest versions of plant recognition: working directly from leaf images. Outdoor photos of medicinal plants are cluttered with soil, shadows, and other plants, while many species share nearly identical shapes and vein patterns. Traditional computer vision methods needed large, carefully curated datasets and still struggled with such variation. Here, the researchers build on a modern family of AI models called vision transformers, which treat an image as a collection of small patches and learn how these pieces relate to one another. Their goal is to design a single framework that can handle both broad species recognition in the wild and fine distinctions among crop varieties in the lab.
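
To make the "collection of small patches" idea concrete, here is a minimal sketch in plain Python. It is an illustration only, not the authors' code: the function name `split_into_patches`, the toy 4x4 image, and the patch size of 2 are all assumptions chosen for readability. Real vision transformers work on color images with far larger patch grids and then pass each patch through a learned projection.

```python
def split_into_patches(image, patch_size):
    """Split a 2-D grid (a list of rows) into non-overlapping square patches.

    Returns the patches in row-major order. Each patch is flattened into a
    single list of pixel values, which plays the role of one "token".
    (Hypothetical helper for illustration; not taken from the paper.)
    """
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image sides must be multiples of patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = [
                image[row][col]
                for row in range(top, top + patch_size)
                for col in range(left, left + patch_size)
            ]
            patches.append(patch)
    return patches


# Toy 4x4 grayscale "image" with pixel values 0..15.
toy_image = [[row * 4 + col for col in range(4)] for row in range(4)]
tokens = split_into_patches(toy_image, patch_size=2)
print(len(tokens))  # 4
print(tokens[0])    # [0, 1, 4, 5]
```

The transformer then learns how these tokens relate to one another, which is what lets it connect, say, a vein pattern in one patch to a leaf edge in another.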

A leaner, sharper eye for medicinal plants

For general medicinal plant recognition, the team proposes a model they call EfficientSwinB-SE. It starts from the Swin Transformer, a popular vision transformer that computes attention within local windows of an image and shifts those windows from layer to layer, capturing both local details and the larger scene. The first improvement is a pruning step: the model automatically removes the least useful connections in its early image-processing layer, slimming down its internal calculations without changing the overall structure. The second improvement is a squeeze-and-excitation block, which teaches the model to weigh some visual channels more heavily than others, much like a botanist paying special attention to vein structure or edge texture while ignoring distracting background pixels.
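
The two ideas can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions, not the authors' implementation. The summary does not say how "least useful" connections are chosen, so the sketch assumes the common magnitude criterion (smallest absolute weights are zeroed). The squeeze-and-excitation part follows the standard recipe of averaging each channel, passing the averages through two small layers, and rescaling the channels. The function names and the hand-picked weights are hypothetical.

```python
import math


def prune_by_magnitude(weights, fraction):
    """Zero out the given fraction of weights with the smallest magnitude.

    Assumption: magnitude is used as the "usefulness" score.
    """
    n_prune = int(len(weights) * fraction)
    # Indices sorted from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


def dense(vector, matrix):
    """Multiply a vector by a matrix given as a list of output rows."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def squeeze_excite(feature_maps, w_reduce, w_expand):
    """Reweight channels: squeeze (average), excite (two layers), scale."""
    # Squeeze: one average value per channel.
    squeezed = [
        sum(sum(row) for row in channel) / (len(channel) * len(channel[0]))
        for channel in feature_maps
    ]
    # Excite: bottleneck layer with ReLU, then expansion with sigmoid gates.
    hidden = [max(0.0, h) for h in dense(squeezed, w_reduce)]
    gates = [1.0 / (1.0 + math.exp(-g)) for g in dense(hidden, w_expand)]
    # Scale: multiply every value in a channel by that channel's gate.
    scaled = [
        [[gate * value for value in row] for row in channel]
        for channel, gate in zip(feature_maps, gates)
    ]
    return scaled, gates


# Pruning demo: the two smallest-magnitude weights become zero.
print(prune_by_magnitude([0.9, -0.05, 0.4, 0.01], fraction=0.5))
# [0.9, 0.0, 0.4, 0.0]

# SE demo: two 2x2 channels with hand-picked (hypothetical) weights.
channels = [[[1.0, 1.0], [1.0, 1.0]], [[0.0, 0.0], [0.0, 0.0]]]
w_reduce = [[1.0, 1.0]]     # 2 channels -> 1 hidden unit
w_expand = [[2.0], [-2.0]]  # 1 hidden unit -> 2 channel gates
scaled, gates = squeeze_excite(channels, w_reduce, w_expand)
print([round(g, 3) for g in gates])  # [0.881, 0.119]
```

In the demo, the first channel receives a gate near 0.88 and the second a gate near 0.12, so the model effectively turns up one channel and turns down the other. In a trained network these gate weights are learned from data rather than set by hand.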

Zooming in on safflower family differences

Recognizing varieties within a single crop is even tougher than telling different species apart, because the visual differences can be extremely subtle. To tackle this, the authors extend their framework into a second model called OLSF-EfficientSwinB-SE, tailored for safflower germplasm, the genetic lines used in breeding. In a laboratory setting, they first enhance leaf images with an "Optimal Leaf Structure Feature" process that highlights vein directions and fine texture. These enhanced images are then fed into the same pruned-and-attentive transformer backbone. This combination helps the AI focus on tiny yet biologically meaningful structural cues that distinguish one safflower line from another, supporting more precise breeding and genetic resource management.

Putting the new framework to the test

The researchers evaluate their models on three public datasets: Indian medicinal plants photographed in gardens, Indonesian medicinal plants collected from diverse online sources, and a safflower dataset containing dozens of varieties imaged under controlled lab conditions. Across the board, EfficientSwinB-SE outperforms well-known deep learning models, including classic convolutional networks and other transformers. It reaches 99.75% accuracy on the Indian dataset and 97.70% on the Indonesian dataset, even under varied lighting and cluttered backgrounds. For the fine-grained safflower task, the OLSF-EfficientSwinB-SE variant achieves 91.63% accuracy, surpassing a previous transformer-based approach designed specifically for this crop.

What this means for real-world plant use

In everyday terms, the study shows that it is possible to build a single, efficient AI "eye" that can both recognize a wide range of medicinal plants in the field and reliably sort nearly identical leaves in the lab. By trimming away unnecessary computations and teaching the model to emphasize the most informative visual cues, the authors create tools that are accurate, robust, and practical enough for near-real-time use. Such systems could underpin smartphone apps for herbal identification, automated cataloging of genetic resources, and intelligent farming platforms that track crops by their leaves. As the same ideas are extended to more species and environments, they may help bridge traditional plant knowledge and modern data-driven agriculture.

Citation: Khuat, P.T., Thien Van, H. A unified EfficientSwinB-based framework for medicinal plant recognition and safflower germplasm classification. Sci Rep 16, 11791 (2026). https://doi.org/10.1038/s41598-026-42449-4

Keywords: medicinal plant recognition, deep learning, vision transformer, safflower germplasm, plant identification