Clear Sky Science

UTR-DynaPro: a CNN–transformer multimodal language model for decoding 5′UTR regulatory mechanisms

How RNA’s Front End Shapes Life and Medicine

The instructions for building proteins in our cells are written in strands of messenger RNA, but not every part of that strand is read out as protein. A stretch at the very beginning, called the 5′ untranslated region, acts more like a control dial than a blueprint. Small changes there can dramatically alter how much protein is made, influencing everything from how well a vaccine works to whether a gene therapy delivers enough of a healing protein. This paper introduces a new artificial intelligence model, UTR-DynaPro, designed to read and interpret that control dial more accurately than previous methods.

The Quiet Control Zone Before the Code

Before the protein-coding part of an mRNA begins, the 5′ untranslated region (5′UTR) helps decide how efficiently protein will be produced. Its sequence and structure affect whether the cell’s protein-making machines, the ribosomes, can latch on, scan along, and start work smoothly. Features such as the length of the region, the balance of A, U, G, and C letters, and the presence of extra start codons (AUGs) upstream of the main one can either speed things up or slow them down. These effects matter in real-world settings: in mRNA vaccines, for example, a well-tuned 5′UTR can mean stronger immunity with smaller doses; in genetic diseases, a disruptive change there can sharply cut protein output even when the main gene code is intact.
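To make these sequence features concrete, here is a minimal Python sketch that computes a few of them for a single 5′UTR. The helpers `utr_features` and `count_closed_uorfs` are hypothetical and are not UTR-DynaPro's own preprocessing. They count the region's length, its base composition, every upstream AUG start codon, and the AUGs that reach an in-frame stop codon inside the UTR. That last count uses a simple, assumed definition of a "closed" upstream open reading frame.

```python
# Hypothetical helpers, not UTR-DynaPro's preprocessing: they compute simple
# 5'UTR features (length, base composition, upstream AUGs, closed uORFs).

STOP_CODONS = {"UAA", "UAG", "UGA"}


def count_closed_uorfs(seq: str, uaug_positions: list[int]) -> int:
    """Count upstream AUGs that reach an in-frame stop codon inside the UTR.

    Assumption: a 'closed' uORF starts at a uAUG and ends at the first in-frame
    stop before the UTR ends; ORFs running into the coding region are ignored.
    """
    count = 0
    for start in uaug_positions:
        # Step codon by codon in the same reading frame as this AUG.
        for j in range(start + 3, len(seq) - 2, 3):
            if seq[j:j + 3] in STOP_CODONS:
                count += 1
                break
    return count


def utr_features(utr: str) -> dict:
    """Return simple sequence features for one 5'UTR (DNA or RNA letters)."""
    seq = utr.upper().replace("T", "U")  # accept DNA input by mapping T -> U
    n = len(seq)
    composition = {base: seq.count(base) for base in "AUGC"}
    gc_fraction = (composition["G"] + composition["C"]) / n if n else 0.0
    # Every AUG start codon in the UTR, in any reading frame.
    uaug_positions = [i for i in range(n - 2) if seq[i:i + 3] == "AUG"]
    return {
        "length": n,
        "composition": composition,
        "gc_fraction": gc_fraction,
        "uaug_positions": uaug_positions,
        "n_uaug": len(uaug_positions),
        "n_closed_uorf": count_closed_uorfs(seq, uaug_positions),
    }


print(utr_features("GGAUGCCCUAAGCAUGCC"))
```

For this toy sequence, the sketch finds two upstream AUGs, at positions 2 and 13. Only the first one reaches an in-frame UAA stop codon before the UTR ends.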

Why Old Prediction Tools Fall Short

Researchers have turned to deep learning to predict how a given 5′UTR will behave, hoping to design sequences that produce just the right amount of protein. Earlier models, however, tend to focus either on very short patterns or on broad, long-range relationships, but not both at once. Some struggle to adapt when experimental conditions change from one cell type or lab protocol to another, and many ignore important side information such as RNA folding energy or the length of the protein-coding region. As a result, their accuracy has plateaued, limiting our ability to systematically design 5′UTRs for vaccines, gene therapies, and industrial protein production.

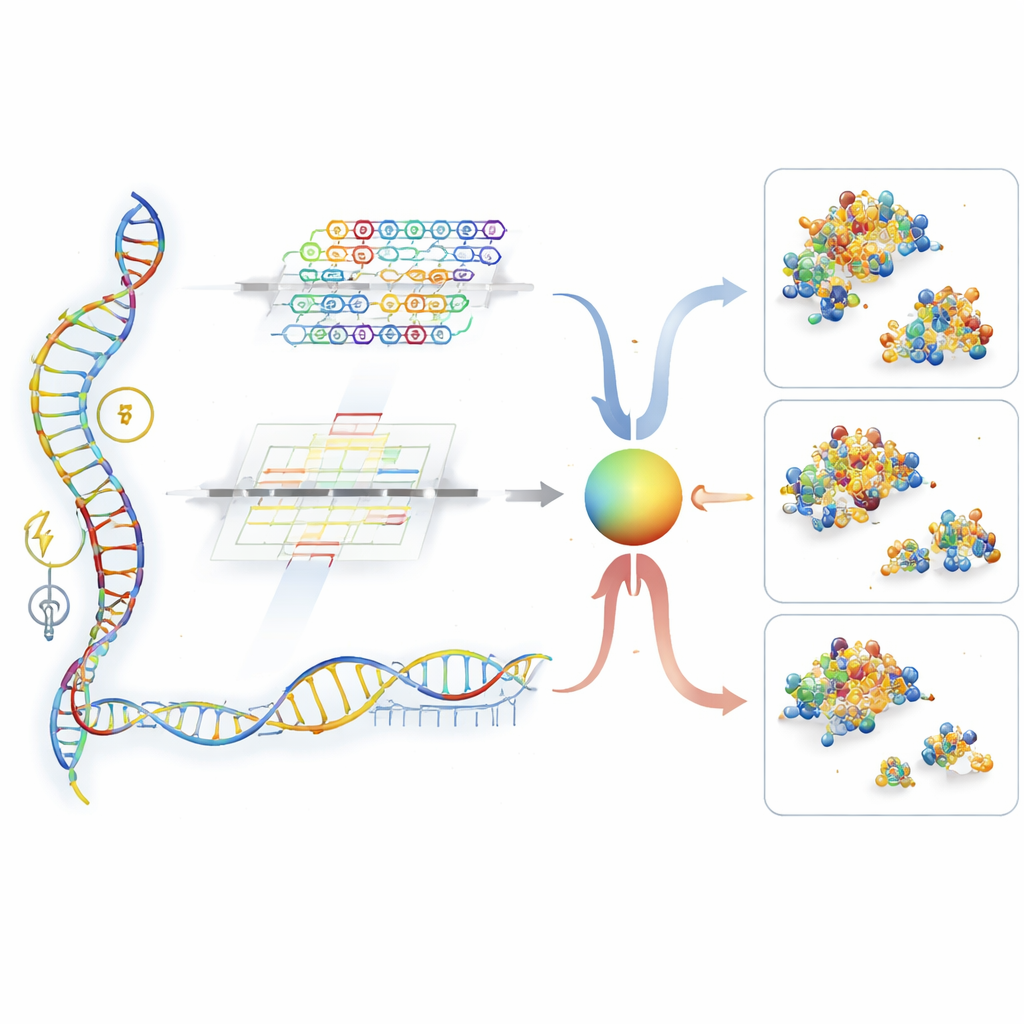

A Two-Pathway Reader for RNA Signals

UTR-DynaPro tackles these gaps by combining two complementary ways of reading the 5′UTR. One pathway, based on convolutional networks, is tuned to spot short, local patterns, akin to recurring “words” in the RNA that act as on–off switches. The other pathway, built from transformer layers, excels at picking up long-distance interactions, such as how distant parts of the strand fold together or coordinate with the coding region that follows. A dynamic “gate” then decides, position by position along the RNA, how much weight to give to local versus global information. On top of this, the model folds in extra signals. These include how stably the RNA is predicted to fold (its folding energy), how long the protein-coding segment is, and whether certain short upstream open reading frames (uORFs) are present. Together, these ingredients allow UTR-DynaPro to construct a rich portrait of how a 5′UTR is likely to govern protein production.
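A common way to build a position-by-position gate like this is to compute a sigmoid gate from the concatenated local and global features. That gate then mixes the two feature sets for each position. The NumPy sketch below assumes that form. The paper's exact gate formula is not given here, and the names `gated_fusion`, `W`, and `b` are hypothetical stand-ins for learned parameters.

```python
import numpy as np


def sigmoid(x: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-x))


def gated_fusion(h_local, h_global, W, b):
    """Mix local (CNN-style) and global (transformer-style) features per position.

    Assumed shapes: h_local and h_global are (L, d), one vector per nucleotide
    position; W is (2d, d) and b is (d,), standing in for learned parameters.
    Returns the fused features (L, d) and the gate values g in (0, 1).
    """
    concat = np.concatenate([h_local, h_global], axis=-1)  # (L, 2d)
    g = sigmoid(concat @ W + b)                             # (L, d)
    # g near 1 favors the local pathway; g near 0 favors the global one.
    fused = g * h_local + (1.0 - g) * h_global
    return fused, g


rng = np.random.default_rng(0)
L, d = 6, 4                                  # toy sequence length and width
h_local = rng.normal(size=(L, d))
h_global = rng.normal(size=(L, d))
W = rng.normal(scale=0.1, size=(2 * d, d))   # random stand-ins, not trained
b = np.zeros(d)

fused, g = gated_fusion(h_local, h_global, W, b)
print(fused.shape)            # (6, 4)
print(g.mean(axis=-1))        # one local-vs-global weight per position
```

Averaging `g` over the feature axis, as in the last line, gives one number per position. This is the kind of profile that shows where a model leans on local versus global cues. Because the gate is computed from the features themselves, it can shift along the sequence rather than applying a single fixed mixing weight.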

Putting the Model to the Test

The authors trained and evaluated UTR-DynaPro on large, diverse datasets: synthetic and natural 5′UTRs from humans and other species, and measurements from multiple human cell types and tissues. They focused on three related outcomes: mean ribosome load (how many ribosomes pile onto an mRNA on average), translation efficiency (how much protein is made per RNA molecule), and overall expression level. Across all of these tasks, the new model consistently outperformed several leading approaches, sometimes cutting prediction errors by nearly ten percent. Careful “ablation” tests—removing or simplifying parts of the architecture—showed that each major component, from the dual-pathway design to the mixture-of-experts submodules and the experimental-condition inputs, measurably improved performance. Visualization of the fusion gate further revealed that the model shifts its reliance between local and global cues along the sequence and across cell types, echoing the complex biological logic scientists expect in this region.
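Mean ribosome load is commonly derived from polysome profiling. Each mRNA's reads are spread across fractions that carry different numbers of ribosomes, and the load is the read-weighted average ribosome number. Here is a minimal sketch of that calculation, assuming per-fraction read counts are already available. The function name and the toy counts are hypothetical and are not data from the paper.

```python
def mean_ribosome_load(read_counts, ribosomes_per_fraction):
    """Read-weighted average number of ribosomes on one mRNA.

    read_counts[i] is how many reads of this sequence fell in polysome
    fraction i; ribosomes_per_fraction[i] is the ribosome number that
    fraction represents (for example 0 for free mRNA, 1 for monosomes).
    """
    if len(read_counts) != len(ribosomes_per_fraction):
        raise ValueError("need one read count per fraction")
    total = sum(read_counts)
    if total == 0:
        raise ValueError("sequence has no reads in any fraction")
    weighted = sum(c * r for c, r in zip(read_counts, ribosomes_per_fraction))
    return weighted / total


# Toy, made-up counts for one 5'UTR variant across four fractions.
print(mean_ribosome_load([10, 20, 30, 40], [1, 2, 3, 4]))  # 3.0
```

A model that predicts mean ribosome load is, in effect, learning to predict this weighted average directly from the 5′UTR sequence and its side information.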

From Better Predictions to Better Designs

For non-specialists, the key message is that this work offers a more powerful and flexible way to read the subtle control instructions at the front of an mRNA. By more accurately predicting how a change in the 5′UTR will alter protein output, UTR-DynaPro can guide the design of synthetic sequences that boost or tune production for specific needs—stronger vaccines, safer gene therapies, or better industrial enzymes. At the same time, its interpretable architecture helps researchers uncover both known and previously hidden regulatory patterns. In practical terms, this model moves us closer to treating the 5′UTR as a programmable control knob for gene expression that can be turned with confidence rather than trial and error.

Citation: Shen, H., Liu, S., Guo, F. et al. UTR-DynaPro: a CNN–transformer multimodal language model for decoding 5′UTR regulatory mechanisms. Sci Rep 16, 10779 (2026). https://doi.org/10.1038/s41598-026-42175-x

Keywords: 5′UTR regulation, mRNA translation, deep learning for biology, gene expression control, mRNA vaccine design