Clear Sky Science

Estimation of liquefaction-induced settlement of shallow foundation by machine learning with imbalanced data

Why Shaking Ground Matters for Everyday Buildings

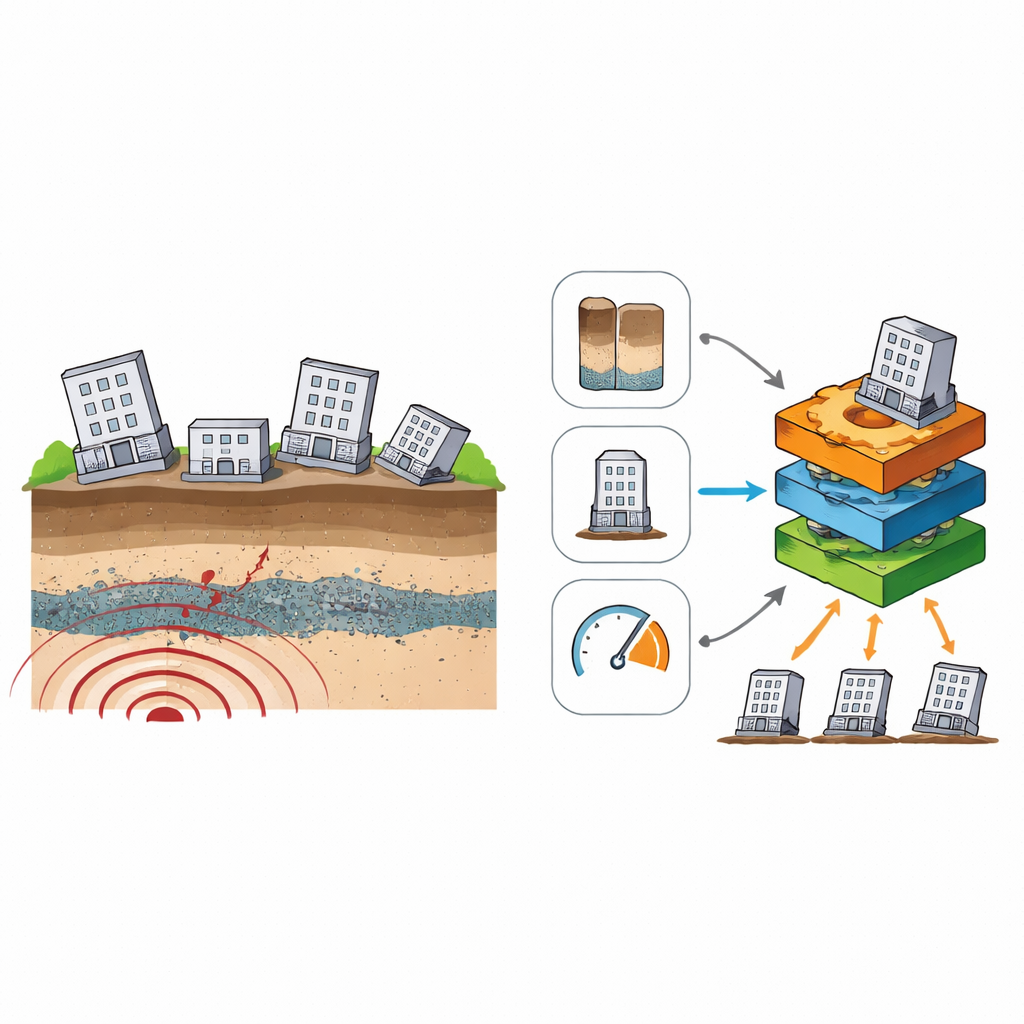

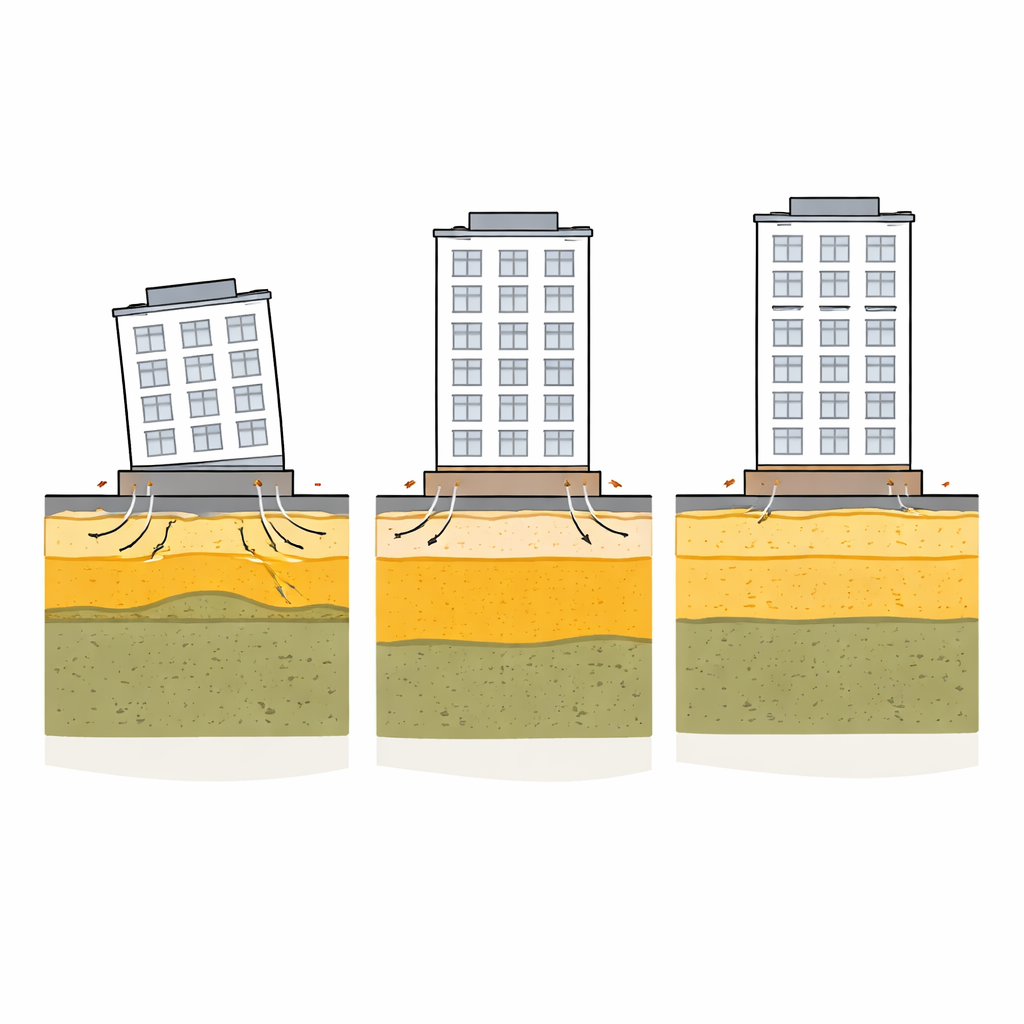

When a strong earthquake strikes, solid-looking ground can briefly behave like a liquid, causing buildings to sink, tilt, or crack. This phenomenon, called liquefaction, has damaged homes and infrastructure in many recent earthquakes, from Turkey to Japan. Engineers know that even a few tens of centimeters of uneven settlement can make a building unsafe or unusable. Yet predicting how much a given building will sink, based on limited site data, remains difficult. This study explores how modern machine-learning tools can turn scattered case histories from real earthquakes into a practical way to anticipate liquefaction-related damage and guide safer designs, insurance decisions, and post-disaster inspections.

From Scattered Case Reports to a Global Earthquake Dataset

The researchers began by assembling a worldwide database of 206 buildings that experienced liquefaction-related ground failure during past earthquakes. For each case, they gathered simple information that is often available in practice: the intensity of shaking at the site, the total height and width of the building, the pressure the building exerts on its foundation, and key properties of the underlying soil layers—especially how deep the first liquefiable layer sits beneath the surface and how thick it is. Rather than trying to calculate an exact settlement in centimeters, they grouped outcomes into four damage levels, from no visible settlement to extensive sinking and tilting. This "ground failure index" turns messy real-world measurements into clear categories that can be used to train and test machine-learning models.
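As a rough illustration of how one such case and its four-level label could be represented, here is a minimal Python sketch. The field names, the units, the class names, and above all the cut points are assumptions made for this example; the summary above does not state the thresholds the authors used.

```python
from bisect import bisect_right
from dataclasses import dataclass
from enum import IntEnum


class GroundFailureIndex(IntEnum):
    # Four ordered damage levels; the names are illustrative labels.
    NONE = 0
    MINOR = 1
    MODERATE = 2
    EXTENSIVE = 3


@dataclass
class BuildingCase:
    # Field names and units are assumed for this sketch.
    shaking_intensity: float      # intensity of shaking at the site
    height_m: float               # total building height
    width_m: float                # building width
    bearing_pressure_kpa: float   # pressure exerted on the foundation
    depth_to_liq_layer_m: float   # depth to the first liquefiable layer
    liq_layer_thickness_m: float  # thickness of that layer


def to_index(settlement_cm, cut_points_cm):
    """Map a settlement to one of four classes using three ascending cut points."""
    if len(cut_points_cm) != 3 or list(cut_points_cm) != sorted(cut_points_cm):
        raise ValueError("expected three ascending cut points")
    # bisect_right counts how many cut points the settlement has reached.
    return GroundFailureIndex(bisect_right(cut_points_cm, settlement_cm))


# HYPOTHETICAL cut points, chosen only to make the example run.
HYPOTHETICAL_CUTS_CM = (1.0, 10.0, 30.0)
print(to_index(0.0, HYPOTHETICAL_CUTS_CM).name)
print(to_index(50.0, HYPOTHETICAL_CUTS_CM).name)
```

Passing the cut points as an argument keeps the labelling rule separate from any particular choice of thresholds, which would have to come from the original paper.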

Teaching Computers to Spot Dangerous Patterns in Uneven Data

Real earthquake records are far from tidy: some types of damage are common while others are rare, and the input variables can contain outliers and noise. In this dataset, severe damage cases were much more frequent than moderate ones, creating a strong imbalance that can mislead many algorithms into overlooking the rarest class. To confront this, the authors systematically combined several strategies. They cleaned the data with outlier-filtering methods, then explored two complementary approaches to handle imbalance: artificially copying minority cases (random oversampling) and telling the algorithms to treat mistakes on rare classes as more costly (cost-sensitive learning). To avoid overly optimistic results, all these steps were wrapped into a strict "leakage-free" workflow, in which the test data remained completely unseen during model training, tuning, and resampling.
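The order of operations matters here, so a small sketch may help. The Python below uses only the standard library and is not the authors' pipeline: it splits the data first, then applies random oversampling to the training indices only, and also computes class weights as a cost-sensitive alternative. The class counts are made up, and the weighting formula is the common "balanced" heuristic (the same one scikit-learn uses for `class_weight="balanced"`), which is an assumption rather than something the article specifies.

```python
import random
from collections import Counter


def stratified_split(labels, test_fraction, rng):
    """Return (train_idx, test_idx), holding out a share of every class."""
    by_class = {}
    for i, y in enumerate(labels):
        by_class.setdefault(y, []).append(i)
    train_idx, test_idx = [], []
    for idx in by_class.values():
        rng.shuffle(idx)
        n_test = max(1, round(len(idx) * test_fraction))
        test_idx += idx[:n_test]
        train_idx += idx[n_test:]
    return train_idx, test_idx


def random_oversample(train_idx, labels, rng):
    """Duplicate minority-class training rows until every class matches the largest."""
    by_class = {}
    for i in train_idx:
        by_class.setdefault(labels[i], []).append(i)
    target = max(len(idx) for idx in by_class.values())
    out = []
    for idx in by_class.values():
        out += idx + [rng.choice(idx) for _ in range(target - len(idx))]
    return out


def balanced_class_weights(train_idx, labels):
    """Cost-sensitive alternative: weight = n_samples / (n_classes * class_count)."""
    counts = Counter(labels[i] for i in train_idx)
    n = len(train_idx)
    return {c: n / (len(counts) * k) for c, k in counts.items()}


rng = random.Random(0)
labels = [3] * 12 + [0] * 8 + [1] * 6 + [2] * 4   # made-up class counts
train_idx, test_idx = stratified_split(labels, 0.25, rng)   # split FIRST
resampled = random_oversample(train_idx, labels, rng)       # train rows only
weights = balanced_class_weights(train_idx, labels)
```

Because the duplicates are drawn only from `train_idx`, no copy of a held-out building can end up in the training set. The same rule would apply inside each cross-validation fold during tuning.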

Which Factors and Models Best Capture Settlement Risk?

Several widely used models were put to the test, including random forests, gradient-boosting methods, an artificial neural network, and an ensemble that averages the predictions of multiple models. Because overall accuracy can conceal poor performance on rare but important damage levels, the team emphasized recall, the share of truly severe cases that the model correctly flags, as a key metric. Gradient boosting and an ensemble of tree-based models emerged as the most reliable options, particularly when combined with random oversampling, careful outlier treatment, and fine-tuned decision thresholds. To understand what the models were "looking at," the authors used SHAP, a tool that breaks down each prediction into contributions from individual features. This analysis showed that the depth to the first liquefiable layer was the single most influential factor, followed by shaking intensity and basic foundation geometry such as building width. In simple terms, shallow, easily liquefied layers beneath narrow foundations were consistently associated with the most serious damage.
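To make the difference between accuracy and recall concrete, the following Python sketch averages the class probabilities of two hypothetical models (a simple form of ensembling) and then scores the result both ways. All probabilities and labels are invented for illustration, and only two classes are used to keep the arithmetic easy to follow; threshold tuning and SHAP are not shown.

```python
def average_probabilities(model_outputs):
    """Soft voting: average each class probability across models."""
    n_models = len(model_outputs)
    n_samples = len(model_outputs[0])
    n_classes = len(model_outputs[0][0])
    return [
        [sum(m[i][c] for m in model_outputs) / n_models for c in range(n_classes)]
        for i in range(n_samples)
    ]


def predict(probabilities):
    """Pick the class with the highest averaged probability."""
    return [max(range(len(p)), key=p.__getitem__) for p in probabilities]


def accuracy(y_true, y_pred):
    """Fraction of all cases predicted correctly."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def recall_per_class(y_true, y_pred):
    """For each class c: correctly predicted c divided by all true c."""
    result = {}
    for c in sorted(set(y_true)):
        hits = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        result[c] = hits / sum(1 for t in y_true if t == c)
    return result


# Invented outputs: class 0 is common, class 1 is rare.
model_a = [[0.9, 0.1], [0.8, 0.2], [0.7, 0.3], [0.6, 0.4], [0.7, 0.3], [0.2, 0.8]]
model_b = [[0.7, 0.3], [0.9, 0.1], [0.9, 0.1], [0.8, 0.2], [0.5, 0.5], [0.4, 0.6]]
y_true = [0, 0, 0, 0, 1, 1]

y_pred = predict(average_probabilities([model_a, model_b]))
print(accuracy(y_true, y_pred))          # 5 of 6 correct
print(recall_per_class(y_true, y_pred))  # class 1 recall is only 0.5
```

In this toy case the ensemble gets five of six cases right, yet it finds only one of the two rare-class cases, which is the kind of gap that overall accuracy hides.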

Testing the Approach on Real Earthquake Case Studies

To check whether their methods would work outside the training sample, the researchers applied the final models to four detailed case studies from well-known earthquakes: the 1989 Loma Prieta event in California, the 1999 Kocaeli earthquake in Turkey, the 2016 Kumamoto sequence in Japan, and the 2023 Kahramanmaraş earthquake in Turkey. In these examples, the only inputs were simple estimates of shaking intensity, building dimensions and loads, and the depth and thickness of liquefiable layers from field tests. The models generally matched the observed severity of settlement, correctly identifying both minor and extensive damage levels. In one case, the model erred on the safe side by predicting a higher damage class than reported. The authors argue that in high-stakes settings like earthquake resilience, such conservative predictions are preferable to missing severely damaged buildings.

A Data-Driven Aid for Safer Cities on Soft Ground

This work does not replace traditional physics-based formulas for settlement, which remain essential when detailed soil test results are available. Instead, it offers a complementary tool: a transparent, data-driven way to sort buildings into broad risk categories using a small set of routinely collected parameters. By carefully handling imbalanced data, avoiding hidden biases in evaluation, and revealing which soil and building features matter most, the proposed machine-learning framework provides engineers, planners, and insurers with a practical early-warning aid. In regions built on soft, water-saturated ground, it can help prioritize which buildings are most likely to suffer dangerous sinking and tilting when the next major earthquake hits.

Citation: Sargin, S., Korkmaz, G., Yildirim, A.K. et al. Estimation of liquefaction-induced settlement of shallow foundation by machine learning with imbalanced data. Sci Rep 16, 11198 (2026). https://doi.org/10.1038/s41598-026-41969-3

Keywords: soil liquefaction, earthquake engineering, machine learning, building settlement, seismic risk