Clear Sky Science · en

Integrating multi-scale convolution and attention mechanisms in HybridHAR for high-performance human activity recognition

Why teaching computers daily movements matters

Every day, our phones, watches, and other gadgets quietly record how we move—whether we are walking, climbing stairs, or resting on the couch. Turning these raw motion signals into a reliable understanding of human activity could transform health monitoring, elder care, rehabilitation, and smart homes. This paper introduces HybridHAR, a new computer model designed to read those signals more accurately and efficiently, bringing us closer to wearables that can truly understand what we are doing in real time.

Understanding activity from motion sensors

Human activity recognition is the task of figuring out what a person is doing based on sensors such as accelerometers and gyroscopes inside smartphones and wearables. Earlier systems depended on experts hand‑crafting features from these signals and then feeding them into traditional machine‑learning algorithms. That approach worked in controlled laboratory settings but often fell apart in the messier real world, where movements are more varied and noisy. Deep learning has improved things by automatically discovering patterns in the data, yet common designs still miss important details that unfold over different time spans and can lose information as the networks grow deeper.

Why existing deep models still struggle

Human movements happen on many time scales at once: a quick step, a short walk across the room, or a long period of sitting. Many deep‑learning models focus either on short fragments or on longer ranges, but do not handle both equally well. As networks add more layers to capture complex patterns, the learning signals that flow backward during training can fade (the vanishing‑gradient problem), so early layers stop improving. Some models also lack guidance for their internal layers, so they do not learn the most useful mid‑level building blocks for recognizing activities that look similar in the raw signals, such as sitting versus standing.

A hybrid design that looks at motion in several ways

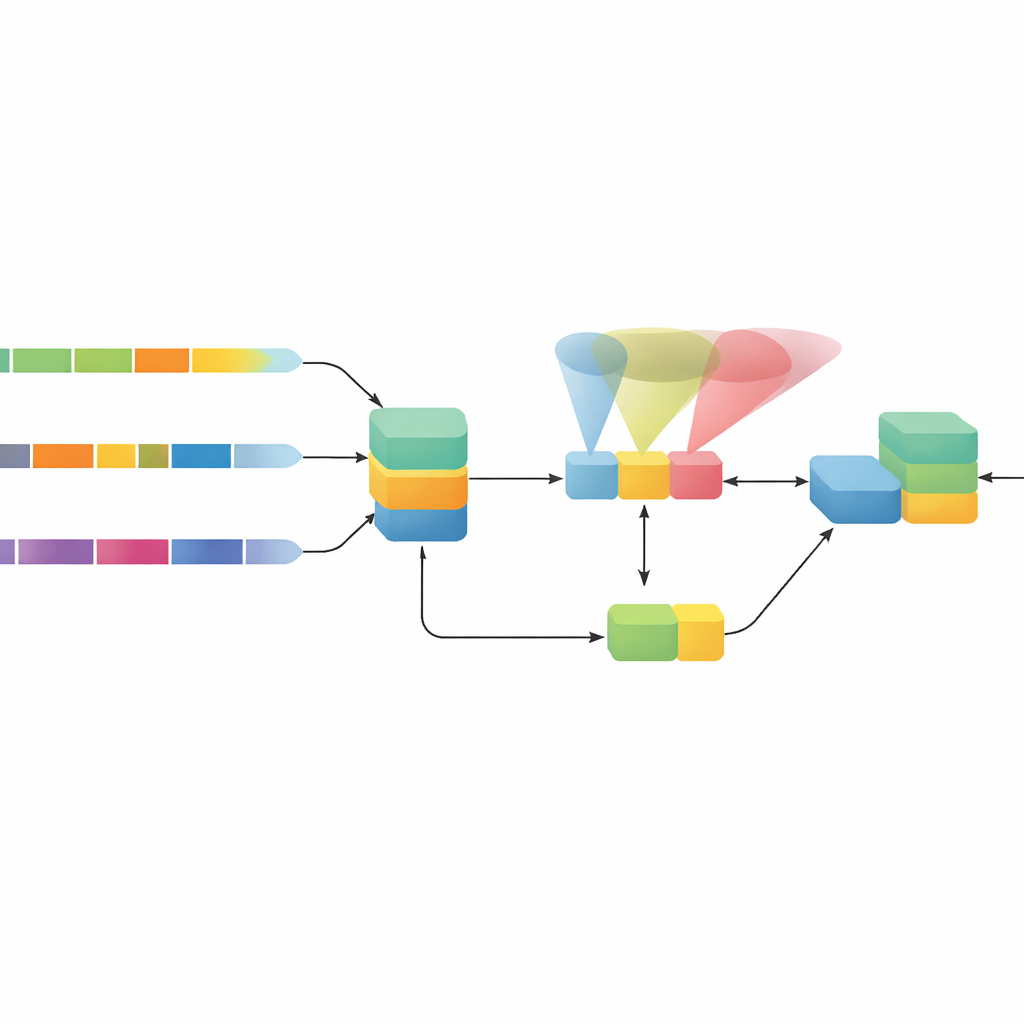

The authors propose HybridHAR, a carefully engineered model that tackles these weaknesses with three main ideas working together. First, instead of using a single view of time, it passes the same sensor signal through three parallel processing paths that each look at different time spans—from very short to somewhat longer segments. These paths act like three sets of lenses, capturing fine details of quick gestures as well as slower trends in posture and movement. Their outputs are then blended into a rich, combined representation that preserves information from all these scales.
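The idea of parallel paths can be sketched in a few lines of plain Python. This is only an illustration, not the authors' implementation: the kernel sizes (3, 5, 7), the averaging filters standing in for learned weights, and the function names `conv1d_same` and `multi_scale_features` are all assumptions made for the example.

```python
def conv1d_same(signal, kernel):
    """1-D sliding dot product with zero padding, so output length equals input length.

    Assumes an odd kernel length.
    """
    k = len(kernel)
    pad = k // 2
    padded = [0.0] * pad + list(signal) + [0.0] * pad
    return [
        sum(padded[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal))
    ]


def multi_scale_features(signal, kernel_sizes=(3, 5, 7)):
    """Pass one signal through parallel branches and stack the results per time step."""
    branches = []
    for k in kernel_sizes:
        # Averaging filter as a stand-in for weights a real model would learn.
        kernel = [1.0 / k] * k
        branches.append(conv1d_same(signal, kernel))
    # One row per time step, one column per branch (the "blended" representation).
    return [list(step) for step in zip(*branches)]


# A single spike at position 2 in an otherwise flat signal.
signal = [0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0]
features = multi_scale_features(signal)
```

At the first time step, the narrowest branch does not yet see the spike while the two wider branches do, which is the sense in which each path looks at a different time span.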

Paying attention and guiding learning deep inside the model

Second, HybridHAR adds a special attention module on top of this blended representation. This mechanism learns to highlight the most telling parts of the signal—for example, the slight differences in motion that separate walking upstairs from walking downstairs—while still keeping a shortcut path that preserves the original information. This "residual" shortcut helps learning signals flow smoothly through the network, preventing information from being washed out in deeper layers. Third, the model is given an extra helper classifier that taps into intermediate features before attention is applied. During training, this auxiliary output is also graded, gently forcing earlier layers to learn features that are already good enough to make activity guesses, which stabilizes and speeds up learning.
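A minimal sketch of these two ideas follows, again in plain Python. The summary does not specify which form of attention HybridHAR uses, so the softmax weighting over time steps shown here is an assumption, as are the names `residual_attention` and `total_loss` and the auxiliary weight of 0.3.

```python
import math


def softmax(scores):
    """Turn arbitrary scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def residual_attention(features, score_weights):
    """Reweight each time step by an attention weight, then add the input back.

    features: list of time steps, each a list of channel values.
    score_weights: one hypothetical scoring weight per channel.
    """
    scores = [sum(w * x for w, x in zip(score_weights, step)) for step in features]
    attn = softmax(scores)
    # Residual shortcut: output = input + attention-weighted input.
    output = [[x + a * x for x in step] for a, step in zip(attn, features)]
    return output, attn


def cross_entropy(probs, label):
    """Penalty for the probability assigned to the correct class."""
    return -math.log(probs[label])


def total_loss(main_probs, aux_probs, label, aux_weight=0.3):
    """Grade the final output and the helper classifier together during training."""
    return cross_entropy(main_probs, label) + aux_weight * cross_entropy(aux_probs, label)


features = [[1.0, 0.0], [0.0, 2.0], [1.0, 1.0]]
attended, attn = residual_attention(features, score_weights=[0.5, 0.5])
loss = total_loss(main_probs=[0.5, 0.5], aux_probs=[0.5, 0.5], label=0)
```

Because the input is added back after reweighting, a time step with a small attention weight is damped relative to the others but never erased, which is the role the shortcut plays in the description above.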

How well the new approach performs

To test HybridHAR, the researchers used a widely adopted public dataset where volunteers wore a smartphone while performing six basic activities: three types of walking plus sitting, standing, and lying down. On this benchmark, HybridHAR reached about 99% accuracy on held‑out validation data and 96% accuracy on an unseen test set, beating several strong alternatives, including classic convolutional networks, recurrent networks, hybrid models, and reinforcement‑learning‑based approaches. It was especially strong at telling apart similar walking activities and reduced mistakes between confusing pairs such as walking upstairs and downstairs. The team also showed that each of the three ingredients—multi‑scale paths, attention, and deep supervision—measurably improved results, and that the full model achieved better performance than any variant missing one of them.
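Accuracy and confusions between pairs of activities are usually read off a confusion matrix. The sketch below shows how such a matrix is computed; the labels are invented for illustration and are not results from the paper, and the function names are hypothetical.

```python
def confusion_matrix(true_labels, predicted_labels, classes):
    """Count predictions: rows are true classes, columns are predicted classes."""
    index = {name: i for i, name in enumerate(classes)}
    matrix = [[0] * len(classes) for _ in classes]
    for truth, guess in zip(true_labels, predicted_labels):
        matrix[index[truth]][index[guess]] += 1
    return matrix


def accuracy(matrix):
    """Fraction of samples on the diagonal, i.e. predicted correctly."""
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total


# Made-up labels for three of the activities; not data from the study.
classes = ["upstairs", "downstairs", "sitting"]
y_true = ["upstairs", "upstairs", "downstairs", "downstairs", "sitting", "sitting"]
y_pred = ["upstairs", "downstairs", "downstairs", "downstairs", "sitting", "sitting"]

matrix = confusion_matrix(y_true, y_pred, classes)
score = accuracy(matrix)
```

An off-diagonal entry, such as the one in the "upstairs" row and "downstairs" column here, counts how often one activity was mistaken for another.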

Why this matters for real‑world devices

Despite its high accuracy, HybridHAR remains compact and fast: it has far fewer trainable parameters than many competing models and can process hundreds of activity windows per second while using roughly a megabyte of memory. It also generalized well to a second, more complex dataset with more activities and richer sensor setups, where it performed even better. For non‑experts, the key takeaway is that this design offers a practical blueprint for turning noisy wearable signals into trustworthy, fine‑grained descriptions of what people are doing. Such models could make future health monitors, smart homes, and safety systems both more reliable and easier to run on everyday devices.

Citation: Huo, Y., Wei, C., Xu, Z. et al. Integrating multi-scale convolution and attention mechanisms in HybridHAR for high-performance human activity recognition. Sci Rep 16, 10143 (2026). https://doi.org/10.1038/s41598-026-40904-w

Keywords: human activity recognition, wearable sensors, deep learning, attention mechanisms, health monitoring