Mixture of experts extra tree-based sEMG hand gesture recognition

Reading Muscles to Move Artificial Hands

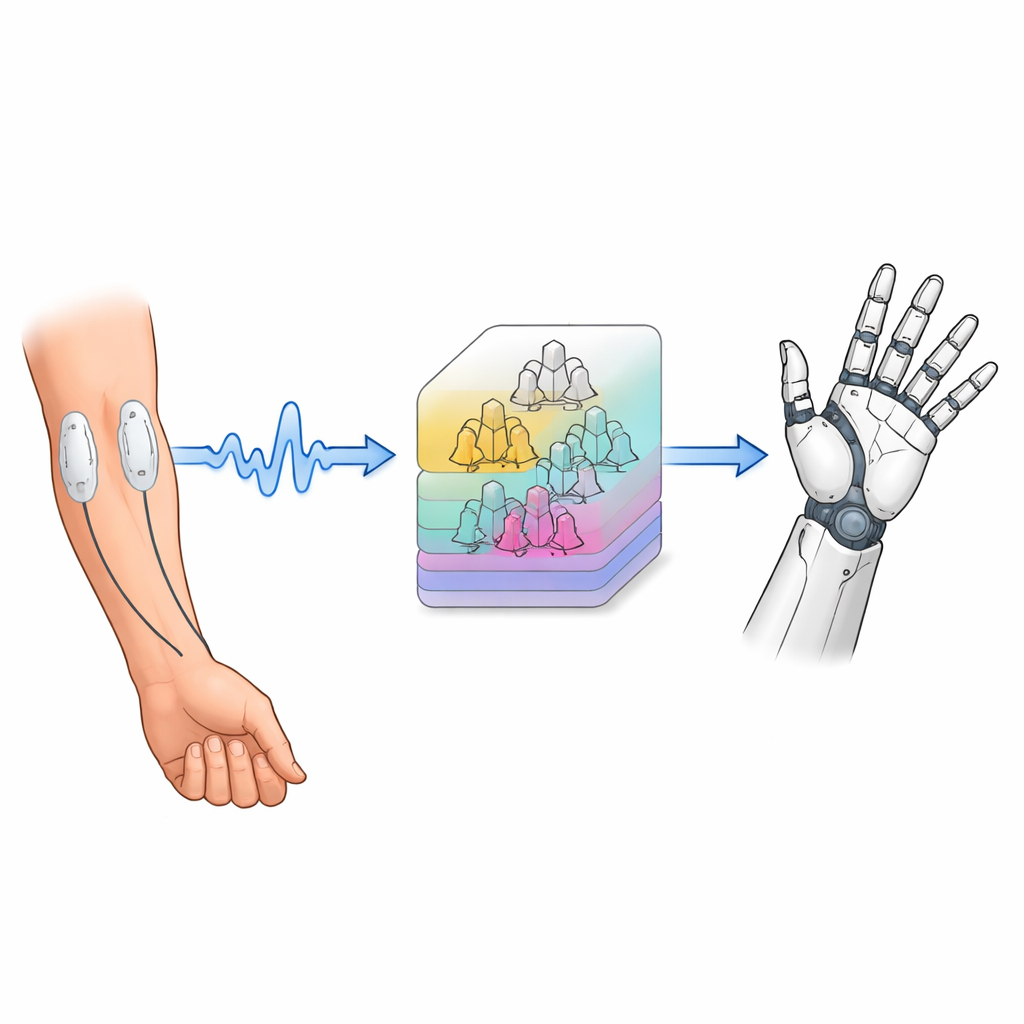

Imagine being able to control a robotic hand just by tensing your forearm muscles, as naturally as you would move your own fingers. This study explores how to turn those faint electrical signals from muscles into reliable commands for prosthetic hands and other devices, using a smarter kind of computer model that can recognize many different hand gestures in real time.

Signals Hidden Beneath the Skin

When we move our hands, our muscles produce tiny electrical signals that can be detected on the skin’s surface. The researchers use surface electromyography, or sEMG, where sticky electrodes are placed on the forearm to pick up these signals without needles or surgery. For each gesture, such as opening the hand or flexing certain fingers, the pattern of electrical activity is slightly different. The challenge is that these signals are very small and easily contaminated by noise from movement, nearby electronics, or other muscles, so they must be carefully cleaned and translated into numbers that a computer can understand.

Cleaning and Chopping Up the Muscle Signals

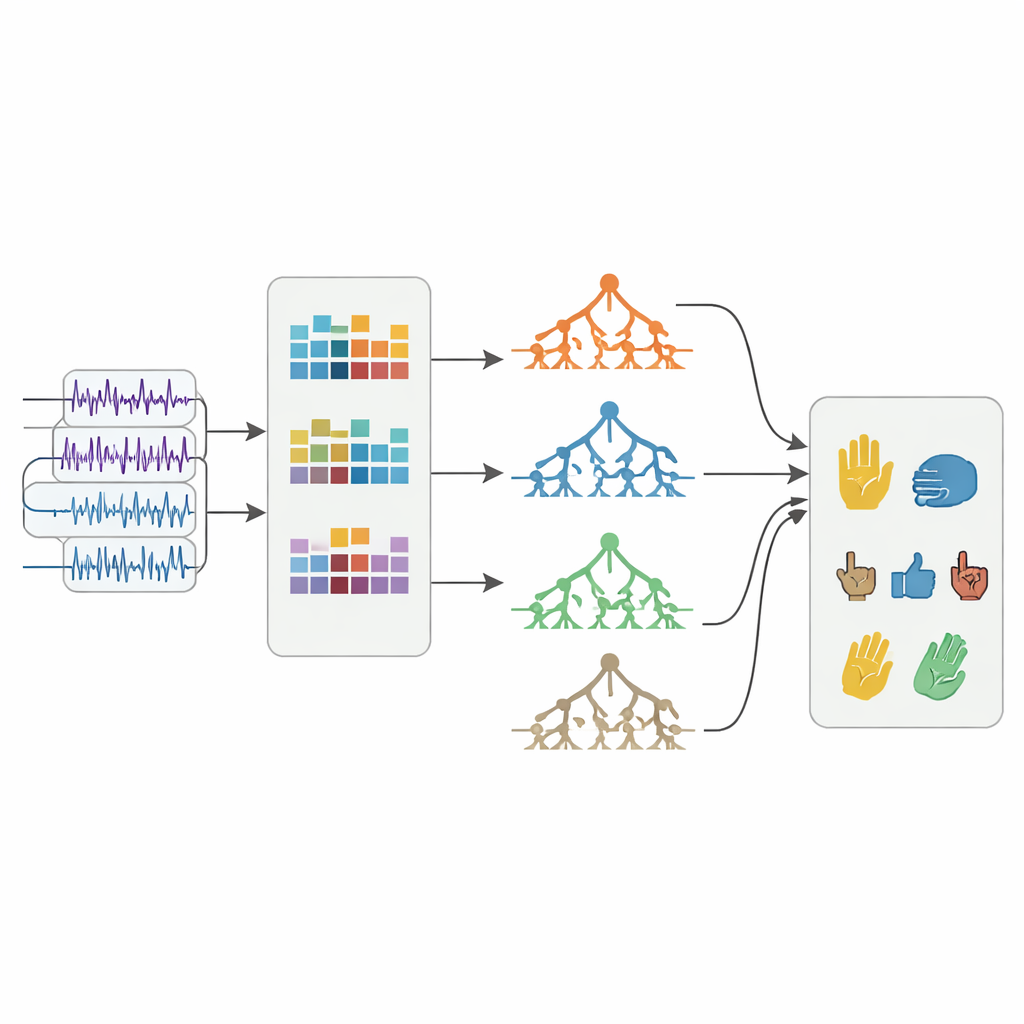

To make sense of the raw sEMG recordings, the team first filters out unwanted hum from power lines and other low‑ and high‑frequency disturbances, leaving mainly the true muscle activity. Instead of treating each long recording as one big lump, they slice the signals into short, overlapping time windows about a quarter of a second long. From every window they calculate 17 simple numerical features that describe how strong, variable, and energetic the signal is, both in time and in frequency. This produces thousands of compact snapshots of how the muscles behaved during each gesture, forming the raw material on which recognition algorithms can be trained.
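The windowing and feature step can be sketched in a few lines of Python. This is a minimal illustration, not the authors' pipeline: the 1000 Hz sampling rate, the 50% overlap, the synthetic sine wave standing in for a filtered recording, and the function names are all assumptions, and only four common time-domain features are shown rather than the study's full set of 17.

```python
import math

def sliding_windows(signal, window_len, step):
    """Yield overlapping windows of window_len samples, advancing by step samples."""
    for start in range(0, len(signal) - window_len + 1, step):
        yield signal[start:start + window_len]

def time_domain_features(window):
    """Compute four common sEMG descriptors (an illustrative subset, not the paper's 17)."""
    n = len(window)
    pairs = list(zip(window, window[1:]))
    return {
        "mean_absolute_value": sum(abs(x) for x in window) / n,
        "root_mean_square": math.sqrt(sum(x * x for x in window) / n),
        "waveform_length": sum(abs(b - a) for a, b in pairs),
        "zero_crossings": sum(1 for a, b in pairs if a * b < 0),
    }

fs = 1000                     # assumed sampling rate in Hz
window_len = int(0.25 * fs)   # quarter-second window, as described in the text
step = window_len // 2        # assumed 50% overlap between neighbouring windows

# Synthetic 2-second stand-in for a filtered sEMG channel (hypothetical data).
signal = [math.sin(2 * math.pi * 50 * t / fs) for t in range(2 * fs)]

features = [time_domain_features(w) for w in sliding_windows(signal, window_len, step)]
print(len(features), "windows;", features[0])
```

Each dictionary in `features` is one of the compact snapshots described above. In a real system there would be one such set of numbers per electrode channel, paired with the label of the gesture being performed.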

Many Small Specialists Instead of One Big Judge

Most earlier systems trained a single machine‑learning model on all gesture types at once, which can lead to biased decisions when some gestures look very similar. In this work, the authors propose a different strategy called MEET (Mixture of Experts Extra Trees). Instead of one all‑purpose judge, MEET uses several “expert” models, each trained on just a small subset of gestures, plus one “gate” model that has seen every gesture. All of these models are based on Extra Trees, a tree‑ensemble technique that builds many simple decision trees with added randomness to avoid overfitting. During use, the experts each make their own prediction, while the gate decides how much to trust each expert for the current signal. The final choice is a weighted blend of these opinions, which reduces bias and sharpens the boundaries between similar gestures.
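The weighted blend can be illustrated with a small Python sketch. It rests on stated assumptions rather than the paper's exact formula: each expert's weight is taken to be the total probability the gate assigns to that expert's gestures, the gesture names are hypothetical, and the probabilities are hard-coded stand-ins for what trained Extra Trees models would output.

```python
def blend_predictions(gate_probs, expert_probs):
    """Blend expert opinions into one probability per gesture.

    gate_probs:   dict mapping every gesture to the gate's probability.
    expert_probs: list of dicts, each covering only that expert's gesture subset.
    """
    blended = {gesture: 0.0 for gesture in gate_probs}
    for probs in expert_probs:
        # Assumed weighting rule: trust an expert as much as the gate
        # believes the true gesture lies within that expert's subset.
        weight = sum(gate_probs[gesture] for gesture in probs)
        for gesture, p in probs.items():
            blended[gesture] += weight * p
    total = sum(blended.values())
    if total == 0:
        return blended  # no evidence either way; leave scores at zero
    return {gesture: score / total for gesture, score in blended.items()}

# Hypothetical outputs for one signal window.
gate = {"open": 0.50, "fist": 0.30, "pinch": 0.15, "point": 0.05}
expert_a = {"open": 0.9, "fist": 0.1}     # specialist for open vs. fist
expert_b = {"pinch": 0.6, "point": 0.4}   # specialist for pinch vs. point

blended = blend_predictions(gate, [expert_a, expert_b])
predicted = max(blended, key=blended.get)
print(predicted, blended)
```

Here the gate places most of its trust in the first expert, so that expert's confident vote for "open" dominates the final decision, while the second expert's opinion is kept but down-weighted.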

Testing on Real People and Public Data

The researchers recorded sEMG data from four healthy volunteers, each performing six different hand actions for tens of seconds at a time. They trained MEET and ten standard machine‑learning methods on most of the data and tested them on the rest. MEET consistently recognized the correct gesture more often than the competing models, reaching accuracies between about 78% and 89% across the four people, and outperforming its own building block, the plain Extra Trees model. To check that the approach was not tailored only to their lab recordings, they also evaluated MEET on a well‑known public sEMG database covering 15 hand gestures and eight subjects. Even there, MEET achieved the best performance, improving mean accuracy by around 1.25% over the next‑best method while remaining computationally light enough for use in small embedded devices.

Why This Matters for Everyday Life

In simple terms, this study shows that a “team of specialists” can read muscle signals more reliably than a single all‑purpose model. By combining multiple focused classifiers with a gate that balances their influence, MEET reduces common problems like overfitting and bias while keeping the method efficient enough for real‑time control. For people using prosthetic hands, gaming interfaces, or wearable controllers, this could translate into smoother, more accurate responses that feel closer to moving a natural hand. The current work involves only four volunteers and a fixed set of gestures, but it lays the groundwork for more flexible and trustworthy muscle‑driven control systems that could one day support a wider variety of users and movements.

Citation: Gehlot, N., Jena, A., Kumar, R. et al. Mixture of experts extra tree-based sEMG hand gesture recognition. Sci Rep 16, 11787 (2026). https://doi.org/10.1038/s41598-026-40305-z

Keywords: hand gesture recognition, surface electromyography, prosthetic control, machine learning, mixture of experts