Clear Sky Science

MSSA: memory-driven and simplified scaled attention for enhanced image captioning

Teaching Computers to Describe Pictures

Imagine scrolling through your photo library and having every image automatically labeled with a vivid, accurate sentence: who is there, what they’re doing, and how everything fits together. That is the promise of image captioning, a technology that turns pictures into words. This paper introduces a new system, called MSSA, that helps computers generate richer, more precise captions by looking at images in a more detailed and memory-aware way, while still keeping the underlying machinery efficient.

Seeing More Than Just Objects

Most earlier captioning systems learned to describe images by first recognizing broad visual patterns and then feeding them into a language model that strings words together. These systems work well for simple scenes, but they often miss subtle details: where things are, how they relate to each other, and what materials or textures are present. The authors argue that a single, high-level snapshot of an image is not enough. Their MSSA framework therefore starts by extracting a richer set of visual cues from each important region of an image. It considers geometry (where an object is and how big it is), color distributions, texture patterns, edges, and frequency-based signals that capture repeating structures. By combining all these cues, the system builds a more nuanced portrait of each object, which helps distinguish, for example, a tennis court from a baseball field or a slice of pizza from a piece of cake.
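To make the idea of combining several cues concrete, here is a minimal Python sketch that builds one feature vector for a region of a grayscale image. It is an illustration only, not the paper's feature extractor: the function name `region_descriptor`, the choice of statistics (an intensity histogram standing in for color, standard deviation for texture, pixel differences for edges, a dominant Fourier frequency for repeating structure), and the toy striped image are all assumptions made for this example.

```python
import cmath
import math

def region_descriptor(image, box, bins=4):
    """Build a toy feature vector for one non-empty region of a grayscale image.

    image: list of rows, each a list of intensities in [0, 255].
    box:   (x0, y0, x1, y1) in pixels, with x1 and y1 exclusive.
    """
    height, width = len(image), len(image[0])
    x0, y0, x1, y1 = box
    patch = [row[x0:x1] for row in image[y0:y1]]
    pixels = [p for row in patch for p in row]
    n = len(pixels)

    # Geometry: normalized center, width, height, and relative area of the box.
    bw, bh = (x1 - x0) / width, (y1 - y0) / height
    geometry = [(x0 + x1) / (2 * width), (y0 + y1) / (2 * height), bw, bh, bw * bh]

    # Color cue (intensity here): a normalized histogram.
    hist = [0.0] * bins
    for p in pixels:
        hist[min(int(p * bins / 256), bins - 1)] += 1.0 / n

    # Texture cue: standard deviation of intensities, scaled by 255.
    mean = sum(pixels) / n
    texture = [math.sqrt(sum((p - mean) ** 2 for p in pixels) / n) / 255]

    # Edge cue: mean absolute horizontal and vertical differences.
    dx = [abs(r[i + 1] - r[i]) for r in patch for i in range(len(r) - 1)]
    dy = [abs(patch[j + 1][i] - patch[j][i])
          for j in range(len(patch) - 1) for i in range(len(patch[0]))]
    edges = [sum(dx) / (255 * max(len(dx), 1)), sum(dy) / (255 * max(len(dy), 1))]

    # Frequency cue: strongest non-constant frequency of the column-mean
    # profile, normalized so that 1.0 is the fastest possible repetition.
    profile = [sum(col) / len(patch) for col in zip(*patch)]
    half = len(profile) // 2
    energy = [abs(sum(v * cmath.exp(-2j * math.pi * k * i / len(profile))
                      for i, v in enumerate(profile))) ** 2
              for k in range(1, half + 1)]
    frequency = [(energy.index(max(energy)) + 1) / half if half else 0.0]

    return geometry + hist + texture + edges + frequency

# Toy input: an 8x8 image of alternating dark and bright vertical stripes.
stripes = [[0, 255] * 4 for _ in range(8)]
descriptor = region_descriptor(stripes, box=(0, 0, 8, 8))
print(len(descriptor), descriptor)
```

For the striped image, the horizontal edge and frequency entries are high while the vertical edge entry is zero, which is the kind of signal that could separate a regularly patterned surface from a smooth one even when both have the same average brightness.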

Letting the System Refocus as It Writes

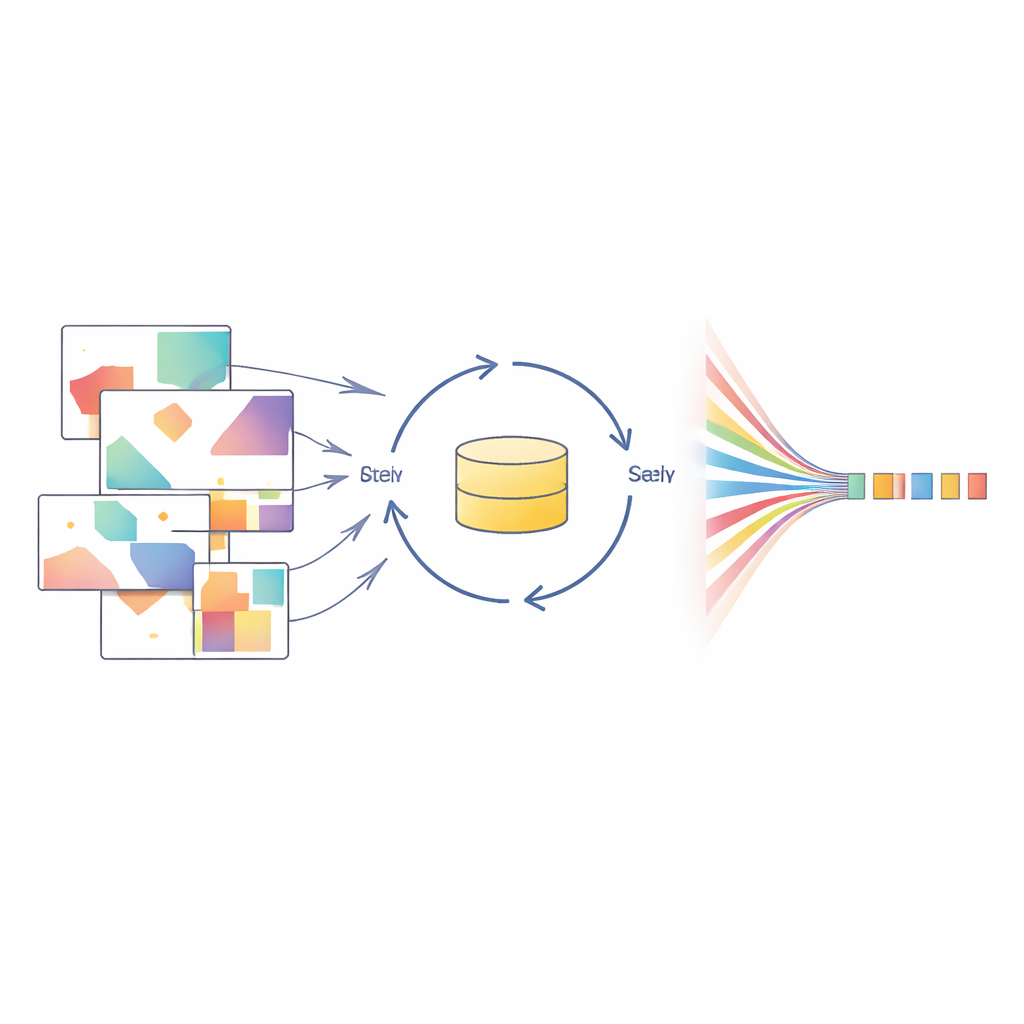

Another challenge in captioning is that descriptions are generated one word at a time. If the system pays attention to the wrong part of the image early on, that mistake can snowball as the sentence grows. To address this, MSSA introduces a memory-driven attention module. Instead of taking a single, one-shot pass over the visual regions, this module uses a memory loop that repeatedly revisits the same set of regions. At each step, it refines which parts of the image are most relevant, guided by what has already been “said” in the caption so far. This iterative process helps the model correct early misjudgments, balance competing objects in busy scenes, and keep the evolving sentence anchored to the right visual evidence.
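The refocusing loop described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the module from the paper: the function name `memory_attention`, the dot-product scoring, and in particular the update rule (the memory becomes the caption state plus the context just attended to) are hypothetical choices made to show the iterative pattern.

```python
import math

def softmax(scores):
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def memory_attention(regions, state, steps=3):
    """Revisit the same regions `steps` times (steps >= 1), refining the focus.

    regions: list of region feature vectors, all the same length as `state`.
    state:   vector summarizing the caption generated so far.
    Returns the final context vector and the attention weights of every pass.
    """
    dim = len(state)
    memory = list(state)  # the first pass is guided by the caption state alone
    history = []
    for _ in range(steps):
        scores = [sum(m * r for m, r in zip(memory, region)) / math.sqrt(dim)
                  for region in regions]
        weights = softmax(scores)
        context = [sum(w * region[j] for w, region in zip(weights, regions))
                   for j in range(dim)]
        # Assumed update rule: caption state plus what was just attended to.
        memory = [s + c for s, c in zip(state, context)]
        history.append(weights)
    return context, history

# Two toy regions; the caption state points toward the first one.
regions = [[1.0, 0.0], [0.0, 1.0]]
context, history = memory_attention(regions, state=[1.0, 0.0], steps=3)
for step, weights in enumerate(history, start=1):
    print(step, [round(w, 3) for w in weights])
```

In this toy setup the weight on the first region grows with each pass, showing how repeated passes can sharpen an initially soft focus. A real model would learn the update from data instead of using a fixed sum.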

Simplifying How Focus Is Computed

Modern attention mechanisms, which decide where the model should focus, can themselves become heavy and complex. Many systems add extra “gates” that reweight dozens or hundreds of internal channels. The authors show that, in their setting, this extra complexity brings little benefit. MSSA instead uses a Simplified Scaled Attention module that keeps the core idea of attention (matching the current textual state to image regions) but removes some of the expensive add-ons. It uses streamlined mathematical operations to relate the visual regions to the word currently being generated, emphasizing spatial precision over intricate internal tuning. Because attention is invoked for every new word, this simplification reduces computation and latency without sacrificing caption quality.
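As a rough illustration of what is kept and what is dropped, the sketch below places a plain scaled dot-product attention step next to a channel-wise gate of the kind the article describes as an add-on. The gate shown here (one learned linear layer followed by a sigmoid) and the names `scaled_attention`, `channel_gate`, and `extra_gate_cost` are assumptions for this example. The article does not specify the exact form of either component.

```python
import math

def scaled_attention(query, keys, values):
    """Core attention: match a query to regions, then average their values."""
    dim = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
              for key in keys]
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    context = [sum(w * value[j] for w, value in zip(weights, values))
               for j in range(len(values[0]))]
    return context, weights

def channel_gate(context, gate_weights, gate_bias):
    """Assumed add-on: rescale every channel by a learned sigmoid gate."""
    gates = [1.0 / (1.0 + math.exp(-(sum(w * c for w, c in zip(row, context)) + b)))
             for row, b in zip(gate_weights, gate_bias)]
    return [g * c for g, c in zip(gates, context)]

def extra_gate_cost(channels):
    """Parameters (and roughly multiply-adds per call) added by such a gate."""
    return channels * channels + channels

# The simplified path calls scaled_attention only and skips channel_gate.
context, weights = scaled_attention(
    query=[1.0, 0.0],
    keys=[[1.0, 0.0], [0.0, 1.0]],
    values=[[10.0, 0.0], [0.0, 10.0]],
)
print([round(w, 3) for w in weights], extra_gate_cost(512))
```

Under these assumptions, a gate over 512 channels would add 512 × 512 + 512 = 262,656 parameters, and about as many multiply-adds every time attention is called. Since attention runs once per generated word, skipping that step saves work on every word of every caption.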

Testing Against Other Captioning Systems

To see whether these design choices pay off, the researchers evaluate MSSA on the widely used MSCOCO dataset, which pairs everyday photos with several human-written captions. They compare MSSA to a range of strong captioning models, including both older systems and recent attention- and transformer-based designs. On standard quality measures that assess grammar, similarity to human descriptions, and how well key relationships are captured, MSSA matches or outperforms most state-of-the-art baselines. Importantly, it does so with a simplified attention path that slightly reduces the number of parameters, the computation per caption, and the time needed to generate each sentence. Qualitative examples show that MSSA often notices extra contextual details (such as a bottle of water on a table, the direction of smoke from a plane, or which person in a crowd matters most for the description) that rival systems either miss or misinterpret.

What This Means for Everyday Images

For non-specialists, the take-home message is that better captions do not just come from larger models; they come from smarter use of visual detail and memory. By enriching what the model “sees” in each image region and allowing it to repeatedly refocus while writing, MSSA can produce descriptions that feel more human: they mention key objects, capture their relationships, and add small but telling details. At the same time, its simplified attention design avoids unnecessary complexity, offering a practical balance between accuracy and efficiency. This makes MSSA a promising building block for applications ranging from accessible photo libraries for visually impaired users to more intuitive search and organization of the vast image collections that shape our digital lives.

Citation: Hossain, M.A., Ye, Z., Hossen, M.B. et al. MSSA: memory-driven and simplified scaled attention for enhanced image captioning. Sci Rep 16, 11203 (2026). https://doi.org/10.1038/s41598-026-40164-8

Keywords: image captioning, attention mechanisms, multimodal learning, computer vision, deep learning