Clear Sky Science · en

Hybrid deep learning framework for image restoration in FSO systems affected by log-normal fading

Why this matters for everyday images

As more of our lives move online, we expect photos and videos to arrive instantly and clearly—whether they come from a medical scanner, a security camera, or a satellite. But when these images are sent through the air using beams of light, the atmosphere can scramble them, turning crisp scenes into grainy, washed-out pictures. This study explores how a blend of modern deep learning and classic image sharpening can rescue those damaged images, restoring fine details even when the transmission channel is very hostile.

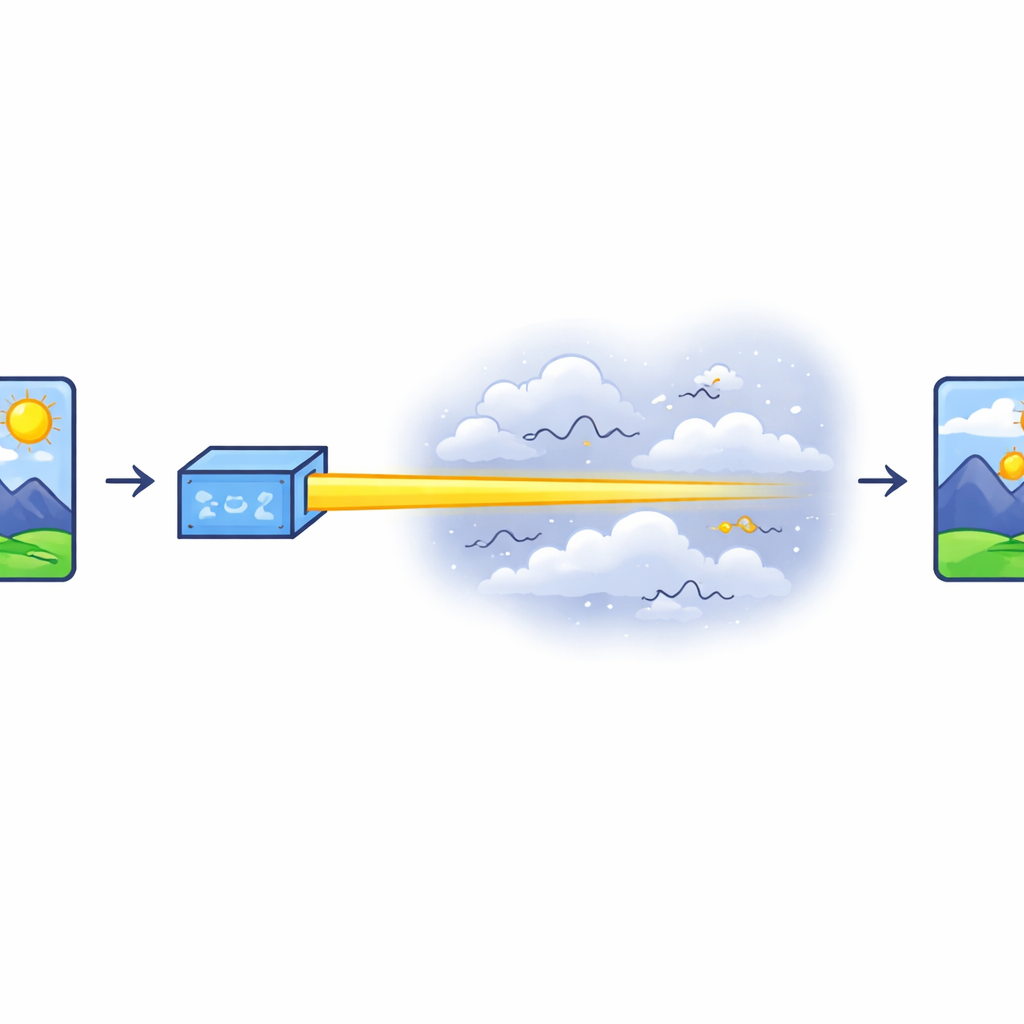

Sending pictures through the air with light

The work focuses on Free Space Optical (FSO) communication, a technology that sends data using tightly focused light beams instead of radio waves. FSO is attractive because it can carry huge amounts of information—ideal for high-resolution images and video—without needing a radio license. However, the air between transmitter and receiver is rarely calm. Temperature shifts, wind, and turbulence cause the light beam to flicker and fluctuate in strength, a phenomenon the authors describe statistically as log-normal fading. When combined with electronic noise and dense data packing schemes used to squeeze more information into the beam, these distortions can severely degrade image quality.
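To make the idea of log-normal fading concrete, here is a minimal sketch of how such a channel could be simulated on pixel values. It is an illustration under stated assumptions, not the authors' simulator: the per-pixel fading, the unit-mean normalization, the `sigma` and `snr_db` values, and the function names `lognormal_gain` and `corrupt_pixels` are all hypothetical, and the modulation step is omitted entirely.

```python
import math
import random


def lognormal_gain(sigma, rng):
    """Draw one fading gain h = exp(Z) with Z ~ N(-sigma^2/2, sigma^2).

    The mean of Z is chosen so that the average gain E[h] equals 1
    (an assumed normalization, common in fading models).
    """
    return rng.lognormvariate(-0.5 * sigma ** 2, sigma)


def corrupt_pixels(pixels, sigma, snr_db, rng):
    """Apply per-pixel log-normal fading plus additive Gaussian noise.

    pixels: flat list of intensities in [0, 1].
    Assumption: every pixel sees an independent fading gain.
    """
    signal_power = sum(p * p for p in pixels) / len(pixels)
    noise_std = math.sqrt(signal_power / 10 ** (snr_db / 10))
    out = []
    for p in pixels:
        faded = lognormal_gain(sigma, rng) * p        # multiplicative fading
        noisy = faded + rng.gauss(0.0, noise_std)     # additive receiver noise
        out.append(min(1.0, max(0.0, noisy)))         # clip to the valid range
    return out


rng = random.Random(0)

# Sanity check: the average gain should sit close to 1 by construction.
gains = [lognormal_gain(0.3, rng) for _ in range(100_000)]
mean_gain = sum(gains) / len(gains)

# Toy 4x4 "image", flattened into a list of 16 intensities.
clean = [0.2, 0.5, 0.8, 1.0] * 4
received = corrupt_pixels(clean, sigma=0.3, snr_db=10, rng=rng)
```

A larger `sigma` stands in for stronger turbulence and a lower `snr_db` for a noisier receiver. Real image work would normally use an array library, but the standard library keeps this sketch self-contained.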

Limits of traditional clean-up tricks

Engineers have long tried to fight these distortions using techniques such as multiple-input multiple-output (MIMO) setups with several transmitters and receivers, diversity schemes that send similar information along different paths, adaptive optics that reshape the beam in real time, and classic noise filters such as Gaussian and Wiener filters. While helpful, these methods struggle when the environment changes quickly or behaves in complex, nonlinear ways. They may smooth out some noise but often blur important edges and textures, and they are not flexible enough to fully undo the random, rapidly varying fading that plagues real-world FSO links.
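The trade-off between noise removal and edge blurring can be seen in a tiny one-dimensional example. The sketch below applies a three-tap Gaussian-like kernel to a sharp step; the kernel weights and the helper names `smooth` and `max_step` are illustrative choices, not taken from the paper.

```python
def smooth(signal, kernel=(0.25, 0.5, 0.25)):
    """Convolve a 1-D signal with a small symmetric kernel.

    Samples beyond either end are replaced by the nearest edge sample.
    """
    half = len(kernel) // 2
    out = []
    for i in range(len(signal)):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(len(signal) - 1, max(0, i + k - half))
            acc += w * signal[j]
        out.append(acc)
    return out


def max_step(signal):
    """Largest jump between neighbouring samples, a crude edge-sharpness score."""
    return max(abs(b - a) for a, b in zip(signal, signal[1:]))


edge = [0.0] * 4 + [1.0] * 4      # an ideal sharp edge
blurred = smooth(edge)            # [0.0, 0.0, 0.0, 0.25, 0.75, 1.0, 1.0, 1.0]

spike = [0.0, 0.0, 1.0, 0.0, 0.0]  # an isolated noise spike
damped = smooth(spike)             # [0.0, 0.25, 0.5, 0.25, 0.0]
```

The same averaging that halves the noise spike also halves the steepest jump at the edge, from 1.0 to 0.5. A fixed filter cannot tell the two apart, which is the limitation a learned approach tries to avoid.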

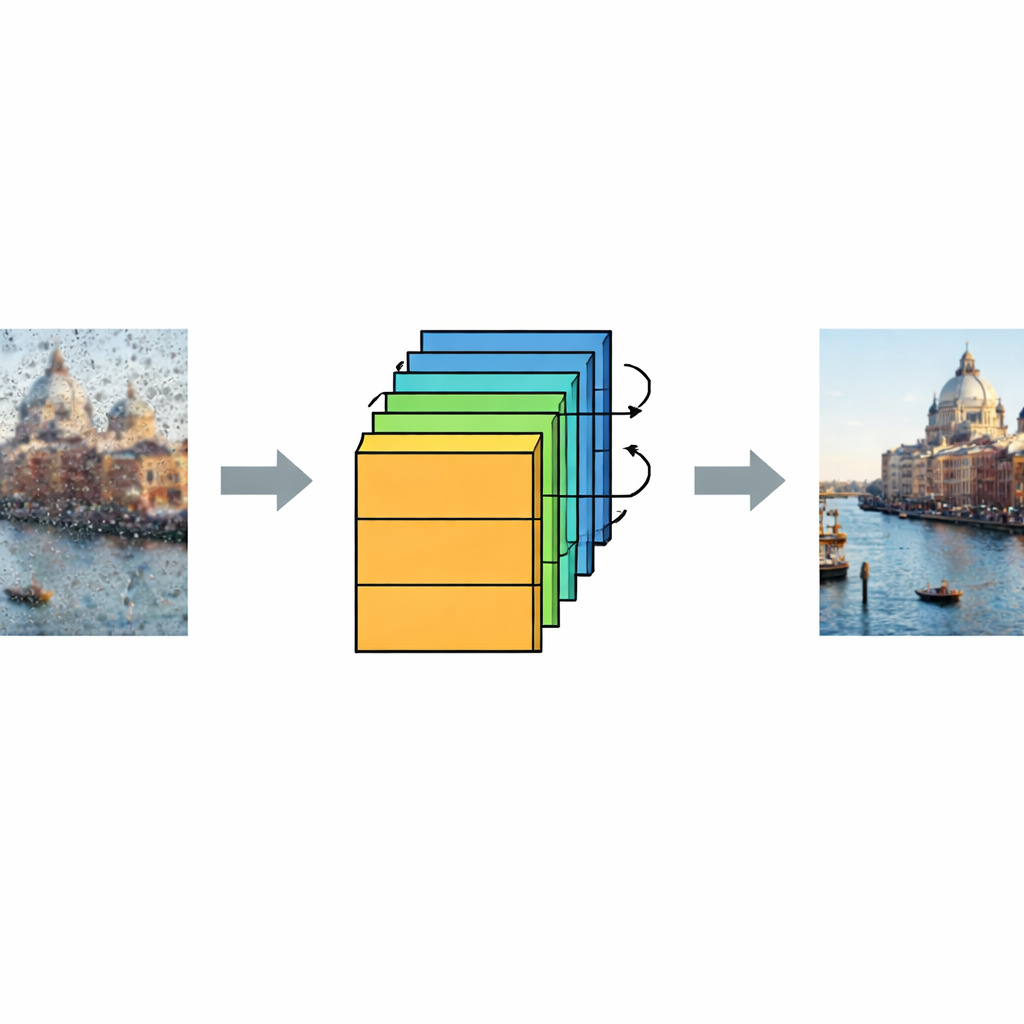

A learning machine that understands distortion

To overcome these limits, the authors design a deep convolutional neural network (DCNN) that learns, from examples, how fading and noise typically damage images. They create a large synthetic dataset of 30,000 pictures using AI image generators, then mathematically simulate how an FSO link with log-normal fading and added noise would corrupt them. Half of the images are kept clean, and half are faded and noisy, covering a wide range of signal-to-noise conditions. During training, the DCNN sees many pairs of damaged and original images and gradually learns to estimate and subtract the noise pattern, using a stack of 22 layers and repeated residual blocks that specialize in teasing apart true structure from distortion.
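The "estimate and subtract" idea can be written compactly. The formulation below is a sketch in the style of standard residual denoising networks; the symbols and the squared-error loss are assumptions for illustration, since this summary does not state the exact channel model or training objective used in the paper.

```latex
% x : clean image,  y : received image,  h : log-normal fading gain,
% n : additive noise,  R_\theta : the network with trainable weights \theta
\begin{align}
  y &= h \odot x + n
      && \text{(assumed channel model)} \\
  v &= y - x
      && \text{(total distortion: fading plus noise)} \\
  \hat{x} &= y - R_\theta(y)
      && \text{(restoration by subtracting the predicted distortion)} \\
  \mathcal{L}(\theta) &= \frac{1}{2N} \sum_{i=1}^{N}
      \bigl\lVert R_\theta(y_i) - (y_i - x_i) \bigr\rVert_2^2
      && \text{(assumed training loss over } N \text{ image pairs)}
\end{align}
```

Under this reading, the network never has to reproduce the whole image. It only has to predict what the channel added or removed, which is often an easier target to learn.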

Sharpening the details and checking reliability

After the DCNN removes most of the noise and fading effects, the authors add a dedicated sharpening step that enhances edges and fine structures that may have been softened along the way. This two-stage pipeline—denoising followed by sharpening—proves crucial for producing images that not only score well on numerical measures but also look clear to human observers. The team evaluates quality using Peak Signal-to-Noise Ratio (PSNR) and Root Mean Square Error (RMSE), standard metrics that compare the restored images to their original versions. Under harsh simulated conditions, where the faded images can drop to around 7–9 dB PSNR, the restored images climb to around 67–68 dB, firmly in the “excellent” range and far beyond what traditional filters achieve.
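The two metrics are closely linked, and a short calculation shows what the reported decibel figures mean in terms of pixel error. The sketch below assumes 8-bit images with a peak value of 255, which this summary does not state explicitly; the helper names `rmse`, `psnr`, and `rmse_for_psnr` are illustrative.

```python
import math


def rmse(reference, restored):
    """Root mean square error between two equal-length pixel lists."""
    if len(reference) != len(restored):
        raise ValueError("images must have the same number of pixels")
    squared = sum((a - b) ** 2 for a, b in zip(reference, restored))
    return math.sqrt(squared / len(reference))


def psnr(reference, restored, peak=255.0):
    """Peak signal-to-noise ratio in dB; infinite for identical images."""
    err = rmse(reference, restored)
    if err == 0:
        return math.inf
    return 20 * math.log10(peak / err)


def rmse_for_psnr(psnr_db, peak=255.0):
    """Invert the PSNR formula: the RMSE that corresponds to a given PSNR."""
    return peak / 10 ** (psnr_db / 20)


reference = [100, 100, 100, 100]
restored = [101, 99, 101, 99]          # every pixel off by one grey level
example_psnr = psnr(reference, restored)

faded_rmse = rmse_for_psnr(8.0)        # roughly 100 grey levels
restored_rmse = rmse_for_psnr(67.5)    # roughly a tenth of a grey level
```

On that assumed 8-bit scale, a PSNR of 7–9 dB corresponds to an average error of around 100 grey levels out of 255, while 67–68 dB corresponds to an average error of about a tenth of one grey level.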

Testing on classification and real-world-like cases

To ensure that the restored images are not just pretty but also useful for further analysis, the authors train deep residual networks (ResNet-18, ResNet-50, and ResNet-101) to distinguish between faded and clean images. These classifiers reach over 99.8% accuracy, confirming that the dataset is well structured and that the restoration process preserves meaningful visual patterns. The framework is also tested on images not seen during training, including a real human portrait sent through a simulated FSO link. Even in these challenging cases, the model sharply improves clarity, demonstrating that it has learned general principles of restoration rather than simply memorizing training examples.

What this means for future imaging systems

For a non-specialist, the key takeaway is that the authors have built a smart “image rescue” system that can take severely damaged pictures sent through turbulent air and make them look almost as good as the originals. By combining a learned deep network with a final sharpening step, the method turns noisy, faded faces and scenes back into clear, detailed images. This approach could strengthen future applications ranging from telemedicine and remote diagnostics to surveillance and Earth observation, where decisions depend on seeing small details reliably despite rough transmission conditions.

Citation: Soliman, F.A., Ragab, D.A., El-Shafai, W. et al. Hybrid deep learning framework for image restoration in FSO systems affected by log-normal fading. Sci Rep 16, 14653 (2026). https://doi.org/10.1038/s41598-026-39768-x

Keywords: free space optical communication, image denoising, deep learning, atmospheric turbulence, image restoration