Clear Sky Science · en

LLM-enabled adaptive scheduling in IoT sensing for optimized network performance

Why smarter sensors matter

Billions of tiny sensors now watch over our homes, cities, farms, factories, and even our bodies. They quietly send temperature readings, motion alerts, heart rates, and more across wireless networks. But this nonstop stream of information can easily overwhelm the system: links get congested, batteries drain quickly, and important messages may be delayed or lost. This paper explores how a new kind of artificial intelligence, based on large language models (LLMs), can act as a smart traffic controller for these sensors, deciding what should be sent, when, and at what power level so the whole network runs more smoothly and efficiently.

The problem with always-on sensing

Today’s Internet of Things (IoT) often works in a very simple way: sensors collect data and send it out continuously, regardless of whether the information is new, urgent, or even useful. When thousands of such devices share the same network, repeated and redundant messages clog the airwaves. This leads to delays, dropped packets, and wasted battery power, especially in remote or battery-powered devices. Traditional control methods, which rely on fixed rules or basic statistics, struggle when the network’s shape, traffic load, and radio conditions keep changing over time. As a result, important events can be delayed, while unimportant data consumes much of the limited energy and bandwidth.

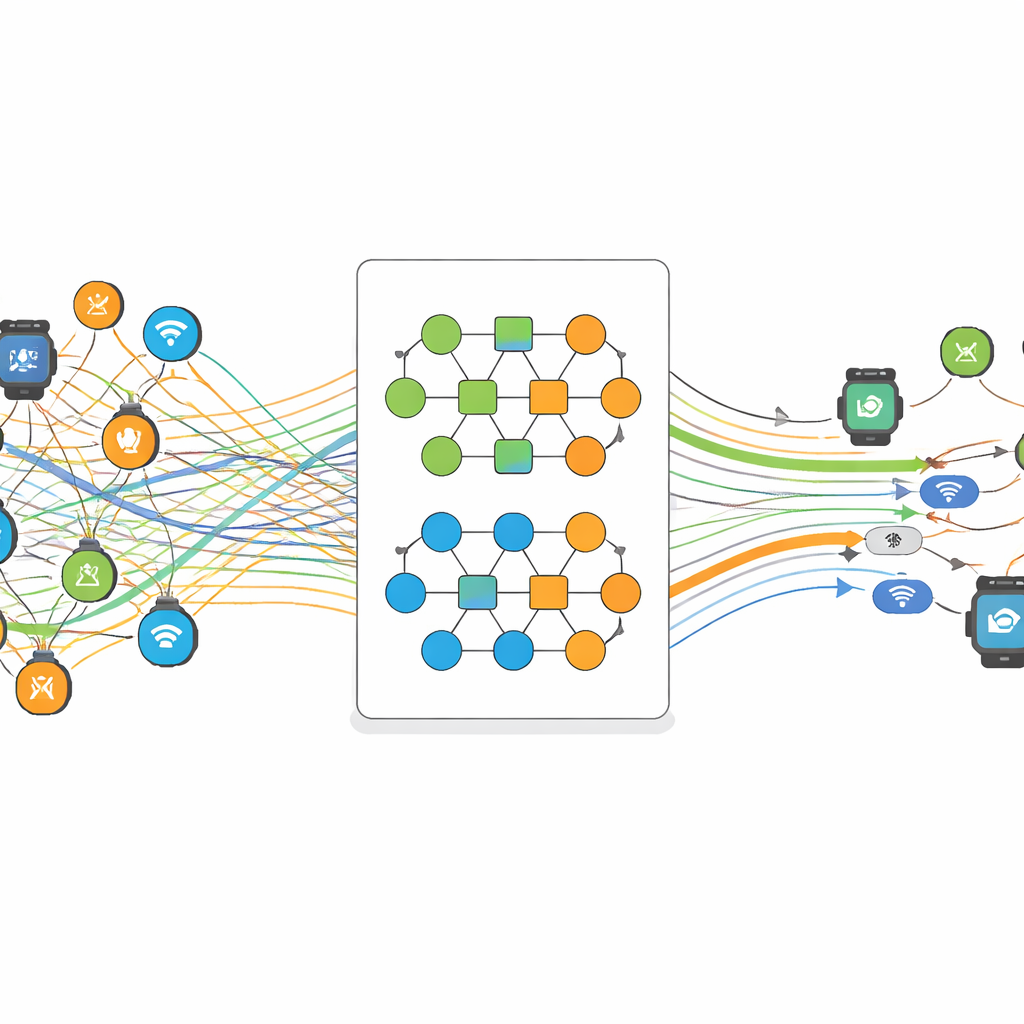

A language model as a sensor scheduler

The authors propose a scheme called LLM-AS (LLM-Enabled Adaptive Scheduling), which describes the state of the IoT network in structured, near-plain-language text that a language model can reason about. Each sensor’s readings, battery level, signal quality, and recent history are turned into structured descriptions and given to an LLM (GPT-J-6B) running on a nearby edge computer. Instead of the sensors making blind, fixed decisions, the LLM analyzes the context and outputs a probability that a given sensor should transmit now rather than wait. A separate scheduling algorithm then turns this recommendation into an actual yes-or-no decision while obeying basic rules: important data must eventually be sent, signal strength must be good enough for a reliable transmission, and batteries must not be drained to zero.
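To make the two-step idea concrete, here is a minimal Python sketch of how a model's probability might be turned into a yes-or-no decision under the three rules just listed. It is not the authors' algorithm: the field names, the thresholds, the deadline of ten slots, and the order in which the rules are checked are all assumptions chosen for illustration, and the LLM call itself is replaced by a plain number passed in by the caller.

```python
from dataclasses import dataclass


@dataclass
class SensorState:
    """Snapshot of one sensor; every field name here is a hypothetical choice."""
    battery_frac: float  # remaining battery, 0.0 to 1.0
    snr_db: float        # current link quality in dB
    important: bool      # whether the pending reading is flagged as important
    waited_slots: int    # scheduling slots since the reading was queued


def describe(state: SensorState) -> str:
    """Render the state as a structured text description for the model."""
    return (
        f"battery={state.battery_frac:.0%}; snr={state.snr_db:.1f} dB; "
        f"important={'yes' if state.important else 'no'}; "
        f"waited={state.waited_slots} slots"
    )


def should_transmit(
    state: SensorState,
    p_transmit: float,          # probability from the LLM (stubbed by the caller)
    threshold: float = 0.5,     # assumed decision threshold
    min_snr_db: float = 5.0,    # assumed minimum SNR for a reliable link
    min_battery: float = 0.05,  # assumed battery reserve that is never spent
    max_wait: int = 10,         # assumed deadline for important data
) -> bool:
    # Hard constraints first: never send on a poor link or below the reserve.
    # An important reading blocked here is deferred until conditions recover.
    if state.snr_db < min_snr_db or state.battery_frac <= min_battery:
        return False
    # Important data must eventually go out, whatever the model recommends.
    if state.important and state.waited_slots >= max_wait:
        return True
    # Otherwise follow the model's recommendation.
    return p_transmit >= threshold
```

In this sketch, a sensor with a healthy battery and link sends when the model's probability is at or above the threshold, while an important reading that has waited ten slots is sent even if the model scores it low. Checking the hard limits before the deadline rule is a design choice of the sketch; the article does not say how the original scheme resolves conflicts between the rules.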

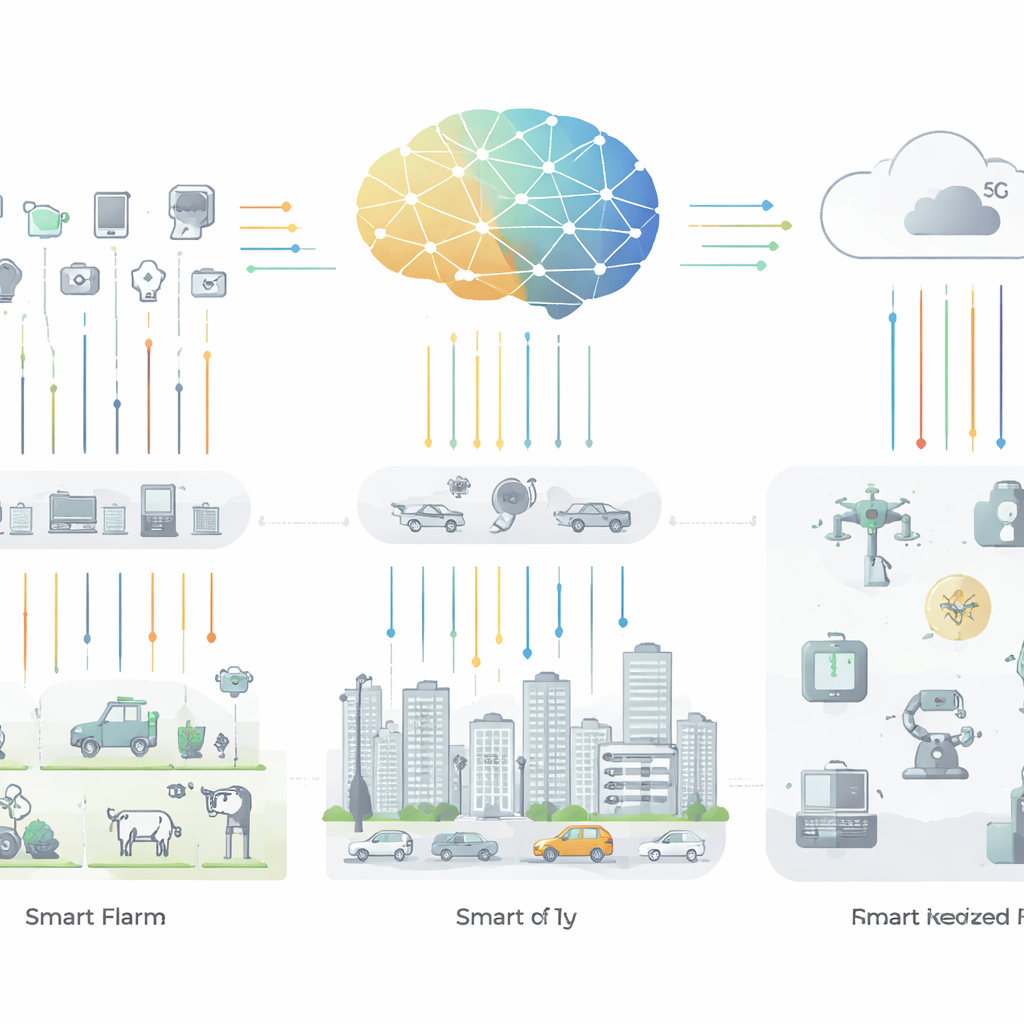

Layers of smart control in the network

LLM-AS is organized into four layers. At the bottom, a “cognitive sensor” layer gathers data such as temperature, motion, and location. Above that, gateway devices group and forward these readings to edge nodes. The edge nodes host the LLM-based logic that cleans and interprets the incoming data, learning which situations are normal and which hint at problems like congestion, failures, or unusual behavior. At the top, a 5G edge layer runs optimization routines that balance three goals at once: reducing energy use, cutting down delay, and limiting data loss. By continuously adjusting when sensors transmit, and with what power, the system reduces repetitive messages while still capturing the key events that matter for applications like smart homes and remote health monitoring.
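One common way to balance several goals at once is a weighted sum, and the Python sketch below uses that technique to choose among a few transmission options. The article does not state which optimization method the top layer actually uses, so the weighted sum, the `Option` type, the weights, and the normalized scores are all hypothetical stand-ins rather than values from the paper.

```python
from typing import NamedTuple


class Option(NamedTuple):
    """One candidate action; scores are normalized to 0..1, lower is better."""
    label: str
    energy: float
    delay: float
    loss: float


def cost(option: Option, w_energy: float = 1 / 3,
         w_delay: float = 1 / 3, w_loss: float = 1 / 3) -> float:
    """Weighted sum of the three goals named in the text."""
    return (w_energy * option.energy
            + w_delay * option.delay
            + w_loss * option.loss)


def best_option(options: list[Option], **weights: float) -> Option:
    """Return the option with the lowest weighted cost."""
    return min(options, key=lambda o: cost(o, **weights))


# Made-up scores for illustration only.
OPTIONS = [
    Option("send now, high power", energy=0.9, delay=0.1, loss=0.1),
    Option("send now, low power", energy=0.4, delay=0.1, loss=0.5),
    Option("wait one slot, low power", energy=0.3, delay=0.6, loss=0.3),
]
```

With equal weights, `best_option(OPTIONS)` picks "send now, low power". Shifting the weights toward loss (for example `w_energy=0.1, w_delay=0.2, w_loss=0.7`) favors the high-power option, and shifting them toward energy (`w_energy=0.8, w_delay=0.1, w_loss=0.1`) favors waiting, which shows how the trade-off moves with the priorities of the application.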

How the system was tested

To test LLM-AS, the researchers used a well-known collection of real sensor data called the CASAS dataset, which includes readings from smart homes and similar environments. They built simulations in which context awareness (the model’s sensitivity to changes in conditions) could be tuned from very low to very high. As the model became more context-aware, it learned to avoid sending unnecessary packets, which lowered the average energy used for transmissions and shortened delays. The study reports that median transmit power improved by nearly 60 percent and median delay dropped by up to 60 percent compared with a baseline that did not use the LLM-based optimization. The system also scored well at distinguishing healthy from problematic network conditions, with high precision and recall and a low error rate, suggesting that its decisions are consistent and reliable across many scenarios.
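For readers unfamiliar with these measures, the short Python sketch below shows how a percentage reduction in a median and a precision and recall pair are typically computed. The function names are hypothetical and the numbers in the usage note are toy values, not figures from the study.

```python
from statistics import median


def percent_reduction(baseline: list[float], scheduled: list[float]) -> float:
    """Percentage drop in the median from the baseline run to the scheduled run."""
    base = median(baseline)
    return 100.0 * (base - median(scheduled)) / base


def precision_recall(tp: int, fp: int, fn: int) -> tuple[float, float]:
    """Precision and recall from true positives, false positives, false negatives.

    Assumes at least one predicted positive and one actual positive,
    so neither denominator is zero.
    """
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return precision, recall
```

As a toy example, baseline delays of `[10, 12, 14, 16, 18]` have a median of 14 and scheduled delays of `[4, 5, 7, 8, 9]` have a median of 7, so `percent_reduction` returns 50.0. Likewise, if a detector flags 10 network conditions as problematic and 8 of them truly are, while missing 2 others, `precision_recall(8, 2, 2)` returns `(0.8, 0.8)`.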

What this means for everyday connected life

In plain terms, this work shows how an advanced language model can act like a clever dispatcher for vast fleets of sensors, deciding when each one should speak up and when it is safe to stay quiet. By cutting down on redundant chatter while keeping important signals timely and accurate, LLM-AS helps preserve battery life, reduce congestion, and make better use of wireless capacity. If refined and validated on more datasets and in real-world deployments, such approaches could make future smart homes, hospitals, farms, and cities more responsive and dependable, without requiring constant human tuning of network settings.

Citation: Khan, M.N., Lee, S., Lee, S.S. et al. LLM-enabled adaptive scheduling in IoT sensing for optimized network performance. Sci Rep 16, 13007 (2026). https://doi.org/10.1038/s41598-025-32660-0

Keywords: Internet of Things, sensor scheduling, energy-efficient networks, edge AI, large language models