Clear Sky Science

A drone imagery dataset for semantic segmentation of urban garden ground covers in biodiversity studies

Why city gardens seen from above matter

Walk through a community garden and you’ll see a patchwork of grass paths, vegetable beds, mulch, stones and bare soil. All of these “ground covers” quietly shape how well gardens support bees, natural pest control and healthy soil. Yet from traditional maps and satellite images, these fine details all blur together. This study shows how drones and modern image-analysis techniques can zoom in on urban gardens in remarkable detail, providing a new open dataset that helps researchers and planners understand how the ground under our feet supports city biodiversity.

Hidden variety on the garden floor

Urban gardens are more than decorative green spots. Their different ground covers—such as herbs, grass, straw mulches, woodchips, stones and exposed soil—offer food and shelter for pollinators, hunting grounds for pest-eating insects, and living space for soil organisms. Together, they help cycle nutrients, hold soil in place and connect wildlife across otherwise built-up neighborhoods. Because these features vary over just a few meters, they are hard to capture with coarse satellite images or time-consuming field surveys, leaving a major information gap for people trying to design wildlife-friendly cities.

Drones as flying magnifying glasses

To close this gap, the researchers flew a small camera-equipped drone over five community gardens in Munich, Germany, during several visits in 2021 and 2022. From 2,521 raw photographs, they stitched 24 ultra-sharp “orthomosaics”: map-like images in which each pixel covers only 3.2 to 7.9 millimeters of ground. At that resolution, individual plants, paths and mulch layers are clearly visible. The team then carefully traced thousands of regions by hand, labeling eight common ground-cover types: grass, herbaceous plants, litter such as dead leaves, bare soil, stone, straw, solid wood (like boards and logs) and loose woodchips. The resulting label layers act as reference maps for what the camera actually sees on the ground.
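To get a feel for what those pixel sizes mean, the short Python sketch below works out how many pixels it takes to cover one square meter of garden at the finest and coarsest resolutions quoted above. The function name is made up for illustration and is not part of the published dataset or code.

```python
# Back-of-the-envelope arithmetic on the pixel sizes quoted in the text.
# `pixels_per_square_meter` is a hypothetical helper, not part of the dataset's code.

def pixels_per_square_meter(pixel_size_mm: float) -> float:
    """Number of square pixels of the given side length that cover one square meter."""
    pixels_per_meter = 1000.0 / pixel_size_mm  # 1 m = 1000 mm
    return pixels_per_meter ** 2


for size_mm in (3.2, 7.9):
    count = pixels_per_square_meter(size_mm)
    print(f"{size_mm} mm per pixel -> about {count:,.0f} pixels per square meter")
```

Under these numbers, a single square meter of garden is described by roughly 98,000 pixels at 3.2 millimeters and roughly 16,000 pixels at 7.9 millimeters.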

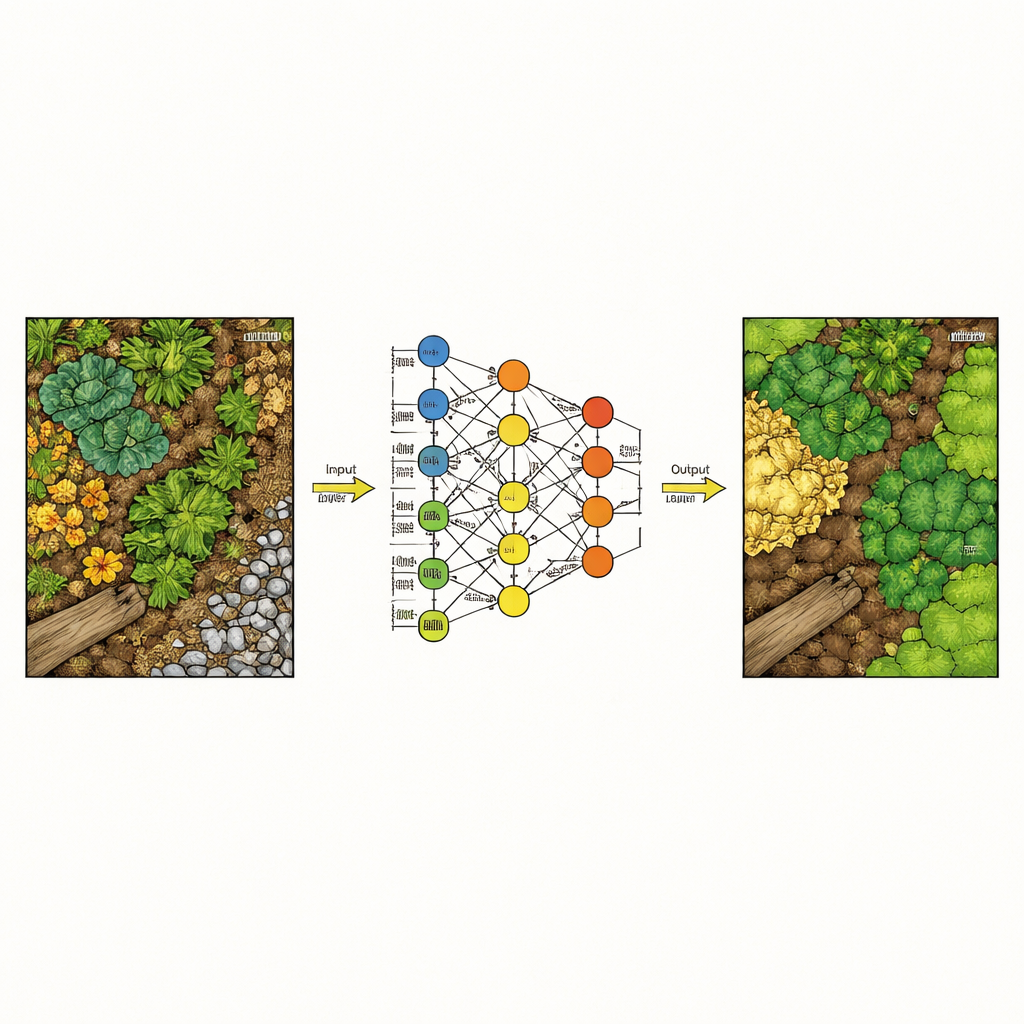

Teaching computers to read garden patterns

With these labeled images in hand, the team tested how well different computer models can automatically recognize each ground-cover type, pixel by pixel. They compared traditional techniques, which rely on hand-crafted texture and color measures, with deep learning approaches that learn patterns directly from image patches. To keep the task realistic, they divided the 24 mosaics into separate training, validation and test groups so that models were always evaluated on garden images they had never “seen” during training. Two deep learning models—UNet and especially DeepLabV3+—clearly outperformed classic methods like Random Forests and gradient-boosted trees, correctly assigning around 93% of pixels overall and handling visually tricky distinctions such as soil versus stone much better.
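The two ideas in this paragraph, keeping whole mosaics apart when splitting the data and scoring a model by the share of correctly labeled pixels, can be sketched in a few lines of Python. This is an illustration only: the 16/4/4 split, the random seed, the class numbering and the toy labels are all assumptions, not the study's actual settings or results.

```python
import random

# Class names follow the eight ground-cover types in the text;
# the numeric order (0..7) is an assumption made for this sketch.
CLASSES = ["grass", "herbaceous plants", "litter", "bare soil",
           "stone", "straw", "wood", "woodchips"]


def split_mosaics(mosaic_ids, n_val, n_test, seed=0):
    """Assign each whole mosaic to exactly one of train, validation or test."""
    ids = list(mosaic_ids)
    random.Random(seed).shuffle(ids)
    test = ids[:n_test]
    val = ids[n_test:n_test + n_val]
    train = ids[n_test + n_val:]
    return train, val, test


def overall_pixel_accuracy(true_labels, predicted_labels):
    """Fraction of pixels whose predicted class equals the hand-drawn label."""
    if len(true_labels) != len(predicted_labels):
        raise ValueError("label sequences must have the same length")
    if not true_labels:
        raise ValueError("at least one pixel is required")
    correct = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return correct / len(true_labels)


# 24 mosaics as in the study; the group sizes below are assumed, not reported.
train, val, test = split_mosaics(range(24), n_val=4, n_test=4)

# Toy example: eight pixels, two of them misclassified.
truth = [0, 0, 3, 4, 4, 7, 1, 1]
guess = [0, 0, 4, 4, 4, 7, 1, 2]
print(overall_pixel_accuracy(truth, guess))  # 0.75
```

Splitting by mosaic rather than by pixel or patch is what keeps neighboring pixels from the same image out of both the training and the test group.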

What the new dataset offers

The heart of this work is an openly available dataset rather than a single finished map. It includes the full drone mosaics, matching label masks, and a patch-based version that is easier to feed into modern neural networks. A companion metadata table lists details such as flight height, image size and processing settings, making the data reusable for many purposes. Researchers can build new algorithms, compare different modeling strategies, or combine these maps with other information—such as insect counts or soil samples—to explore how specific ground-cover mixes support particular ecological benefits.
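As a rough picture of what a patch-based version involves, the Python sketch below cuts an image and its label mask into matching square tiles. The patch size, the choice to skip incomplete tiles at the edges, and the function names are assumptions for illustration; the dataset's own tiling settings may differ.

```python
def patch_windows(height, width, patch_size):
    """Yield (top, left) corners of non-overlapping patches that fit fully inside the image."""
    for top in range(0, height - patch_size + 1, patch_size):
        for left in range(0, width - patch_size + 1, patch_size):
            yield top, left


def cut_patches(image, mask, patch_size):
    """Cut an image and its label mask (2D lists of equal shape) into aligned patch pairs."""
    height, width = len(image), len(image[0])
    pairs = []
    for top, left in patch_windows(height, width, patch_size):
        rows = slice(top, top + patch_size)
        cols = slice(left, left + patch_size)
        image_patch = [row[cols] for row in image[rows]]
        mask_patch = [row[cols] for row in mask[rows]]
        pairs.append((image_patch, mask_patch))
    return pairs


# Toy 4 x 6 "mosaic" with a mask that labels the left half 0 and the right half 4.
toy_image = [[r * 6 + c for c in range(6)] for r in range(4)]
toy_mask = [[0 if c < 3 else 4 for c in range(6)] for r in range(4)]

patches = cut_patches(toy_image, toy_mask, patch_size=2)
print(len(patches))  # 6
```

Using the same windows for the image and the mask keeps every patch paired with the correct labels, which is what a segmentation network needs during training.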

From pixels to greener cities

For non-specialists, the main takeaway is that drones can now map the fine-grained fabric of city gardens in enough detail for computers to tell where grass ends and woodchips begin. The study shows that deep learning models can translate this visual mosaic into reliable ground-cover maps, which in turn can inform better garden design and management for biodiversity, pest control and soil health. By releasing their data and code to the public, the authors provide a foundation for future tools that could help gardeners, city planners and ecologists see how small design choices on the ground scale up to healthier urban ecosystems.

Citation: Afrasiabian, Y., Lu, C., Belwalkar, A. et al. A drone imagery dataset for semantic segmentation of urban garden ground covers in biodiversity studies. Sci Data 13, 590 (2026). https://doi.org/10.1038/s41597-026-07152-z

Keywords: urban gardens, drone imagery, semantic segmentation, ground cover, biodiversity monitoring