Clear Sky Science · en

Distributed multisensor ISAC

Turning Phone Networks into Invisible Radar

Imagine if the same wireless networks that connect our phones could also watch over roads, protect power plants, and spot rogue drones—without installing a single extra radar tower. This paper explores how future 6G mobile networks can double as a vast, distributed sensing system, using their existing radio signals to detect and track objects in the environment, much like a giant, invisible radar spread across cities, highways, and the skies.

Seeing with Many Ears Instead of One Eye

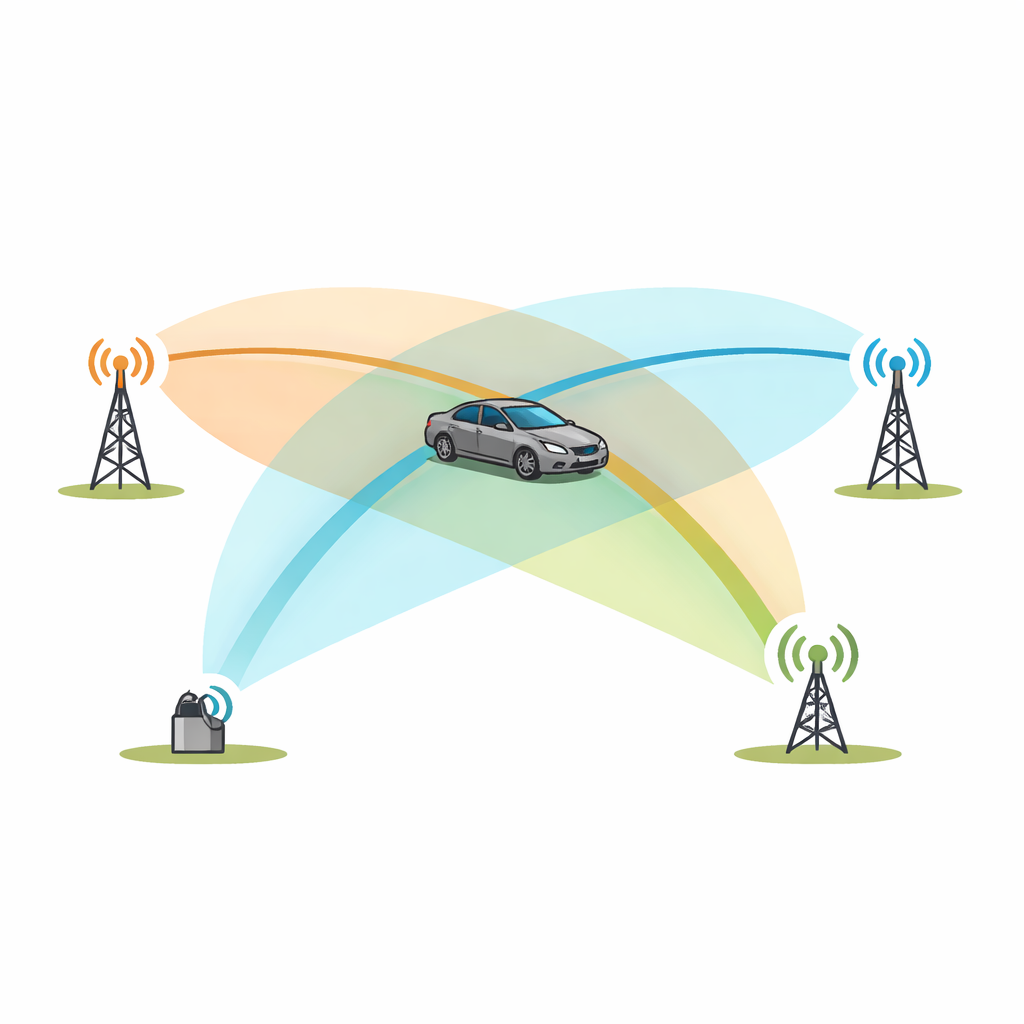

Traditional radar works like a powerful spotlight and a camera mounted in one place: it sends out its own pulses and listens for the echoes from targets. The authors instead propose “distributed multi-sensor ISAC,” where many radio nodes (cell towers, remote radio units, and even vehicles or drones) cooperate. Each one may transmit or receive ordinary communication signals, but together they form a web of measurement paths that bounce off objects in the scene. By comparing the travel time (delay) and the Doppler frequency shift of these reflections across multiple transmitter–receiver pairs, the network can infer where targets are and how they move in three dimensions, even if no single node has a perfect view.
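The geometry behind this can be made concrete with a short Python sketch. It is a simplified two-dimensional model under stated assumptions, not the authors' method: the node positions, the target position and the wavelength are made up, and the position is found by a brute-force grid search rather than the estimators used in the paper. Each transmitter–receiver pair measures a bistatic range (the total path length from transmitter to target to receiver, i.e. the delay times the speed of light), which confines the target to an ellipse with the two nodes as foci; several pairs together pin it down. The Doppler shift follows from how fast that path length changes.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def bistatic_range(tx, rx, p):
    """Total path length transmitter -> target p -> receiver, in metres."""
    return math.dist(tx, p) + math.dist(p, rx)


def bistatic_delay(tx, rx, p):
    """Echo delay in seconds for the same path."""
    return bistatic_range(tx, rx, p) / C


def bistatic_doppler(tx, rx, p, v, wavelength):
    """Doppler shift (Hz) of the echo from a target at p moving with velocity v.

    The shift is minus the rate of change of the bistatic range divided by the
    wavelength, so a target that shortens the path gives a positive shift.
    """
    rate = 0.0
    for node in (tx, rx):
        d = math.dist(p, node)
        # Component of v along the unit vector pointing from the node to p.
        rate += sum(vi * (pi - ni) / d for vi, pi, ni in zip(v, p, node))
    return -rate / wavelength


def locate(tx, receivers, measured, grid_step=1.0, extent=100.0):
    """Brute-force 2-D position estimate from the bistatic ranges of several pairs.

    Every grid point in [0, extent] x [0, extent] is scored by the squared
    mismatch between predicted and measured bistatic ranges.
    """
    best, best_err = None, float("inf")
    n = int(extent / grid_step) + 1
    for i in range(n):
        for j in range(n):
            p = (i * grid_step, j * grid_step)
            err = sum((bistatic_range(tx, rx, p) - r) ** 2
                      for rx, r in zip(receivers, measured))
            if err < best_err:
                best, best_err = p, err
    return best


if __name__ == "__main__":
    # Hypothetical layout: one transmitter, three receivers, target at (40, 30) m.
    tx = (0.0, 0.0)
    receivers = [(100.0, 0.0), (0.0, 100.0), (100.0, 100.0)]
    measured = [bistatic_range(tx, rx, (40.0, 30.0)) for rx in receivers]
    print(locate(tx, receivers, measured))  # (40.0, 30.0)
```

The target is recovered exactly only because the synthetic ranges are noise-free and the true position lies on the search grid; real measurements would call for a least-squares fit or a tracking filter. Placing transmitter and receiver at the same spot reduces `bistatic_doppler` to the familiar monostatic radar case.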

Three Ways a Network Can Sense the World

The paper outlines three main architectural flavors. In infrastructure-only sensing, only fixed network elements, such as base stations and small “sniffer” receivers, participate. This is well suited to watching over large industrial sites, ports, power lines, or highway junctions around the clock, without involving users’ devices or their data. In uplink/downlink sensing, user devices such as cars or drones become part of the sensing loop: sometimes they illuminate the scene, sometimes they act as mobile receivers, adding new vantage points where the infrastructure is sparse or blocked. A third mode relies on direct device-to-device links, forming ad hoc meshes of cars or drones that exchange both communication and sensing signals. In dense traffic or drone swarms, this “sidelink” sensing can piece together a shared view of the surroundings that is richer than what any single vehicle’s onboard sensors can provide.

From Messy Echoes to Clear Pictures

Real-world radio signals bounce, scatter, and diffract off buildings, vehicles, and the ground, creating a tangle of overlapping echoes. For communications, much of this is treated as a nuisance to be equalized away; for sensing, it can be both a challenge and an asset. The authors explain how the network can reconstruct the actual transmitted waveform at the receiver, then use advanced signal processing to separate useful target echoes from clutter. Instead of relying on simple grid-based Fourier transforms, which break down when radio resources are sparse and fragmented, they advocate model-based estimation that fits a small number of paths with specific delays and Doppler shifts directly to the measured data. This allows high-resolution ranging and speed estimation even when only a subset of frequencies and time slots is available, as is typical in a busy 5G/6G frame.
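The idea of fitting a few paths directly to fragmented data can be sketched in Python. This is a minimal illustration under stated assumptions, not the authors' estimator: the channel estimate `h` is assumed to be already available on the occupied subcarriers, the 30 kHz spacing and the three fragmented blocks are hypothetical, Doppler is left out, and a brute-force least-squares search over a 10 ns delay grid stands in for a proper high-resolution estimator. Because the two-path model is evaluated only on the subcarriers that were actually used, the gaps in the allocation never enter the calculation, whereas an FFT expects a contiguous, evenly filled band.

```python
import cmath
import math

DF = 30e3  # subcarrier spacing in Hz (assumed numerology, not from the paper)

# Hypothetical fragmented allocation: three separate 24-subcarrier blocks that
# a busy frame might leave free for sensing. The gaps are simply never used.
SUBCARRIERS = list(range(0, 24)) + list(range(200, 224)) + list(range(800, 824))


def steering(tau):
    """Response on the occupied subcarriers of a unit-amplitude path with delay tau (s)."""
    return [cmath.exp(-2j * math.pi * k * DF * tau) for k in SUBCARRIERS]


def inner(u, v):
    """Complex inner product u^H v."""
    return sum(a.conjugate() * b for a, b in zip(u, v))


def fit_two_paths(h, delay_grid):
    """Least-squares fit of the model h = a1 * e(t1) + a2 * e(t2).

    Every delay pair t1 < t2 on the grid is tried; for each pair the two complex
    amplitudes are solved from the 2x2 normal equations, and the pair with the
    smallest residual energy is returned as (residual, t1, t2, a1, a2).
    """
    vecs = [steering(t) for t in delay_grid]
    proj = [inner(v, h) for v in vecs]  # e(t)^H h for every grid delay
    n = len(SUBCARRIERS)  # e(t)^H e(t) = n because every entry has modulus 1
    best = None
    for i in range(len(delay_grid)):
        for j in range(i + 1, len(delay_grid)):
            g = inner(vecs[i], vecs[j])  # e_i^H e_j
            det = n * n - abs(g) ** 2
            # Solve [[n, g], [conj(g), n]] @ [a1, a2] = [proj_i, proj_j].
            a1 = (n * proj[i] - g * proj[j]) / det
            a2 = (n * proj[j] - g.conjugate() * proj[i]) / det
            resid = sum(abs(x - a1 * u - a2 * w) ** 2
                        for x, u, w in zip(h, vecs[i], vecs[j]))
            if best is None or resid < best[0]:
                best = (resid, delay_grid[i], delay_grid[j], a1, a2)
    return best


if __name__ == "__main__":
    # Noise-free synthetic channel: paths at 200 ns (amplitude 1) and 530 ns (0.6).
    h = [u + 0.6 * w for u, w in zip(steering(200e-9), steering(530e-9))]
    grid = [i * 10e-9 for i in range(100)]  # 0 to 990 ns in 10 ns steps
    _, t1, t2, a1, a2 = fit_two_paths(h, grid)
    print(round(t1 * 1e9), round(t2 * 1e9))  # 200 530
```

A practical estimator would refine the delays continuously around the best grid pair, add Doppler as a second dimension, and cope with noise and an unknown number of paths; those steps are omitted to keep the sketch short.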

Working Together in Space, Time, and Frequency

Because many nodes share the same airwaves, their sensing activities must be carefully scheduled. The paper describes how time and frequency “resource blocks” can be carved up so that multiple sensing links coexist without stepping on each other, while still leaving room for normal data traffic. Some blocks may be placed at the edges of the available spectrum to sharpen distance resolution, which depends on the total bandwidth spanned rather than on the amount of spectrum actually occupied; others are scheduled often enough in time that moving targets can be followed without Doppler ambiguity. Antenna arrays add another layer: by steering beams toward targets or along key directions, the network can suppress clutter and improve sensitivity. Over a larger area, multiple sites or swarms share their local estimates and fuse them into tracks of cars, drones, or people, helped by classical tracking methods such as Kalman filtering and by more recent machine learning techniques.
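The effect of block placement and timing can be checked with two standard rules of thumb, sketched here in Python: the achievable path-length resolution is roughly the speed of light divided by the bandwidth spanned by the allocated blocks, and sensing symbols repeated every T seconds can capture Doppler shifts up to 1/(2T) without aliasing. The block edges and the symbol spacing below are hypothetical numbers chosen for illustration, not values from the paper.

```python
C = 299_792_458.0  # speed of light in m/s


def path_length_resolution(blocks_hz):
    """Rule-of-thumb path-length resolution c / B_span in metres.

    blocks_hz lists (low, high) edges of the allocated blocks in Hz. The
    resolution depends on the span from the lowest to the highest allocated
    frequency, not on how much spectrum inside that span is actually used.
    For a monostatic radar the range resolution would be half of this value.
    """
    span = max(high for _, high in blocks_hz) - min(low for low, _ in blocks_hz)
    return C / span


def max_unambiguous_doppler(symbol_spacing_s):
    """Largest Doppler magnitude in Hz that sensing symbols spaced T apart can
    sample without aliasing: 1 / (2 T)."""
    return 1.0 / (2.0 * symbol_spacing_s)


if __name__ == "__main__":
    # Hypothetical numbers: 10 MHz of sensing resources inside a 100 MHz channel,
    # frequencies given relative to the lower channel edge.
    contiguous = [(0.0, 10e6)]
    edges = [(0.0, 5e6), (95e6, 100e6)]
    print(f"{path_length_resolution(contiguous):.2f} m")  # 29.98 m
    print(f"{path_length_resolution(edges):.2f} m")  # 3.00 m
    print(f"{max_unambiguous_doppler(0.5e-3):.0f} Hz")  # 1000 Hz
```

Both allocations occupy the same 10 MHz, yet splitting it between the two edges of a 100 MHz channel improves the nominal resolution tenfold. The price is a response with strong sidelobes and ambiguous peaks, which is one reason sparse allocations pair well with the model-based estimation described earlier.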

From Concept to Guardian Network

To show that these ideas work in practice, the authors report a field experiment with one transmitter, two receivers, and a moving car. In simple time-versus-range plots, the car’s echo is almost invisible amid stronger reflections from buildings. Once the data are processed into a joint range–Doppler view, however, the moving car stands out clearly from the static background. By combining measurements from both receivers, the network can estimate the car’s position, though the geometry in this small test is not yet optimal. Scaling up to dense 6G deployments, the same principles could give mobile operators a new role: providing “sensing as a service” for traffic safety, drone management, and protection of critical infrastructure, all by reusing the radios and spectrum they already operate.
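The step from a range-only view to a range–Doppler view can be reproduced on synthetic data with a short Python sketch. It assumes channel estimates arranged as symbols × subcarriers and uses a made-up, noise-free scene: a static reflector ten times stronger than a moving car. Every per-symbol range profile is dominated by the static echo, but the car's echo rotates in phase from symbol to symbol, so a transform across symbols moves it into its own Doppler bin. The mean-subtraction step that removes zero-Doppler clutter is a simple choice made for this illustration, not necessarily the processing chain used in the field experiment.

```python
import cmath
import math


def dft(x, sign):
    """Unscaled DFT of a list: sign=-1 for the forward, +1 for the inverse kernel."""
    n = len(x)
    return [sum(x[k] * cmath.exp(sign * 2j * math.pi * k * m / n) for k in range(n))
            for m in range(n)]


def range_doppler_map(h):
    """Magnitude map rd[doppler_bin][delay_bin] from channel estimates h[symbol][subcarrier]."""
    num_sym, num_sc = len(h), len(h[0])
    # 1) Inverse DFT over subcarriers: one delay (range) profile per symbol.
    profiles = [[v / num_sc for v in dft(row, +1)] for row in h]
    rd = [[0.0] * num_sc for _ in range(num_sym)]
    for n in range(num_sc):
        slow_time = [profiles[m][n] for m in range(num_sym)]
        # 2) Remove the mean over symbols: echoes whose phase never changes
        #    (static clutter at zero Doppler) cancel out.
        mean = sum(slow_time) / num_sym
        # 3) DFT over symbols: the phase rotation of a moving echo becomes a Doppler bin.
        spectrum = dft([s - mean for s in slow_time], -1)
        for d in range(num_sym):
            rd[d][n] = abs(spectrum[d]) / num_sym
    return rd


def strongest_cell(rd):
    """(doppler_bin, delay_bin) of the largest value in the map."""
    return max(((d, n) for d in range(len(rd)) for n in range(len(rd[0]))),
               key=lambda cell: rd[cell[0]][cell[1]])


def make_scene(num_sym=16, num_sc=32):
    """Hypothetical noise-free scene: a strong static reflector (amplitude 10,
    delay bin 5) and a weak moving car (amplitude 1, delay bin 12, Doppler bin 3)."""
    return [[10 * cmath.exp(-2j * math.pi * k * 5 / num_sc)
             + cmath.exp(-2j * math.pi * k * 12 / num_sc)
             * cmath.exp(2j * math.pi * m * 3 / num_sym)
             for k in range(num_sc)]
            for m in range(num_sym)]


if __name__ == "__main__":
    rd = range_doppler_map(make_scene())
    print(strongest_cell(rd))  # (3, 12): the car, not the static reflector
```

Naive DFTs keep the sketch free of dependencies; with real measurements one would use an FFT library, apply windowing to control sidelobes, and detect targets against a noise threshold rather than simply taking the strongest cell.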

Citation: Thomä, R., Andrich, C., Döbereiner, M. et al. Distributed multisensor ISAC. npj Wirel. Technol. 2, 22 (2026). https://doi.org/10.1038/s44459-026-00041-2

Keywords: integrated sensing and communication, distributed MIMO radar, 6G mobile networks, wireless localization, smart infrastructure