Clear Sky Science · en

Modeling head- and pinna-related transfer functions using boundary elements and finite differences with volume penalization

Why the shape of your ears matters for virtual sound

When you put on headphones and hear a sound as if it comes from behind or above you, your brain is using tiny acoustic cues created by the unique shape of your head and ears. This paper explores how to simulate those cues on a computer with high realism, without spending hours measuring every listener in a lab. The authors compare two advanced numerical approaches to see how well they can mimic the way sound bends, reflects, and diffracts around the head and outer ear.

How our ears encode three-dimensional sound

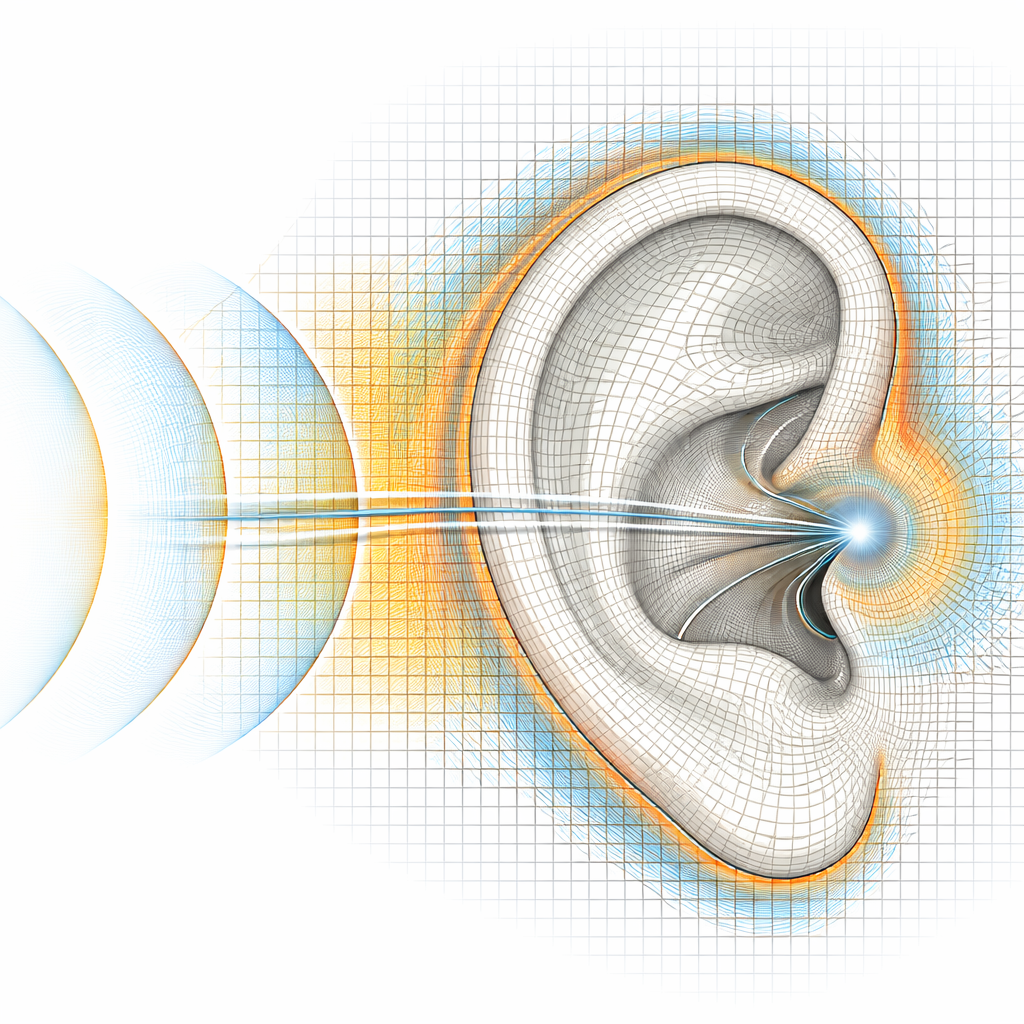

Each of us has a personal “acoustic fingerprint” called a head-related transfer function, or HRTF. As sound waves hit the torso, head, and the intricate folds of the outer ear, certain frequencies are boosted while others are damped. These changes differ with direction: front versus back, above versus below, left versus right. The brain has learned to read these patterns to tell where sounds come from. For convincing virtual and augmented reality, audio engineers want HRTFs that are tailored to each listener and sampled very densely in space. Measuring them directly around a real person or a dummy head is possible, but slow, technically demanding, and prone to small positioning errors that can audibly affect the result.

Two mathematical lenses on the same listening problem

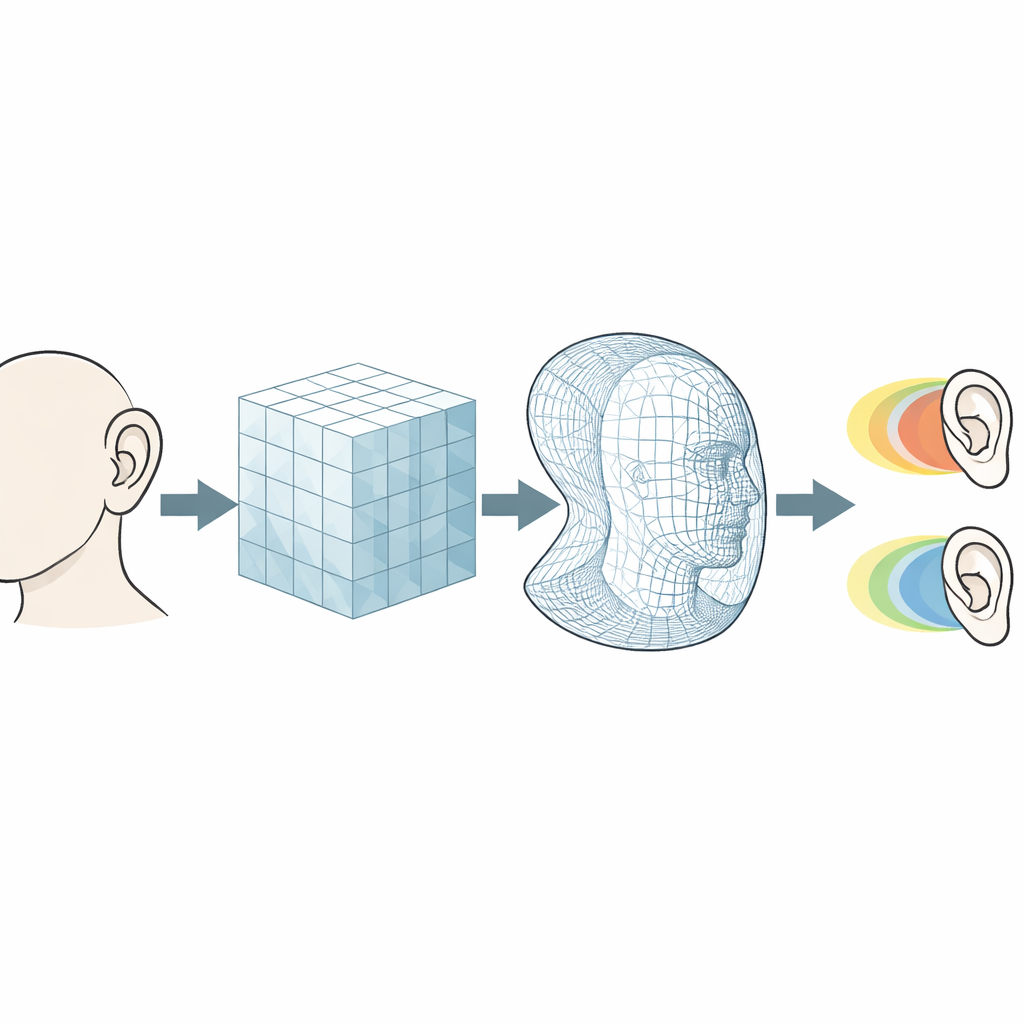

To sidestep lengthy measurements, researchers simulate sound propagation around highly detailed 3D models of the head and ear. This study compares two leading strategies. One, known as a boundary element method, only describes the surface of the head and ear and solves how that surface scatters sound. The other, called a finite-difference time-domain method, fills a volume of space around the head with a grid and steps sound waves forward in time. The volumetric method is more flexible but can become very costly for large regions. The authors enhance it with a “volume penalization” trick: instead of approximating the ear surface as a jagged staircase on the grid, they smoothly blend between air and solid material across a thin layer, which greatly improves how reflections and shadows are represented.

Testing the models on simple shapes and a real ear

Before trusting the methods on a full human head, the team validates them on carefully controlled test cases. They first simulate sound diffracting around a rigid sphere, where an exact textbook solution is known. Both methods closely track this reference across the audible range, with the boundary method and finely spaced grids in the volumetric method staying within fractions of a decibel. Next they study a single flat wall to find how many grid points are needed in the transition layer so that reflected sound has the correct peaks and notches. From these tests they derive simple rules that link grid spacing to the minimum wall thickness that can be modeled without introducing errors that might be heard. Applying those rules, they simulate a high-resolution 3D-printed pinna and compare the results to precise measurements. With sufficiently fine grids, the simulated ear responses differ from the measured ones by roughly one decibel on average—close to the threshold where coloration becomes noticeable in listening tests.

From an isolated ear to a complete head

As a final step, the authors simulate a full head mesh commonly used in 3D audio research. They compute how sound arriving from many horizontal directions is transformed at the blocked ear canal and compare the volumetric method with penalization to the established boundary method. When the grid is fine enough to resolve the thinnest parts of the ear, the two approaches agree extremely well for most directions and frequencies, even when judged through an auditory model that predicts perceived tonal changes. Coarser grids, by contrast, shift the frequencies and strength of important peaks and notches linked to ear resonances and reflections, underscoring that geometric detail cannot be sacrificed without audible consequences.

What this means for future virtual audio

For far-field scenarios and large domains, the boundary-based approach remains more efficient on today’s computers, but the improved volumetric method offers important advantages. It can naturally handle small internal cavities, spatially varying materials, and future optimization tasks where the shape of headphones or ears themselves might be tuned in simulation. The study shows that, if grid spacing is chosen according to the derived guidelines, volume-penalized volumetric simulations can match both analytical solutions and measured ear data to within or near just-noticeable differences. In practical terms, this brings us closer to computing highly realistic, listener-specific 3D sound scenes—without having to measure every ear in the lab.

Citation: Hölter, A.B., Lemke, M., Weinzierl, S. et al. Modeling head- and pinna-related transfer functions using boundary elements and finite differences with volume penalization. npj Acoust. 2, 16 (2026). https://doi.org/10.1038/s44384-026-00052-x

Keywords: head-related transfer functions, 3D audio, numerical acoustics, outer ear simulation, virtual reality sound