Clear Sky Science

Liver transplant donor-recipient matching with offline reinforcement learning

Why these liver transplant decisions matter

For people with severe liver disease, getting a transplant can be the difference between life and death—but there are far fewer donor livers than patients who need them. Doctors must constantly decide whether to accept a particular liver for a specific patient, keep waiting for a better match, or remove someone from the waitlist if they improve or become too sick. This study explores whether a form of artificial intelligence, known as offline reinforcement learning, can learn from years of transplant data to suggest safer, smarter matching decisions that save more lives and make better use of each donated organ.

From one-time predictions to ongoing decisions

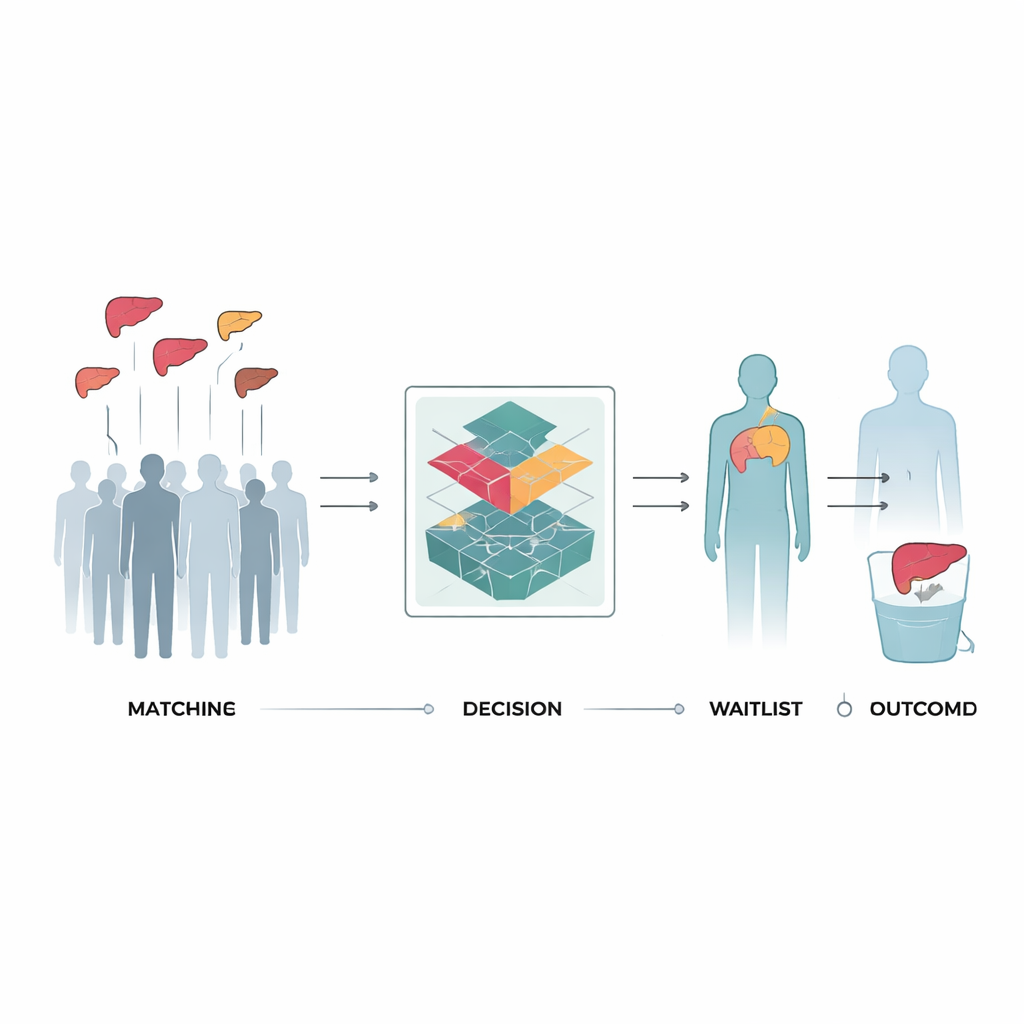

Most current tools used in liver transplantation look at a single moment in time—for example, the day of surgery—and estimate the chance a patient will survive with a given donor organ. They often rely on scores such as the Model for End-Stage Liver Disease (MELD), which summarizes lab test results into a number that helps prioritize the sickest patients. But these tools leave out a crucial part of reality: a patient’s condition changes over weeks and months, and every decision to accept or decline an offered organ affects what happens next, including the risk of dying while still on the waitlist. The authors instead framed transplant as a sequence of decisions over time, where each choice to transplant, wait, or remove a patient from the list leads to different possible futures.
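To make "summarizes lab test results into a number" concrete, here is a minimal Python sketch of the original three-lab MELD formula. It is an illustration only: the text above does not say which MELD variant the study relied on (later versions add serum sodium and other terms), the function and argument names are hypothetical, and the adjustment for patients on dialysis is left out.

```python
import math

def meld_original(bilirubin_mg_dl, inr, creatinine_mg_dl):
    """Original (pre-sodium) MELD score; illustrative only, not the study's code."""
    # Lab values below 1.0 are raised to 1.0 so the logarithms are never negative.
    bili = max(bilirubin_mg_dl, 1.0)
    inr_floor = max(inr, 1.0)
    # Creatinine is additionally capped at 4.0 mg/dL.
    creat = min(max(creatinine_mg_dl, 1.0), 4.0)
    score = (3.78 * math.log(bili)
             + 11.2 * math.log(inr_floor)
             + 9.57 * math.log(creat)
             + 6.43)
    # Scores are reported as whole numbers and capped at 40.
    return min(round(score), 40)
```

With all three labs at or below 1.0 the function returns the minimum score of 6, and sicker patients move toward the cap of 40. The score describes a single moment, which is the limitation the authors set out to address.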

Teaching a computer to learn from past transplant choices

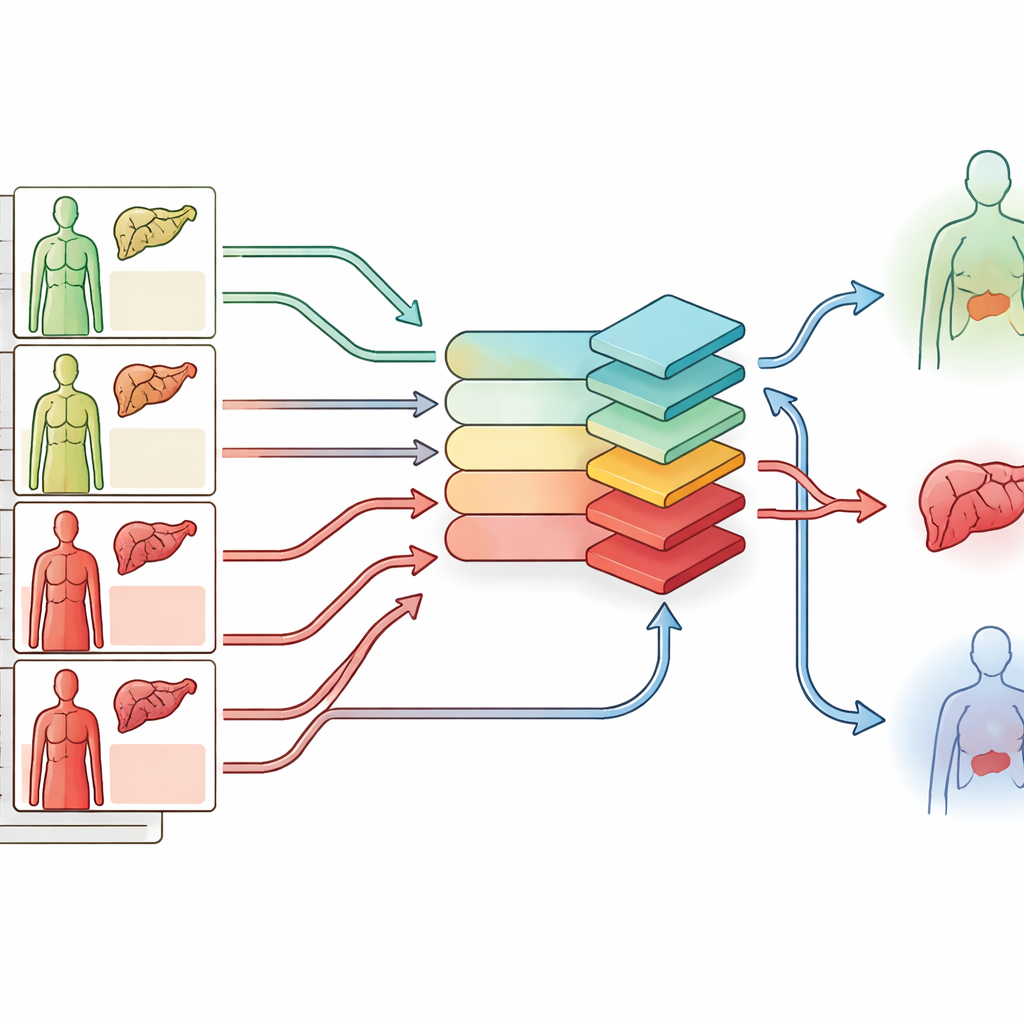

The researchers used records from the U.S. Scientific Registry of Transplant Recipients, focusing on more than 43,000 adults listed for a first-time liver transplant between 2017 and 2022. For each person, they reconstructed a timeline of MELD scores and possible donor offers, including organs that were ultimately discarded. At every step, the computer saw the patient’s characteristics, the potential donor’s features, and three possible actions: wait, transplant, or delist. It then received a reward signal based on what actually happened later—whether the patient’s condition improved or worsened, whether a transplant succeeded, failed, or never happened at all. Using a technique called Conservative Q-learning, the system was trained to favor decisions that, over many such timelines, led to fewer deaths and fewer failed transplants.
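The idea behind Conservative Q-learning can be sketched in a few lines of Python. This is a generic textbook form of the discrete-action objective, not the authors' implementation: the function names, the `alpha` weight, and the way the learning targets are supplied are all assumptions made for illustration. The loss has two parts: an ordinary term that pulls the value of the action actually taken toward its observed target, and a penalty that pushes down the values of actions the historical data never tried in that situation.

```python
import math

# The three actions named in the text.
ACTIONS = ("wait", "transplant", "delist")

def logsumexp(values):
    """Numerically stable log(sum(exp(v))) over a list of floats."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))

def cql_loss(q_values, logged_actions, td_targets, alpha=1.0):
    """Average discrete-action CQL loss over a batch (illustrative sketch).

    q_values       -- list of rows, one estimated value per action in ACTIONS
    logged_actions -- index of the action actually taken in the historical data
    td_targets     -- target value for the logged action (reward plus
                      discounted value of the next step), assumed precomputed
    alpha          -- hypothetical weight on the conservative penalty
    """
    td_term = 0.0
    conservative_term = 0.0
    for q_row, action, target in zip(q_values, logged_actions, td_targets):
        q_taken = q_row[action]
        # Standard squared error between the estimate and its target.
        td_term += 0.5 * (q_taken - target) ** 2
        # Penalty: grows when any action looks better than the logged one.
        conservative_term += logsumexp(q_row) - q_taken
    return (td_term + alpha * conservative_term) / len(q_values)
```

The penalty is never negative, and it grows when the model assigns a high value to an action that clinicians did not actually take. That is what makes the method "conservative": it discourages the system from recommending choices the data cannot support.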

What the learned policy would have changed

When the trained system was tested on a separate group of patients, it avoided 73% of the donor–recipient pairings that in real life led to graft failure or death within a year of transplant, while still preserving 93% of the successful transplants. It also identified earlier transplant opportunities for nearly half of the patients who had died on the waitlist, suggesting that different timing or donors might have improved their chances. Importantly, unlike very aggressive strategies that simply transplant almost everyone once they are sick enough, this approach limited the overall number of transplants while still improving outcomes. In a more realistic simulation, where each donor liver could only be used once and patients left the pool after transplant or removal, the model still managed to match the majority of patients to donors while reducing avoidable failures.
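The two headline percentages can be read as simple agreement rates between the learned policy and what actually happened. The Python sketch below shows one plausible way to compute them. It assumes each historical transplant is recorded as an outcome paired with the policy's recommendation; the record format, function name, and toy data are hypothetical and are not taken from the study.

```python
def agreement_rates(records):
    """Return (share of failed transplants avoided, share of successes preserved).

    records -- list of (outcome, policy_action) pairs for transplants that
               actually took place; outcome is "success" or "failure", and
               policy_action is "wait", "transplant", or "delist".
    """
    failures = [action for outcome, action in records if outcome == "failure"]
    successes = [action for outcome, action in records if outcome == "success"]
    # A failed pairing counts as avoided if the policy would not have transplanted.
    avoided = sum(action != "transplant" for action in failures) / len(failures)
    # A successful pairing counts as preserved if the policy also chose transplant.
    preserved = sum(action == "transplant" for action in successes) / len(successes)
    return avoided, preserved

# Toy data for illustration only; these are not the study's records.
toy_records = [
    ("failure", "wait"),
    ("failure", "wait"),
    ("failure", "delist"),
    ("failure", "transplant"),
    ("success", "transplant"),
    ("success", "wait"),
]
avoided, preserved = agreement_rates(toy_records)
```

On the toy data the policy avoids three of four failed pairings and preserves one of two successful ones. Reporting both numbers together matters, because a policy that declined every offer would avoid all failures while preserving no successes.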

Clues to better matches hidden in the data

Beyond raw performance numbers, the team examined what kinds of donor–recipient pairs the system tended to favor. Compared with simple MELD-based rules, the learned strategy chose patients who were somewhat less critically ill, and donors that looked more like those in historically successful transplants—fewer marginal organs and fewer recipients needing intensive life support. Strikingly, it also rescued a subset of livers that had been discarded in practice but appeared to be of relatively good quality. The model’s transplant choices within groups already labeled “high risk” by standard scores were associated with better actual survival, even though those scores themselves did not differ, hinting that the algorithm had picked up on complex patterns that existing tools miss.

What this could mean for patients and doctors

This work does not yet deliver a bedside-ready system, but it shows that an AI method trained entirely on past data can more closely mirror the real trade-offs faced in liver transplantation—balancing the danger of waiting too long against the risk of using a poor match. By learning when to say yes, no, or not yet to each organ offer, the approach reduced simulated graft failures while keeping most successes and offered potential lifelines to many who might otherwise have died on the waitlist. With further refinement, better data, and careful testing, similar decision-support tools could help transplant teams use each donated liver more wisely and give more patients a lasting second chance.

Citation: Melehy, A., Feng, J., Amara, D. et al. Liver transplant donor-recipient matching with offline reinforcement learning. npj Digit. Med. 9, 351 (2026). https://doi.org/10.1038/s41746-026-02529-1

Keywords: liver transplantation, organ allocation, reinforcement learning, medical AI, donor-recipient matching