Clear Sky Science

A novel digital twin strategy to examine the implications of randomized clinical trials for real-world populations

Why this matters for everyday patients

When doctors read the results of a big clinical trial, a nagging question always remains: will these results really hold for the patients sitting in front of me? This study introduces a new way to answer that question using "digital twins" of clinical trials—computer-built copies of real studies that can be replayed inside different patient populations, including those drawn from electronic health records. The work focuses on blood pressure trials, but the approach could eventually help tailor evidence from almost any trial to the people who actually show up in clinics and hospitals.

The problem with one-size-fits-all trials

Randomized clinical trials are the gold standard for figuring out whether a treatment works, but they are usually run in carefully selected groups of patients. Many everyday patients—older adults, people with multiple illnesses, or those from underrepresented communities—may not resemble the people who volunteered for the original trials. As a result, doctors often have to guess how much to trust trial results for their own patients. This problem becomes especially troubling when different trials of what looks like the same treatment come to conflicting conclusions, leaving clinicians and guideline writers uncertain about what to recommend.

A puzzling disagreement between two blood pressure trials

The researchers focus on a well-known puzzle. One major trial, SPRINT, showed that aggressively lowering systolic blood pressure (aiming below 120 mmHg) clearly reduced major heart and blood vessel problems compared with standard care (aiming below 140 mmHg). Another trial, ACCORD, tested the same aggressive strategy but in people with type 2 diabetes and did not find a clear benefit. Many explanations have been suggested, including differences in who was enrolled and how often events occurred, but there has been no rigorous way to "transport" the result from one trial’s population into another and see whether the outcome would change.

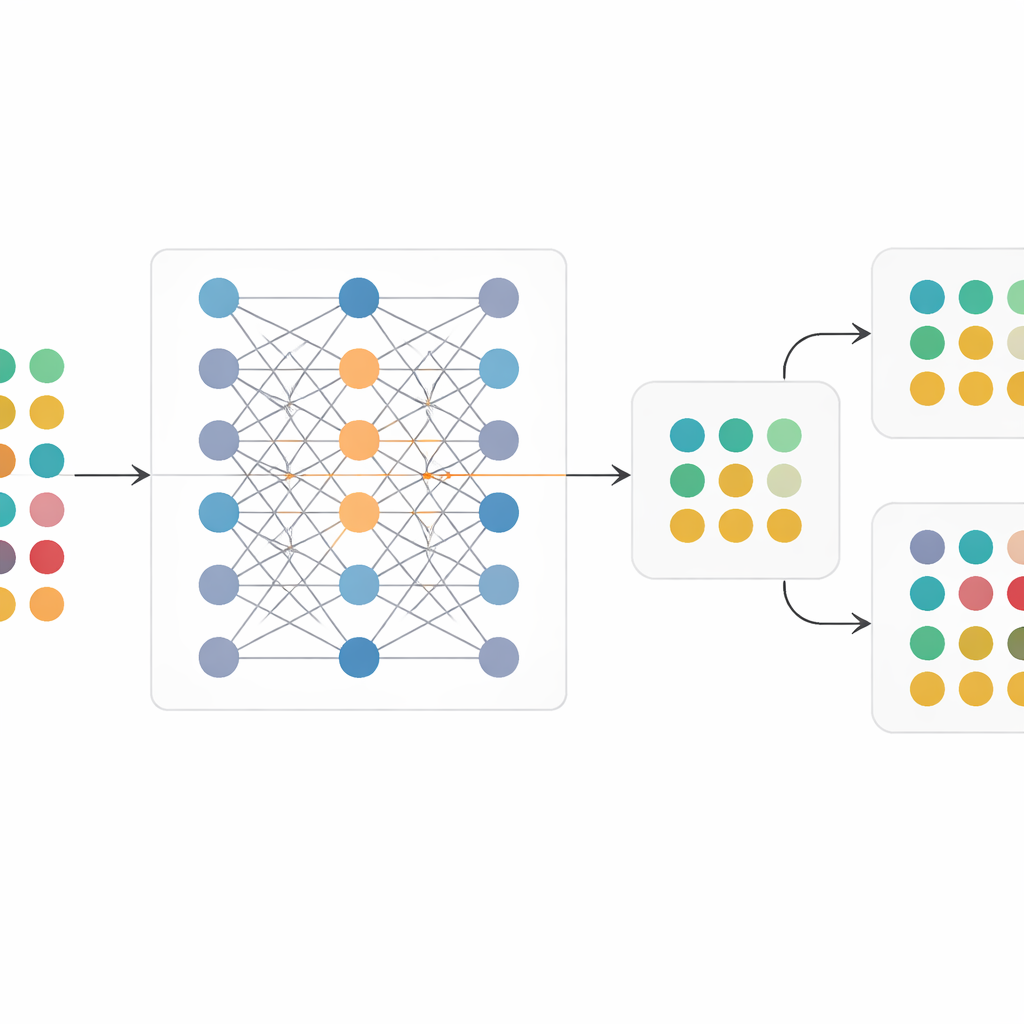

Building a digital twin of a trial

To tackle this, the team created RCT-Twin-GAN, a deep-learning framework that builds a digital twin of a randomized trial. The method uses a type of generative model that learns how patient characteristics (such as age, kidney function, heart rate, prior heart disease, and medication use) relate to each other and to trial outcomes. Clinical expertise is built in through a directed map of cause-and-effect relationships, which guides the model toward connections that make medical sense and away from spurious patterns. Once trained on an original trial, the model can be "conditioned" on a second population: it takes in the profile of that new group and generates a synthetic version of the trial as if it had been run in those patients, while preserving randomization between treatment and control arms.
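For readers who think in code, the three steps in that last sentence (condition on a new population, keep the arms randomized, generate outcomes) can be sketched in a few lines of Python. This is a deliberately simplified toy, not RCT-Twin-GAN: plain resampling stands in for the deep generative model, and the patient profiles, the `effect_modifier` flag, and the risk numbers in `toy_event_probability` are all made-up assumptions for illustration.

```python
import random

# Hypothetical target-population profiles (illustrative values, not trial data).
TARGET_PROFILES = [
    {"age": 72, "effect_modifier": True},
    {"age": 58, "effect_modifier": True},
    {"age": 65, "effect_modifier": False},
]

def toy_event_probability(profile, arm):
    """Stand-in for a trained generator: an invented risk model, for illustration only."""
    risk = 0.10 + (0.05 if profile["age"] >= 70 else 0.0)
    # Assumed effect modification: treatment helps only when the flag is False.
    if arm == "intensive" and not profile["effect_modifier"]:
        risk *= 0.75
    return risk

def build_twin(target_profiles, n, seed=0):
    """Generate a synthetic two-arm trial of size n in the target population."""
    rng = random.Random(seed)
    # 1) Condition on the target population: draw covariate profiles from it.
    cohort = [dict(rng.choice(target_profiles)) for _ in range(n)]
    # 2) Preserve randomization: assign arms 1:1, independently of covariates.
    arms = ["intensive"] * (n // 2) + ["standard"] * (n - n // 2)
    rng.shuffle(arms)
    # 3) Generate outcomes from the (toy) model standing in for the trained one.
    for patient, arm in zip(cohort, arms):
        patient["arm"] = arm
        patient["event"] = rng.random() < toy_event_probability(patient, arm)
    return cohort

twin = build_twin(TARGET_PROFILES, n=1000, seed=42)
```

The point of the sketch is the ordering: patient profiles come from the new population, treatment assignment is shuffled without looking at those profiles, and only then are outcomes generated. In the real framework, a trained generative model guided by the causal map would take the place of the toy risk function.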

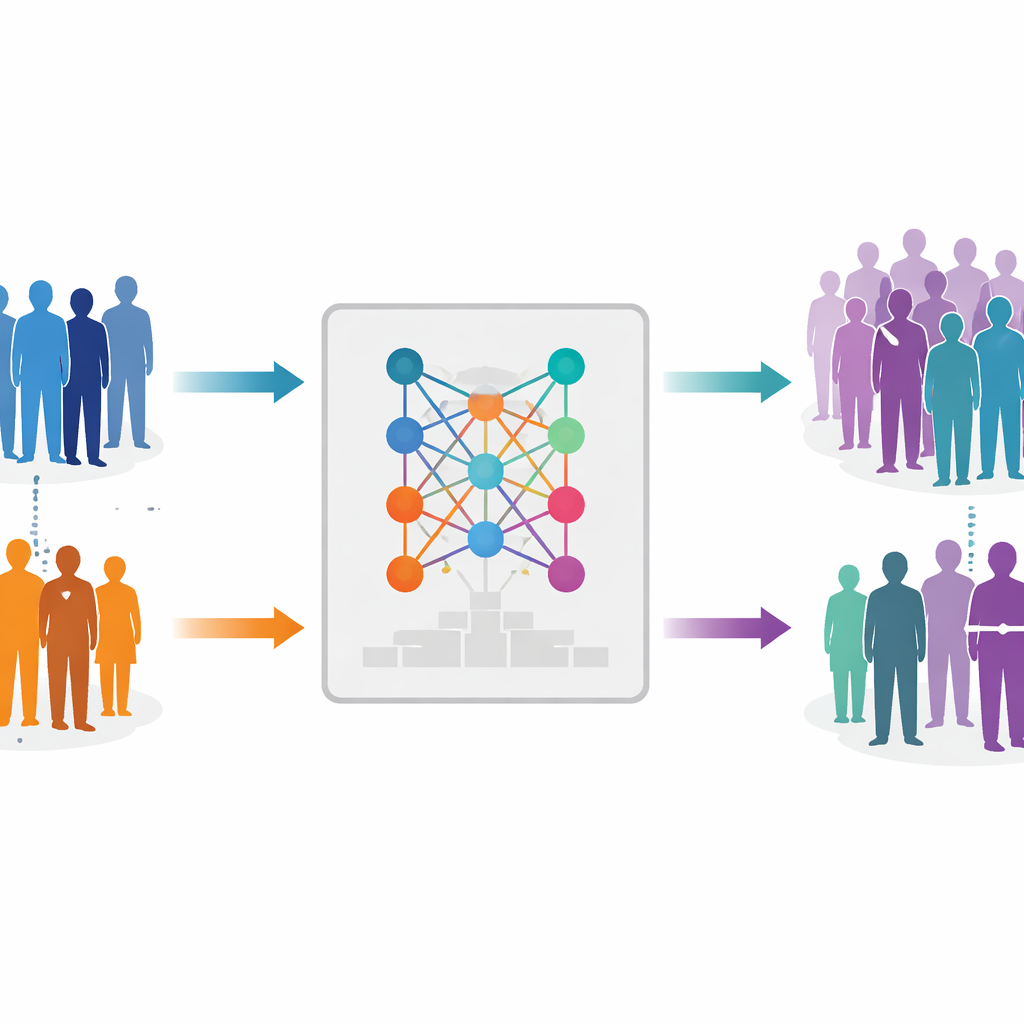

Replaying trials in new patient populations

The authors first checked that their digital twin could faithfully reproduce the original SPRINT and ACCORD trials. The synthetic versions closely matched the real trials in baseline characteristics, in relationships between variables, and, crucially, in the size of the treatment benefit (or lack of benefit) seen in each study. They then ran a cross-population test: they trained the model on SPRINT but conditioned it on the ACCORD population, and vice versa. When SPRINT was replayed inside the ACCORD population, the digital twin showed no clear advantage of intensive blood pressure control, mirroring ACCORD’s real result. When ACCORD was replayed inside the SPRINT-like population, the digital twin showed a significant benefit, echoing SPRINT. Finally, they conditioned the model on real-world patients from a large health system’s electronic health records, creating trial twins that reflected local patient profiles and estimating what the SPRINT and ACCORD interventions might have achieved in those broader groups.
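A small piece of arithmetic shows how one treatment could, in principle, produce different overall results in different populations. The sketch below assumes the treatment's effect differs between two hypothetical patient subgroups and then averages those effects over each population's mix. The subgroup names, effect sizes, and mixes are invented for illustration; they are not estimates from SPRINT, ACCORD, or the study, and this simple weighting is not the authors' method.

```python
def marginal_risk_difference(stratum_effects, population_mix):
    """Average subgroup-level risk differences over a population's subgroup mix."""
    if abs(sum(population_mix.values()) - 1.0) > 1e-9:
        raise ValueError("population mix must sum to 1")
    return sum(stratum_effects[name] * share for name, share in population_mix.items())

# Hypothetical risk differences (treated minus control); negative means fewer events.
effects = {"responsive": -0.04, "unresponsive": 0.0}

# Two hypothetical populations that differ only in their subgroup mix.
mix_a = {"responsive": 0.8, "unresponsive": 0.2}
mix_b = {"responsive": 0.1, "unresponsive": 0.9}

rd_a = marginal_risk_difference(effects, mix_a)  # roughly -0.032: a visible benefit
rd_b = marginal_risk_difference(effects, mix_b)  # roughly -0.004: close to no effect
```

With identical subgroup-level effects, population A shows a benefit about eight times larger than population B, simply because more of its patients fall in the subgroup that responds.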

What this means for care and future trials

To a layperson, the takeaway is that the conflicting results of SPRINT and ACCORD likely stem more from differences in who was studied than from the blood pressure strategy itself. The same treatment can look helpful in one mix of patients and neutral in another. RCT-Twin-GAN offers a way to explore these "what if" scenarios quantitatively, without re-running expensive and time-consuming trials. While the estimates produced for electronic health record populations are not ready to guide individual care, they highlight where trial findings may or may not generalize. Over time, approaches like this could help health systems and regulators anticipate how new treatments will perform in real-world patients and design future trials that better match the people who need answers most.

Citation: Thangaraj, P.M., Shankar, S.V., Huang, S. et al. A novel digital twin strategy to examine the implications of randomized clinical trials for real-world populations. npj Digit. Med. 9, 329 (2026). https://doi.org/10.1038/s41746-026-02464-1

Keywords: digital twins, clinical trials, blood pressure, electronic health records, generative AI