Clear Sky Science

Comparing artificial intelligence and healthcare professional performance in surgical and interventional video analysis: a systematic review and meta-analysis

Smarter Eyes in the Operating Room

Every year, hundreds of millions of people undergo operations and minimally invasive procedures guided by video—think colonoscopies, keyhole surgery, or tiny cameras snaked into blood vessels. In these moments, a doctor’s ability to spot subtle warning signs on a screen can mean the difference between catching a cancer early or missing it. This study asks a question that matters to any future patient: when surgical and interventional videos are analyzed, how well do artificial intelligence systems perform compared with human clinicians, and what happens when the two work together?

Bringing Order to a Flood of Surgical Video

Modern medicine now records vast numbers of procedure videos, from digestive endoscopies to robot-assisted operations. These recordings are rich with information: tiny polyps in the colon, early tumors in the stomach or esophagus, delicate nerves that must be avoided, or steps in a complex operation. Researchers have been training AI systems to scan these images, flag suspicious areas, and even recognize where surgeons are in a procedure. Yet until now, most studies pitted AI against doctors in artificial head-to-head contests, rather than asking how the technology might realistically be used—as a helper at the clinician’s side. This review set out to systematically collect and analyze that scattered evidence across many specialties.

What the Researchers Examined

The team searched major medical and engineering databases and started with nearly 38,000 papers. They then applied strict criteria, keeping only primary studies that used AI on real surgical or interventional videos and directly compared its performance with that of healthcare professionals. That left 146 studies. These covered a broad range of procedures, especially gastrointestinal endoscopy, but also lung, thyroid, brain, heart, and urologic interventions. Most used modern deep-learning methods, such as convolutional neural networks, trained to detect disease, recognize anatomy, grade the cleanliness of the bowel, or identify steps in an operation. Seventy-six of these studies reported enough detail to allow the authors to pool results and calculate how often AI and humans were right or wrong.

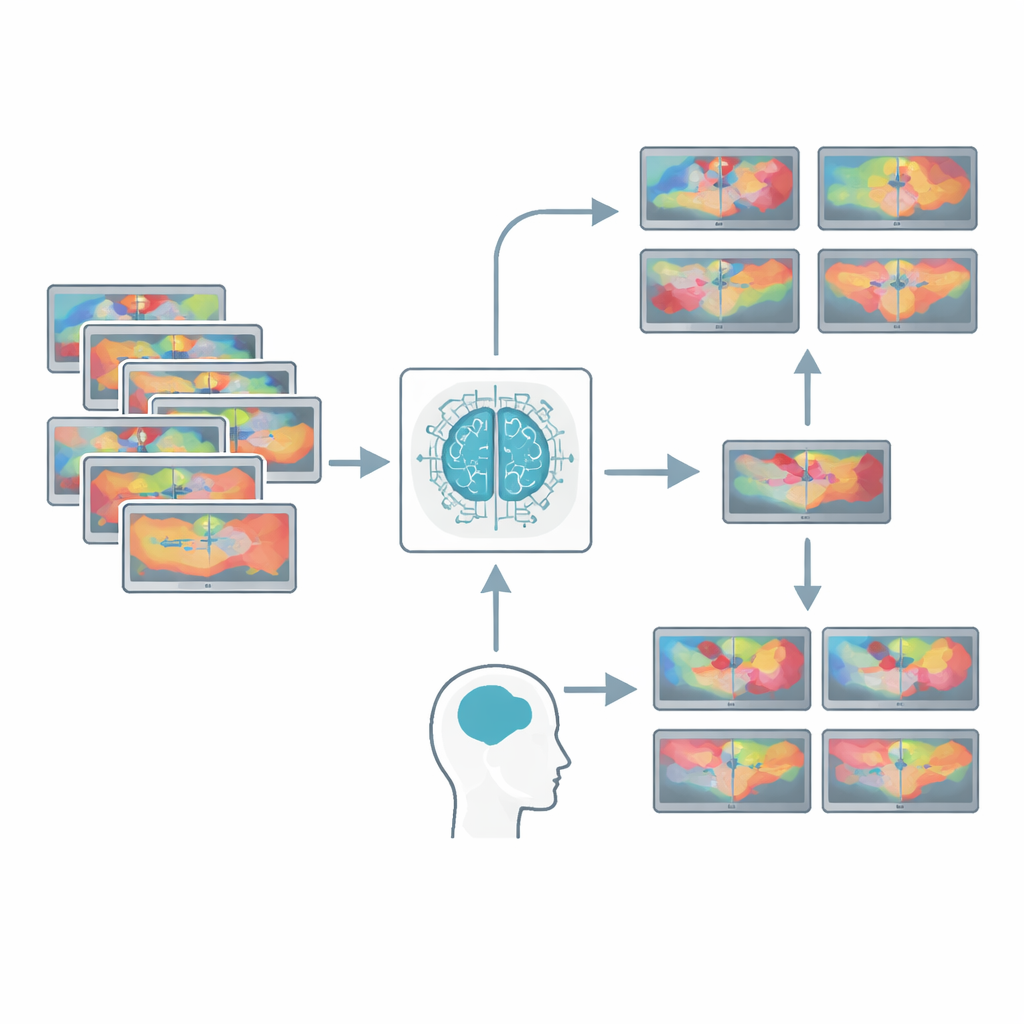

AI Alone Versus Doctors, and AI as a Teammate

When the researchers compared AI to unaided clinicians looking at the same videos, AI systems generally picked up more true problems (higher sensitivity) without causing more false alarms (similar specificity). This pattern held both when models were tested on familiar data and when they faced new, external datasets. However, the most clinically relevant finding came when AI was used as an assistant. Across a wide range of tasks, clinicians who could see AI suggestions were better at finding disease and less likely to misclassify normal tissue than those working alone. This boost was especially striking for non-experts, such as trainees, who gained the most from AI guidance. For seasoned specialists, AI-assisted clinicians and AI working alone performed at roughly similar levels, suggesting that in expert hands, the human–machine combination can match the best standalone algorithms.
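For readers who want to see how sensitivity and specificity are worked out, here is a minimal Python sketch. The counts are invented purely for illustration and are not taken from the review; the class and function names are likewise hypothetical. It shows only the standard definitions, not the pooling method the authors used in their meta-analysis.

```python
from dataclasses import dataclass


@dataclass
class ReadingCounts:
    """Outcome counts for one reader (AI or clinician) on a set of videos."""
    true_positives: int   # disease present, reader flagged it
    false_negatives: int  # disease present, reader missed it
    true_negatives: int   # no disease, reader correctly stayed silent
    false_positives: int  # no disease, reader raised a false alarm


def sensitivity(counts: ReadingCounts) -> float:
    """Share of real problems that the reader caught."""
    return counts.true_positives / (counts.true_positives + counts.false_negatives)


def specificity(counts: ReadingCounts) -> float:
    """Share of normal cases that the reader correctly left alone."""
    return counts.true_negatives / (counts.true_negatives + counts.false_positives)


# Hypothetical numbers, chosen only to illustrate the pattern described above:
# higher sensitivity for the AI, similar specificity for both readers.
ai = ReadingCounts(true_positives=90, false_negatives=10,
                   true_negatives=80, false_positives=20)
clinician = ReadingCounts(true_positives=75, false_negatives=25,
                          true_negatives=80, false_positives=20)

for name, counts in [("AI", ai), ("Clinician", clinician)]:
    print(f"{name}: sensitivity={sensitivity(counts):.2f}, "
          f"specificity={specificity(counts):.2f}")
```

In this made-up example both readers raise the same number of false alarms, but the AI misses fewer real problems. That is the kind of pattern the review reports for AI compared with unaided clinicians.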

Gaps Between Lab Conditions and Real Life

Despite these promising numbers, the review highlights a gap between how AI is currently tested and how it must work in real clinical settings. Many studies cleaned their data by removing blurry or low-quality video frames, even though real operating rooms and endoscopy suites often deal with exactly such imperfections. Others analyzed isolated snapshots instead of continuous video, sidestepping the challenge of following motion and timing. Few studies evaluated AI in real time at the bedside, and most relied on high-end equipment that may not be available in resource-limited hospitals. Reporting practices were also inconsistent: key details about how models were tuned and validated were often missing, making it difficult for others to reproduce or fairly judge the results.

Building Trustworthy Human–AI Partnerships

The authors argue that AI in surgery and interventional medicine should be developed and tested from the outset as a partner for clinicians, not a replacement. That means designing studies that mirror real-world conditions, sharing diverse video datasets across centers, and adopting clear reporting standards so other teams can verify and improve on published work. It also means training clinicians to understand AI’s strengths and biases, rather than blindly trusting or dismissing its suggestions. While the meta-analysis shows that AI can already match or surpass unaided human performance in many video-based tasks, the most meaningful benefit lies in how it can sharpen human judgment. For patients, the takeaway is not that machines will take over the operating room, but that carefully designed human–AI teams could make procedures safer, diagnoses earlier, and outcomes better.

Citation: Rafati Fard, A., Williams, S.C., Smith, K.J. et al. Comparing artificial intelligence and healthcare professional performance in surgical and interventional video analysis: a systematic review and meta-analysis. npj Digit. Med. 9, 323 (2026). https://doi.org/10.1038/s41746-026-02401-2

Keywords: surgical video AI, computer-assisted endoscopy, human–AI collaboration, medical image analysis, clinical decision support