Clear Sky Science · en

Optimized Lightweight U-Net and YOLACT framework for multi-disease severity detection in pome fruit leaves

Why smarter leaf checks matter

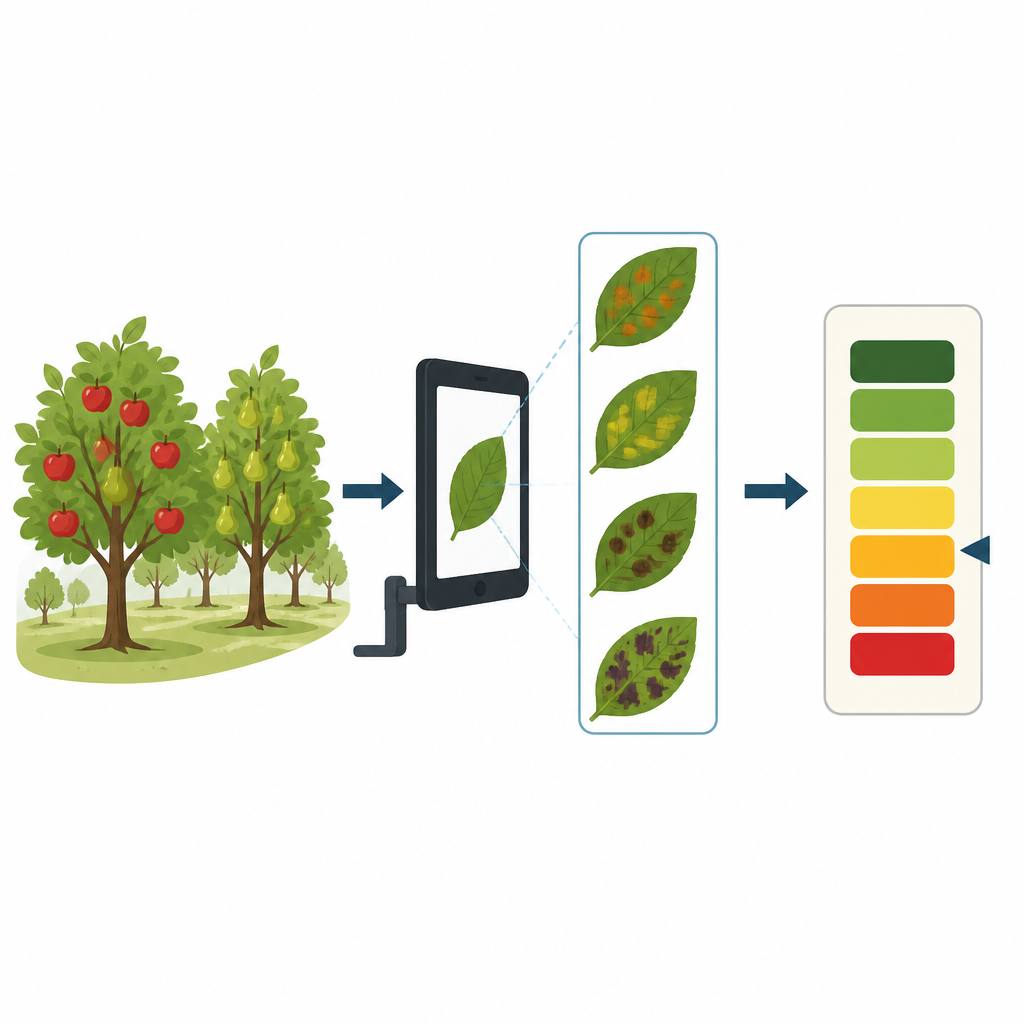

Farmers who grow apples and pears know that leaf spots and blemishes can spread fast and quietly drain a harvest. Walking through orchards and judging disease by eye is slow and often uncertain, especially when more than one infection attacks the same leaf. This study presents an automated, camera-based approach that can spot several diseases on a single pome fruit leaf and estimate how bad each infection is, offering a practical path toward faster, more reliable crop health checks.

Seeing more than the human eye

The researchers focus on pome fruits such as apples and pears, which are highly vulnerable to leaf diseases that reduce fruit quality and cut yield. Traditional inspection relies on experts visually grading symptom size and color, a process that is time-consuming and can vary from person to person. Real orchards also add cluttered backgrounds, changing light, and leaves that overlap or curl. The team set out to build a tool that could handle all this visual noise, work from ordinary color photos, and still distinguish multiple overlapping infections on a single leaf while rating how advanced each disease is.

A two-step view of sick leaves

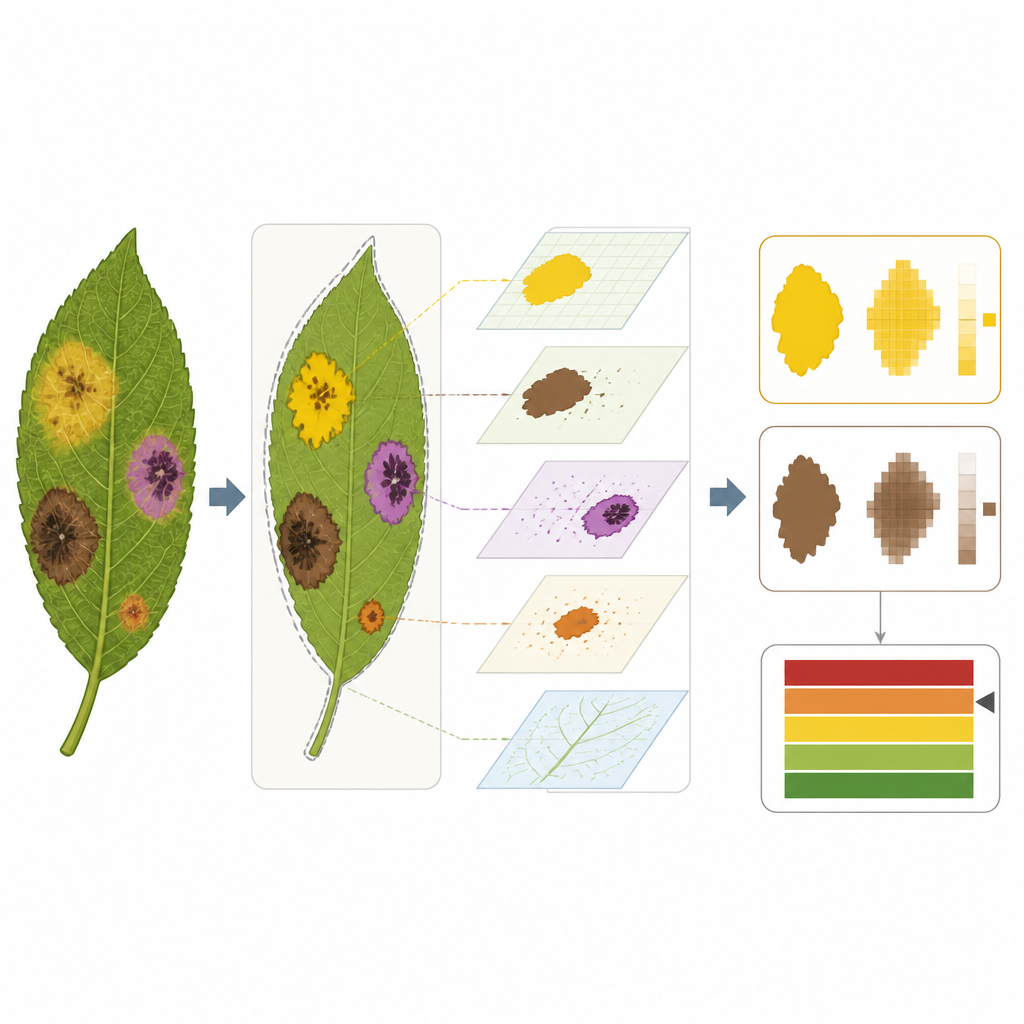

To tackle this, the study uses a two-stage computer vision pipeline. First, Lite U-Net, a streamlined version of the U-Net network widely used in medical imaging, is trained to separate the leaf from its surroundings and mark the general diseased areas. This step trims away branches, sky, and soil, ensuring that later analysis concentrates only on plant tissue. Next, Lite YOLACT, a lightened version of the YOLACT network, zooms in further to pick out each distinct diseased patch as its own object. Together, these two steps let the system recognize that a single leaf might, for example, carry both scab and rust, each with its own size and spread.
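The data flow between the two stages can be illustrated with a minimal sketch. It is not the authors' implementation: `segment_leaf` and `find_patches` are hypothetical stand-ins for the trained Lite U-Net and Lite YOLACT models, masks are represented as plain nested lists of 0 and 1, and the toy values are invented for illustration only.

```python
from typing import Callable, List, Tuple

Mask = List[List[int]]  # 1 = pixel belongs to the region, 0 = it does not


def clip_to_leaf(patch: Mask, leaf: Mask) -> Mask:
    """Keep only the patch pixels that also lie on the leaf."""
    return [[p & l for p, l in zip(prow, lrow)]
            for prow, lrow in zip(patch, leaf)]


def two_stage(image,
              segment_leaf: Callable,
              find_patches: Callable) -> Tuple[Mask, List[Tuple[str, Mask]]]:
    """Stage 1 isolates the leaf; stage 2 returns one mask per diseased patch."""
    leaf = segment_leaf(image)            # stand-in for the leaf segmenter
    patches = find_patches(image, leaf)   # stand-in for the instance segmenter
    # Discard any patch pixels that fall on background rather than leaf tissue.
    return leaf, [(label, clip_to_leaf(mask, leaf)) for label, mask in patches]


# Toy stand-ins (assumptions): a 2x3 "image" whose left column is background.
def toy_segment_leaf(image) -> Mask:
    return [[0, 1, 1],
            [0, 1, 1]]


def toy_find_patches(image, leaf) -> List[Tuple[str, Mask]]:
    return [("scab", [[1, 1, 0], [0, 0, 0]]),   # one pixel spills onto background
            ("rust", [[0, 0, 0], [0, 0, 1]])]


leaf_mask, disease_patches = two_stage(None, toy_segment_leaf, toy_find_patches)
```

Each patch keeps its own label and mask, which is what allows two diseases on the same leaf to be measured separately later on.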

Turning spots into simple scores

Once each diseased area is outlined, the method measures how much of the leaf surface is covered by each infection. The authors design a new nine-level severity scale that translates these percentages into easy-to-understand stages, from tiny early specks to near-total damage. The scale is built to handle two diseases at once, describing whether both are mild, both are advanced, or one clearly dominates the other. To help users trust the system, the researchers add a visualization technique called Grad-CAM that produces heatmaps, showing which parts of the leaf the model relied on when deciding how severe the infections are.
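The coverage measurement described above amounts to dividing each disease's pixel area by the leaf's pixel area. The sketch below shows that arithmetic under simple assumptions: masks are nested lists of 0 and 1, the function name `coverage_percent` and the toy masks are hypothetical, and the mapping from percentages to the nine severity levels is not reproduced because this summary does not give its thresholds.

```python
from typing import Dict, List, Tuple

Mask = List[List[int]]  # 1 = pixel belongs to the region, 0 = it does not


def coverage_percent(leaf: Mask,
                     patches: List[Tuple[str, Mask]]) -> Dict[str, float]:
    """Return, per disease label, the percentage of leaf pixels it covers."""
    leaf_area = sum(sum(row) for row in leaf)
    if leaf_area == 0:
        raise ValueError("leaf mask is empty; coverage is undefined")

    totals: Dict[str, int] = {}
    for label, mask in patches:
        # Count only patch pixels that lie on the leaf.
        # Note: overlapping patches of the same disease would be counted twice.
        area = sum(p & l
                   for prow, lrow in zip(mask, leaf)
                   for p, l in zip(prow, lrow))
        totals[label] = totals.get(label, 0) + area

    return {label: 100.0 * area / leaf_area for label, area in totals.items()}


# Toy example (invented values): a 2x5 leaf with two scab pixels and one rust pixel.
leaf = [[1, 1, 1, 1, 1],
        [1, 1, 1, 1, 1]]
patches = [("scab", [[1, 1, 0, 0, 0], [0, 0, 0, 0, 0]]),
           ("rust", [[0, 0, 0, 0, 0], [0, 0, 0, 0, 1]])]

coverage = coverage_percent(leaf, patches)
print(coverage)  # {'scab': 20.0, 'rust': 10.0}
```

Keeping one percentage per disease, rather than a single total, is what makes it possible to say whether one infection dominates the other.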

Testing in orchards and digital labs

The team trains and tests their approach on two large public image collections of apple and pear leaves. These datasets include several common diseases, photographed under real field conditions and labeled by plant health specialists. After careful preprocessing, data balancing, and training on modern graphics hardware, the combined system reaches about 95 percent accuracy when grading multi-disease severity. It also runs efficiently: by using compact network designs and sharing computations between steps, the model needs fewer calculations and less memory than many standard deep learning systems, making it suitable for phones or edge devices used directly in the field.

What this means for growers

In everyday terms, this work shows that an inexpensive camera plus a lean artificial intelligence model can reliably spot and grade several leaf diseases at once on apples and pears. Instead of rough visual guesses, growers could receive consistent numeric estimates of how much of each leaf is infected and how far each disease has progressed. While the method still needs broader testing on more crops and conditions, it points toward practical digital scouts that help farmers act earlier, target treatments more precisely, and reduce both waste and chemical use in orchards.

Citation: Qasim, M., Adnan, S.M. & Safi, Q.G.K. Optimized Lightweight U-Net and YOLACT framework for multi-disease severity detection in pome fruit leaves. Sci Rep 16, 15132 (2026). https://doi.org/10.1038/s41598-026-45947-7

Keywords: plant disease detection, apple leaf disease, pear leaf disease, deep learning agriculture, disease severity grading