Clear Sky Science · en

Machine learning driven clustering for silhouetting 5G network throughput

Why faster uploads on your phone matter

Streaming a live event, joining a video call, or sending big files from your phone all depend on how quickly data can travel from your device back to the network, a path known as the uplink. While fifth‑generation (5G) mobile networks promise blazing speeds, making sure every user in a crowded area actually enjoys smooth, reliable service is far from trivial. This paper explores how simple machine learning can help a 5G cell juggle many demanding users at once, boosting upload speeds and sharing capacity among users more fairly.

A crowded small cell in the real world

The study focuses on a “picocell,” a small 5G base station that serves just fifteen nearby users within a radius of up to 250 meters—think a busy café, office floor, or stadium corner. Instead of assuming perfect conditions, the authors simulate four realistic wireless environments, including common effects such as signal fading and path loss as radio waves travel and bounce around obstacles. Each user is assigned a particular service type—audio streaming, video calls, or high‑definition video—each with a minimum data rate that must be met for the experience to feel smooth. The system calculates, for every user, how strong their signal is, how much interference they face, and the resulting data capacity of their link back to the base station.
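To make the per-user calculation concrete, here is a minimal Python sketch of how signal strength, interference, and link capacity relate. It assumes a log-distance path loss model and the Shannon capacity formula; this summary does not say which exact models the paper uses, and every parameter value below (transmit power, path loss exponent, interference, noise, bandwidth) is hypothetical.

```python
import math

def path_loss_db(distance_m, exponent=3.0, ref_loss_db=40.0, ref_distance_m=1.0):
    """Log-distance path loss in dB (illustrative parameters, not from the paper)."""
    return ref_loss_db + 10.0 * exponent * math.log10(distance_m / ref_distance_m)

def sinr_linear(tx_power_dbm, loss_db, interference_dbm, noise_dbm):
    """Signal-to-interference-plus-noise ratio as a linear (not dB) ratio."""
    signal_mw = 10 ** ((tx_power_dbm - loss_db) / 10.0)
    interference_mw = 10 ** (interference_dbm / 10.0)
    noise_mw = 10 ** (noise_dbm / 10.0)
    return signal_mw / (interference_mw + noise_mw)

def capacity_bps(bandwidth_hz, sinr):
    """Shannon capacity of the link in bits per second."""
    return bandwidth_hz * math.log2(1.0 + sinr)

# Hypothetical user 100 m from the base station.
loss = path_loss_db(100.0)
sinr = sinr_linear(tx_power_dbm=23.0, loss_db=loss,
                   interference_dbm=-90.0, noise_dbm=-100.0)
rate = capacity_bps(bandwidth_hz=1e6, sinr=sinr)
print(f"path loss {loss:.1f} dB, SINR {10 * math.log10(sinr):.1f} dB, "
      f"capacity {rate / 1e6:.2f} Mbps")
```

The point of the sketch is the chain of dependencies: a user farther away suffers more path loss, which lowers the signal-to-interference-plus-noise ratio and, in turn, the capacity available for the service they are running.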

When 5G promises fall short

Before machine learning enters the picture, the researchers examine how well this small network serves its users under conventional resource sharing, without any clustering. They find that only 9 out of 15 users (60%) actually receive enough capacity to satisfy their service’s minimum requirement. Some users enjoy several megabits per second of throughput, while others barely scrape by with a few tens of kilobits per second, leading to choppy calls or stalled video. This imbalance arises because users share radio resources in a complex, constantly changing environment, where devices at different distances and angles from the base station compete for limited bandwidth.
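The satisfaction figure is simply the share of users whose achieved rate reaches their service's minimum. The short Python sketch below shows that check on five made-up users; the rates and minimums are hypothetical values chosen for illustration, not data from the paper.

```python
def meets_minimum(rates_mbps, minimums_mbps):
    """Return one True/False flag per user: is the achieved rate at or above the minimum?"""
    return [rate >= minimum for rate, minimum in zip(rates_mbps, minimums_mbps)]

# Made-up achieved rates and service minimums for five users (Mbps).
rates = [3.2, 0.04, 1.5, 0.9, 0.07]
minimums = [2.5, 0.064, 1.0, 1.0, 0.064]

flags = meets_minimum(rates, minimums)
share = sum(flags) / len(flags)
print(f"{sum(flags)} of {len(flags)} users satisfied ({share:.0%})")
# prints "3 of 5 users satisfied (60%)"
```

Three of five is the same 60% ratio as the 9 of 15 users reported in the study.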

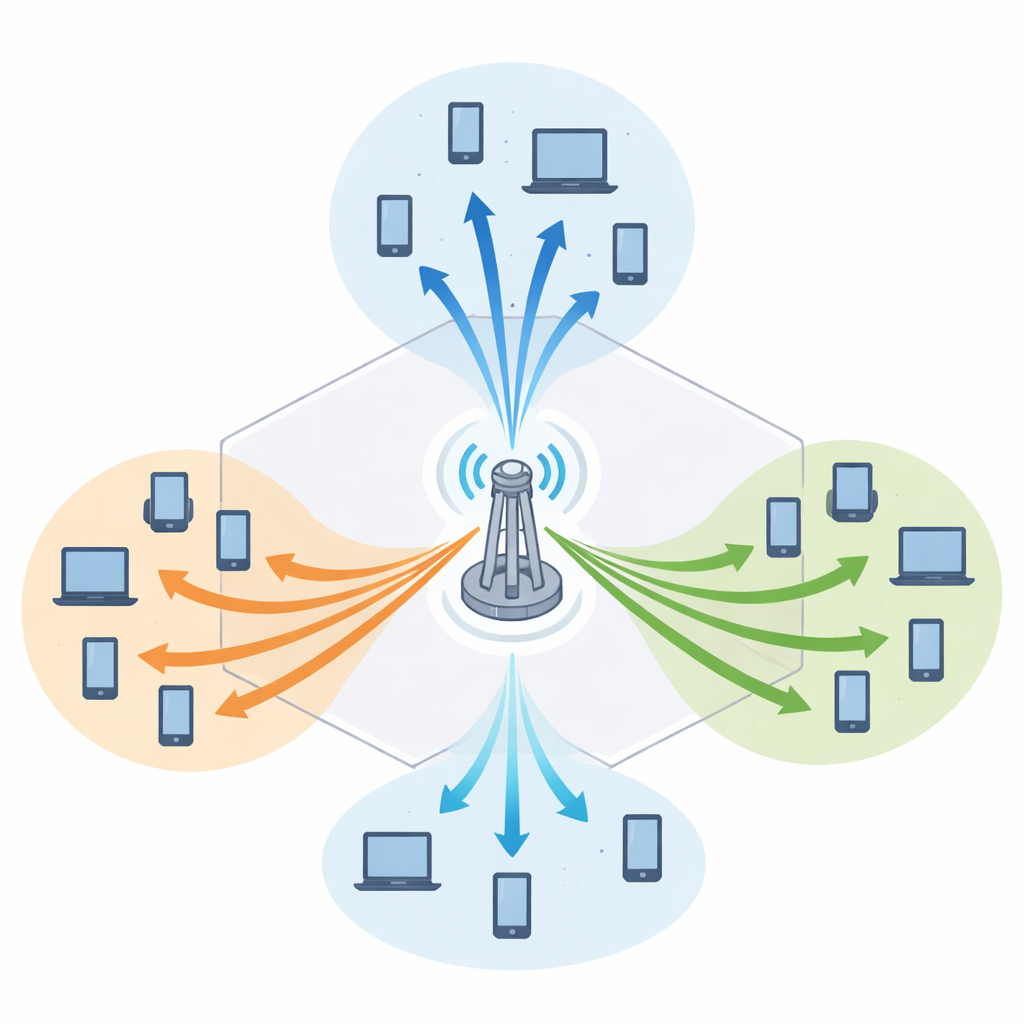

Letting data sort itself into groups

To tackle this, the authors turn to a classic unsupervised machine learning method called clustering. Instead of treating every user in isolation, the network groups users that have similar channel conditions and service needs. The study compares three clustering methods (K‑means, DBSCAN, and Gaussian mixture models) and finds that K‑means works best for this task. It is both simple and fast, with a running time that scales linearly with the number of users, which is crucial for real‑time decisions in busy 5G cells. The algorithm uses three main pieces of information for each user: how good the channel is, the current data rate, and the minimum required service rate. After carefully scaling these features so none dominates the others, K‑means forms three user clusters that reflect both radio conditions and service demands.
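The grouping step can be sketched in a few lines of plain Python. This is an illustration only, not the authors' implementation: it assumes min-max scaling for the feature normalization, uses a deterministic farthest-point rule to pick starting centroids (a simplification chosen here so the result is repeatable), and runs on six made-up users described by channel quality in dB, current rate in Mbps, and minimum required rate in Mbps.

```python
import math

def min_max_scale(rows):
    """Rescale every feature column to [0, 1] so no feature dominates the distance."""
    columns = list(zip(*rows))
    lows = [min(col) for col in columns]
    highs = [max(col) for col in columns]
    return [
        [(v - lo) / (hi - lo) if hi > lo else 0.0
         for v, lo, hi in zip(row, lows, highs)]
        for row in rows
    ]

def farthest_point_init(points, k):
    """Start from the first point, then repeatedly add the point farthest from all chosen centroids."""
    centroids = [list(points[0])]
    while len(centroids) < k:
        farthest = max(points, key=lambda p: min(math.dist(p, c) for c in centroids))
        centroids.append(list(farthest))
    return centroids

def k_means(points, k, iterations=100):
    """Lloyd's algorithm: assign each point to its nearest centroid, then recompute centroids."""
    centroids = farthest_point_init(points, k)
    labels = None
    for _ in range(iterations):
        new_labels = [
            min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points
        ]
        if new_labels == labels:  # assignments stopped changing: converged
            break
        labels = new_labels
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # keep the old centroid if a cluster ends up empty
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels, centroids

# Hypothetical users: (channel quality in dB, current rate in Mbps, minimum rate in Mbps).
users = [
    (25.0, 4.0, 2.5), (24.0, 3.8, 2.5),      # strong channel, HD video
    (15.0, 1.2, 1.0), (14.0, 1.0, 1.0),      # medium channel, video call
    (5.0, 0.1, 0.064), (6.0, 0.15, 0.064),   # weak channel, audio
]
labels, _ = k_means(min_max_scale(users), k=3)
print(labels)
```

Without the scaling step, the channel-quality column, whose values are an order of magnitude larger than the rates, would dominate the distance calculation and the service requirement would barely influence the grouping.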

Turning smart grouping into real speed gains

Once users are clustered, the base station can allocate bandwidth to each group more intelligently, effectively “bundling” resources for users whose needs and conditions align. This reorganization significantly raises the total data carried by the cell. With normalization of the input features, the cumulative throughput rises from 19.39 to 21.54 megabits per second (Mbps), an improvement of about 11%. Within the best‑performing cluster, users together reach 9.52 Mbps, with a high average rate per user. Just as important, every user now meets the minimum requirement of their assigned service: video calls, audio streams, and HD video all run at or above their target rates. The study also shows that this approach remains efficient as more users join, unlike heavier clustering techniques whose computation time grows too quickly.
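The size of the reported gain follows directly from the two throughput figures, as this short Python check shows. The function name is just an illustrative helper.

```python
def relative_gain(before, after):
    """Fractional improvement of `after` over `before`."""
    return (after - before) / before

baseline_mbps = 19.39    # cumulative throughput before clustering (from the study)
clustered_mbps = 21.54   # cumulative throughput with normalized K-means clustering

gain = relative_gain(baseline_mbps, clustered_mbps)
print(f"{gain:.1%}")  # prints "11.1%"
```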

What this means for your future 5G experience

In plain terms, the work shows that even straightforward machine learning can act as a traffic director for 5G uplink connections, sorting phones into sensible groups and handing out bandwidth in a way that boosts total capacity while preventing users from being left behind. Instead of relying on rigid, one‑size‑fits‑all rules, the network adapts to the actual signal conditions and app needs it observes. The authors suggest that future systems could go further by updating clusters in real time, adding more advanced learning methods, and combining this with focused signal steering (beamforming). Together, these ideas could make the promise of fast, fair 5G uploads a reality in the crowded places where we rely on our phones the most.

Citation: Ramesh, P., Bhuvaneswari, P.T.V. Machine learning driven clustering for silhouetting 5G network throughput. Sci Rep 16, 10583 (2026). https://doi.org/10.1038/s41598-026-45902-6

Keywords: 5G uplink, mobile broadband, machine learning, user clustering, network throughput